Imagine a ballet performance where dancers, musicians, and stage crew collaborate seamlessly to create a breathtaking show. In operations and technology, orchestration plays a similar role: it coordinates many moving parts to achieve a desired outcome. Whether it’s automating intricate IT processes, managing data flows across different systems, or deploying applications in a cloud environment, orchestration helps organizations handle complexity with finesse.

At its core, orchestration goes beyond mere automation; it encapsulates the intelligent sequencing, monitoring, and optimization of tasks to ensure smooth and efficient execution. As businesses continue to embrace digital transformation and navigate intricate landscapes, the importance of orchestration becomes increasingly evident. It not only enhances productivity but also reduces errors, accelerates time-to-market, and provides the agility needed to adapt to ever-evolving demands.

Best Orchestration Tools Comparison Table

| Orchestration Tools | Key Features | Use Cases |
|---|---|---|
| Kubernetes | Container orchestration, scaling, self-healing | Cloud-native apps, microservices |
| Docker Swarm | Native clustering, simplicity | Small-scale deployments |
| Apache Airflow | Workflow automation, scheduling | Data pipelines, ETL |
| Terraform | Infrastructure as code, multi-cloud support | Cloud resource management |
| HashiCorp Nomad | Dynamic scheduling, multi-platform support | Microservices, batch processing |
| OpenShift | Container platform, multi-cloud compatibility | Modernizing apps, hybrid cloud |
| AWS EKS | Managed Kubernetes service | Cloud-native applications |
| Azure AKS | Managed Kubernetes service | Application deployment on Azure |
| Google GKE | Managed Kubernetes service | Scalable containerized applications |
| Jenkins X | Automated CI/CD, Kubernetes-native | Continuous delivery for Kubernetes |
| Spinnaker | Multi-cloud CD, deployment pipelines | Large-scale, multi-environment deployment |

Top Orchestration Tools in the Market

Kubernetes

Kubernetes plays a pivotal role in simplifying the complexity of deploying and managing containerized applications. It acts as a conductor, seamlessly coordinating and optimizing the deployment of containers across clusters of machines.

Image Source: kubernetes.io

Features:

Automatic Scaling

Automatic scaling is one of Kubernetes’ most valuable features. The Horizontal Pod Autoscaler dynamically adjusts the number of pod replicas based on observed metrics such as CPU utilization or, when custom metrics are configured, incoming request rate.
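The Kubernetes documentation gives the Horizontal Pod Autoscaler’s core rule as desired replicas = ceil(current replicas × current metric value / target metric value), with no change made when that ratio is within a small tolerance of 1.0 (0.1 by default). The Python sketch below reimplements only that arithmetic so the behavior is easy to see; the function name, the replica bounds, and the example numbers are illustrative assumptions, and the real controller adds details (such as stabilization windows and special handling of not-ready pods) that are left out here.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,
                     max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Simplified Horizontal Pod Autoscaler rule (illustrative only)."""
    ratio = current_metric / target_metric
    # Small deviations from the target are ignored to avoid flapping.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Clamp to the configured minimum and maximum replica counts (assumed bounds).
    return max(min_replicas, min(max_replicas, desired))


# 4 pods averaging 90% CPU against a 60% target: 4 * 1.5 = 6 replicas.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
```

Scaling down works the same way: 10 pods averaging 20% CPU against a 60% target gives ceil(10 × 1/3) = 4 replicas.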

Load Balancing

Kubernetes Services provide built-in load balancing, distributing incoming traffic across the healthy pods behind them so that no single container becomes a bottleneck. Combined with automatic scaling, this supports high availability and reliability, even during sudden surges in demand.
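How traffic is actually spread across pods depends on the kube-proxy mode (IPVS mode, for example, defaults to round-robin). The toy balancer below illustrates only the round-robin idea; the class name and endpoint addresses are hypothetical, and real Services also stop routing to pods that are not ready.

```python
from collections import Counter
from itertools import cycle


class RoundRobinBalancer:
    """Toy round-robin load balancer over a fixed list of pod endpoints."""

    def __init__(self, endpoints: list[str]) -> None:
        if not endpoints:
            raise ValueError("at least one endpoint is required")
        self._endpoints = cycle(endpoints)

    def pick(self) -> str:
        # Each call sends the next request to the next endpoint in turn.
        return next(self._endpoints)


balancer = RoundRobinBalancer(["10.0.0.11:8080", "10.0.0.12:8080", "10.0.0.13:8080"])
counts = Counter(balancer.pick() for _ in range(6))
print(counts)  # each of the three endpoints receives 2 of the 6 requests
```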

Self-Healing

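One of Kubernetes’ standout attributes is its self-healing capability. Kubernetes continuously compares the actual state of the cluster with the desired state: it restarts containers that crash or fail their liveness probes, and when a node fails, controllers such as Deployments recreate the affected pods on healthy nodes. (Replacing the failed machine itself is left to the infrastructure provider or a node autoscaler.) This results in increased resilience and reduced downtime.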
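Self-healing in Kubernetes follows a reconciliation pattern: controllers repeatedly observe the cluster, compare it with the desired state, and act to close the gap. The sketch below is a simplified, hypothetical controller (the `ReplicaController` and `Pod` classes are illustrative, not Kubernetes APIs) that keeps a desired number of healthy pods running.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Pod:
    name: str
    healthy: bool = True


@dataclass
class ReplicaController:
    """Hypothetical controller that keeps `desired` healthy pods running."""
    desired: int
    pods: list[Pod] = field(default_factory=list)
    _ids: count = field(default_factory=count, repr=False)

    def reconcile(self) -> list[str]:
        """One observe -> compare -> act pass; returns the actions taken."""
        actions = []
        # Remove pods whose health checks have failed.
        for pod in [p for p in self.pods if not p.healthy]:
            self.pods.remove(pod)
            actions.append(f"delete {pod.name}")
        # Create replacements until the desired count is met.
        while len(self.pods) < self.desired:
            pod = Pod(name=f"web-{next(self._ids)}")
            self.pods.append(pod)
            actions.append(f"create {pod.name}")
        # Remove surplus pods if the desired count was lowered.
        while len(self.pods) > self.desired:
            actions.append(f"delete {self.pods.pop().name}")
        return actions


rc = ReplicaController(desired=3)
print(rc.reconcile())       # ['create web-0', 'create web-1', 'create web-2']
rc.pods[1].healthy = False  # simulate a crashed container
print(rc.reconcile())       # ['delete web-1', 'create web-3']
```

Because each pass only closes the gap between observed and desired state, running `reconcile()` again when nothing has changed does nothing.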

Kubernetes boasts a vibrant and expansive community of developers, contributors, and users. This community-driven approach ensures that Kubernetes evolves rapidly, with new features, enhancements, and best practices continuously emerging. Additionally, Kubernetes is designed to be cloud-agnostic, allowing seamless integration across various cloud providers like Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). This flexibility enables organizations to harness the power of Kubernetes while leveraging their preferred cloud infrastructure.

Kubernetes is a true game-changer in the realm of container orchestration, offering a sophisticated yet user-friendly platform to manage the complexity of deploying and scaling containerized applications. Its role in automating tasks like scaling, load balancing, and self-healing drastically simplifies operations, enhances reliability, and accelerates the deployment of modern applications. With its strong community support and seamless integration with cloud providers, Kubernetes empowers organizations to harness the full potential of containers while focusing on innovation and business growth.


Terraform

Terraform stands as a cornerstone in the realm of Infrastructure as Code (IaC), a paradigm that revolutionizes the management of cloud resources and infrastructure. IaC entails describing and provisioning infrastructure using code, allowing for consistent, repeatable, and automated deployment and management. Terraform acts as a powerful tool within this paradigm, enabling developers and operators to define and manage infrastructure in a programmatic, efficient, and scalable manner.

Terraform’s strength lies in its declarative syntax: users describe the infrastructure state they want rather than the steps needed to reach it. Resources, their configurations, relationships, and dependencies are defined in human-readable configuration files. When you run `terraform plan` and `terraform apply`, Terraform compares this configuration with its recorded state of the real infrastructure, works out which resources to create, update, or destroy, and provisions them in dependency order across various cloud providers. This minimizes manual intervention and brings the infrastructure back in line with the configuration each time it is applied.
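The plan step can be pictured as a diff between the desired configuration and the recorded state. The sketch below is a deliberately simplified model, assuming each resource is just an address mapped to a dictionary of attributes; it ignores dependency ordering, attributes that force replacement, and everything else Terraform’s real planner handles. The resource addresses only mimic Terraform’s `type.name` style and are hypothetical.

```python
Attrs = dict[str, object]


def plan(desired: dict[str, Attrs], current: dict[str, Attrs]) -> list[tuple[str, str]]:
    """Diff desired configuration against recorded state (greatly simplified)."""
    actions: list[tuple[str, str]] = []
    for address in sorted(desired):
        if address not in current:
            actions.append(("create", address))
        elif current[address] != desired[address]:
            actions.append(("update", address))
    # Anything recorded in state but no longer configured is destroyed.
    for address in sorted(current.keys() - desired.keys()):
        actions.append(("delete", address))
    return actions


desired = {
    "aws_instance.web": {"instance_type": "t3.small"},
    "aws_s3_bucket.logs": {"versioning": True},
}
current = {
    "aws_instance.web": {"instance_type": "t3.micro"},
    "aws_instance.old": {"instance_type": "t2.micro"},
}
print(plan(desired, current))
# [('update', 'aws_instance.web'), ('create', 'aws_s3_bucket.logs'), ('delete', 'aws_instance.old')]
print(plan(desired, desired))  # []: nothing to do once reality matches the config
```

Running the plan again against matching state produces no actions, which is the idempotency that makes a declarative configuration safe to re-apply.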

Examples of IaC Workflows and Benefits:

Scalable Web Application Deployment

With Terraform, a developer can define infrastructure components such as virtual machines, load balancers, databases, and networking in code. This enables the rapid and consistent deployment of scalable web applications across different environments, ensuring reliability and reducing deployment errors.

Immutable Infrastructure

Terraform supports an immutable-infrastructure approach, in which changes are rolled out by replacing existing resources (for example, launching new instances from an updated machine image) rather than modifying them in place. This makes updates consistent and predictable and simplifies rollback procedures.
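The sketch below models the immutable pattern in plain Python under simplifying assumptions: instances are frozen records that are never modified, an update builds a fresh set of instances from a new image, and a rollback is simply another rollout of the previous image. The image names and helper functions are hypothetical; in Terraform the equivalent behavior comes from changing the image a resource is built from, optionally combined with the `create_before_destroy` lifecycle setting.

```python
from dataclasses import dataclass
from itertools import count

_next_id = count(1)  # hypothetical instance-ID generator


@dataclass(frozen=True)  # frozen: an instance is never modified after creation
class Instance:
    id: int
    image: str


def launch(image: str, size: int) -> list[Instance]:
    """Create `size` brand-new instances from a machine image."""
    return [Instance(next(_next_id), image) for _ in range(size)]


def roll_out(fleet: list[Instance], image: str) -> list[Instance]:
    """Immutable update: build a full replacement fleet instead of patching `fleet`."""
    replacement = launch(image, len(fleet))
    # In real infrastructure the old instances would be terminated here,
    # after the replacements are up and healthy (create before destroy).
    return replacement


v1 = launch("app-image-v1", 2)
v2 = roll_out(v1, "app-image-v2")
rollback = roll_out(v2, "app-image-v1")  # a rollback is just another rollout
print([(i.id, i.image) for i in v2])        # [(3, 'app-image-v2'), (4, 'app-image-v2')]
print([(i.id, i.image) for i in rollback])  # [(5, 'app-image-v1'), (6, 'app-image-v1')]
```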

Automated Scaling

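Terraform itself does not react to changes in load, but teams can use its declarative syntax to define autoscaling groups and scaling policies, such as an AWS Auto Scaling group that targets a given CPU utilization. The cloud provider then adds or removes VM instances as demand fluctuates, within the limits declared in the Terraform configuration, helping maintain performance while keeping resource utilization efficient.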

Disaster Recovery

By defining disaster recovery infrastructure in Terraform, organizations can quickly recreate essential resources in a secondary location in case of a primary site failure. This approach ensures business continuity and reduces downtime.


Apache NiFi

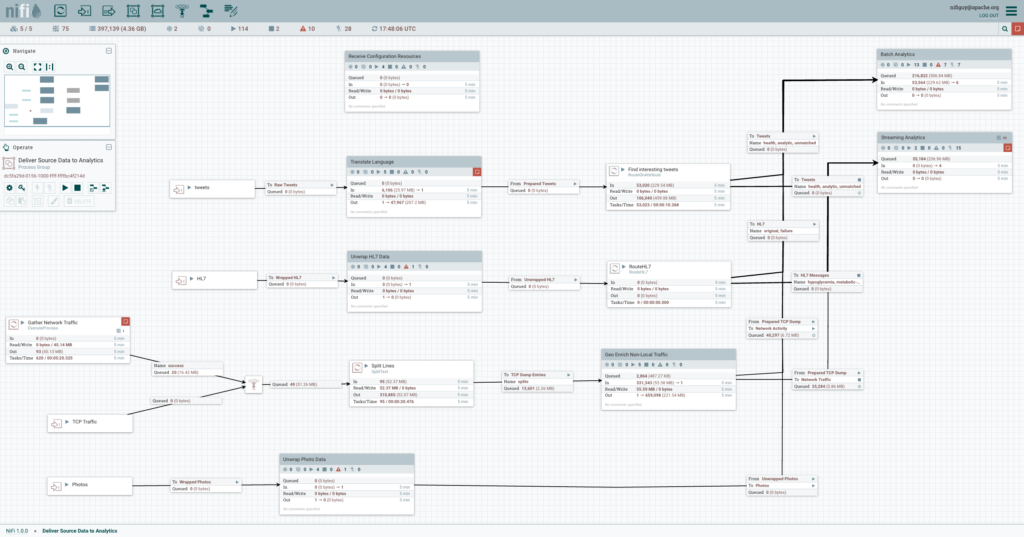

Apache NiFi emerges as a powerful data orchestration tool designed to streamline, automate, and optimize the flow of data across diverse systems and environments. It serves as a conductor that harmonizes data movement, transformation, and processing, allowing organizations to achieve seamless data integration, efficient workflows, and real-time insights.

At the core of Apache NiFi lies its flow-based architecture. Users design data flows in a visual, browser-based interface by linking processors, the components that do the actual work, through connections that queue data between them, while controller services supply shared resources such as database connection pools. These pipelines handle a wide spectrum of tasks, including data routing, filtering, enrichment, and transformation. NiFi’s modular architecture makes it flexible enough to build complex data workflows with ease.

Image Source: nifi.apache.org
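NiFi processors are Java components configured through the UI, so the following is only an analogy in Python: each hypothetical "processor" is a generator that consumes a stream of FlowFile-like records and yields results to the next one, much as connections pass data between processors. The `FlowFile` class loosely mirrors NiFi’s notion of content plus attributes; the processor names and sensor payloads are made up for illustration.

```python
import json
from dataclasses import dataclass, field
from typing import Callable, Iterable, Iterator


@dataclass
class FlowFile:
    """Loose analogue of a NiFi FlowFile: content plus key/value attributes."""
    content: str
    attributes: dict[str, str] = field(default_factory=dict)


# A "processor" here is any function from a stream of FlowFiles to a new stream.
Processor = Callable[[Iterable[FlowFile]], Iterator[FlowFile]]


def drop_incomplete(flowfiles: Iterable[FlowFile]) -> Iterator[FlowFile]:
    """Filter: keep only readings that include a temperature."""
    for ff in flowfiles:
        if "temp_c" in json.loads(ff.content):
            yield ff


def tag_source(flowfiles: Iterable[FlowFile]) -> Iterator[FlowFile]:
    """Enrich: record where the data came from as an attribute."""
    for ff in flowfiles:
        ff.attributes["source"] = "sensor-gateway"
        yield ff


def add_fahrenheit(flowfiles: Iterable[FlowFile]) -> Iterator[FlowFile]:
    """Transform: emit new content with an extra derived field."""
    for ff in flowfiles:
        record = json.loads(ff.content)
        record["temp_f"] = record["temp_c"] * 9 / 5 + 32
        yield FlowFile(json.dumps(record), dict(ff.attributes))


def run_flow(source: Iterable[FlowFile], processors: list[Processor]) -> list[FlowFile]:
    """Chain processors so each one's output feeds the next, like connections."""
    stream: Iterable[FlowFile] = source
    for processor in processors:
        stream = processor(stream)
    return list(stream)


readings = [
    FlowFile('{"id": 1, "temp_c": 20}'),
    FlowFile('{"id": 2}'),
    FlowFile('{"id": 3, "temp_c": 100}'),
]
out = run_flow(readings, [drop_incomplete, tag_source, add_fahrenheit])
print([json.loads(ff.content)["temp_f"] for ff in out])  # [68.0, 212.0]
```

Because the stages are generators, records stream through one at a time rather than being collected between steps, which is closer in spirit to a continuously running flow than a batch job.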

Use Cases in Data Integration, IoT, and Real-time Analytics:

Data Integration

Apache NiFi excels in consolidating data from various sources, such as databases, APIs, and file systems. It seamlessly orchestrates the movement of data across on-premises and cloud environments, ensuring accurate, consistent, and timely data availability for analysis and reporting.

Internet of Things (IoT)

NiFi’s data routing and processing capabilities make it a valuable asset in IoT scenarios. It collects, filters, and transforms data from IoT devices, enabling organizations to extract meaningful insights and trigger responsive actions based on real-time sensor data.

Real-time Analytics

NiFi supports real-time data processing by ingesting, enriching, and delivering data to analytics systems. This gives organizations immediate insights from streaming data, enabling timely decision-making and improving operational efficiency.

Data Enrichment and Transformation

NiFi’s powerful processors allow data to be enriched with additional information, converted into different formats, or merged with other datasets. This facilitates data preparation for advanced analytics and reporting.


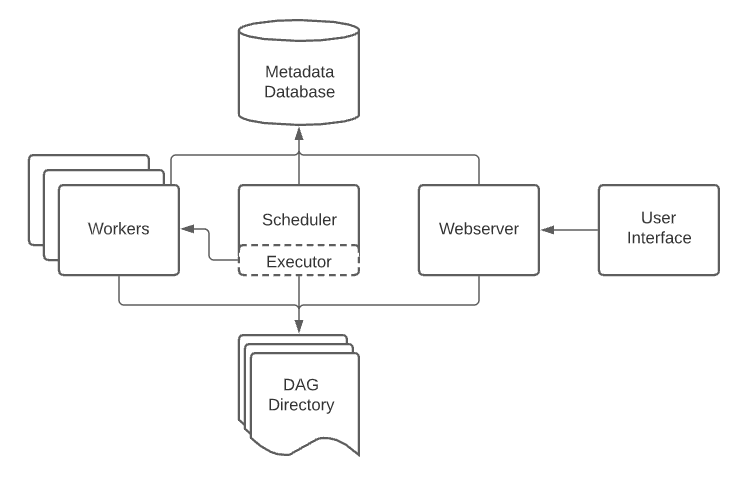

Apache Airflow

Apache Airflow is a popular open-source platform for orchestrating and automating complex workflows. Its primary role is to execute tasks and processes in a structured manner, covering everything from simple data transformations to intricate ETL (Extract, Transform, Load) pipelines and beyond. Workflows are written as Python code and run on schedules or triggers, while Airflow’s web interface lets users monitor, trigger, and troubleshoot them, contributing to enhanced operational efficiency and reliability.

At the heart of Apache Airflow lies the DAG (Directed Acyclic Graph). A DAG is a collection of tasks connected by directed dependencies with no cycles, which fixes the order in which the tasks may run; the Airflow UI renders it as a graph of the workflow. In Airflow, users define DAGs in Python scripts, where each task is a unit of work with its own inputs, outputs, and execution logic. By default, the scheduler runs a task only after all of its upstream tasks have succeeded, giving precise control over the workflow’s sequence and execution triggers.
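Without pulling in Airflow itself, the ordering logic of a DAG can be sketched with Python’s standard-library `graphlib` module (Python 3.9+). The task names below are hypothetical; in a real Airflow DAG file the same dependencies would be declared between operators, for example with the `>>` operator. The sketch groups tasks into "waves" whose members have no dependencies on each other and could run in parallel, and it rejects graphs that contain a cycle.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical ETL workflow: each task maps to the set of tasks it depends on.
etl_dag = {
    "extract_orders": set(),
    "extract_customers": set(),
    "transform": {"extract_orders", "extract_customers"},
    "validate": {"transform"},
    "load_warehouse": {"validate"},
}


def execution_waves(dag: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; tasks within a wave do not depend on each other."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()  # raises CycleError if the graph is not acyclic
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # assume every task in this wave succeeded
    return waves


print(execution_waves(etl_dag))
# [['extract_customers', 'extract_orders'], ['transform'], ['validate'], ['load_warehouse']]

try:
    execution_waves({"a": {"b"}, "b": {"a"}})
except CycleError:
    print("rejected: the graph contains a cycle")
```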

Real-World Use Cases:

Data Warehousing and ETL

Many organizations utilize Apache Airflow to automate ETL processes, seamlessly moving data from various sources to a data warehouse. Its DAG-based approach enables efficient data transformation, validation, and loading, ensuring data quality and accuracy.

Data Pipeline Orchestration

Companies leverage Airflow to orchestrate end-to-end data pipelines, encompassing data ingestion, processing, transformation, and delivery. This ensures data consistency and timely delivery for business analytics and reporting.

Model Training and Deployment

Machine learning practitioners use Airflow to automate the training and deployment of machine learning models. Airflow’s scheduling capabilities enable regular model updates, maintaining accurate predictions and insights.

Cloud Infrastructure Management

Airflow is employed to automate cloud infrastructure provisioning, management, and optimization. It helps businesses scale resources as needed, enhancing resource utilization and cost efficiency.

Workflow Automation

Airflow’s versatility extends beyond data-related tasks. It’s utilized to automate a wide range of workflows, such as orchestrating software deployment, testing, and monitoring in DevOps practices.


OpenShift

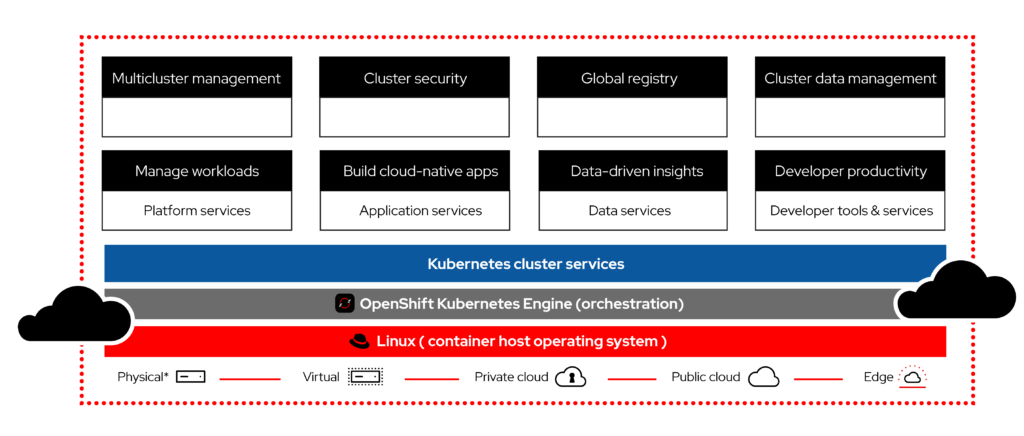

OpenShift, developed by Red Hat, is a leading containerization and application platform designed to streamline the deployment, scaling, and management of containerized applications. By providing a comprehensive set of tools and functionalities, OpenShift empowers organizations to harness the full potential of containers while simplifying the complexities associated with their deployment and orchestration.

Image Source: https://www.openshift.com

Use Cases:

Application Modernization

OpenShift aids in transitioning legacy applications to modern container-based architectures, enabling organizations to leverage the benefits of microservices and cloud-native approaches.

Microservices Architecture

OpenShift supports the development and deployment of microservices, facilitating the creation of scalable and modular applications.

Hybrid and Multi-Cloud Deployments

Organizations can use OpenShift to build and manage applications that span across on-premises infrastructure and multiple cloud providers.

DevOps and CI/CD

OpenShift integrates seamlessly with DevOps practices, allowing organizations to automate application deployment, testing, and delivery.

In essence, OpenShift serves as a bridge between developers and IT operations, providing a unified platform for container-based application development, deployment, and management. Its comprehensive features and compatibility with various cloud environments make it a versatile tool for organizations seeking agility, scalability, and efficiency in their application lifecycle management.

HashiCorp Nomad

HashiCorp Nomad is an open-source application orchestration platform that simplifies the deployment and management of applications across diverse infrastructure environments. With its declarative job specification model, dynamic scaling, and multi-cloud support, Nomad empowers organizations to efficiently allocate resources and optimize application performance.
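By default, Nomad’s scheduler places workloads using a bin-packing strategy (a spread strategy can be configured instead), filling nodes tightly so that fewer machines sit partly idle. The sketch below shows the general idea with a simple, assumed scoring function: the fraction of CPU plus the fraction of memory a node would have left over. Nomad’s real scoring weighs additional factors, and the node names and capacities here are hypothetical. CPU is expressed in MHz and memory in MB, the units Nomad job specifications use.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    cpu_total: int  # MHz
    mem_total: int  # MB
    cpu_used: int = 0
    mem_used: int = 0

    def fits(self, cpu: int, mem: int) -> bool:
        return (self.cpu_used + cpu <= self.cpu_total
                and self.mem_used + mem <= self.mem_total)

    def spare_after(self, cpu: int, mem: int) -> float:
        """Fraction of CPU plus fraction of memory left idle after placing the task."""
        return ((self.cpu_total - self.cpu_used - cpu) / self.cpu_total
                + (self.mem_total - self.mem_used - mem) / self.mem_total)


def place_binpack(nodes: list[Node], cpu: int, mem: int) -> Optional[str]:
    """Assumed bin-packing score: pick the feasible node left with the least spare capacity."""
    feasible = [n for n in nodes if n.fits(cpu, mem)]
    if not feasible:
        return None  # no node can host the task
    best = min(feasible, key=lambda n: n.spare_after(cpu, mem))
    best.cpu_used += cpu
    best.mem_used += mem
    return best.name


nodes = [
    Node("node-a", cpu_total=4000, mem_total=8192),
    Node("node-b", cpu_total=4000, mem_total=8192, cpu_used=3000, mem_used=6144),
]
# Three tasks asking for 500 MHz and 1024 MB each.
print([place_binpack(nodes, cpu=500, mem=1024) for _ in range(3)])
# ['node-b', 'node-b', 'node-a']
```

The already busy `node-b` is filled first, and only when it is full does work spill over to `node-a`, leaving as much contiguous capacity free as possible for larger jobs.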

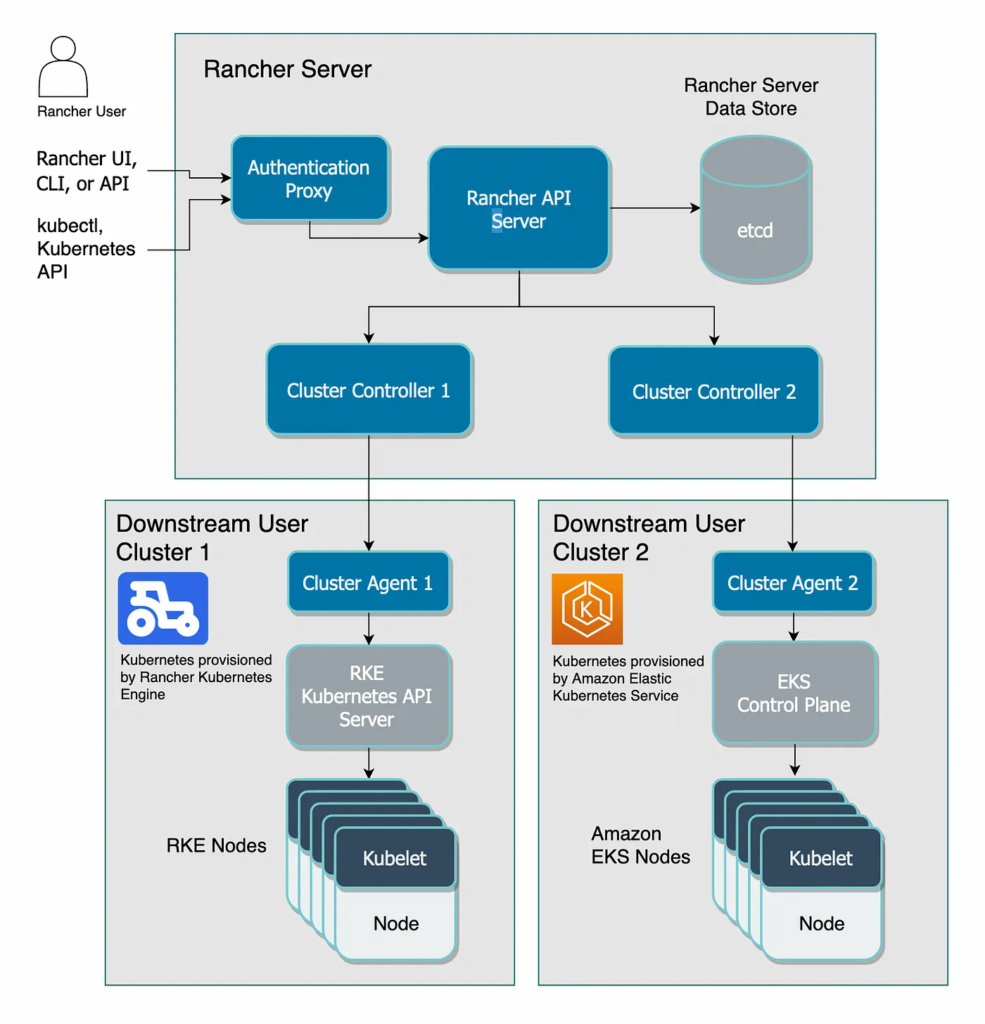

Docker Swarm

Docker Swarm is a native clustering and orchestration solution for Docker containers. It allows users to create and manage containerized applications across a cluster of machines, simplifying container deployment, scaling, and load balancing while leveraging Docker’s familiar tools and APIs.

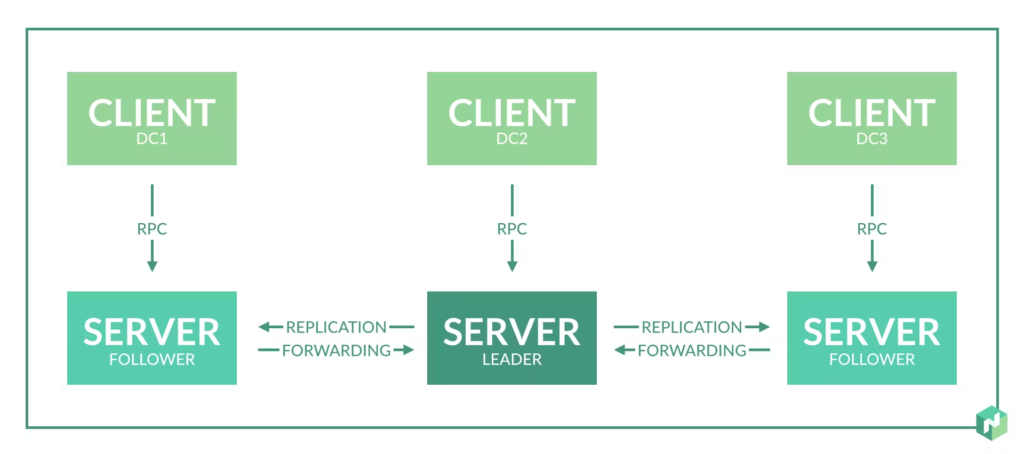

Rancher

Rancher is a container management platform for provisioning and managing Kubernetes clusters; its earlier 1.x releases also supported Docker Swarm and other container orchestration systems. It offers a user-friendly interface for provisioning and managing containerized applications, enabling organizations to streamline deployment and administration tasks.

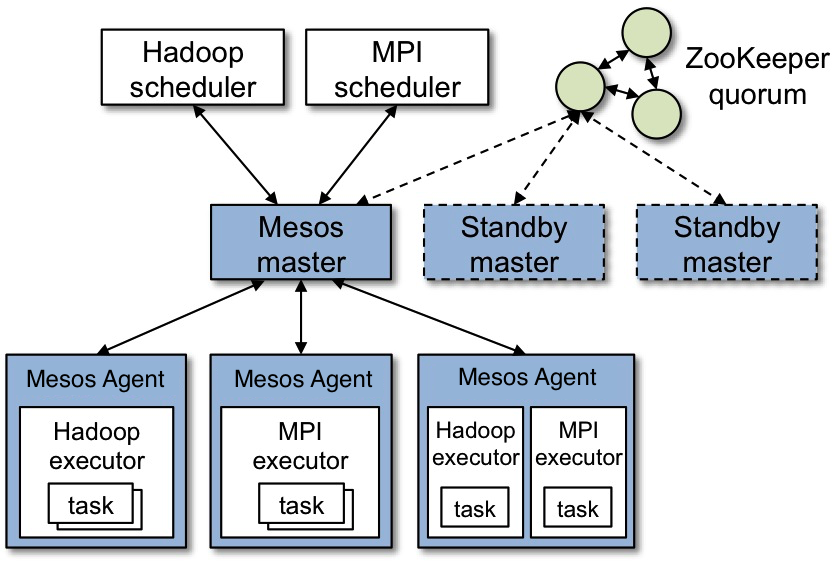

Mesos

Apache Mesos is a distributed systems kernel that abstracts CPU, memory, storage, and other compute resources across a cluster of machines. It enables efficient resource sharing and dynamic allocation, making it suitable for running diverse workloads and applications in a data center or cloud environment.

Image Source: mesos.apache.org
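Mesos’s built-in allocator divides cluster resources among frameworks using Dominant Resource Fairness (DRF): a framework’s dominant share is its largest fractional use of any single resource, and the next resource offer goes to the framework with the smallest dominant share. The sketch below simulates that rule in isolation, assuming fixed per-task demands and a framework that stops receiving offers once its next task no longer fits; the framework names and numbers are illustrative.

```python
def drf_allocate(capacity: dict[str, float],
                 demands: dict[str, dict[str, float]]) -> dict[str, int]:
    """Simulate Dominant Resource Fairness with fixed per-task demands.

    Repeatedly launches one task for the framework with the smallest
    dominant share until no framework's next task fits.
    """
    used = {resource: 0.0 for resource in capacity}
    tasks = {name: 0 for name in demands}
    dominant_share = {name: 0.0 for name in demands}
    active = set(demands)
    while active:
        # Lowest dominant share goes first; ties are broken by name.
        name = min(active, key=lambda n: (dominant_share[n], n))
        need = demands[name]
        if any(used[r] + need[r] > capacity[r] for r in need):
            active.discard(name)  # simplification: stop offering to this framework
            continue
        for r, amount in need.items():
            used[r] += amount
        tasks[name] += 1
        # Dominant share: the largest fraction of any single resource it now holds.
        dominant_share[name] = max(tasks[name] * need[r] / capacity[r] for r in need)
    return tasks


capacity = {"cpus": 9, "mem_gb": 18}
demands = {
    "analytics": {"cpus": 1, "mem_gb": 4},  # memory-heavy framework
    "web": {"cpus": 3, "mem_gb": 1},        # CPU-heavy framework
}
print(drf_allocate(capacity, demands))  # {'analytics': 3, 'web': 2}
```

Here the memory-heavy framework ends up with 12 of 18 GB and the CPU-heavy one with 6 of 9 CPUs, so both hold a dominant share of two thirds even though their resource mixes differ.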

Managed Container Orchestration Tools

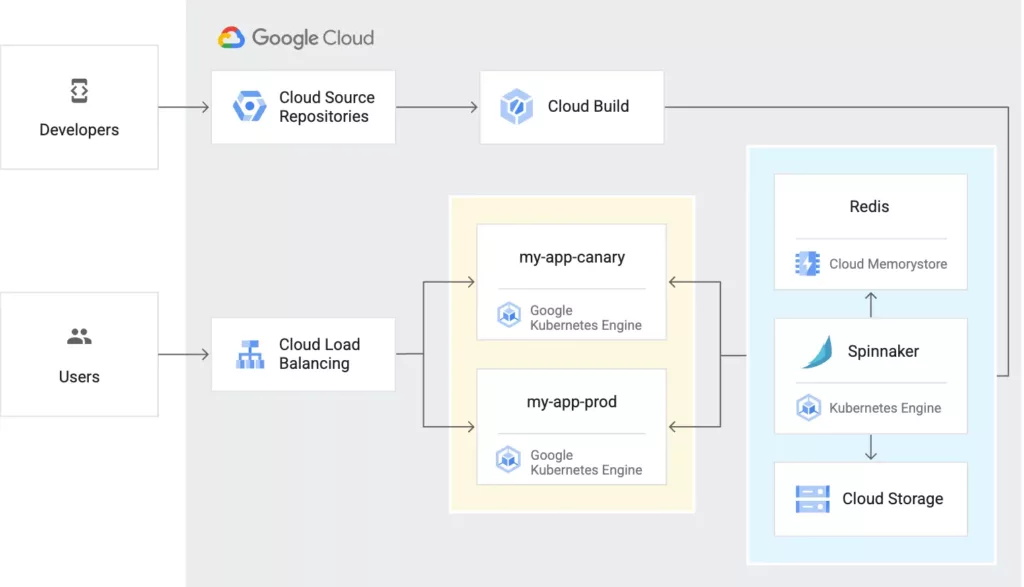

Google Kubernetes Engine (GKE)

GKE is Google Cloud’s managed Kubernetes service that simplifies the deployment, management, and scaling of containerized applications using Kubernetes. It offers automated updates, scaling, and seamless integration with Google Cloud services.

Image Source: cloud.google.com

Google Cloud Run

Google Cloud Run enables developers to deploy containerized applications in a serverless environment, automatically managing scaling and infrastructure. It’s ideal for stateless workloads that demand rapid deployment.

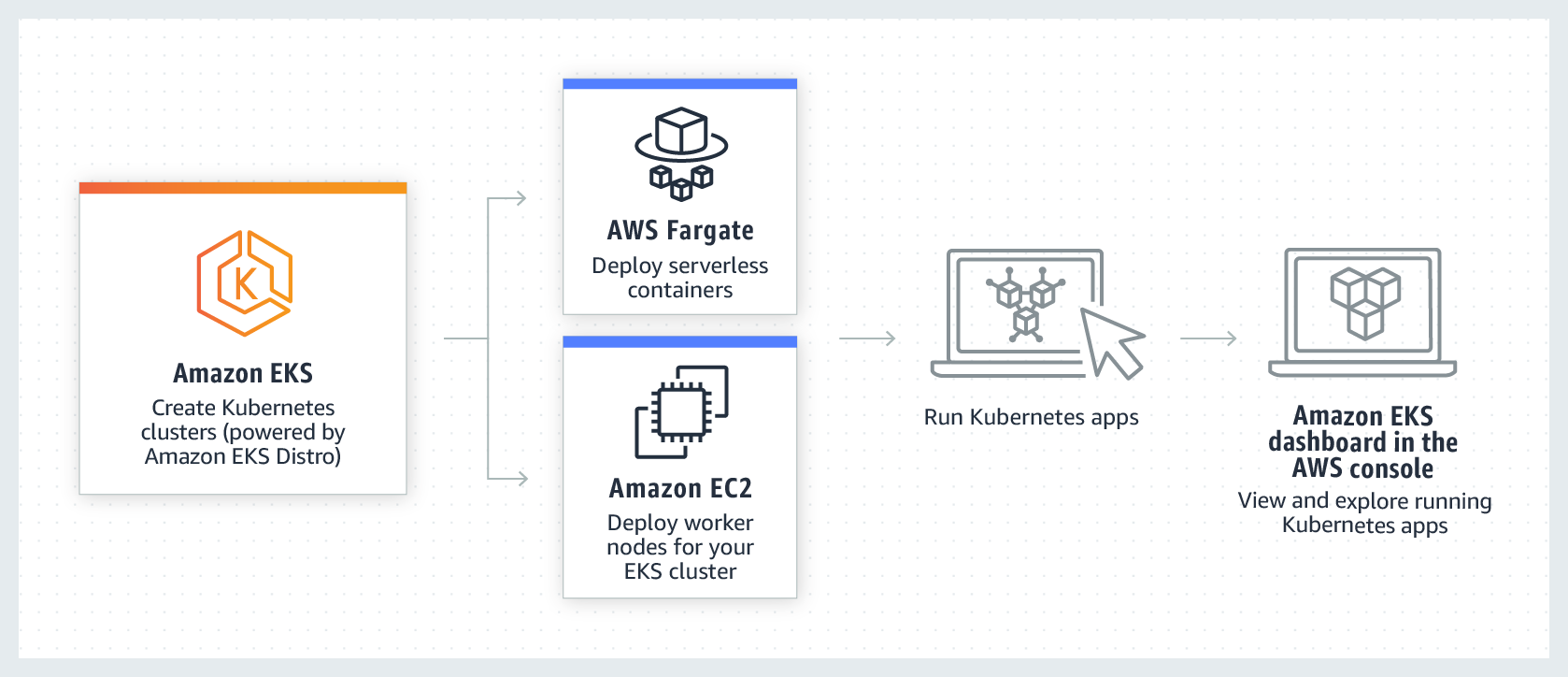

AWS Elastic Kubernetes Service (EKS)

AWS EKS is Amazon’s managed Kubernetes service, offering simplified setup and operation of Kubernetes clusters. It integrates seamlessly with other AWS services, enabling efficient management of containerized applications.

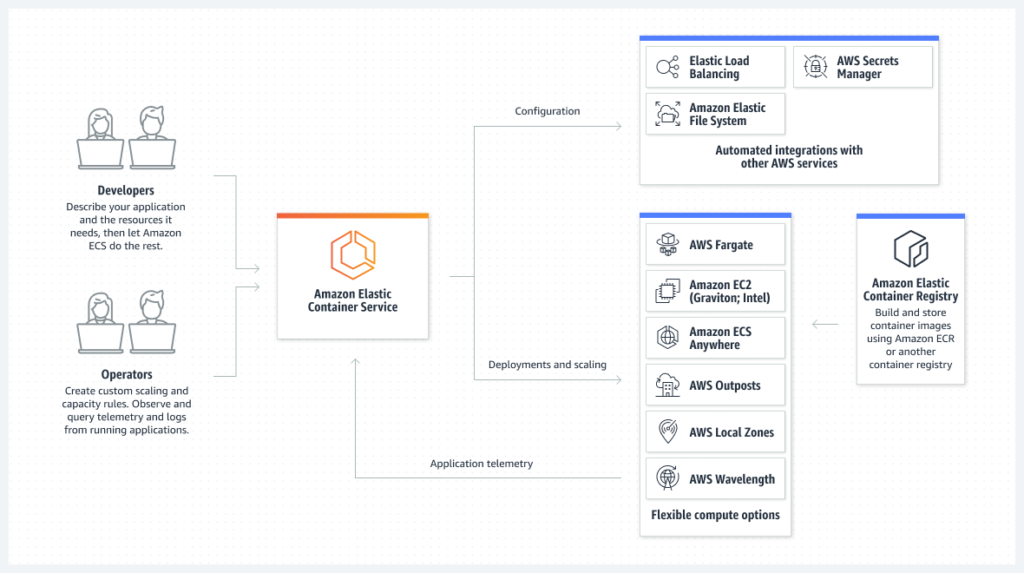

Amazon Elastic Container Service (ECS)

Amazon ECS is a scalable container orchestration service that simplifies deployment and management of Docker containers. It provides a high level of control and integrates well with the AWS ecosystem.

Image Source: aws.amazon.com

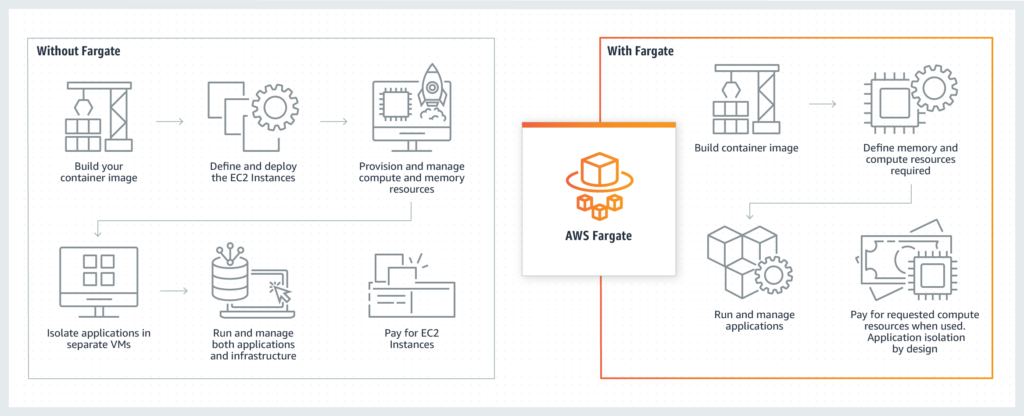

AWS Fargate

AWS Fargate is a serverless compute engine that works with ECS and EKS. It abstracts away the underlying infrastructure, allowing developers to focus solely on deploying and managing containers.

Azure Kubernetes Service (AKS)

AKS is Microsoft’s managed Kubernetes service, providing an easy way to deploy, manage, and scale containerized applications using Kubernetes. It integrates with Azure services for a comprehensive cloud solution.

Azure Container Instances

Azure Container Instances offers the ability to run individual containers without managing the underlying infrastructure. It’s designed for lightweight, isolated workloads.

DigitalOcean Kubernetes (DOKS)

DigitalOcean Kubernetes is a managed Kubernetes service that simplifies container orchestration on the DigitalOcean platform. It enables users to deploy, manage, and scale containerized applications efficiently.

Linode Kubernetes Engine

Linode Kubernetes Engine is a managed Kubernetes service by Linode, providing users with the tools to deploy, manage, and scale containerized applications on the Linode cloud platform.

Managed vs. Self-Hosted Container Orchestration Tools

As organizations embrace containerization to streamline application deployment and management, they encounter the decision of whether to opt for managed or self-hosted container orchestration tools. Each approach has its own merits and considerations, catering to distinct operational preferences and requirements.

| Aspect | Managed Container Orchestration | Self-Hosted Container Orchestration |
|---|---|---|
| Operational Complexity | Low | Moderate to High |
| Deployment Speed | Fast | Moderate to Slow |
| Scalability | Automatic | Manual |
| Resource Efficiency | High | Variable |
| Integration with Cloud Services | Seamless | Dependent on Implementation |
| Control and Customization | Limited | High |
| Flexibility and Portability | Platform-Specific | Platform-Agnostic |
| Security Customization | Limited | High |
| Cost Considerations | Variable (Monthly Fees) | Infrastructure and Operational Costs |
| Support and Maintenance | Vendor-Provided Support | Internal Responsibility |
| Learning Curve | Low | Moderate to High |