Site Reliability Engineering (SRE) monitoring and application monitoring are two sides of the same coin: both exist to keep complex distributed systems reliable, performant, and transparent. For engineering teams managing microservices, Kubernetes, and cloud-native architectures, knowing what to measure—and how to act on it—is the difference between a 15-minute incident and an all-night outage.

This guide explains how the four Golden Signals serve as the foundation of production-grade application monitoring, how to connect them to SLIs, SLOs, and error budgets, and how to build dashboards and alerting workflows that actually reduce your MTTR.

KEY TAKEAWAYS

Golden Signals (latency, errors, traffic, saturation) are the universal language of SRE application monitoring across any tech stack.

Connecting signals to SLIs and SLOs turns raw metrics into reliability commitments your team can own.

Alert thresholds must be derived from baseline data and SLOs—the examples in this article are illustrative starting points, not universal rules.

After implementing Golden Signals, Gart clients have reduced MTTR by up to 60% within two months. Read the full case study context below.

What is SRE Monitoring?

SRE monitoring is the practice of continuously observing the health, performance, and availability of software systems using the methods and principles defined by Google's Site Reliability Engineering discipline. Unlike traditional system monitoring—which often tracks dozens of low-level infrastructure metrics—SRE monitoring is intentionally opinionated: it focuses on the signals that directly reflect user experience and system reliability.

At its core, SRE monitoring answers three questions at all times:

Is the system currently serving users correctly?

How close are we to breaching our reliability commitments (SLOs)?

Which service or component is responsible when something breaks?

This user-centric orientation is what separates SRE monitoring from generic infrastructure monitoring. An SRE team does not alert on "CPU at 80%"—they alert when that CPU spike is burning through their monthly error budget faster than expected.

Application Monitoring in the SRE Context

Application monitoring is the discipline of tracking how software applications behave in production: response times, error rates, throughput, resource consumption, and end-user experience. In an SRE context, application monitoring is the primary layer where Golden Signals are measured and where the gap between infrastructure health and user experience becomes visible.

A database node may be running at 40% CPU—perfectly healthy by infrastructure standards—while every query takes 4 seconds because of a missing index. Infrastructure monitoring shows green; application monitoring shows a latency crisis. This is why SRE teams invest heavily in application-level telemetry: it captures what infrastructure metrics miss.

Modern application monitoring spans three pillars:

Metrics — numerical time-series data (latency percentiles, error counts, RPS).

Logs — structured event records that capture request context and error detail.

Traces — distributed request journeys that map latency across service boundaries.

The Golden Signals framework unifies these pillars into four actionable categories that any team can monitor, regardless of their technology stack.

The Four Golden Signals in SRE

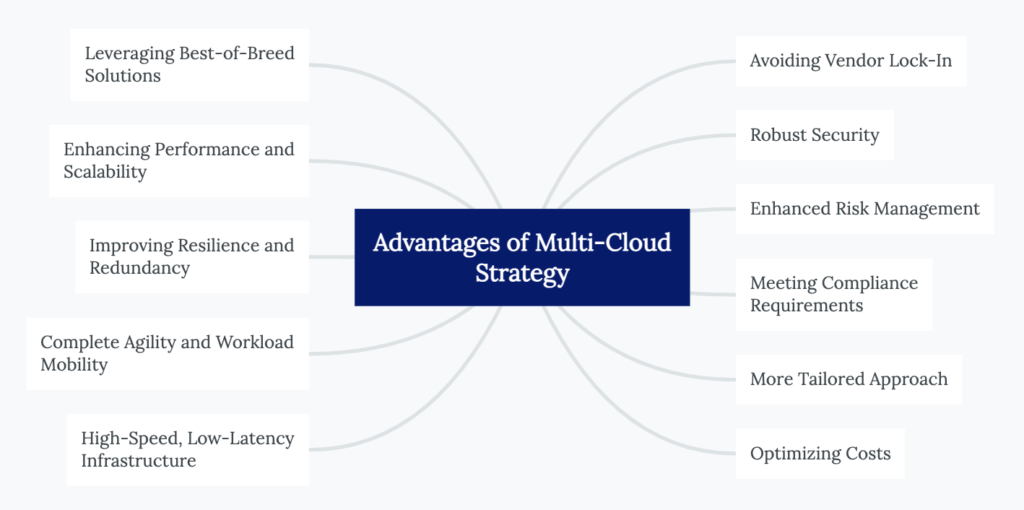

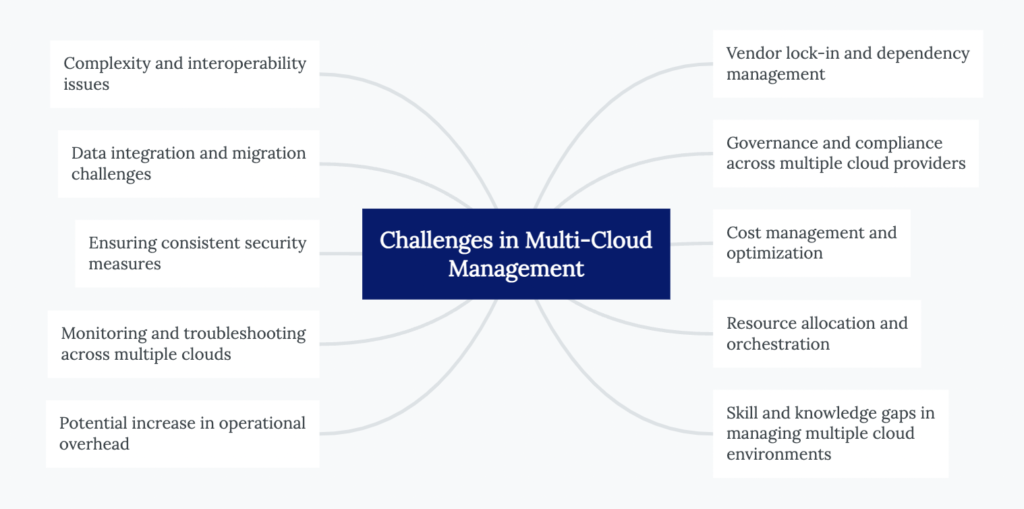

SRE principles streamline application monitoring by focusing on four metrics—latency, errors, traffic, and saturation—collectively known as Golden Signals. Instead of tracking hundreds of metrics across different technologies, this focused framework helps teams quickly identify and resolve issues.

Latency: Latency is the time it takes to service a request, measured from the moment the client sends it until the response arrives. High latency degrades the user experience, so it needs to stay within a known budget. For example, a web application might typically respond in 200 to 400 milliseconds, and a team might treat 300 ms as its target for a good user experience. Latency monitoring helps detect slowdowns early, allowing for quick corrective action.

Errors: Errors refer to the rate of failed requests. Not all errors have the same impact: a 5xx response (server error) is usually more severe than a 4xx response (client error) because it indicates a failure inside the service and often requires immediate intervention. As an illustrative starting point, a sustained error rate above 1% warrants investigation. Identifying error spikes can alert teams to underlying issues before they escalate into major problems.

Traffic: Traffic measures the volume of requests coming into the system. Understanding traffic patterns helps teams prepare for expected loads and identify anomalies that might indicate issues such as DDoS attacks or unplanned spikes in user activity. For example, if your system is built to handle 1,000 requests per second and suddenly receives 10,000, this surge might overwhelm your infrastructure if not properly managed.

Saturation: Saturation is about resource utilization; it shows how close your system is to reaching its full capacity. Monitoring saturation helps avoid performance bottlenecks caused by overuse of resources like CPU, memory, or network bandwidth. Think of it like a car's tachometer: once it redlines, you're pushing the engine too hard, risking a breakdown.

Why Golden Signals Matter

Golden Signals provide a comprehensive overview of a system's health, enabling SREs and DevOps teams to be proactive rather than reactive. By continuously monitoring these metrics, teams can spot trends and anomalies, address potential issues before they affect end-users, and maintain a high level of service reliability.

SRE Golden Signals help in proactive system monitoring

SRE Golden Signals are crucial for proactive system monitoring because they simplify the identification of root causes in complex applications. Instead of getting overwhelmed by numerous metrics from various technologies, SRE Golden Signals focus on four key indicators: latency, errors, traffic, and saturation.

By continuously monitoring these signals, teams can detect anomalies early and address potential issues before they affect the end-user. For instance, if there is an increase in latency or a spike in error rates, it signals that something is wrong, prompting immediate investigation.

What are the key benefits of using "golden signals" in a microservices environment?

The "golden signals" approach is especially beneficial in a microservices environment because it provides a simplified yet powerful framework to monitor essential metrics across complex service architectures.

Here’s why this approach is effective:

▪️Focuses on Key Performance Indicators (KPIs)

By concentrating on latency, errors, traffic, and saturation, the golden signals let teams avoid the overwhelming and often unmanageable task of tracking every metric across diverse microservices. This strategic focus means that only the most crucial metrics impacting user experience are monitored.

▪️Enhances Cross-Technology Clarity

In a microservices ecosystem where services might be built on different technologies (e.g., Node.js, DB2, Swift), using universal metrics minimizes the need for specific expertise. Teams can identify issues without having to fully understand the intricacies of every service’s technology stack.

▪️Speeds Up Troubleshooting

Golden signals narrow the search for a root cause by filtering out non-essential metrics, allowing the team to home in on the likely problem area in a large web of interdependent services. This is crucial for maintaining service uptime and a seamless user experience.

SRE Monitoring vs. Observability vs. Application Performance Monitoring (APM)

These three terms are often used interchangeably, but they refer to distinct practices with different scopes. Understanding where they overlap—and where they diverge—helps teams invest in the right tooling and processes.

| Dimension | SRE Monitoring | Observability | Application Monitoring (APM) |
| --- | --- | --- | --- |
| Primary question | Are we meeting our reliability targets? | Why is the system behaving this way? | How is this application performing right now? |
| Core signals | Golden Signals + SLIs/SLOs | Logs, metrics, traces (full telemetry) | Response time, throughput, error rate, Apdex |
| Audience | SRE / on-call engineers | Platform engineering, DevOps, SRE | Dev teams, operations, management |
| Typical tools | Prometheus, Grafana, PagerDuty | OpenTelemetry, Jaeger, ELK Stack | Datadog, New Relic, Dynatrace, AppDynamics |
| Scope | Service reliability & error budgets | Full system internal state | Application transaction performance |

Table: SRE Monitoring vs. Observability vs. Application Performance Monitoring (APM)

In practice, mature engineering organizations treat these as complementary layers. Golden Signals surface what is wrong quickly; observability tooling explains why; APM dashboards give development teams actionable detail at the code level.

SLIs, SLOs, and Error Budgets in SRE Monitoring

Golden Signals generate raw measurements. SLIs and SLOs transform those measurements into reliability commitments that the business can understand and engineering teams can own.

Service Level Indicators (SLIs)

An SLI is a quantitative measure of a service behavior directly derived from a Golden Signal. For example:

Availability SLI: percentage of requests that return a non-5xx response.

Latency SLI: percentage of requests served in under 300 ms (a 95% target on this SLI is equivalent to requiring P95 latency below 300 ms).

Throughput SLI: percentage of expected message batches processed within the SLA window.
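As a minimal sketch of how the first two SLIs above could be computed from raw request records, consider the following. The `Request` type, the sample data, and the choice to treat an empty window as fully healthy are assumptions made for illustration; a real pipeline would usually compute these ratios in the metrics backend.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    status: int         # HTTP status code returned to the client
    duration_ms: float  # end-to-end request duration in milliseconds


def availability_sli(requests: list[Request]) -> float:
    """Fraction of requests that returned a non-5xx response."""
    if not requests:
        return 1.0  # assumption: no traffic counts as fully available
    good = sum(1 for r in requests if r.status < 500)
    return good / len(requests)


def latency_sli(requests: list[Request], threshold_ms: float = 300.0) -> float:
    """Fraction of requests served faster than threshold_ms."""
    if not requests:
        return 1.0  # assumption: no traffic counts as fully compliant
    fast = sum(1 for r in requests if r.duration_ms < threshold_ms)
    return fast / len(requests)


# Hypothetical sample window: one server error, one client error.
sample = [
    Request(200, 120.0),
    Request(200, 280.0),
    Request(404, 90.0),
    Request(500, 450.0),
]
print(f"availability SLI: {availability_sli(sample):.2%}")
print(f"latency SLI:      {latency_sli(sample):.2%}")
```

Note that the 404 counts as a good event for availability here, because only server-side failures are charged against the service.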

Service Level Objectives (SLOs)

An SLO is the target value for an SLI over a rolling window. A well-formed SLO looks like: "99.5% of requests must return a non-5xx response over a rolling 28-day window." SLOs are the bridge between Golden Signals and business impact. When your SLO says 99.5% availability and you are measuring 99.2% over the window, you have already overspent your error budget (0.8% of requests failed against an allowance of 0.5%), and that is the signal your team needs to prioritize reliability work over new features.

Error Budgets

An error budget is the allowable amount of unreliability defined by your SLO. For a 99.5% availability SLO over 28 days, the error budget is 0.5% of all requests, roughly 3.4 hours of complete downtime equivalent (0.005 × 672 hours). When the error budget is healthy, teams can ship changes confidently. When it is depleted or burning fast, the SRE team has a data-driven mandate to freeze releases and focus on reliability.
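The downtime-equivalent figure is simple arithmetic, sketched below. The function name is illustrative. For a 99.5% target the result is about 3.36 hours over a 28-day window and 3.6 hours over a 30-day window, so it is worth being explicit about which window a quoted number refers to.

```python
def error_budget_hours(slo_target: float, window_days: int) -> float:
    """Hours of full-downtime equivalent allowed by an availability SLO."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be a fraction between 0 and 1")
    # Budget fraction times the number of hours in the window.
    return (1.0 - slo_target) * window_days * 24


print(round(error_budget_hours(0.995, 28), 2))  # 28-day window
print(round(error_budget_hours(0.995, 30), 2))  # 30-day window
```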

Practical tip: Track error budget burn rate alongside your Golden Signals dashboard. A burn rate of 1x means you are consuming the budget at exactly the rate your SLO allows. A burn rate of 3x means you will exhaust your budget in one-third of the SLO window — an immediate escalation trigger.
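A rough sketch of the burn-rate calculation described in the tip: divide the observed error ratio by the ratio the SLO allows, then divide the window length by that rate to estimate time to exhaustion. Function names and the example error ratio are assumptions for illustration.

```python
def burn_rate(observed_error_ratio: float, slo_target: float) -> float:
    """Budget consumption speed relative to plan (1.0 = exactly on budget)."""
    allowed_error_ratio = 1.0 - slo_target
    return observed_error_ratio / allowed_error_ratio


def days_to_exhaustion(rate: float, window_days: int) -> float:
    """Days until a full budget is spent if the current rate persists."""
    if rate <= 0:
        return float("inf")  # nothing is being consumed
    return window_days / rate


# Hypothetical reading: 1.5% of requests failing against a 99.5% SLO.
rate = burn_rate(observed_error_ratio=0.015, slo_target=0.995)
print(f"burn rate: {rate:.1f}x")
print(f"budget lasts about {days_to_exhaustion(rate, 28):.1f} of 28 days")
```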

How to Monitor Microservices Using Golden Signals

Monitoring microservices requires a disciplined approach in environments where dozens of services interact across different technology stacks. Golden Signals provide a clear framework for tracking system health across these distributed systems.

Step 1: Define Your Observability Pipeline per Service

Each microservice should expose telemetry for all four Golden Signals. Integrate them directly with your SLI definitions from day one:

Latency — measure P50, P95, and P99 request duration per service.

Errors — capture 4xx/5xx HTTP codes and application-level exceptions separately.

Traffic — monitor RPS, message throughput, and connection concurrency.

Saturation — track CPU, memory, thread pool usage, and queue depth.
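To make the latency bullet concrete, here is a small sketch of P50/P95/P99 using the nearest-rank method. This is one of several percentile definitions, and monitoring backends typically estimate percentiles from histogram buckets instead, so treat it as an illustration of the idea and not of how any particular tool computes them. The sample durations are made up.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: smallest value with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical request durations in milliseconds, with one slow outlier.
durations_ms = [120, 95, 310, 150, 2200, 180, 140, 130, 160, 110]
for p in (50, 95, 99):
    print(f"P{p}: {percentile(durations_ms, p)} ms")
```

The outlier barely moves P50 but dominates P95 and P99, which is why tail percentiles are tracked alongside the median.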

Step 2: Choose a Unified Monitoring Stack

Popular platforms for production-grade application monitoring in microservices include:

Prometheus + Grafana — open-source, highly customizable, excellent for Kubernetes environments.

Datadog / New Relic — full-stack observability with built-in Golden Signals support and auto-instrumentation.

OpenTelemetry — CNCF-backed standard for vendor-neutral telemetry instrumentation.

Step 3: Isolate Service Boundaries

Group Golden Signals by service so you can detect where a problem originates rather than just knowing that something is wrong:

| Microservice | Latency (P95) | Error Rate | Traffic | Saturation |
| --- | --- | --- | --- | --- |
| Auth | 220ms | 1.2% | 5k RPS | 78% CPU |
| Payments | 310ms | 3.1% | 3k RPS | 89% Memory |
| Notifications | 140ms | 0.4% | 12k RPS | 55% CPU |
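Grouping by service is essentially a group-by over request events. The sketch below assumes each event is a `(service, status)` pair, which is a simplification chosen for illustration; in practice the service name is usually a label on the metric itself.

```python
from collections import defaultdict


def per_service_summary(events: list[tuple[str, int]]) -> dict[str, dict[str, float]]:
    """Aggregate request count and 5xx error rate per service."""
    totals: dict[str, int] = defaultdict(int)
    failures: dict[str, int] = defaultdict(int)
    for service, status in events:
        totals[service] += 1
        if status >= 500:  # only server-side failures count as errors
            failures[service] += 1
    return {
        service: {
            "requests": totals[service],
            "error_rate": failures[service] / totals[service],
        }
        for service in totals
    }


# Hypothetical events from two services.
events = [
    ("auth", 200), ("auth", 500),
    ("payments", 503), ("payments", 200), ("payments", 200), ("payments", 404),
]
summary = per_service_summary(events)
worst = max(summary, key=lambda s: summary[s]["error_rate"])
print(summary)
print("highest error rate:", worst)
```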

Step 4: Correlate Signals with Distributed Tracing

Use distributed tracing to map requests across services. Tools like Jaeger or Zipkin let you trace latency across hops, find the exact service causing error spikes, and visualize traffic flows and bottlenecks. A latency spike in the Payments service that traces back to a slow DB query is far more actionable than "P95 latency is high."

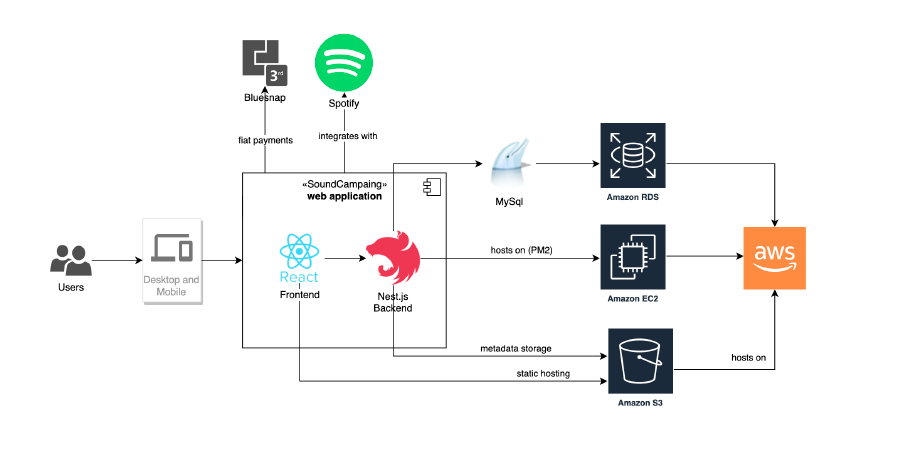

Learn how these principles apply in practice from our Centralized Monitoring case study for a B2C SaaS Music Platform.

Step 5: Automate Alerting with Context

Set thresholds and anomaly detection for each signal:

Latency > 500ms? Alert DevOps

Saturation > 90%? Trigger autoscaling

Error Rate > 2% over 5 mins? Notify engineering and create an incident ticket
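The three rules above can be sketched as a plain evaluation function. The `Snapshot` type and the action strings are hypothetical, and the thresholds are the illustrative ones from the list, not recommended production values. The snapshot is assumed to already hold values aggregated over the relevant window (for example, the error rate over the last 5 minutes).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    p95_latency_ms: float  # P95 latency over the evaluation window
    error_rate: float      # fraction of failed requests over the last 5 minutes
    saturation: float      # utilization of the most constrained resource (0..1)


def evaluate(snapshot: Snapshot) -> list[str]:
    """Return the actions triggered by the illustrative thresholds."""
    actions = []
    if snapshot.p95_latency_ms > 500:
        actions.append("alert-devops")
    if snapshot.saturation > 0.90:
        actions.append("trigger-autoscaling")
    if snapshot.error_rate > 0.02:
        actions.append("notify-engineering-and-open-incident")
    return actions


# Values taken from the Payments row of the per-service table.
payments = Snapshot(p95_latency_ms=310, error_rate=0.031, saturation=0.89)
print(evaluate(payments))
```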

Alerting Principles for SRE Teams

Effective application monitoring is only as useful as the alerting layer that translates signals into human action. Alert fatigue is one of the most common—and costly—failure modes in SRE programs. These principles help teams alert on what matters without overwhelming the on-call engineer.

Alert on Symptoms, Not Causes

Alert when the user experience is degraded (latency SLO is burning), not when a machine metric crosses a threshold. "CPU at 80%" is a cause; "P95 latency exceeding 500ms for 5 minutes" is a symptom your SLO cares about.

Use Error Budget Burn Rate as Your Primary Alert

A fast burn rate (e.g., 3x or 6x) on your error budget is a better paging condition than raw signal thresholds. It tells you not just that something is wrong, but how urgently you need to act based on your reliability commitments.

Sample Alert Thresholds (Illustrative Only)

| Signal | Sample Threshold | Suggested Action | Urgency |
| --- | --- | --- | --- |
| Latency (P95) | >500ms for 5 min | Page on-call SRE | High |
| Error Rate | >2% over 5 min | Create incident ticket + notify engineering | High |
| Saturation (CPU) | >90% for 10 min | Trigger autoscaling policy | Medium |
| Error Budget Burn | 3× rate for 1 hour | Incident call, feature freeze consideration | Critical |

Methodology note: These thresholds are starting-point illustrations. Your production values should be calibrated against your own service baselines, user SLAs, and SLO definitions. A payment service tolerates far less latency than an async batch job.

Practical Application: Using APM Dashboards for SRE Monitoring

Application Performance Monitoring (APM) dashboards integrate Golden Signals into a single view, allowing teams to monitor all critical metrics simultaneously. The operations team can use APM dashboards to get real-time insights into latency, errors, traffic, and saturation, which reduces the cognitive load during incident response.

The most valuable APM features for SRE teams include:

One-hop dependency views — shows only the immediate upstream and downstream services of a failing component, dramatically narrowing the root-cause investigation scope and reducing MTTR.

Centralized Golden Signals panels — all four signals per service in one view, eliminating tool-switching during incidents.

SLO burn rate overlays — trend lines showing how quickly the error budget is being consumed, integrated alongside raw Golden Signals.

Proactive anomaly detection — ML-powered tools like Datadog and Dynatrace flag statistically unusual patterns before thresholds breach.

What is the Significance of Distinguishing 500 vs. 400 Errors in SRE Monitoring?

The distinction between 500 and 400 errors in application monitoring is fundamental to correct incident prioritization. Conflating them inflates your error rate SLI and may generate alerts that do not reflect actual service degradation.

| Error Type | Cause | Severity | SRE Response |
| --- | --- | --- | --- |
| 500 (Server error) | System or application failure | High | Immediate investigation, possible incident declaration |
| 400 (Client error) | Bad input, expired auth token, invalid request | Lower | Monitor trends; investigate only on sustained spikes |

A good SLI definition for errors counts only server-side failures (5xx) against your reliability budget. A sudden 400-error spike may signal a client SDK bug, a bot campaign, or a broken authentication flow—all worth investigating, but none of them are a service outage.
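One way to keep the two error classes apart is to classify each status code once and report separate rates, so that only the server-side rate feeds the SLI. A minimal sketch, with illustrative names and sample data:

```python
from collections import Counter


def classify(status: int) -> str:
    """Bucket an HTTP status code by who is responsible for the failure."""
    if 500 <= status <= 599:
        return "server_error"
    if 400 <= status <= 499:
        return "client_error"
    return "ok"


def error_rates(statuses: list[int]) -> dict[str, float]:
    """Separate 5xx and 4xx rates; only the 5xx rate counts against the SLO."""
    if not statuses:
        return {"server_error_rate": 0.0, "client_error_rate": 0.0}
    counts = Counter(classify(s) for s in statuses)
    total = len(statuses)
    return {
        "server_error_rate": counts["server_error"] / total,
        "client_error_rate": counts["client_error"] / total,
    }


# Hypothetical window of ten responses.
statuses = [200, 201, 404, 401, 500, 503, 200, 200, 200, 200]
print(error_rates(statuses))
```

The client-error rate is still worth charting as a trend, since a sustained rise can point to a broken client or authentication flow.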

SRE Monitoring Dashboard Best Practices

A well-structured SRE dashboard makes or breaks incident response. It is not about displaying all available data—it is about surfacing the right insights at the right time. See the official Google SRE Book on monitoring for the principles that underpin these practices.

1. Prioritize Golden Signals and SLO Burn Rate at the Top

Place latency (P50/P95), error rate (%), traffic (RPS), and saturation front and center. Add SLO burn rate immediately below so engineers can assess reliability impact at a glance without scrolling.

2. Use Visual Cues Consistently

Color-code thresholds (green / yellow / red), use sparklines for trend visualization, and heatmaps to identify saturation patterns across clusters or availability zones.

3. Segment by Environment and Service

Separate production, staging, and dev views. Within production, segment by service or team ownership and by availability zone. This isolation dramatically reduces the time to pinpoint which service is responsible during an incident.

4. Link Metrics to Logs and Traces

Make your dashboards navigable: a latency spike should be one click away from the related trace in Jaeger, and a spike in errors should link directly to filtered log output in Kibana or Grafana Loki.

5. Provide Role-Appropriate Views

Use templating (Grafana variables, Datadog template variables) to serve multiple audiences from a single dashboard: SRE/on-call engineers need real-time signal detail; engineering teams need per-service deep dives; leadership needs SLO health summaries.

6. Treat Dashboards as Living Documents

Prune panels that nobody uses, reassess thresholds quarterly against updated baselines, and add deployment or incident annotations so that future engineers understand historical anomalies in context.

How Gart Implements SRE Monitoring in 30–60 Days

Generic best practices are helpful, but implementation details are where most teams struggle. Here is how Gart's SRE team approaches application monitoring engagements from day one, based on hands-on delivery experience across SaaS, cloud-native, and distributed environments—reviewed by Fedir Kompaniiets, Co-founder at Gart Solutions, who has designed monitoring and observability systems across multiple industries.

Days 1–14: Baseline and Instrumentation

Audit existing telemetry: what is already collected, what is missing, what is noisy.

Instrument all services with OpenTelemetry or native exporters for all four Golden Signals.

Deploy Prometheus + Grafana or connect to the client's existing observability platform.

Establish baseline latency, error rate, and saturation profiles per service under normal load.

Days 15–30: SLIs, SLOs, and Initial Alerting

Define SLIs for each critical service in collaboration with product and engineering stakeholders.

Draft SLOs and calculate initial error budgets based on business risk tolerance.

Configure symptom-based alerts (burn rate, not raw thresholds) with PagerDuty or Opsgenie routing.

Stand up the first three dashboards: overall service health, per-service Golden Signals, SLO burn rate.

Days 31–60: Noise Reduction and Handover

Tune alert thresholds against the observed baseline to eliminate alert fatigue.

Remove noisy, low-signal alerts that were generating false pages.

Integrate distributed tracing for the highest-traffic services.

Run a simulated incident to validate the monitoring stack end-to-end before handover.

Deliver runbooks and on-call documentation tied to each alert condition.

Real outcome: After implementing Golden Signals and SLO-based alerting for a B2C SaaS platform, the client reduced MTTR by 60% within two months. The primary driver was eliminating alert fatigue (previously 80+ daily alerts, reduced to 8 actionable ones) and linking every alert to a runbook with a clear first-responder action. Read the full context: Centralized Monitoring for a B2C SaaS Music Platform.

Watch How we Built "Advanced Monitoring for Sustainable Landfill Management"

Conclusion

Ready to take your system's reliability and performance to the next level? Gart Solutions offers top-tier SRE Monitoring services to ensure your systems are always running smoothly and efficiently. Our experts can help you identify and address potential issues before they impact your business, ensuring minimal downtime and optimal performance.

Gart Solutions · Expert SRE Services

Is Your Application Monitoring Ready for Production?

Engineering teams that invest in proper SRE monitoring and application monitoring reduce MTTR, protect error budgets, and ship with confidence. Gart's SRE team has designed and deployed monitoring stacks for SaaS platforms, Kubernetes-native environments, fintech, and healthcare systems.

60% MTTR reduction for SaaS clients

30 days to working SLO dashboards

99.9% availability target for managed clients

Our services cover the full monitoring lifecycle — from telemetry instrumentation and Golden Signal dashboards to SLO definition, alert tuning, and on-call runbooks.

Golden Signals Setup

SLI / SLO Definition

Prometheus + Grafana

Alert Tuning

Distributed Tracing

Kubernetes Monitoring

Incident Runbooks



Fedir Kompaniiets

Co-founder & CEO, Gart Solutions · Cloud Architect & DevOps Consultant

Fedir is a technology enthusiast with over a decade of diverse industry experience. He co-founded Gart Solutions to address complex tech challenges related to Digital Transformation, helping businesses focus on what matters most — scaling. Fedir is committed to driving sustainable IT transformation, helping SMBs innovate, plan future growth, and navigate the "tech madness" through expert DevOps and Cloud managed services. Connect on LinkedIn.

The cloud offers incredible scalability and agility, but as businesses increasingly embrace it, managing costs has become a critical concern. The flexibility and scalability of cloud services come with a price tag that can quickly spiral out of control without proper optimization strategies in place.

In this post, I'll share some practical tips to help you maximize the value of your cloud investments while minimizing unnecessary expenses.


Main Components of Cloud Costs

| Component | Description |
| --- | --- |
| Compute Instances | Cost of virtual machines or compute instances used in the cloud. |
| Storage | Cost of storing data in the cloud, including object storage, block storage, etc. |
| Data Transfer | Cost associated with transferring data within the cloud or to/from external networks. |
| Networking | Cost of network resources like load balancers, VPNs, and other networking components. |
| Database Services | Cost of utilizing managed database services, both relational and NoSQL databases. |
| Content Delivery Network (CDN) | Cost of using a CDN for content delivery to end users. |
| Additional Services | Cost of using additional cloud services like machine learning, analytics, etc. |

Table: Main Components of Cloud Costs

Are you looking for ways to reduce your cloud operating costs? Look no further! Contact Gart today for expert assistance in optimizing your cloud expenses.

10 Cloud Cost Optimization Strategies

Here are some key strategies to optimize your cloud spending:

Analyze Current Cloud Usage and Costs

Analyzing your current cloud usage and costs is an essential first step towards optimizing your cloud operating costs. Start by examining the cloud services and resources currently in use within your organization. This includes virtual machines, storage solutions, databases, networking components, and any other services utilized in the cloud. Take stock of the specific configurations, sizes, and usage patterns associated with each resource.

Once you have a comprehensive overview of your cloud infrastructure, identify any resources that are underutilized or no longer needed. These could be instances running at low utilization levels, storage volumes with little data, or services that have become obsolete or redundant. By identifying and addressing such resources, you can eliminate unnecessary costs.

Dig deeper into your cloud costs and identify the key drivers behind your expenditure. Look for patterns and trends in your usage data to understand which services or resources are consuming the majority of your cloud budget. It could be a particular type of instance, high data transfer volumes, or storage solutions with excessive replication. This analysis will help you prioritize cost optimization efforts.

During this analysis phase, leverage the cost management tools provided by your cloud service provider. These tools often offer detailed insights into resource usage, costs, and trends, allowing you to make data-driven decisions for cost optimization.

Optimize Resource Allocation

Optimizing resource allocation is crucial for reducing cloud operating costs while ensuring optimal performance.

Leverage Autoscaling

Adopt Reserved Instances

Utilize Spot Instances

Rightsize Resources

Optimize Storage

Assess the utilization of your cloud resources and identify instances or services that are over-provisioned or underutilized. Right-sizing involves matching the resource specifications (e.g., CPU, memory, storage) to the actual workload requirements. Downsize instances that are consistently running at low utilization, freeing up resources for other workloads. Similarly, upgrade underpowered instances experiencing performance bottlenecks to improve efficiency.
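As a rough illustration of right-sizing, the sketch below labels instances by their average CPU utilization. The 20% and 80% cut-offs and the instance names are assumptions made for the example; real decisions should also consider memory, I/O, and peak (not only average) load.

```python
def rightsizing_hint(avg_cpu: float, low: float = 0.20, high: float = 0.80) -> str:
    """Suggest an action from average CPU utilization (0..1)."""
    if avg_cpu < low:
        return "downsize"  # consistently under-utilized
    if avg_cpu > high:
        return "upsize"    # likely a performance bottleneck
    return "keep"


# Hypothetical fleet: instance name -> average CPU utilization.
fleet = {"web-1": 0.08, "web-2": 0.55, "batch-1": 0.93}
hints = {name: rightsizing_hint(cpu) for name, cpu in fleet.items()}
print(hints)
```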

Take advantage of cloud scalability features to align resources with varying workload demands. Autoscaling allows resources to automatically adjust based on predefined thresholds or performance metrics. This ensures you have enough resources during peak periods while reducing costs during periods of low demand. Autoscaling can be applied to compute instances, databases, and other services, optimizing resource allocation in real-time.
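One common threshold-based scaling rule is proportional: scale the replica count by the ratio of the observed metric to its target, then clamp to configured bounds. The Kubernetes Horizontal Pod Autoscaler documents a rule of this shape; the sketch below is a simplified version that ignores tolerances and stabilization windows, and the parameter names and default bounds are illustrative.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
) -> int:
    """Proportional scaling: replicas grow with metric / target, within bounds."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# 4 replicas at 90% CPU against a 60% target.
print(desired_replicas(4, current_metric=90, target_metric=60))
# 4 replicas at 30% CPU against a 60% target.
print(desired_replicas(4, current_metric=30, target_metric=60))
```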

Reserved instances (RIs) or savings plans offer significant cost savings for predictable or consistent workloads over an extended period. By committing to a fixed term (e.g., 1 or 3 years), optionally with partial or full upfront payment, you can achieve substantial discounts compared to on-demand pricing. Analyze your workload patterns and identify instances that have steady usage to maximize savings with RIs or savings plans.
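Whether a commitment pays off depends on how many hours the instance actually runs. A reserved instance is billed for every hour of the term, so it wins only when expected utilization exceeds the ratio of the reserved rate to the on-demand rate. The sketch below uses made-up prices and function names purely to show the arithmetic.

```python
def break_even_utilization(on_demand_hourly: float, reserved_effective_hourly: float) -> float:
    """Fraction of hours an instance must run for the reservation to break even."""
    return reserved_effective_hourly / on_demand_hourly


def cheaper_option(
    on_demand_hourly: float,
    reserved_effective_hourly: float,
    expected_utilization: float,
) -> str:
    """Pick the cheaper pricing model for a given expected utilization (0..1)."""
    threshold = break_even_utilization(on_demand_hourly, reserved_effective_hourly)
    return "reserved" if expected_utilization > threshold else "on-demand"


# Hypothetical prices: $0.10/h on demand, $0.06/h effective reserved rate.
print(f"break-even: {break_even_utilization(0.10, 0.06):.0%} utilization")
print(cheaper_option(0.10, 0.06, expected_utilization=0.90))
print(cheaper_option(0.10, 0.06, expected_utilization=0.30))
```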

For workloads that are flexible and can tolerate interruptions, spot instances can be a cost-effective option. Spot instances are spare computing capacity offered at steep discounts (up to 90% off on AWS) compared to on-demand prices. However, these instances can be reclaimed by the cloud provider with little notice, making them suitable for fault-tolerant, interruptible tasks.

When optimizing resource allocation, it's crucial to continuously monitor and adjust your resource configurations based on changing workload patterns. Leverage cloud provider tools and services that provide insights into resource utilization and performance metrics, enabling you to make data-driven decisions for efficient resource allocation.

Implement Cost Monitoring and Budgeting

Implementing effective cost monitoring and budgeting practices is crucial for maintaining control over cloud operating costs.

Take advantage of the cost management tools and features offered by your cloud provider. These tools provide detailed insights into your cloud spending, resource utilization, and cost allocation. They often include dashboards, reports, and visualizations that help you understand the cost breakdown and identify areas for optimization. Familiarize yourself with these tools and leverage their capabilities to gain better visibility into your cloud costs.

Configure cost alerts and notifications to receive real-time updates on your cloud spending. Define spending thresholds that align with your budget and receive alerts when costs approach or exceed those thresholds. This allows you to proactively monitor and control your expenses, ensuring you stay within your allocated budget. Timely alerts enable you to identify any unexpected cost spikes or unusual patterns and take appropriate actions.
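The threshold logic behind such alerts is straightforward, as this sketch suggests. The 50%, 80%, and 100% levels and the dollar amounts are assumptions chosen for illustration; cloud providers' budgeting tools let you configure equivalent rules without writing code.

```python
def crossed_thresholds(
    spend: float,
    budget: float,
    thresholds: tuple[float, ...] = (0.5, 0.8, 1.0),
) -> list[float]:
    """Return the budget fractions that current spend has reached or exceeded."""
    if budget <= 0:
        raise ValueError("budget must be positive")
    used = spend / budget
    return [t for t in thresholds if used >= t]


# Hypothetical month-to-date spend against a $1,000 monthly budget.
print(crossed_thresholds(spend=850.0, budget=1000.0))
print(crossed_thresholds(spend=1200.0, budget=1000.0))
```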

Set a budget for your cloud operations, allocating specific spending limits for different services or departments. This budget should align with your business objectives and financial capabilities. Regularly review and analyze your cost performance against the budget to identify any discrepancies or areas for improvement. Adjust the budget as needed to optimize your cloud spending and align it with your organizational goals.

By implementing cost monitoring and budgeting practices, you gain better visibility into your cloud spending and can take proactive steps to optimize costs. Regularly reviewing cost performance allows you to identify potential cost-saving opportunities, make informed decisions, and ensure that your cloud usage remains within the defined budget.

Remember to involve relevant stakeholders, such as finance and IT teams, to collaborate on budgeting and align cost optimization efforts with your organization's overall financial strategy.

Use Cost-effective Storage Solutions

To optimize cloud operating costs, it is important to use cost-effective storage solutions.

Begin by assessing your storage requirements and understanding the characteristics of your data. Evaluate the available storage options, such as object storage and block storage, and choose the most suitable option for each use case. Object storage is ideal for storing large amounts of unstructured data, while block storage is better suited for applications that require high performance and low latency. By aligning your storage needs with the appropriate options, you can avoid overprovisioning and optimize costs.

Implement data lifecycle management techniques to efficiently manage your data throughout its lifecycle. This involves practices like data tiering, where you classify data based on its frequency of access or importance and store it in the appropriate storage tiers. Frequently accessed or critical data can be stored in high-performance storage, while less frequently accessed or archival data can be moved to lower-cost storage options. Archiving infrequently accessed data to cost-effective storage tiers can significantly reduce costs while maintaining data accessibility.
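Data tiering by access recency can be sketched as a simple classification. The tier names and the 30-day and 90-day cut-offs are assumptions for the example; actual tier names, minimum storage durations, and retrieval fees vary by provider and should be checked before moving data.

```python
from datetime import date


def storage_tier(
    last_accessed: date,
    today: date,
    cool_after_days: int = 30,
    archive_after_days: int = 90,
) -> str:
    """Choose a storage tier from the number of days since last access."""
    age_days = (today - last_accessed).days
    if age_days >= archive_after_days:
        return "archive"
    if age_days >= cool_after_days:
        return "cool"
    return "hot"


today = date(2024, 6, 30)
print(storage_tier(date(2024, 6, 25), today))  # accessed 5 days ago
print(storage_tier(date(2024, 5, 1), today))   # accessed 60 days ago
print(storage_tier(date(2024, 1, 1), today))   # accessed 181 days ago
```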

Cloud providers often provide features such as data compression, deduplication, and automated storage tiering. These features help optimize storage utilization, reduce redundancy, and improve overall efficiency. By leveraging these built-in optimization features, you can lower your storage costs without compromising data availability or performance.

Regularly review your storage usage and make adjustments based on changing needs and data access patterns. Remove any unnecessary or outdated data to avoid incurring unnecessary costs. Periodically evaluate storage options and pricing plans to ensure they align with your budget and business requirements.

Employ Serverless Architecture

Employing a serverless architecture can significantly contribute to reducing cloud operating costs.

Embrace serverless computing platforms provided by cloud service providers, such as AWS Lambda or Azure Functions. These platforms allow you to run code without managing the underlying infrastructure. With serverless, you can focus on writing and deploying functions or event-driven code, while the cloud provider takes care of resource provisioning, maintenance, and scalability.

One of the key benefits of serverless architecture is its cost model, where you only pay for the actual execution of functions or event triggers. Traditional computing models require provisioning resources for peak loads, resulting in underutilization during periods of low activity. With serverless, you are charged based on the precise usage, which can lead to significant cost savings as you eliminate idle resource costs.
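To make the pay-per-use model concrete, here is a rough cost comparison. It assumes a billing scheme based on memory-seconds plus a per-request fee, which is how several function platforms charge; every price in the example is a placeholder, not a real rate, so substitute figures from your provider's pricing page.

```python
def serverless_monthly_cost(
    invocations: int,
    avg_duration_s: float,
    memory_gb: float,
    price_per_gb_second: float,
    price_per_million_requests: float,
) -> float:
    """Estimate monthly cost when billed only for execution time and requests."""
    compute = invocations * avg_duration_s * memory_gb * price_per_gb_second
    requests = invocations / 1_000_000 * price_per_million_requests
    return compute + requests


def always_on_monthly_cost(hourly_rate: float, hours: int = 730) -> float:
    """Estimate monthly cost of a server that runs whether or not it is busy."""
    return hourly_rate * hours


# Placeholder workload and prices, for illustration only.
functions = serverless_monthly_cost(
    invocations=1_000_000,
    avg_duration_s=0.2,
    memory_gb=0.5,
    price_per_gb_second=0.00002,
    price_per_million_requests=0.20,
)
server = always_on_monthly_cost(hourly_rate=0.05)
print(f"serverless: {functions:.2f}  always-on: {server:.2f}")
```

The comparison flips for sustained, high-volume workloads, so it is worth rerunning the arithmetic with your own traffic numbers before committing to either model.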

Serverless platforms automatically scale your functions based on incoming requests or events. This means that resources are allocated dynamically, scaling up or down based on workload demands. This automatic scaling eliminates the need for manual resource provisioning, reducing the risk of overprovisioning and ensuring optimal resource utilization. With automatic scaling, you can handle spikes in traffic or workload without incurring additional costs for idle resources.

When adopting serverless architecture, it's important to design your applications or functions to take full advantage of its benefits. Decompose your applications into smaller, independent functions that can be executed individually, ensuring granular scalability and cloud cost optimization.

Consider Multi-Cloud and Hybrid Cloud Strategies

Considering multi-cloud and hybrid cloud strategies can help optimize cloud operating costs while maximizing flexibility and performance.

Evaluate the pricing models, service offerings, and discounts provided by different cloud providers. Compare the costs of comparable services, such as compute instances, storage, and networking, to identify the most cost-effective options. Take into account the specific needs of your workloads and consider factors like data transfer costs, regional pricing variations, and pricing commitments. By leveraging competition among cloud providers, you can negotiate better pricing and optimize your cloud costs.

Analyze your workloads and determine the most suitable cloud environment for each workload. Some workloads may perform better or have lower costs in specific cloud providers due to their specialized services or infrastructure. Consider factors like latency, data sovereignty, compliance requirements, and service-level agreements (SLAs) when deciding where to deploy your workloads. By strategically placing workloads, you can optimize costs while meeting performance and compliance needs.

Adopt a hybrid cloud strategy that combines on-premises infrastructure with public cloud services. Utilize on-premises resources for workloads with stable demand or data that requires local processing, while leveraging the scalability and cost-efficiency of the public cloud for variable or bursty workloads. This hybrid approach allows you to optimize costs by using the most cost-effective infrastructure for different aspects of your data processing pipeline.

Automate Resource Management and Provisioning

Automating resource management and provisioning is key to optimizing cloud operating costs and improving operational efficiency.

Infrastructure-as-code (IaC) tools such as Terraform or CloudFormation allow you to define and manage your cloud infrastructure as code. With IaC, you can express your infrastructure requirements in a declarative format, enabling automated provisioning, configuration, and management of resources. This approach ensures consistency, repeatability, and scalability while reducing manual efforts and potential configuration errors.

Automate the process of provisioning and deprovisioning cloud resources based on workload requirements. By using scripting or orchestration tools, you can create workflows or scripts that automatically provision resources when needed and release them when they are no longer required. This automation eliminates the need for manual intervention, reduces resource wastage, and optimizes costs by ensuring resources are only provisioned when necessary.

Auto-scaling enables your infrastructure to dynamically adjust its capacity based on workload demands. By setting up auto-scaling rules and policies, you can automatically add or remove resources in response to changes in traffic or workload patterns. This ensures that you have the right amount of resources available to handle workload spikes without overprovisioning during periods of low demand. Auto-scaling optimizes resource allocation, improves performance, and helps control costs by scaling resources efficiently.
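One common way to express an auto-scaling rule is proportional scaling toward a target metric value, similar in spirit to the formula used by the Kubernetes Horizontal Pod Autoscaler. The sketch below is a simplified illustration under that assumption; the function name, parameters, and bounds are hypothetical, and real autoscalers add cooldowns and tolerance bands on top.

```python
import math


def desired_capacity(
    current: int,
    metric_value: float,
    target_value: float,
    min_capacity: int,
    max_capacity: int,
) -> int:
    """Scale capacity in proportion to how far the metric is from its target."""
    if target_value <= 0:
        raise ValueError("target_value must be positive")
    raw = math.ceil(current * metric_value / target_value)
    # Clamp so a metric spike or dip cannot push capacity outside agreed limits.
    return max(min_capacity, min(max_capacity, raw))


# 4 instances at 90% average CPU against a 60% target: scale out.
print(desired_capacity(4, 90, 60, min_capacity=2, max_capacity=10))
# 4 instances at 15% average CPU: scale in, but not below the minimum.
print(desired_capacity(4, 15, 60, min_capacity=2, max_capacity=10))
```

The minimum and maximum bounds are where cost control enters: the maximum caps spend during a spike, and the minimum protects availability during quiet periods.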

It's important to regularly review and optimize your automation scripts, policies, and configurations to align them with changing business needs and evolving workload patterns. Monitor resource utilization and performance metrics to fine-tune auto-scaling rules and ensure optimal resource allocation.

Optimize Data Transfer and Bandwidth Usage

Optimizing data transfer and bandwidth usage is crucial for reducing cloud operating costs.

Analyze your data flows and minimize unnecessary data transfer between cloud services and different regions. When designing your architecture, consider the proximity of services and data to minimize cross-region data transfer. Opt for services and resources located in the same region whenever possible to reduce latency and data transfer costs. Additionally, use efficient data transfer protocols and optimize data payloads to minimize bandwidth usage.

Employ content delivery networks (CDNs) to cache and distribute content closer to your end users. CDNs have a network of edge servers distributed across various locations, enabling faster content delivery by reducing the distance data needs to travel. By caching content at edge locations, you can minimize data transfer from your origin servers to end users, reducing bandwidth costs and improving user experience.

Implement data compression and caching techniques to optimize bandwidth usage. Compressing data before transferring it between services or to end users reduces the amount of data transmitted, resulting in lower bandwidth costs. Additionally, leverage caching mechanisms to store frequently accessed data closer to users or within your infrastructure, reducing the need for repeated data transfers. Caching helps improve performance and reduces bandwidth usage, particularly for static or semi-static content.
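The effect of compression on transfer volume is easy to measure before committing to it. The following sketch compresses a made-up JSON payload with gzip from the Python standard library; the record structure is invented for illustration, and real savings depend heavily on how repetitive your data is.

```python
import gzip
import json

# Invented sample payload: repetitive JSON compresses well, binary media may not.
records = [{"id": i, "status": "ok", "region": "eu-west-1"} for i in range(1000)]
raw = json.dumps(records).encode("utf-8")
compressed = gzip.compress(raw)

print(f"raw bytes:        {len(raw)}")
print(f"compressed bytes: {len(compressed)}")
print(f"size ratio:       {len(compressed) / len(raw):.2%}")

# Compression is lossless: the receiver recovers the exact original bytes.
restored = gzip.decompress(compressed)
```

Compression trades CPU time for bandwidth, so it pays off most on large, text-like payloads that cross billed network boundaries.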

Evaluate Reserved Instances and Savings Plans

It is important to evaluate and leverage Reserved Instances (RIs) and Savings Plans provided by cloud service providers.

Analyze your historical usage patterns and identify workloads or services with consistent, predictable usage over an extended period. These workloads are ideal candidates for long-term commitments. By understanding your long-term usage requirements, you can determine the appropriate level of reservation coverage needed to optimize costs.

Reserved Instances (RIs) and Savings Plans are cost-saving options offered by cloud providers. RIs allow you to reserve instances for a specified term, typically one or three years, at a significantly discounted rate compared to on-demand pricing. Savings Plans provide more flexible coverage in exchange for committing to a specific dollar amount of usage per hour; depending on the plan type, the discount applies either within a single instance family or across instance families, sizes, and regions. Evaluate your usage patterns and purchase RIs or Savings Plans accordingly to benefit from the cost savings they offer.
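Whether a commitment pays off depends on how many hours the workload actually runs. A simple break-even check is sketched below; it assumes the committed rate is paid for every hour of the term regardless of use, and both hourly rates are placeholders rather than real prices.

```python
def break_even_utilization(on_demand_hourly: float, reserved_hourly: float) -> float:
    """Fraction of hours a workload must run for the commitment to match on-demand."""
    if on_demand_hourly <= 0:
        raise ValueError("on_demand_hourly must be positive")
    return reserved_hourly / on_demand_hourly


def cheaper_option(
    on_demand_hourly: float, reserved_hourly: float, expected_utilization: float
) -> str:
    """Compare effective cost per calendar hour for the two pricing models."""
    on_demand_cost = on_demand_hourly * expected_utilization  # pay only when running
    reserved_cost = reserved_hourly                           # pay for every hour
    return "reserved" if reserved_cost < on_demand_cost else "on-demand"


# Placeholder rates: 0.10 per hour on demand, 0.06 effective per hour when reserved.
print(f"break-even: {break_even_utilization(0.10, 0.06):.0%}")
print(cheaper_option(0.10, 0.06, expected_utilization=0.9))
print(cheaper_option(0.10, 0.06, expected_utilization=0.3))
```

Workloads that run well above the break-even fraction are the candidates for commitment; anything near or below it is usually better left on demand.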

Cloud usage and requirements may change over time, so it is crucial to regularly review your reserved instances and savings plans. Assess if the existing reservations still align with your workload demands and make adjustments as needed. This may involve modifying the reservation terms, resizing or exchanging instances, or reallocating savings plans to different services or instance families. By optimizing your reservations based on evolving needs, you can ensure that you maximize cost savings and minimize unused or underutilized resources.

Continuously Monitor and Optimize

Monitor your cloud usage and costs regularly to identify opportunities for cloud cost optimization. Analyze resource utilization, identify underutilized or idle resources, and make necessary adjustments such as rightsizing instances, eliminating unused services, or reconfiguring storage allocations. Continuously assess your workload demands and adjust resource allocation accordingly to ensure optimal usage and cost efficiency.
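As a starting point for spotting idle resources, average utilization can be compared against a threshold. The resource names, CPU samples, and the 10% cutoff below are all invented for illustration; a real review would pull metrics from your monitoring system and also look at memory, network, and request volume.

```python
from statistics import mean
from typing import Dict, List


def find_underutilized(
    cpu_by_resource: Dict[str, List[float]], threshold_pct: float = 10.0
) -> List[str]:
    """Return names of resources whose average CPU is below the threshold."""
    flagged = []
    for name, samples in cpu_by_resource.items():
        # Skip resources with no samples instead of treating them as idle.
        if samples and mean(samples) < threshold_pct:
            flagged.append(name)
    return sorted(flagged)


# Invented CPU utilization samples (percent) per resource.
cpu_samples = {
    "web-1": [55.0, 61.5, 48.0],
    "batch-7": [2.0, 3.5, 1.0],
    "db-2": [35.0, 40.0, 38.5],
    "old-test-env": [0.5, 0.2, 0.9],
}
print(find_underutilized(cpu_samples))
```

The flagged list is a prompt for investigation, not an automatic shutdown list: some low-CPU resources are standby capacity that exists on purpose.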

Cloud service providers frequently introduce new cost optimization features, tools, and best practices. Stay informed about these updates and enhancements to leverage them effectively. Subscribe to newsletters, participate in webinars, or engage with cloud provider communities to stay up to date with the latest cost optimization strategies. By adopting new features as they become available, you can further optimize your cloud costs and capture emerging cost-saving opportunities.

Create awareness and promote a culture of cost consciousness and cloud cost optimization across your organization. Educate and train your teams on cost optimization strategies, best practices, and tools. Encourage employees to be mindful of resource usage, waste reduction, and cost-saving measures. Establish clear cost management policies and guidelines, and regularly communicate cost-saving success stories to encourage and motivate cost optimization efforts.

Real-world Examples of Cloud Operating Costs Reduction Strategies

AWS Cost Optimization and CI/CD Automation for Entertainment Software Platform

This case study showcases how Gart helped an entertainment software platform optimize their cloud operating costs on AWS while enhancing their Continuous Integration/Continuous Deployment (CI/CD) processes.

The entertainment software platform was facing challenges with escalating cloud costs due to inefficient resource allocation and manual deployment processes. Gart stepped in to identify cost optimization opportunities and implement effective strategies.

Through their expertise in AWS cost optimization and CI/CD automation, Gart successfully helped the entertainment software platform optimize their cloud operating costs, reduce manual efforts, and improve deployment efficiency.

Optimizing Costs and Operations for Cloud-Based SaaS E-Commerce Platform

This case study showcases how Gart helped a cloud-based SaaS e-commerce platform optimize their cloud operating costs and streamline their operations.

The e-commerce platform was facing challenges with rising cloud costs and operational inefficiencies. Gart began by conducting a comprehensive assessment of the platform's cloud environment, including resource utilization, workload patterns, and cost drivers. Based on this analysis, we devised a cost optimization strategy that focused on rightsizing resources, leveraging reserved instances, and implementing resource scheduling based on demand.

By rightsizing instances to match the actual workload requirements and utilizing reserved instances to take advantage of cost savings, Gart helped the e-commerce platform significantly reduce their cloud operating costs.

Furthermore, we implemented resource scheduling based on demand, ensuring that resources were only active when needed, leading to further cost savings. We also optimized storage costs by implementing data lifecycle management techniques and leveraging cost-effective storage options.

In addition to cost optimization, Gart worked on streamlining the platform's operations. We automated infrastructure provisioning and deployment processes using infrastructure-as-code (IaC) tools like Terraform, improving efficiency and reducing manual efforts.

Azure Cost Optimization for a Software Development Company

This case study highlights how Gart helped a software development company optimize their cloud operating costs on the Azure platform.

The software development company was experiencing challenges with high cloud costs and a lack of visibility into cost drivers. Gart intervened to analyze their Azure infrastructure and identify opportunities for cost optimization.

We began by conducting a thorough assessment of the company's Azure environment, examining resource utilization, workload patterns, and cost allocation. Based on this analysis, we developed a cost optimization strategy tailored to the company's specific needs.

The strategy involved rightsizing Azure resources to match the actual workload requirements, identifying and eliminating underutilized resources, and implementing reserved instances for long-term cost savings. Gart also recommended and implemented Azure cost management tools and features to provide better cost visibility and tracking.

Additionally, we worked with the software development company to implement infrastructure-as-code (IaC) practices using tools like Azure DevOps and Azure Resource Manager templates. This allowed for streamlined resource provisioning and reduced manual efforts, further optimizing costs.

Conclusion: Cloud Cost Optimization

By taking a proactive approach to cloud cost optimization, businesses can not only reduce their expenses but also enhance their overall cloud operations, improve scalability, and drive innovation. With careful planning, monitoring, and optimization, businesses can achieve a cost-effective and efficient cloud infrastructure that aligns with their specific needs and budgetary goals.

Elevate your business with our Cloud Consulting Services! From migration strategies to scalable infrastructure, we deliver cost-efficient, secure, and innovative cloud solutions. Ready to transform? Contact us today.

ChatOps is an approach to DevOps in which operational work moves into a shared chat environment. It lets you run commands directly in the chat, and everyone can see the command history, interact with it, and learn from it. This sharing of information and processes benefits the entire team.

Whether it's deploying code, managing server resources, monitoring charts, sending SMS notifications, controlling clusters, or running basic commands, ChatOps allows you to do these tasks right from the chat platform. It simplifies communication with commands like "!deploy," providing a clear overview of your complex CI/CD process. This enhances visibility and reduces complexity during deployment.
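A chat command such as "!deploy" ultimately maps a message to a handler function. The sketch below shows one minimal way to do that in plain Python; the registry, the handler, and the default service and environment names are hypothetical, and the deploy step is simulated rather than wired to a real pipeline or chat platform.

```python
from typing import Callable, Dict, List, Optional

# Registry mapping command names to handler functions (hypothetical design).
HANDLERS: Dict[str, Callable[[List[str]], str]] = {}


def command(name: str):
    """Decorator that registers a function as the handler for a chat command."""
    def register(func: Callable[[List[str]], str]):
        HANDLERS[name] = func
        return func
    return register


@command("deploy")
def deploy(args: List[str]) -> str:
    service = args[0] if args else "default-service"
    environment = args[1] if len(args) > 1 else "staging"
    # A real handler would trigger a CI/CD job here; this one only reports.
    return f"Deploying {service} to {environment} (simulated)"


def handle_message(text: str) -> Optional[str]:
    """Return a reply for messages starting with '!', or None for normal chat."""
    if not text.startswith("!"):
        return None
    parts = text[1:].split()
    if not parts:
        return None
    name, args = parts[0], parts[1:]
    handler = HANDLERS.get(name)
    return handler(args) if handler else f"Unknown command: {name}"


print(handle_message("!deploy api production"))
```

Because every reply is posted back to the channel, the whole team sees who ran what and with which arguments, which is where the visibility benefit comes from.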

In this article, we'll explore the importance of ChatOps for DevOps teams, highlighting its benefits and how it improves collaboration and communication. Whether you're new to ChatOps or looking to improve your current practices, this guide offers insights on effectively using ChatOps in your DevOps workflows.

What is ChatOps?

ChatOps is a collaborative model that smoothly combines people, tools, processes, and automation into a clear workflow. This interconnected flow gathers tasks, ongoing work, and completed work in one place, manned by individuals, bots, and relevant tools. ChatOps' transparency tightens the feedback loop, improves information sharing, and encourages better collaboration among teams, positively impacting team culture and creating cross-training opportunities.

While collaboration through conversations isn't new, ChatOps is its digital-age version—a blend of proven collaboration methods with the latest technology. The result is a straightforward fusion that can potentially revolutionize how we work.

Conversations drive collaboration, learning, and innovation, fueling human progress. The pace of progress is accelerating rapidly, though it may be too subtle to fully grasp in a single lifetime. The world is experiencing exponential collaboration, with each passing year seeing an increased rate of cooperation.

Key Concepts of ChatOps

ChatOps, a key concept in team collaboration, acts as a central hub for communication. It brings team members, tools, and processes into one chat platform, making discussions, decisions, and task execution seamless. This approach boosts coordination, reduces context-switching, and enhances team productivity.

Automation Integration

ChatOps revolves around integrating automation and bots into the chat environment. Automation tools and scripts help automate routine tasks, execute commands, and trigger workflows directly from the chat platform. Bots act as virtual team members, handling repetitive tasks and providing information, freeing up human resources for more complex work.

Real-time Sharing and Transparency

ChatOps prioritizes real-time information sharing. Team members can stay updated on ongoing tasks and projects through conversations, commands, and notifications within the chat platform. This immediacy reduces decision-making delays, fostering transparency, collaboration, and quick responses to incidents or workflow changes.

DevOps Alignment

ChatOps aligns closely with DevOps principles and practices. It promotes collaboration, communication, and shared responsibility among developers, operations teams, and stakeholders in the software development lifecycle. By integrating DevOps methodologies into the chat environment, ChatOps facilitates seamless collaboration, streamlines continuous integration and delivery processes, and enhances overall efficiency and software development quality.

Ready to revolutionize your workflows with ChatOps? Get in touch with Gart to explore their successful use cases and experience in streamlining processes.

Successful Use Cases of ChatOps - Beyond Risk's Experience

Harnessing our extensive knowledge of ChatOps technologies, Gart has crafted an all-encompassing automation framework meticulously designed to meet the specific presale needs of Beyond Risk. Our team has devised an interactive process, allowing non-technical executives to effortlessly create dynamic and fully customized environments.

About the client:

To facilitate real-time communication and updates, Gart integrated Slack as the primary communication channel. All action results were delivered directly to the designated Slack channel, ensuring stakeholders were promptly informed about the request status.

The implementation used the Slack API for the interactive flow, AWS Lambda for business logic, and GitHub Actions with Terraform Cloud for infrastructure automation. By incorporating a notification step, Gart ensured visibility into the success or failure of the Terraform infrastructure automation processes.
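A notification step of this kind can be quite small. The sketch below is not the implementation used in the project; it is an illustrative example that builds a message in the simple JSON shape accepted by Slack incoming webhooks (an object with a "text" field) and posts it with the Python standard library. The function names, message wording, and environment name are assumptions, and the webhook URL must be supplied by the caller.

```python
import json
import urllib.request


def build_notification(success: bool, environment: str, details_url: str = "") -> dict:
    """Build a webhook payload reporting the outcome of an automation run."""
    status = "succeeded" if success else "failed"
    text = f"Terraform run for environment '{environment}' {status}."
    if details_url:
        text += f" Details: {details_url}"
    return {"text": text}


def send_notification(webhook_url: str, payload: dict) -> int:
    """POST the payload as JSON to the given webhook and return the HTTP status."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return response.status


# Only the payload is built here; sending requires a real webhook URL.
print(json.dumps(build_notification(True, "demo-env")))
```

Keeping payload construction separate from sending makes the message format easy to test without network access.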

Read more: Streamlining Presale Processes with ChatOps Automation

Need ChatOps for your business? Contact Gart today and discover how they successfully streamlined presale processes through automation.

Implementing ChatOps in Practice

To implement ChatOps effectively, focus on selecting the right chat tools, integrating automation, setting communication guidelines, and promoting cross-functional collaboration.

Choose the Right Chat Tools

Start by picking user-friendly and scalable chat tools like Slack, Microsoft Teams, or Mattermost. Evaluate their integration capabilities and security features to ensure they align with your team's needs.

Integrate Automation and Bots

Maximize ChatOps by integrating automation tools and bots into your chosen chat platform. Explore options like Hubot, ChatOps-enabled plugins, or custom solutions to automate tasks, execute commands, and enhance productivity.

Establish Clear Communication

Ensure successful ChatOps by defining its purpose and expectations within your team. Set clear guidelines for chat channels, naming conventions, and tagging to keep conversations organized. Encourage concise and context-rich messaging to reduce noise.

Promote Cross-Functional Collaboration

Foster collaboration among diverse team members by using ChatOps. Encourage developers, operations, and QA teams to share knowledge, collaborate, and contribute. By breaking down silos, ChatOps facilitates faster issue resolution and collective problem-solving.

5 Best ChatOps Tools to Streamline Devs' Work in 2024

When selecting ChatOps tools, consider factors such as ease of use, integration capabilities with your existing toolset, security features, and scalability to ensure they align with your team's requirements and objectives.

Slack is a popular chat platform widely used for ChatOps. It provides a rich set of features, including real-time messaging, file sharing, and integrations with various tools and services. Slack's robust API and extensive integration capabilities make it a versatile choice for implementing ChatOps workflows.

Microsoft Teams is another widely adopted collaboration platform suitable for ChatOps. It offers chat-based communication, audio/video conferencing, and seamless integration with other Microsoft products like Azure DevOps and Office 365. Teams' integration with Power Automate allows for building automated workflows directly within the platform.

Mattermost is an open-source, self-hosted chat platform that provides a secure and customizable environment for ChatOps. With its focus on privacy and data control, Mattermost is ideal for organizations with strict security requirements. It offers features like threaded conversations, file sharing, and integration with popular DevOps tools.

Hubot is an automation framework designed specifically for ChatOps. It can be integrated with various chat platforms and programmed to execute commands, automate tasks, and provide information on demand. Hubot supports a wide range of scripts and plugins, making it flexible and customizable for different ChatOps workflows.

ChatOps-enabled Tools

Many existing DevOps tools have built-in ChatOps capabilities or integrations with popular chat platforms. For example, tools like Jenkins, GitLab, and GitHub provide plugins or webhooks that allow for triggering builds, deployments, and other actions directly from chat platforms. These integrations help consolidate information and actions in a single location, enhancing collaboration and visibility.

Future Trends in ChatOps

Integration with AI and Natural Language Processing

As ChatOps continues to evolve, we can expect increased integration with AI and natural language processing (NLP) technologies. AI-powered bots and NLP algorithms can enhance the chat experience by enabling more sophisticated interactions, intelligent automation, and contextual understanding. ChatOps platforms may leverage AI to provide smart suggestions, automate routine tasks, and offer advanced analytics based on the conversations within the chat environment.

Expansion to Non-Technical Teams and Departments

While ChatOps has primarily been adopted by technical teams, the future holds potential for its expansion to non-technical teams and departments. By tailoring the chat platforms and workflows to suit the specific needs of different functions, organizations can foster cross-functional collaboration and extend the benefits of ChatOps beyond software development and operations. Teams such as HR, marketing, sales, and customer support can leverage ChatOps to streamline their workflows, enhance communication, and improve overall productivity.

Incorporation of Voice and Video Communication

The future of ChatOps may involve the integration of voice and video communication capabilities within the chat platforms. This expansion would enable teams to have real-time discussions, conduct virtual meetings, and share screens directly within the chat environment. Seamless transitions between text-based chat, voice, and video can enhance collaboration, particularly for distributed teams and remote work setups.

ChatOps as a Driver of Digital Transformation

ChatOps is poised to become a driving force behind digital transformation initiatives. By consolidating communication, automation, and collaboration into a single platform, ChatOps creates an environment conducive to agile workflows, rapid decision-making, and improved transparency. Organizations embracing ChatOps as part of their digital transformation strategies can experience increased operational efficiency, faster time to market, and enhanced customer experiences.

The future trends in ChatOps indicate a continued integration of AI and NLP, expansion to non-technical teams, incorporation of voice and video communication, and its role as a catalyst for digital transformation.

Need ChatOps for your business?

Revolutionize your development landscape with our DevOps solutions. Seamless integration, automated deployment, and enhanced collaboration await. Contact us to embark on a journey of innovation.