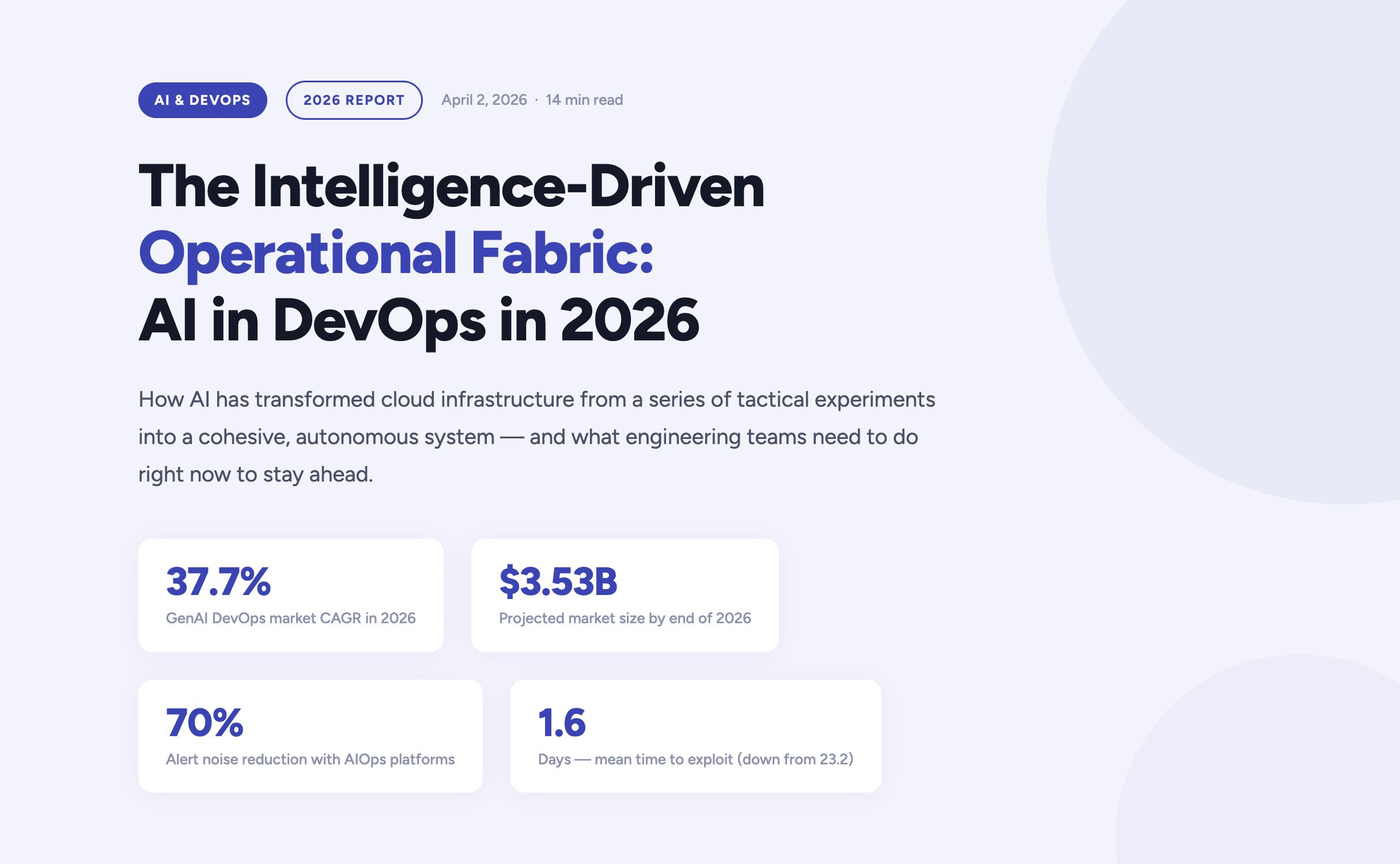

The year 2026 marks a definitive turning point in how enterprises build, deploy, and operate software. Artificial Intelligence has moved far beyond the experimental phase inside DevOps pipelines — it now forms the connective tissue of the entire software delivery lifecycle. According to current market analysis, the generative AI segment of the DevOps market is growing at a compound annual rate of 37.7%, expected to reach $3.53 billion by the end of this year alone.

For engineering teams, platform engineers, and CTOs navigating this shift, the questions are no longer "should we adopt AI?" but rather "how do we govern it?", "where does it amplify our strengths?", and critically — "where does it expose our weaknesses?". This article answers those questions, grounded in the realities of operating cloud infrastructure in 2026.

https://youtu.be/4FNyMRmHdTM?si=F2yOv89QU9gQ7Hif

The AI velocity paradox — why more code isn't always better

One of the most striking findings in the 2026 DevOps landscape is what researchers have begun calling the AI Velocity Paradox. AI-assisted coding tools have dramatically accelerated the code creation phase of the Software Development Life Cycle. However, the downstream delivery systems responsible for testing, securing, and deploying that code have often failed to keep pace — creating a structural mismatch between production and operations capacity.

The data tells a clear story. Teams that use AI coding tools daily are three times more likely to deploy frequently — but they also report significantly higher rates of quality failures, security incidents, and engineer burnout.

The AI DevOps maturity gap: occasional vs. daily AI tool users (2026 analysis)

| Performance Indicator | Occasional AI Usage | Daily AI Usage |
| --- | --- | --- |
| Daily deployment frequency | 15% of teams | 45% of teams |
| Frequent deployment issues | Minimal | 69% of teams |
| Mean Time to Recovery (MTTR) | 6.3 hours | 7.6 hours |
| Quality / security problems | Baseline | 51% quality / 53% security |
| Engineers working overtime | 66% | 96% |

The root cause is structural: a "six-lane highway" of AI-accelerated code generation is funneling into a "two-lane bridge" of operational capacity. Engineers spend an average of 36% of their time on repetitive manual tasks — chasing tickets, rerunning failed jobs, manually validating AI-generated code — while developer burnout now affects 47% of the engineering workforce.

The implication is clear: AI does not automatically improve DevOps outcomes. Applied to brittle pipelines or fragmented telemetry, it accelerates instability. Applied to robust, standardized foundations, it becomes a force multiplier. The organizations that succeed in 2026 are those that modernize their entire delivery system — not just the IDE.

"Tech should do more than work: it should do good, and it should scale purposefully."

Fedir Kompaniiets, CEO, Gart Solutions

Intent-to-Infrastructure — the evolution of IaC

Infrastructure as Code has been a DevOps cornerstone for years, but the model is undergoing a fundamental transformation in 2026. The industry is moving away from hand-crafted Terraform scripts and declarative state management toward what practitioners call Intent-to-Infrastructure — AI-powered platforms that interpret high-level business requirements and autonomously provision compliant, cost-optimized environments.

The evolution of Infrastructure as Code

| Generation | Primary Mechanism | Governance Model | Outcome Focus |
| --- | --- | --- | --- |
| IaC 1.0 (Legacy) | Manual scripting (Terraform, Ansible) | Periodic manual audits | Resource provisioning |
| IaC 2.0 (Standard) | Declarative state management | Automated policy checks | Environment consistency |
| Intent-Driven (2026) | AI translation of requirements | Continuous autonomous reconciliation | Business-aligned outcomes |

In the intent-driven model, a developer can express a requirement in plain language — for example, "provision a production-ready Kubernetes cluster with SOC 2-compliant networking for our EU-West workload" — and the platform autonomously generates, validates, and manages the resources. Compliance is no longer a retrospective audit exercise; it is embedded at the moment of generation.

This approach directly addresses one of the most persistent gaps in enterprise cloud governance: the Confidence Gap. While 77% of organizations report confidence in their AI-generated infrastructure, only 39% maintain the fully automated audit trails needed to actually verify those outputs. Intent-driven platforms close this gap by creating immutable, traceable records of every provisioning decision.

Key IaC Capabilities in 2026

Natural language provisioning — Describe infrastructure requirements in plain English, receiving validated, compliant Terraform or Pulumi code.

Golden path enforcement — Pre-approved patterns ensure every environment is secure by default, reducing misconfiguration risk.

Continuous autonomous reconciliation — AI continuously monitors for drift and self-corrects without human intervention.

Policy-as-code integration — OPA, Sentinel, and custom guardrails are embedded into generation pipelines, not added as an afterthought.

Cost-aware provisioning — FinOps constraints are applied at generation time, preventing over-provisioning before it happens.
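To make the policy-as-code and cost-aware provisioning ideas above more concrete, here is a minimal sketch of a guardrail check that runs against a generated plan before anything is provisioned. It is not how OPA, Sentinel, or any specific platform works internally. The `PlannedResource` shape, the allowed regions, and the budget figure are all assumptions made up for illustration.

```python
from dataclasses import dataclass


# Hypothetical, simplified view of one resource in an AI-generated plan.
@dataclass(frozen=True)
class PlannedResource:
    name: str
    kind: str
    region: str
    encrypted: bool
    monthly_cost_usd: float


# Illustrative guardrails; real values would come from your own policy set.
ALLOWED_REGIONS = {"eu-west-1", "eu-west-2"}
MONTHLY_BUDGET_USD = 5_000.0


def check_plan(resources: list[PlannedResource]) -> list[str]:
    """Return human-readable violations; an empty list means the plan passes."""
    violations = []
    for r in resources:
        if r.region not in ALLOWED_REGIONS:
            violations.append(f"{r.name}: region {r.region} is not allowed")
        if not r.encrypted:
            violations.append(f"{r.name}: encryption at rest is required")
    # Cost constraint is evaluated on the whole plan, before provisioning.
    total = sum(r.monthly_cost_usd for r in resources)
    if total > MONTHLY_BUDGET_USD:
        violations.append(
            f"plan: estimated cost ${total:,.2f} exceeds budget ${MONTHLY_BUDGET_USD:,.2f}"
        )
    return violations


plan = [
    PlannedResource("k8s-cluster", "kubernetes_cluster", "eu-west-1", True, 3200.0),
    PlannedResource("logs-bucket", "object_storage", "us-east-1", False, 40.0),
]
for violation in check_plan(plan):
    print(violation)
```

The point of the design is ordering: the check sits between generation and provisioning, so a non-compliant or over-budget plan is rejected before any resource exists.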

AIOps and the new era of observability

As cloud-native architectures scale in complexity, the challenge facing modern platform engineers is no longer the collection of telemetry data — it is the meaningful interpretation of it. According to Gartner, over 60% of production incidents in 2026 are caused by poor interpretation of existing data, not a lack of visibility. Teams are drowning in signals while missing the meaning.

This has driven the rapid maturation of AIOps — Artificial Intelligence for IT Operations — which shifts the operational model from reactive incident firefighting to predictive, self-healing systems. Modern AIOps platforms in 2026 are built on three core capabilities:

Predictive incident management

AI models trained on historical delivery patterns, change velocity data, and error logs can now surface probabilistic risk assessments hours before a service outage occurs. Rather than reacting to pages at 3am, platform teams receive prioritized warnings during business hours with recommended remediation paths.

Autonomous remediation

For well-understood failure patterns — pod OOMKill events, connection pool exhaustion, SSL certificate expiry — AI agents can execute validated runbooks autonomously, patching or scaling systems within seconds of detection. Human intervention is reserved for novel or high-impact scenarios.
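The split between autonomous remediation and human escalation can be sketched as a simple dispatcher. This is only an illustration under stated assumptions: the pattern names, the runbook functions, and the `high_impact` flag are hypothetical, and real runbooks would call your orchestration tooling rather than return strings.

```python
from typing import Callable


# Hypothetical runbooks: each returns a short description of the action taken.
def raise_memory_limit(target: str) -> str:
    return f"raised memory limit for {target}"


def recycle_connection_pool(target: str) -> str:
    return f"recycled connection pool for {target}"


def renew_certificate(target: str) -> str:
    return f"renewed certificate for {target}"


# Only well-understood failure patterns get an automated runbook.
RUNBOOKS: dict[str, Callable[[str], str]] = {
    "pod_oomkill": raise_memory_limit,
    "connection_pool_exhausted": recycle_connection_pool,
    "certificate_expiring": renew_certificate,
}


def handle_incident(pattern: str, target: str, high_impact: bool = False) -> str:
    """Run a validated runbook for known patterns; escalate everything else."""
    runbook = RUNBOOKS.get(pattern)
    if runbook is None or high_impact:
        # Novel or high-impact scenarios are reserved for humans.
        return f"escalated to on-call: {pattern} on {target}"
    return f"auto-remediated: {runbook(target)}"


print(handle_incident("pod_oomkill", "checkout-api"))
print(handle_incident("disk_corruption", "orders-db"))
print(handle_incident("certificate_expiring", "payments-gateway", high_impact=True))
```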

Intelligent alert prioritization

By correlating weak signals across application, infrastructure, and network layers, modern AIOps platforms reduce alert noise by up to 70%. Engineers no longer triage a wall of Slack notifications — they engage with a curated, context-rich incident queue.
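As a rough illustration of how correlation reduces noise, the sketch below groups alerts for the same service that arrive within a short time window, then ranks the resulting incidents by how many layers they span. Production AIOps platforms use far richer models; the `Alert` fields, the five-minute window, and the ranking rule are assumptions chosen for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    timestamp: float  # seconds since epoch
    service: str
    layer: str        # "application", "infrastructure", or "network"
    message: str


def correlate(alerts: list[Alert], window_s: float = 300.0) -> list[list[Alert]]:
    """Group alerts per service that fall within window_s of the group's first alert."""
    by_service = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        by_service[alert.service].append(alert)

    incidents = []
    for service_alerts in by_service.values():
        current = [service_alerts[0]]
        for alert in service_alerts[1:]:
            if alert.timestamp - current[0].timestamp <= window_s:
                current.append(alert)
            else:
                incidents.append(current)
                current = [alert]
        incidents.append(current)

    # Incidents that span more layers are listed first.
    incidents.sort(key=lambda inc: len({a.layer for a in inc}), reverse=True)
    return incidents


alerts = [
    Alert(0, "checkout", "application", "5xx spike"),
    Alert(60, "checkout", "network", "packet loss"),
    Alert(120, "checkout", "infrastructure", "node memory pressure"),
    Alert(30, "search", "application", "slow queries"),
    Alert(1000, "checkout", "application", "5xx spike"),
]
incidents = correlate(alerts)
print(f"{len(alerts)} alerts -> {len(incidents)} incidents")
```

In this toy input, five raw alerts collapse into three incidents, and the one touching all three layers is surfaced first.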

Key figures at a glance: 60%+ of incidents stem from misinterpretation of existing data; AIOps cuts alert noise by up to 70%; 36% of engineer time is lost to manual tasks; eBPF provides deep visibility without code changes.

DevSecOps 2.0 — when autonomous security becomes non-negotiable

The security landscape of 2026 is unforgiving. The mean time to exploit a known vulnerability has collapsed from 23.2 days in 2025 to just 1.6 days — faster than any human-speed security process can respond. This has driven a fundamental rearchitecting of DevSecOps, from a set of "shift left" practices to a fully autonomous, self-healing security model.

Traditional vs. AI-Enhanced DevSecOps

| Security Metric | Traditional DevSecOps | AI-Enhanced DevSecOps (2026) |
| --- | --- | --- |
| Vulnerability identification | Periodic scanning of dependencies | Real-time scanning of code, containers, and runtimes |
| Threat response | Manual triage and incident response | Automated isolation of compromised resources |
| Compliance evidence | Manual spreadsheet collection | Automated, immutable audit trails |
| Risk assessment | Static CVSS vulnerability scoring | Contextual scoring based on reachability and blast radius |
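The difference between static and contextual risk scoring can be shown with a small sketch. The weighting below is purely hypothetical and not taken from any scanner or standard. It only illustrates the idea that an unreachable critical vulnerability may deserve less urgency than a reachable medium one with a wide blast radius.

```python
def contextual_score(
    cvss: float, reachable: bool, blast_radius: int, max_radius: int = 100
) -> float:
    """Scale a CVSS base score (0 to 10) by reachability and blast radius.

    The factors are illustrative assumptions, not an industry formula.
    """
    if not 0.0 <= cvss <= 10.0:
        raise ValueError("CVSS base score must be between 0 and 10")
    # Unreachable code paths are heavily discounted but never ignored.
    reach_factor = 1.0 if reachable else 0.2
    # Blast radius (number of dependent resources) scales from 0.5 to 1.0.
    radius_factor = 0.5 + 0.5 * min(blast_radius, max_radius) / max_radius
    return round(cvss * reach_factor * radius_factor, 2)


critical_unreachable = contextual_score(9.8, reachable=False, blast_radius=100)
medium_reachable = contextual_score(6.5, reachable=True, blast_radius=100)
print(critical_unreachable, medium_reachable)
```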

For regulated industries — healthcare, financial services, legal — compliance is no longer a quarterly exercise. In 2026, the most resilient organizations implement Compliance-by-Design infrastructure, where HIPAA, HITECH, SOC 2, and PCI-DSS controls are embedded directly into DevOps pipelines. Every commit, every deployment, every configuration change produces a verifiable, immutable compliance artifact — not as overhead, but as a natural byproduct of the engineering workflow.

The shift is cultural as well as technical: compliance is now understood as a growth enabler, not a hindrance. Organizations that can demonstrate real-time security posture attract enterprise customers, pass procurement audits, and move faster through regulated markets.

FinOps and the economics of intelligent infrastructure

Cloud spending has become a top-five P&L line item for most mid-to-large enterprises in 2026. Uncontrolled SaaS sprawl, over-provisioned Kubernetes clusters, and idle development environments have made AI-driven FinOps not just a cost-optimization strategy, but a boardroom-level priority.

The latest generation of FinOps tooling applies AI in two directions: reactive optimization (identifying and eliminating waste in existing infrastructure) and proactive cost governance (embedding unit cost constraints into provisioning workflows before resources are ever created). The results are significant — in some cases, organizations achieve savings of up to 80% on AWS compute budgets through spot instance migration, rightsizing, and automated idle resource termination.
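The reactive side of this (finding waste in what already runs) can be sketched with a simple utilization rule. The thresholds, the `InstanceUsage` fields, and the sample fleet are assumptions for illustration; real tooling would draw on longer histories and more signals than average CPU.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InstanceUsage:
    instance_id: str
    avg_cpu_percent: float   # average over the lookback period
    monthly_cost_usd: float


# Illustrative thresholds; tune them to your own workloads.
IDLE_CPU_PERCENT = 2.0
OVERSIZED_CPU_PERCENT = 20.0


def recommend(usage: InstanceUsage) -> str:
    """Classify an instance as a termination, rightsizing, or keep candidate."""
    if usage.avg_cpu_percent < IDLE_CPU_PERCENT:
        return "terminate"
    if usage.avg_cpu_percent < OVERSIZED_CPU_PERCENT:
        return "rightsize"
    return "keep"


def idle_spend(fleet: list[InstanceUsage]) -> float:
    """Monthly spend on instances flagged as idle."""
    return sum(u.monthly_cost_usd for u in fleet if recommend(u) == "terminate")


fleet = [
    InstanceUsage("dev-sandbox-1", 0.4, 310.0),
    InstanceUsage("api-prod-1", 55.0, 620.0),
    InstanceUsage("batch-worker-3", 8.5, 480.0),
]
for usage in fleet:
    print(usage.instance_id, recommend(usage))
print("idle spend per month:", idle_spend(fleet))
```

The proactive direction would apply comparable constraints before creation, at provisioning time, rather than after the bill arrives.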

Increasingly, FinOps and sustainability are being treated as two sides of the same coin. By eliminating idle compute and over-provisioned infrastructure, organizations simultaneously reduce cloud spend and digital carbon footprint — what practitioners are calling Green FinOps. At Gart Solutions, 70% of client workloads are optimized to run on green cloud platforms as part of a carbon-neutral-by-default infrastructure strategy.

"Applied to brittle pipelines or fragmented telemetry, AI accelerates instability. Applied to robust, standardized foundations, it becomes the force multiplier that allows organizations to scale resilience at the speed of code."

Roman Burdiuzha, CTO, Gart Solutions

Human-on-the-Loop governance — the new control model

As AI agents take over increasing portions of the operational layer, one of the defining debates of 2026 is where to draw the line on autonomy. The industry consensus has moved away from both extremes — fully manual "Human-in-the-Loop" (HITL) processes that create bottlenecks, and fully autonomous systems that introduce unacceptable risk — toward a middle path: Human-on-the-Loop (HOTL) governance.

In the HOTL model, AI agents operate autonomously within predefined guardrails. Humans shift from being operators to being overseers — setting policies, reviewing exceptions, and vetoing high-stakes decisions. The architecture is built on four pillars:

Step and cost thresholds — Hard limits on the number of actions an agent can execute per session, or the total tokens consumed, prevent infinite loops and runaway infrastructure costs.

The Veto Protocol — For high-risk decisions (budget reallocations, production changes above a defined blast radius), the agent surfaces a structured "Decision Summary" for asynchronous human review before proceeding.

Identity and access control — Agents are granted short-lived, task-scoped credentials. They never hold standing access to production environments; every session is authenticated, logged, and time-bounded.

Immutable audit trails — Every agent action generates a cryptographically signed record, ensuring full traceability for compliance and post-incident review.
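Three of these four pillars (thresholds, the veto step, and signed audit records) can be sketched together in a few lines. This is a simplified illustration, not a reference implementation: the `AgentSession` class, its limits, and the hard-coded demo key are assumptions, and credential scoping is left out entirely. Note that an HMAC signature makes tampering detectable; true immutability would also require append-only storage.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

# Assumption: a real key would come from a secrets manager, never from source code.
SIGNING_KEY = b"demo-key-do-not-use"


def _sign(entry: dict) -> str:
    payload = json.dumps(entry, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def verify(entry: dict) -> bool:
    """Recompute the signature over every field except the signature itself."""
    unsigned = {k: v for k, v in entry.items() if k != "signature"}
    return hmac.compare_digest(_sign(unsigned), entry["signature"])


@dataclass
class AgentSession:
    max_steps: int = 20
    max_tokens: int = 50_000
    veto_blast_radius: int = 10  # larger actions need human review first
    steps: int = 0
    tokens: int = 0
    audit_log: list = field(default_factory=list)

    def request(self, action: str, tokens: int, blast_radius: int) -> str:
        if self.steps + 1 > self.max_steps or self.tokens + tokens > self.max_tokens:
            outcome = "halted"          # step or cost threshold reached
        elif blast_radius > self.veto_blast_radius:
            outcome = "pending_review"  # decision summary goes to a human
        else:
            outcome = "executed"
        if outcome != "halted":
            self.steps += 1
            self.tokens += tokens
        entry = {"step": self.steps, "action": action, "outcome": outcome}
        entry["signature"] = _sign(entry)  # signed before the signature field exists
        self.audit_log.append(entry)
        return outcome


session = AgentSession(max_steps=2, max_tokens=1_000, veto_blast_radius=5)
print(session.request("scale deployment", tokens=100, blast_radius=1))
print(session.request("drain node pool", tokens=100, blast_radius=50))
print(session.request("restart pod", tokens=100, blast_radius=1))
```

In this run the first request executes, the second waits for review because of its blast radius, and the third is halted by the step limit. Every outcome, including the refusals, lands in the signed log.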

This governance model is not a limitation on AI capability — it is what makes AI capability trustworthy enough to deploy at scale in regulated, high-stakes environments.

Industry-specific transformations

Manufacturing — the intelligent shop floor

Manufacturing organizations face a persistent challenge: deeply siloed data environments where Manufacturing Execution Systems (MES), ERP platforms, IoT sensor networks, and POS systems rarely communicate in real time. In 2026, cloud-native, AI-powered integration layers are dissolving these silos, enabling predictive maintenance, real-time production analytics, and supply chain transparency from raw material to finished product.

For one manufacturing client, a custom Green FinOps strategy eliminated over-provisioned infrastructure while a blockchain-based supply chain integration created end-to-end product traceability. The combined impact: measurable cost savings, improved regulatory compliance, and a more resilient operational model.

Healthcare — securing the patient data journey

In healthcare, the stakes of a misconfigured infrastructure are clinical as well as financial. DevOps practices in this sector are purpose-built around securing electronic health records, ensuring FDA and HIPAA compliance, and protecting medical device software against zero-day vulnerabilities. AI-driven monitoring continuously scans for "blind spots" that could lead to clinical data loss — not just at deployment time, but across the full runtime lifecycle.

SaaS and fintech — scaling without headcount sprawl

SaaS companies and fintech startups are increasingly turning to DevOps-as-a-Service to manage global availability and rapid iteration cycles without proportional growth in engineering headcount. By embedding automated security tasks, infrastructure-as-code provisioning, and AI-driven observability into every deployment, these teams can scale their products while maintaining the operational quality standards that enterprise customers demand.


Your 2026 AI DevOps roadmap

Organizations that are successfully navigating the AI transition in 2026 share a common pattern. They did not bolt AI onto existing processes — they built the foundations first, then amplified them. The roadmap has four distinct stages:

Data readiness audit

Ensure that observability data — logs, metrics, traces, events — is clean, normalized, and accessible across organizational silos. AI models are only as good as the telemetry they consume. Fragmented, noisy data produces fragmented, unreliable AI recommendations.

High-ROI use case selection

Start with workflows where AI delivers measurable, auditable value — automated testing, incident triage, IaC generation, cost anomaly detection. Build confidence and governance muscle before expanding to higher-risk autonomous operations.

Governance architecture

Establish the guardrails — HOTL oversight protocols, agent identity controls, immutable audit trails, cost thresholds — before deploying autonomous agents into production environments. Governance is not friction; it is what makes speed sustainable.

AI fluency across the engineering organization

Develop the skills required to oversee, interact with, and continuously improve intelligent agents. The competitive advantage in 2027 will belong to teams that can govern AI effectively — not just deploy it.

The 2026 AI-native DevOps toolchain

The toolchain of 2026 is defined by intelligence at every stage of the delivery pipeline. Unlike earlier generations of tooling that added AI as an afterthought, these platforms are AI-native — built from the ground up to learn, adapt, and act autonomously.

The AI DevOps Tooling Landscape (2026)

| Tool | Domain | Key AI Capability |
| --- | --- | --- |
| Snyk | Security | Real-time AI scanning for dependencies, containers, and IaC |
| Spacelift | Infrastructure | Multi-tool IaC management with AI policy enforcement |
| Harness | CI/CD | Intelligent software delivery with autonomous deployment verification |
| Datadog | Monitoring | AI-augmented full-stack visibility, anomaly detection, log correlation |
| PagerDuty | Incident Management | ML-based event correlation and intelligent noise reduction |
| StackGen | Platform Eng. | AI-powered intent-to-infrastructure generation |
| K8sGPT | Kubernetes | Natural language explanation and diagnosis of cluster errors |
| Sysdig Sage | DevSecOps | AI analyst for runtime security threat detection and CNAPP |
| Cast AI | FinOps | Autonomous Kubernetes cost optimization and rightsizing |

Conclusion — from manual doers to intelligent orchestrators

The convergence of AI and DevOps in 2026 has redefined what is possible in software delivery. The organizations that thrive are not those that deploy the most AI tools — they are those that build the most resilient foundations and then amplify those foundations intelligently. Cloud infrastructure is no longer a hosting environment. It is an intelligent fabric that predicts, learns, and self-heals.

The transition is as cultural as it is technical. Engineering teams are moving from being manual operators to being intelligent orchestrators — governing not through a queue of tickets, but through the strategic definition of intent and the rigorous enforcement of outcomes. For those willing to make this shift, the competitive advantage is significant, durable, and compounding.

This is the principle Gart Solutions has built its entire practice around: tech should do more than work; it should do good, and it should scale purposefully.

Build your intelligent operational fabric with us

A boutique DevOps and cloud infrastructure partner for engineering teams that want to scale reliably, securely, and sustainably — without the overhead of a hyperscaler.

DevOps as a Service

Full-lifecycle CI/CD design, automation, and platform engineering for teams that need reliable, battle-tested delivery pipelines at startup speed.

Cloud migration & adoption

Strategic migration from on-premise or legacy cloud environments to modern, cost-optimized, and green cloud architectures on AWS, GCP, or Azure.

DevSecOps automation

Compliance-by-design infrastructure for regulated industries — embedding HIPAA, SOC 2, and PCI-DSS controls directly into your delivery pipeline.

AIOps & observability

End-to-end observability strategy — from eBPF telemetry and distributed tracing to AI-powered alerting, anomaly detection, and autonomous runbook execution.

FinOps & cloud cost optimization

Cloud cost audits, spot instance migration, idle resource termination, and Kubernetes rightsizing — achieving savings of up to 80% on cloud budgets.

Managed infrastructure

24/7 proactive management of your cloud infrastructure, with SLA-backed uptime guarantees, automated scaling, and continuous compliance monitoring.

The concept of DevOps has taken the tech world by storm. While some are drawn to the field for its attractive salaries, true success in DevOps requires more than just technical skills.

This article dives deeper than the hype. I've spent years implementing DevOps across various companies, drawing on my prior experience as a system administrator and IT director. Now, as CTO & Co-founder of Gart Solutions, I want to share my insights on what it truly means to be a DevOps professional.

Who embodies the DevOps spirit?

The question "what is DevOps?" sparked this article. There's a misconception that DevOps is a specific job title, a collection of tools, or a separate developer department.

DevOps is a cultural shift, a way of working. It's a set of practices that foster collaboration between developers and system administrators. DevOps doesn't change core roles, but it blurs departmental lines and encourages teamwork. Its goals are clear:

Frequent software updates

Faster software development

Streamlined and rapid deployment

There's no single "DevOps tool." While various tools come into play at different stages, they all serve a common purpose.

Having interviewed DevOps candidates for over 5 years, I've observed a crucial gap: those who view DevOps as a job title often face challenges collaborating with colleagues.

Here's a real-life example: a candidate with an impressive resume came in. His short stints at previous startups (5-6 months each) raised concerns. He blamed his departures on unsuccessful ventures and a company where "nobody understood him." Apparently, developers used Windows, and his role was to "package" their code in Docker for the CI/CD pipeline. He spoke negatively about his colleagues and workplace, suggesting a lack of problem-solving skills.

A key question I ask all candidates is:

"What does DevOps mean to YOU?"

I'm interested in their personal understanding. This particular candidate knew the textbook definition but disagreed with it, clinging to the misconception of DevOps as a job title. That misunderstanding was the root of his struggles, and the same is likely true for others who share his perspective.

Employers, drawn to the "magic" of DevOps, seek a silver bullet – a single person to create this magic. Applicants who view DevOps as a job title don't grasp that this approach leads to disappointment. Some might have just added "DevOps" to their resume to ride the trend or for the higher salary, without truly understanding the philosophy.

DevOps Methodology: Putting Theory into Practice

DevOps methodology isn't theoretical; it's about practical application. As mentioned earlier, it's a toolbox of practices and strategies used to achieve specific goals. These practices can vary significantly depending on a company's unique business processes, but that doesn't make one approach inherently better or worse. It's all about finding the right fit.

DevOps Philosophy: Capturing the Essence

Defining DevOps philosophy can be tricky. There's no single, universally accepted answer, likely because practitioners are so focused on getting things done that formalizing the philosophy takes a back seat. However, it's a crucial aspect with deep ties to engineering practices.

Those who delve deeply into DevOps often develop a sense of "spirit" or a holistic understanding of how all company processes interconnect.

Drawing on my experience, I've attempted to formalize some core principles of this philosophy:

DevOps is Integrated: It's not a separate entity; it permeates all aspects of a company's operations.

Company-wide Adoption: For DevOps to be successful, all employees should consider its principles when planning their work.

Streamlining Efficiency: DevOps aims to minimize the time spent on internal processes, ultimately accelerating service development and enhancing customer experience.

Proactive Problem-Solving: In simpler terms, DevOps encourages a proactive approach from every employee to reduce time wasted and improve the quality of the IT products we use.

These "postulates," as I call them, are a springboard for further discussion. They offer a starting point to grasp the core tenets of the DevOps philosophy.


The Heart of DevOps: Communication

Communication is the lifeblood of DevOps. Because it's a cultural shift and a set of practices, strong communication skills are even more important than technical knowledge for a DevOps engineer.

There's also a fascination with specific tools like Kubernetes. However, using a tool like Kubernetes everywhere, regardless of suitability, can be counterproductive. It's crucial to choose the right tool for the job. While Kubernetes offers advantages, maintaining a cluster can be more expensive than a traditional deployment scheme.

DevOps implementation isn't cheap; it needs to justify its cost through overall business benefits. DevOps engineers act as pioneers, introducing new methodologies and building processes within companies. Success hinges on continuous communication with employees at all levels – from entry-level team members to leadership. Everyone needs to understand the "why" behind DevOps practices for successful implementation.

DevOps engineers might also need to navigate resistance to change. For example, developers accustomed to managing their entire project infrastructure locally on powerful laptops may resist shifting to a more streamlined approach. This is where communication becomes crucial in explaining the benefits of new methods.

However, many teams readily embrace new tools and actively participate in the DevOps transformation. Regardless of the initial reception, clear communication between the DevOps engineer and the team is essential for a smooth transition.

DevOps: Not a One-Size-Fits-All Solution

DevOps isn't a magic bullet for every company. It's crucial to understand when it makes sense and when it doesn't.

Generally, small businesses with static websites or information services that don't directly drive customer satisfaction likely won't see a significant benefit from DevOps.

Here's a good rule of thumb: If a company's success hinges on the quality, availability, and targeted features of its IT products used for customer interaction, then DevOps becomes a strong consideration.

Take a bank, for example. They rely heavily on IT infrastructure for customer interactions, making them a prime candidate for DevOps adoption.

Company Size Isn't the Only Factor

Don't assume that large companies automatically need DevOps. Consider an automaker whose primary customer interaction happens through dealerships via email and phone. Their internal operations may be well-managed with traditional system management approaches, negating the need for a full-blown DevOps implementation.

The reverse also holds: being small doesn't rule DevOps out. Many small software companies thrive with the DevOps methodology because it allows them to compete effectively.

The Key Factor: IT Product Importance

The ultimate question is: how critical are your IT products to your company's success and customer satisfaction?

If software is your primary revenue driver, regardless of other products or services, then DevOps is likely a worthwhile investment. Online stores and mobile game apps are perfect examples.

Our Expertise at Gart Solutions

At Gart Solutions, we understand the transformative power of DevOps for businesses of all sizes. Our team of passionate DevOps engineers fosters a culture of knowledge sharing through internal training and workshops.

Here's a glimpse into our DevOps toolbox:

CI/CD Pipeline Automation: We leverage industry-leading tools to streamline your software builds, testing, and deployments, ensuring speed and efficiency.

Infrastructure Management: Our experts handle your infrastructure setup, maintenance, and optimization, allowing you to focus on core development activities.

Containerization with Kubernetes: We provide expertise in deploying and managing containerized applications using Kubernetes, offering scalability and portability.

Cloud Solutions: Our team helps you navigate the world of cloud computing, from migration strategies to ongoing cloud management, optimizing your cloud footprint and costs.

Security & Monitoring: We prioritize robust security measures throughout your development lifecycle, while implementing comprehensive monitoring to ensure optimal application performance.

We constantly evaluate and integrate new tools and techniques to ensure our clients have access to the most cutting-edge solutions. Our goal is to be your trusted partner, empowering you to achieve your business objectives through the power of DevOps.

Watch our webinar about DevOps ROI & Value, by Fedir Kompaniiets, founder and CEO of Gart Solutions.

https://youtu.be/k7ao6akcxus?si=1nZPR7CIjM_a_ojO

In Conclusion: The Power of Communication and Collaboration

The journey to becoming a top-tier DevOps engineer hinges on a core skill: effective communication. DevOps is not a solo act; it's about fostering collaboration and breaking down silos. The most successful practitioners take the initiative to connect with colleagues, actively propose solutions, and embrace open discussions. Be prepared for ideas to evolve through team interaction – that's the magic of DevOps!

DevOps: it's just a marketing buzzword with no substance behind it, right? Or perhaps there's more to it than meets the eye. Is DevOps simply a collection of "correct" tools, or is it a specialized culture? Who exactly should be responsible for it, and what does the role of a DevOps engineer entail?

In short, misconceptions abound: some trivial, some deeply rooted in the minds of respected professionals.

Myth #1: DevOps Can Be Done by a DevOps Department or Engineer

To achieve proper DevOps, we hire DevOps engineers or create a DevOps department, and voila! We've got full-fledged DevOps in our company!

This sentiment is quite pervasive. Despite numerous materials explaining why there's no such thing as DevOps engineers or why creating a DevOps department isn't advisable, DevOps departments still emerge, and DevOps engineer vacancies continue to increase.

Here's a scenario I often encounter: a typical company where nothing much happens until the CEO or the board catches the "digital transformation" bug. It's a highly contagious disease usually contracted at conferences for CEOs or top management. Suddenly, terms like Agile, DevOps, digital, product, etc., start popping up in their heads. The CEO gathers the troops and says, "Guys, we need Agile, DevOps, digital, figure it out and make it happen."

In reality, it's not the CEO's task to delve into all of this. They have worked out, for instance, how to increase revenue and improve value streams, and realized that doing so correlates with these buzzwords.

Then the team starts brainstorming. They read about DevOps on Wikipedia: "A methodology that unifies developers, operations, and testing for continuous software delivery."

In their company, software delivery is continuous, deployments happen every three months, developers and sysadmins hang out together at bars - there's no issue with collaboration. So, they decide to rename some department to DevOps.

Ultimately, either the quality assurance department or the operations department gets renamed to DevOps, depending on the experience of the individuals involved. But what should be done with that department if it's filled with quality/issue engineers? Do they need to be renamed to DevOps engineers too?

I regularly browse through DevOps job listings because we and our clients are looking for engineers. You can check out job search websites yourself; they're all so diverse!

There's no strict standardization or typification: from people setting up pipelines on Jenkins to testers writing automation scripts; from specialists deploying on AWS to those configuring monitoring, rolling out software, etc. Every possible IT profession is labeled as a DevOps engineer.

The exception is small companies genuinely producing digital products with real continuous software delivery. They seek people who can do it all because it's hard to pinpoint a specific qualification. So, they simply advertise for a DevOps engineer position listing everything - Jenkins, Ansible, Chef, Docker, Kubernetes, Silex, Prometheus.

The real reason to adopt DevOps is to create a flow of value throughout the company. DevOps should cover everything from analytics to actual operations. It's not a separate profession or a designated role; it's merely a concept that facilitates rapid digital product delivery.

The first mention of this term dates back to 2009 when Patrick Debois said at a conference: "We've started using a new approach. We're now producing digital products, and we have a problem - the old ways aren't working. I alone can't solve it. I invite everyone to join the community to figure out how to live with this. Let's call this problem DevOps."

DevOps is the solution to the problem of producing digital products. It's a methodology. Calling an individual by that term is incorrect.

If you're seeking a DevOps engineer, consider why you need one. It's better to specify a particular skill set and look for it in the market than hire an ambiguous specialist.

For the financially prudent: typically, a system administrator with Docker knowledge differs from a DevOps engineer with Docker knowledge not in skills but in price.

Myth #2: DevOps is about Hiring Jack-of-All-Trades Specialists

Let me share my interpretation of where this problem stems from. When the whole DevOps saga was just beginning, indeed, all the work was done by jack-of-all-trades specialists. For example, one person would build the delivery pipeline, set up monitoring, and so forth. I started from the same point and did everything myself because I didn't know whom to delegate tasks to: I needed to research here, tweak there, and implement something else. As a result, I became a jack-of-all-trades specialist who configured Linux, wrote Chef scripts, integrated with Zabbix API, and more. I had to do it all.

But in reality, this doesn't work.

Productivity:

18 jack-of-all-trades craftsmen, each making whole pins on their own, produce 360 pins per day.

18 craftsmen divided into 18 specializations produce 48,000 pins.

This is the well-known pin factory example of increased production from Adam Smith's book "The Wealth of Nations". In reality, the story about these pins wasn't invented by him but rather borrowed from a French encyclopedia, Diderot's Encyclopédie.
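The per-person arithmetic behind those two figures is easy to check. The short sketch below uses only the numbers quoted above; the variable names are illustrative, and the per-person rates and the ratio are derived values, not figures from the book.

```python
# Figures quoted in the text above; everything else is derived from them.
CRAFTSMEN = 18
GENERALIST_TOTAL = 360      # pins per day when each craftsman makes whole pins
SPECIALIST_TOTAL = 48_000   # pins per day when the work is split into specializations


def per_person(total_pins: int, people: int) -> float:
    """Average daily output per craftsman."""
    return total_pins / people


generalist_rate = per_person(GENERALIST_TOTAL, CRAFTSMEN)
specialist_rate = per_person(SPECIALIST_TOTAL, CRAFTSMEN)
speedup = SPECIALIST_TOTAL / GENERALIST_TOTAL

print(
    f"{generalist_rate:.0f} vs {specialist_rate:.0f} pins per person per day, "
    f"roughly {speedup:.0f}x"
)
```

With the same headcount, the specialized team produces roughly 133 times more, which is the whole argument for cross-functional teams rather than cross-functional individuals.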

This logic still holds true today. When I hear that specialists should be cross-functional, I actually think that teams should be cross-functional, but certainly not individuals. Otherwise, we end up needing to produce 48,000 pins but simply don't have enough people.

In the book "The Phoenix Project: A Novel about IT, DevOps, and Helping Your Business Win," there's a story about Brent, who does everything in the company, so every piece of work ends up bottlenecked around him. This is precisely the story of a talented jack-of-all-trades specialist.

But then the question arises: if not a jack-of-all-trades specialist, then whom should you hire? There's no clear answer to this question, because the industry hasn't defined distinct specializations for people working in DevOps.

I'll try to outline these roles broadly and invite you to join this work because without role division, we'll always be talking about jack-of-all-trades specialists.

DevOps, other roles:

Developer with an understanding of architecture and production software work (writes tests and infrastructure code)

What sets this developer apart from a typical university graduate? A developer from a typical university can write algorithms - and that's about it. A DevOps developer not only can write descriptions but also knows how these descriptions come to life.

I came to IT from radio electronics, and what amazed me here was that people think that a schematic diagram is a working product. An engineer in radio electronics doesn't have such a misconception, but in IT - it exists.

We need developers who know that a schematic diagram is not a working product. There are few of them; they come from related fields - like radio electronics.

Infrastructure Engineer (writes bindings for infrastructure, provides a platform for developers)

This role is so rich that it can be further broken down. Essentially, it includes a product owner who owns the continuous delivery platform as a product, plus the individual competencies of the people who build that platform. But this is for large companies.

For small companies, it's simpler. This is an engineer who is well-versed in configuration management, knows Docker, Kubernetes, can create a continuous software delivery process based on them, and hand it over to the developer.

Developer of infrastructure services (DBaaS, Monitoring as a Service, Logging as a Service)

These are services provided to developers as a product. Note that you can go to Amazon and buy these individual services. Amazon can be considered a DevOps role model; that is, what they sell can be grown internally.

Release Manager (manages the process and dependencies)

This isn't the person who builds the build. They manage dependencies, versions, facilitate team agreements on integration environments, oversee the continuous delivery pipeline, and so forth.

They are the fairy who endows your team with magic so they can continuously deliver software.

Myth #3: We've Been Doing DevOps Since 1995

"We've been developing our corporate IT system for many years and have been releasing every week. Your DevOps is just a bunch of millennials in jeans with rolled-up cuffs who came along and renamed what we've been doing for years."

This myth stems from a misunderstanding of what DevOps truly entails and a misinterpretation of the time-to-market concept. Some organizations believe that because they release software frequently, they are already practicing DevOps. They may view DevOps as simply a rebranding of their existing processes rather than a fundamental shift in approach.

The reality is that DevOps is not just about the frequency of releases; it's about the entire software delivery lifecycle, from development to operations, being optimized for speed, efficiency, and collaboration. While releasing every week is a positive achievement, true DevOps involves more than just release frequency.

One of the key principles of DevOps is continuous improvement, which means constantly refining and optimizing processes to deliver value to customers faster and more reliably. It's about breaking down silos between development, operations, and other teams, fostering a culture of collaboration and shared responsibility.

Another misconception related to this myth is the idea that DevOps only becomes relevant during incidents or emergencies. While it's true that DevOps practices can help teams respond more effectively to incidents, they are equally important during normal operations. DevOps is about building resilience and reliability into the software delivery process from the outset, not just reacting to problems as they arise.

Ultimately, DevOps is about delivering software that meets customer needs quickly and efficiently. It requires a mindset shift and a commitment to continuous improvement, rather than simply sticking to existing processes and routines.

While frequent releases are a positive step, true DevOps involves a holistic approach to software delivery that goes beyond release frequency and encompasses the entire lifecycle of software development and operations. It's not just about what you've been doing for years; it's about how you can do it better.

DevOps Practices for TTM (Time-to-Market)

I've outlined some core DevOps practices focused solely on time-to-market, without which continuous feedback from your customers and the market is unattainable.

Release Strategies (blue-green deployments, canary releases, A/B testing): It sounds good and quite simple: let's roll out to 0.1% of users. But doing this requires monumental effort, such as transitioning from a monolithic architecture to a microservices architecture.

Continuous Monitoring: This is monitoring akin to testing, where developers describe, in code, what needs to be monitored in production. Afterward, the artifact, along with the description of what needs monitoring, is pushed through the pipeline, and everything is added to monitoring at every stage of continuous delivery. This gives a real view of what's happening with the application. When you've deployed to 0.1%, you can understand if the software is working as intended or if something is amiss. It's pointless to deploy to 0.1% if you don't know what's happening with the software.

End-to-End Logging: This is when, via an ID, you can see in the system how customer data has changed: it comes in through the frontend, then goes to the backend, then to the queue, where it's picked up by an asynchronous worker. The asynchronous worker then goes to the database, changes the cache state, and all of this is logged. Without this, figuring out what happened in the system is impossible, and deploying to 0.1% is futile. Imagine you decide to deploy some new software to all 2,000 attendees of the "International Internet Technologies" festival, but you lack end-to-end logging. These 2,000 people complain that it's not working. You ask them what's wrong because from your perspective, it's like some kind of poltergeist. It's a futile endeavor.

Automated Tests (as a form of agreement between teams): Automated testing has long been known and used for improving software quality. However, automated tests need to be approached differently: as a form of agreement between teams. Instead of resolving numerous small issues in the software during integration, teams agree that each team writes automated tests and addresses these issues at the stage of developing individual modules, elements, etc. Writing automated tests is a separate story.
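To make the 0.1% rollout from the release strategies item more concrete, here is a minimal sketch of one common way to pick a stable slice of users: hash the user ID into a fixed number of buckets and enable the release for the lowest buckets. The function name `in_canary`, the salt value, and the bucket count are assumptions for illustration, not part of any specific tool.

```python
import hashlib

BUCKETS = 10_000  # 0.01% resolution; an illustrative choice


def in_canary(user_id: str, percent: float, salt: str = "checkout-v2") -> bool:
    """Return True if this user falls into the canary slice.

    The same user always gets the same answer for a given salt, so a user
    does not flip between old and new versions from request to request.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % BUCKETS
    return bucket < round(percent * BUCKETS / 100)


# Usage: check how many of 100,000 synthetic users land in a 0.1% canary.
selected = sum(in_canary(f"user-{i}", 0.1) for i in range(100_000))
print(f"{selected} of 100000 users are in the canary")
```

Changing the salt per release reshuffles who is in the slice, so the same small group of users is not always the first to receive new code. The routing itself is the easy part; as noted above, it is only useful when monitoring and logging can tell you how that slice is behaving.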

All of these components (DevOps practices for TTM) require a lot of time and effort if you're not starting from scratch but are instead, for example, rewriting a monolith. However, without them, achieving a short time-to-market is impossible.
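End-to-end logging by ID can be sketched with nothing more than the Python standard library. In the example below, the names `request_id`, `RequestIdFilter`, `handle_request`, and `worker` are hypothetical, and the queue is simulated with a direct function call; the point is only that the ID is created once at the entry point, travels with the message, and is stamped on every log line.

```python
import contextvars
import logging
import uuid

# Holds the ID of the request currently being processed.
request_id = contextvars.ContextVar("request_id", default="-")


class RequestIdFilter(logging.Filter):
    """Attach the current request ID to every log record."""

    def filter(self, record: logging.LogRecord) -> bool:
        record.request_id = request_id.get()
        return True


def build_logger(name: str) -> logging.Logger:
    logger = logging.getLogger(name)
    if not logger.handlers:
        handler = logging.StreamHandler()
        handler.setFormatter(
            logging.Formatter("%(request_id)s %(name)s %(message)s")
        )
        handler.addFilter(RequestIdFilter())
        logger.addHandler(handler)
        logger.setLevel(logging.INFO)
        logger.propagate = False
    return logger


def worker(message: dict) -> None:
    # The asynchronous worker restores the ID carried by the queue message.
    token = request_id.set(message["request_id"])
    try:
        build_logger("worker").info("updated database and cache")
    finally:
        request_id.reset(token)


def handle_request(payload: dict) -> str:
    # The ID is generated once, where the request enters the system.
    rid = uuid.uuid4().hex
    token = request_id.set(rid)
    try:
        build_logger("frontend").info("received %s", payload)
        worker({"request_id": rid, "payload": payload})  # stands in for a queue
    finally:
        request_id.reset(token)
    return rid


handle_request({"user": 42, "action": "update_profile"})
```

Searching the logs for one ID then returns the frontend line and the worker line together, which is what makes a complaint from a user in the 0.1% slice traceable.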

Myth #4: DevOps is all about the "right" tools

There's a common belief that DevOps can be achieved simply by adopting the "right" tools. It's like assembling a toolkit with Kubernetes, Ansible, Prometheus, Mesosphere, and Docker, and expecting instant DevOps success.

However, this approach often leads to disappointment. Organizations might install Kubernetes, for example, use it for a while, and then realize it's not as effective as they hoped. They find themselves grappling with too many components, encountering glitches, and realizing that manual processes might have been simpler.

While powerful tools are certainly valuable, they're just one piece of the DevOps puzzle. True DevOps isn't just about the tools you use; it's about the cultural and process changes within your organization.

That said, there are indeed tools specifically designed for DevOps, created by the community that emerged around the DevOps movement. These tools come with not only installation instructions but also comprehensive guides on how to leverage them effectively.

For instance, when you install Git, you don't need to understand the intricacies of hashing algorithms. Similarly, with Kubernetes, it's more important for engineers to understand how to work with it rather than the internal workings of the platform.

While the "right" tools are important, they're not a silver bullet for DevOps success. It's crucial to focus on building a culture of collaboration, automation, and continuous improvement alongside tool adoption.

Myth #5: DevOps is only for large organizations

DevOps can benefit businesses of all sizes. Smaller companies may even find it easier to adopt a DevOps culture due to their agility and fewer layers of bureaucracy. DevOps principles like continuous integration and delivery can be implemented incrementally, even with limited resources.

Myth #6: DevOps is only for startups or tech companies

While DevOps originated in the tech industry, it is not limited to startups or tech companies. DevOps practices can be adopted by organizations of any size and across various industries to improve software delivery and operational efficiency.

Myth #7: DevOps requires a complete overhaul of your workflow

DevOps is about continuous improvement, not starting from scratch. You can begin by focusing on small changes that promote collaboration and automation. Gradually, your development and operations processes will become more efficient and streamlined.

Myth #8: DevOps replaces traditional IT roles

DevOps does not replace traditional IT roles like developers, system administrators, or quality assurance professionals. Instead, it promotes cross-functional collaboration, shared responsibilities, and a culture of continuous learning and improvement.

Myth #9: DevOps is a one-time implementation

DevOps is a cultural shift, not a one-time fix. It requires ongoing commitment and adaptation. As your organization and technology landscape evolve, your DevOps practices will need to evolve as well.

Myth #10: DevOps is only for cloud-native or microservices architectures

DevOps practices can be applied to various types of software architectures, including monolithic, service-oriented, or cloud-native applications. The principles of collaboration, automation, and continuous improvement are relevant regardless of the underlying architecture.

By understanding and addressing these myths, organizations can better embrace DevOps as a cultural shift and a set of practices that foster collaboration, automation, and continuous improvement in software delivery and operations.