Site Reliability Engineering (SRE) monitoring and application monitoring are two sides of the same coin: both exist to keep complex distributed systems reliable, performant, and transparent. For engineering teams managing microservices, Kubernetes, and cloud-native architectures, knowing what to measure—and how to act on it—is the difference between a 15-minute incident and an all-night outage.

This guide explains how the four Golden Signals serve as the foundation of production-grade application monitoring, how to connect them to SLIs, SLOs, and error budgets, and how to build dashboards and alerting workflows that actually reduce your MTTR.

KEY TAKEAWAYS

Golden Signals (latency, errors, traffic, saturation) are the universal language of SRE application monitoring across any tech stack.

Connecting signals to SLIs and SLOs turns raw metrics into reliability commitments your team can own.

Alert thresholds must be derived from baseline data and SLOs—the examples in this article are illustrative starting points, not universal rules.

After implementing Golden Signals, Gart clients have reduced MTTR by up to 60% within two months. Read the full case study context below.

What is SRE Monitoring?

SRE monitoring is the practice of continuously observing the health, performance, and availability of software systems using the methods and principles defined by Google's Site Reliability Engineering discipline. Unlike traditional system monitoring—which often tracks dozens of low-level infrastructure metrics—SRE monitoring is intentionally opinionated: it focuses on the signals that directly reflect user experience and system reliability.

At its core, SRE monitoring answers three questions at all times:

Is the system currently serving users correctly?

How close are we to breaching our reliability commitments (SLOs)?

Which service or component is responsible when something breaks?

This user-centric orientation is what separates SRE monitoring from generic infrastructure monitoring. An SRE team does not alert on "CPU at 80%"—they alert when that CPU spike is burning through their monthly error budget faster than expected.

Application Monitoring in the SRE Context

Application monitoring is the discipline of tracking how software applications behave in production: response times, error rates, throughput, resource consumption, and end-user experience. In an SRE context, application monitoring is the primary layer where Golden Signals are measured and where the gap between infrastructure health and user experience becomes visible.

A database node may be running at 40% CPU—perfectly healthy by infrastructure standards—while every query takes 4 seconds because of a missing index. Infrastructure monitoring shows green; application monitoring shows a latency crisis. This is why SRE teams invest heavily in application-level telemetry: it captures what infrastructure metrics miss.

Modern application monitoring spans three pillars:

Metrics — numerical time-series data (latency percentiles, error counts, RPS).

Logs — structured event records that capture request context and error detail.

Traces — distributed request journeys that map latency across service boundaries.

The Golden Signals framework unifies these pillars into four actionable categories that any team can monitor, regardless of their technology stack.

The Four Golden Signals in SRE

SRE principles streamline application monitoring by focusing on four metrics—latency, errors, traffic, and saturation—collectively known as Golden Signals. Instead of tracking hundreds of metrics across different technologies, this focused framework helps teams quickly identify and resolve issues.

Latency: Latency is the time it takes to serve a request, from the moment the client sends it until the response comes back. High latency degrades the user experience, so it is critical to keep this metric in check. As an illustrative benchmark, web application latency often falls in the 200 to 400 ms range, and keeping it under 300 ms generally supports a good user experience. Latency monitoring helps detect slowdowns early, allowing for quick corrective action.

Errors: Errors refer to the rate of failed requests. Monitoring errors is essential because not all errors have the same impact. For instance, a 500 error (server error) is more severe than a 400 error (client error) because the former usually points to a fault in the service itself and often requires immediate intervention. As a starting point, a sustained error rate above 1% typically warrants investigation. Identifying error spikes can alert teams to underlying issues before they escalate into major problems.

Traffic: Traffic measures the volume of requests coming into the system. Understanding traffic patterns helps teams prepare for expected loads and identify anomalies that might indicate issues such as DDoS attacks or unplanned spikes in user activity. For example, if your system is built to handle 1,000 requests per second and suddenly receives 10,000, this surge might overwhelm your infrastructure if not properly managed.

Saturation: Saturation is about resource utilization; it shows how close your system is to reaching its full capacity. Monitoring saturation helps avoid performance bottlenecks caused by overuse of resources like CPU, memory, or network bandwidth. Think of it like a car's tachometer: once it redlines, you're pushing the engine too hard, risking a breakdown.
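To make the four signals concrete, here is a minimal sketch that summarizes one observation window of requests. It assumes a hypothetical `Request` record with a latency and an HTTP status, counts only 5xx responses as errors, and approximates saturation as worker-pool utilization; your service may be constrained by a different resource.

```python
import math
from dataclasses import dataclass


@dataclass
class Request:
    latency_ms: float  # time from request sent to response received
    status: int        # HTTP status code


def percentile(values, p):
    """Nearest-rank percentile (p in 0..100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def golden_signals(requests, window_s, busy_workers, total_workers):
    """Summarize one observation window (requests must be non-empty).

    Saturation is approximated as worker-pool utilization, an assumption;
    use whatever resource is most constrained for your service.
    """
    latencies = [r.latency_ms for r in requests]
    server_errors = sum(1 for r in requests if r.status >= 500)
    return {
        "latency_p95_ms": percentile(latencies, 95),
        "error_rate": server_errors / len(requests),  # 5xx only
        "traffic_rps": len(requests) / window_s,
        "saturation": busy_workers / total_workers,
    }


# Hypothetical 10-second window: 19 fast successes and one slow 503.
reqs = [Request(10 * i, 200) for i in range(1, 20)] + [Request(900, 503)]
s = golden_signals(reqs, window_s=10, busy_workers=45, total_workers=50)
print(s)
```

In this window P95 is 190 ms while `percentile(latencies, 99)` would return the 900 ms outlier, which is why teams usually watch P95 and P99 side by side.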

Why Golden Signals Matter

Golden Signals provide a comprehensive overview of a system's health, enabling SREs and DevOps teams to be proactive rather than reactive. By continuously monitoring these metrics, teams can spot trends and anomalies, address potential issues before they affect end-users, and maintain a high level of service reliability.

SRE Golden Signals help in proactive system monitoring

SRE Golden Signals are crucial for proactive system monitoring because they simplify the identification of root causes in complex applications. Instead of getting overwhelmed by numerous metrics from various technologies, SRE Golden Signals focus on four key indicators: latency, errors, traffic, and saturation.

By continuously monitoring these signals, teams can detect anomalies early and address potential issues before they affect the end-user. For instance, if there is an increase in latency or a spike in error rates, it signals that something is wrong, prompting immediate investigation.

What are the key benefits of using "golden signals" in a microservices environment?

The "golden signals" approach is especially beneficial in a microservices environment because it provides a simplified yet powerful framework to monitor essential metrics across complex service architectures.

Here’s why this approach is effective:

▪️Focuses on Key Performance Indicators (KPIs)

By concentrating on latency, errors, traffic, and saturation, the golden signals let teams avoid the overwhelming and often unmanageable task of tracking every metric across diverse microservices. This strategic focus means that only the most crucial metrics impacting user experience are monitored.

▪️Enhances Cross-Technology Clarity

In a microservices ecosystem where services might be built on different technologies (e.g., Node.js, DB2, Swift), using universal metrics minimizes the need for specific expertise. Teams can identify issues without having to fully understand the intricacies of every service’s technology stack.

▪️Speeds Up Troubleshooting

Golden signals quickly highlight root causes by filtering out non-essential metrics, allowing the team to narrow down potential problem areas in a large web of interdependent services. This is crucial for maintaining service uptime and a seamless user experience.

SRE Monitoring vs. Observability vs. Application Performance Monitoring (APM)

These three terms are often used interchangeably, but they refer to distinct practices with different scopes. Understanding where they overlap—and where they diverge—helps teams invest in the right tooling and processes.

| Dimension | SRE Monitoring | Observability | Application Monitoring (APM) |
|---|---|---|---|
| Primary question | Are we meeting our reliability targets? | Why is the system behaving this way? | How is this application performing right now? |
| Core signals | Golden Signals + SLIs/SLOs | Logs, metrics, traces (full telemetry) | Response time, throughput, error rate, Apdex |
| Audience | SRE / on-call engineers | Platform engineering, DevOps, SRE | Dev teams, operations, management |
| Typical tools | Prometheus, Grafana, PagerDuty | OpenTelemetry, Jaeger, ELK Stack | Datadog, New Relic, Dynatrace, AppDynamics |
| Scope | Service reliability & error budgets | Full system internal state | Application transaction performance |

In practice, mature engineering organizations treat these as complementary layers. Golden Signals surface what is wrong quickly; observability tooling explains why; APM dashboards give development teams actionable detail at the code level.

SLIs, SLOs, and Error Budgets in SRE Monitoring

Golden Signals generate raw measurements. SLIs and SLOs transform those measurements into reliability commitments that the business can understand and engineering teams can own.

Service Level Indicators (SLIs)

An SLI is a quantitative measure of a service behavior directly derived from a Golden Signal. For example:

Availability SLI: percentage of requests that return a non-5xx response.

Latency SLI: percentage of requests served in under 300 ms; a 95% target for this SLI is equivalent to keeping P95 latency below 300 ms.

Throughput SLI: percentage of expected message batches processed within the SLA window.

Service Level Objectives (SLOs)

An SLO is the target value for an SLI over a rolling window. A well-formed SLO looks like: "99.5% of requests must return a non-5xx response over a rolling 28-day window." SLOs are the bridge between Golden Signals and business impact. When your SLO says 99.5% availability and you are measuring 99.2% over the window, you have already overspent your error budget, and that is the signal your team needs to prioritize reliability work over new features.

Error Budgets

An error budget is the allowable amount of unreliability defined by your SLO. For a 99.5% availability SLO over 28 days, the error budget is 0.5% of all requests, roughly 3.4 hours (about 202 minutes) of complete-downtime equivalent. When the error budget is healthy, teams can ship changes confidently. When it is depleted or burning fast, the SRE team has a data-driven mandate to freeze releases and focus on reliability.
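The budget arithmetic is simple enough to sketch directly. The helper below is a hypothetical illustration: it converts an availability SLO into a fraction of requests, a downtime-equivalent in minutes, and (optionally) an allowed number of failed requests for an assumed request volume.

```python
def error_budget(slo, window_days, total_requests=None):
    """Error budget for an availability SLO such as 0.995."""
    budget_fraction = 1.0 - slo
    downtime_minutes = window_days * 24 * 60 * budget_fraction
    budget = {
        "budget_fraction": budget_fraction,
        "downtime_minutes": downtime_minutes,
    }
    if total_requests is not None:
        # Rounded to whole requests.
        budget["allowed_failed_requests"] = round(total_requests * budget_fraction)
    return budget


# 99.5% over 28 days, with a hypothetical 10 million requests in the window.
b = error_budget(0.995, window_days=28, total_requests=10_000_000)
print(f"{b['downtime_minutes']:.1f} min, {b['downtime_minutes'] / 60:.2f} h")
print(b["allowed_failed_requests"])
```

For the 28-day example this gives about 201.6 minutes (3.36 hours) and 50,000 failed requests; the commonly quoted 3.6 hours corresponds to a 30-day window.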

Practical tip: Track error budget burn rate alongside your Golden Signals dashboard. A burn rate of 1x means you are consuming the budget at exactly the rate your SLO allows. A burn rate of 3x means you will exhaust your budget in one-third of the SLO window — an immediate escalation trigger.
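Burn rate is the observed error ratio divided by the error ratio the SLO allows. A minimal sketch, assuming you already have counts of bad and total requests for a recent window (the numbers below are hypothetical):

```python
import math


def burn_rate(bad, total, slo):
    """Observed error ratio divided by the error ratio the SLO allows."""
    if total == 0:
        return 0.0
    return (bad / total) / (1.0 - slo)


def days_to_exhaustion(rate, window_days):
    """Days until a full budget is gone at a constant burn rate."""
    return math.inf if rate == 0 else window_days / rate


# Hypothetical last hour: 150 of 10,000 requests failed under a 99.5% SLO.
rate = burn_rate(bad=150, total=10_000, slo=0.995)
print(round(rate, 2), round(days_to_exhaustion(rate, 28), 1))
```

Here a 1.5% error ratio against a 0.5% allowance is a 3x burn, so a full 28-day budget would last only about 9.3 days. The exhaustion estimate assumes the budget starts full and the rate stays constant.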

How to Monitor Microservices Using Golden Signals

Monitoring microservices requires a disciplined approach in environments where dozens of services interact across different technology stacks. Golden Signals provide a clear framework for tracking system health across these distributed systems.

Step 1: Define Your Observability Pipeline per Service

Each microservice should expose telemetry for all four Golden Signals. Integrate them directly with your SLI definitions from day one:

Latency — measure P50, P95, and P99 request duration per service.

Errors — capture 4xx/5xx HTTP codes and application-level exceptions separately.

Traffic — monitor RPS, message throughput, and connection concurrency.

Saturation — track CPU, memory, thread pool usage, and queue depth.
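As an illustration of Step 1, the sketch below wraps a request handler so that each call records its duration, its status class (keeping 4xx and 5xx separate), any application exception, and current concurrency. `ServiceTelemetry` and the `charge` handler are hypothetical; a real service would export these values through a metrics library such as a Prometheus client or OpenTelemetry rather than keep them in memory, and this version is not thread-safe.

```python
import time
from collections import Counter
from functools import wraps


class ServiceTelemetry:
    """Hypothetical in-memory recorder for one service's Golden Signals."""

    def __init__(self, service):
        self.service = service
        self.durations_ms = []           # latency samples
        self.status_classes = Counter()  # "2xx", "4xx", "5xx" kept separate
        self.exceptions = Counter()      # application-level exception types
        self.in_flight = 0               # concurrent requests (not thread-safe)

    def instrument(self, handler):
        """Wrap a handler that returns an HTTP status code."""
        @wraps(handler)
        def wrapper(*args, **kwargs):
            self.in_flight += 1
            start = time.perf_counter()
            try:
                status = handler(*args, **kwargs)
            except Exception as exc:
                # Record the exception type and report the call as a 500.
                self.exceptions[type(exc).__name__] += 1
                status = 500
            finally:
                self.in_flight -= 1
                self.durations_ms.append((time.perf_counter() - start) * 1000)
            self.status_classes[f"{status // 100}xx"] += 1
            return status
        return wrapper


telemetry = ServiceTelemetry("payments")


@telemetry.instrument
def charge(amount):  # hypothetical handler
    if amount <= 0:
        return 400  # client error
    if amount > 1000:
        raise RuntimeError("processor timeout")
    return 200


for amount in (10, -5, 5000, 20):
    charge(amount)
print(dict(telemetry.status_classes), dict(telemetry.exceptions))
```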

Step 2: Choose a Unified Monitoring Stack

Popular platforms for production-grade application monitoring in microservices include:

Prometheus + Grafana — open-source, highly customizable, excellent for Kubernetes environments.

Datadog / New Relic — full-stack observability with built-in Golden Signals support and auto-instrumentation.

OpenTelemetry — CNCF-backed standard for vendor-neutral telemetry instrumentation.

Step 3: Isolate Service Boundaries

Group Golden Signals by service so you can detect where a problem originates rather than just knowing that something is wrong:

| Microservice | Latency (P95) | Error Rate | Traffic | Saturation |
|---|---|---|---|---|
| Auth | 220 ms | 1.2% | 5k RPS | 78% CPU |
| Payments | 310 ms | 3.1% | 3k RPS | 89% memory |
| Notifications | 140 ms | 0.4% | 12k RPS | 55% CPU |

Step 4: Correlate Signals with Distributed Tracing

Use distributed tracing to map requests across services. Tools like Jaeger or Zipkin let you trace latency across hops, find the exact service causing error spikes, and visualize traffic flows and bottlenecks. A latency spike in the Payments service that traces back to a slow DB query is far more actionable than "P95 latency is high."
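One simple way to turn a trace into "which service is slow" is self-time attribution: each span's duration minus the time spent in its direct children, summed per service. The sketch below assumes a hypothetical, simplified span model and that child calls run sequentially; overlapping (parallel) children would need critical-path analysis instead.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]  # None for the root span
    service: str
    duration_ms: float


def self_time_by_service(spans):
    """Sum each span's self time (own duration minus direct children) per service.

    Assumes child calls run sequentially inside their parent.
    """
    child_ms = defaultdict(float)
    for span in spans:
        if span.parent_id is not None:
            child_ms[span.parent_id] += span.duration_ms
    per_service = defaultdict(float)
    for span in spans:
        per_service[span.service] += max(0.0, span.duration_ms - child_ms[span.span_id])
    return dict(per_service)


# Hypothetical slow checkout trace.
trace = [
    Span("a", None, "api-gateway", 1200),
    Span("b", "a", "auth", 60),
    Span("c", "a", "payments", 1100),
    Span("d", "c", "payments-db", 950),
]
totals = self_time_by_service(trace)
print(max(totals, key=totals.get), totals)
```

The Payments span looks slow (1,100 ms), but only 150 ms of that is its own work; the 950 ms sits in the database call, which matches the "slow DB query" example above.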

Learn how these principles apply in practice from our Centralized Monitoring case study for a B2C SaaS Music Platform.

Step 5: Automate Alerting with Context

Set thresholds and anomaly detection for each signal:

Latency > 500ms? Alert DevOps

Saturation > 90%? Trigger autoscaling

Error Rate > 2% over 5 mins? Notify engineering and create an incident ticket
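The Step 5 rules can be evaluated against a per-service snapshot like the Step 3 table. This is a minimal sketch: the `Snapshot` fields and action strings are hypothetical, saturation is treated as a single percentage regardless of whether it is CPU or memory, and the "over 5 mins" duration is deliberately omitted here.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    service: str
    latency_p95_ms: float
    error_rate_pct: float
    saturation_pct: float


# Illustrative thresholds from Step 5; calibrate against your own baselines.
RULES = [
    ("latency_p95_ms", 500, "alert DevOps"),
    ("saturation_pct", 90, "trigger autoscaling"),
    ("error_rate_pct", 2, "notify engineering + open incident ticket"),
]


def evaluate(snapshots):
    """Return (service, signal, action) for every rule a service breaches."""
    actions = []
    for snap in snapshots:
        for field, limit, action in RULES:
            if getattr(snap, field) > limit:
                actions.append((snap.service, field, action))
    return actions


services = [  # values from the Step 3 example table
    Snapshot("Auth", 220, 1.2, 78),
    Snapshot("Payments", 310, 3.1, 89),
    Snapshot("Notifications", 140, 0.4, 55),
]
print(evaluate(services))
```

Only Payments trips a rule (its 3.1% error rate); its 89% memory saturation sits just under the 90% autoscaling threshold.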

Alerting Principles for SRE Teams

Effective application monitoring is only as useful as the alerting layer that translates signals into human action. Alert fatigue is one of the most common—and costly—failure modes in SRE programs. These principles help teams alert on what matters without overwhelming the on-call engineer.

Alert on Symptoms, Not Causes

Alert when the user experience is degraded (latency SLO is burning), not when a machine metric crosses a threshold. "CPU at 80%" is a cause; "P95 latency exceeding 500ms for 5 minutes" is a symptom your SLO cares about.

Use Error Budget Burn Rate as Your Primary Alert

A fast burn rate (e.g., 3x or 6x) on your error budget is a better paging condition than raw signal thresholds. It tells you not just that something is wrong, but how urgently you need to act based on your reliability commitments.
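A common refinement, described in the Google SRE Workbook's chapter on alerting on SLOs, is to page only when both a long and a short window exceed the burn-rate threshold: the long window shows the problem is significant, and the short window shows it is still happening. The sketch below uses the 6x example from the text; the window sizes and counts are hypothetical.

```python
def burn_rate(bad, total, slo):
    """Observed error ratio divided by the error ratio the SLO allows."""
    return 0.0 if total == 0 else (bad / total) / (1.0 - slo)


def should_page(long_window, short_window, slo, threshold):
    """Page only if BOTH windows burn at or above `threshold`.

    long_window and short_window are (bad, total) counts, e.g. for the
    last 1 hour and the last 5 minutes.
    """
    return (burn_rate(*long_window, slo) >= threshold
            and burn_rate(*short_window, slo) >= threshold)


# Hypothetical counts under a 99.5% SLO and a 6x paging threshold.
print(should_page((400, 10_000), (50, 1_000), slo=0.995, threshold=6))  # still burning
print(should_page((400, 10_000), (2, 1_000), slo=0.995, threshold=6))   # already recovered
```

The second call does not page: the hour as a whole burned at about 8x, but the last five minutes are back to roughly 0.4x, so waking someone up would be noise.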

Sample Alert Thresholds (Illustrative Only)

| Signal | Sample Threshold | Suggested Action | Urgency |
|---|---|---|---|
| Latency (P95) | >500 ms for 5 min | Page on-call SRE | High |
| Error Rate | >2% over 5 min | Create incident ticket + notify engineering | High |
| Saturation (CPU) | >90% for 10 min | Trigger autoscaling policy | Medium |
| Error Budget Burn | 3× rate for 1 hour | Incident call, feature freeze consideration | Critical |

Methodology note: These thresholds are starting-point illustrations. Your production values should be calibrated against your own service baselines, user SLAs, and SLO definitions. A payment service tolerates far less latency than an async batch job.
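The "for 5 min" part of these thresholds matters as much as the number itself, because it filters out single noisy samples. A minimal sketch of a sustained-breach check over a time series, similar in spirit to a Prometheus `for:` clause but not a reimplementation of its semantics; the samples are hypothetical one-minute scrapes.

```python
from datetime import datetime, timedelta


def sustained_breach(samples, threshold, duration):
    """True if every sample in the trailing `duration` is above `threshold`.

    samples: (timestamp, value) pairs sorted by time.
    """
    if not samples:
        return False
    start = samples[-1][0] - duration
    if samples[0][0] > start:
        return False  # not enough history to cover the whole duration
    return all(value > threshold for ts, value in samples if ts >= start)


t0 = datetime(2024, 1, 1, 12, 0)
p95_ms = [420, 510, 530, 560, 540, 520, 515]  # hypothetical 1-minute scrapes
samples = [(t0 + timedelta(minutes=i), v) for i, v in enumerate(p95_ms)]
print(sustained_breach(samples, threshold=500, duration=timedelta(minutes=5)))
```

A single dip below 500 ms inside the five-minute window would reset the condition and suppress the page.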

Practical Application: Using APM Dashboards for SRE Monitoring

Application Performance Management (APM) dashboards integrate Golden Signals into a single view, allowing teams to monitor all critical metrics simultaneously. The operations team can use APM dashboards to get real-time insights into latency, errors, traffic, and saturation—reducing the cognitive load during incident response.

The most valuable APM features for SRE teams include:

One-hop dependency views — shows only the immediate upstream and downstream services of a failing component, dramatically narrowing the root-cause investigation scope and reducing MTTR.

Centralized Golden Signals panels — all four signals per service in one view, eliminating tool-switching during incidents.

SLO burn rate overlays — trend lines showing how quickly the error budget is being consumed, integrated alongside raw Golden Signals.

Proactive anomaly detection — ML-powered tools like Datadog and Dynatrace flag statistically unusual patterns before thresholds breach.
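The one-hop view is essentially a neighborhood query on the service call graph. A minimal sketch, assuming the graph is available as hypothetical (caller, callee) pairs, for example derived from trace data:

```python
def one_hop(edges, service):
    """edges: (caller, callee) pairs. Returns direct neighbors only."""
    upstream = sorted({caller for caller, callee in edges if callee == service})
    downstream = sorted({callee for caller, callee in edges if caller == service})
    return {"upstream": upstream, "downstream": downstream}


# Hypothetical call graph.
edges = [
    ("web", "api-gateway"),
    ("api-gateway", "auth"),
    ("api-gateway", "payments"),
    ("payments", "payments-db"),
    ("payments", "fraud-check"),
    ("fraud-check", "ml-scoring"),
]
print(one_hop(edges, "payments"))
```

For a failing Payments service, the investigation starts with three services (api-gateway, fraud-check, payments-db) instead of the whole graph; ml-scoring is two hops away and is deliberately left out.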

What is the Significance of Distinguishing 500 vs. 400 Errors in SRE Monitoring?

The distinction between 500 and 400 errors in application monitoring is fundamental to correct incident prioritization. Conflating them inflates your error rate SLI and may generate alerts that do not reflect actual service degradation.

| Error Type | Cause | Severity | SRE Response |
|---|---|---|---|
| 500 (server error) | System or application failure | High | Immediate investigation, possible incident declaration |
| 400 (client error) | Bad input, expired auth token, invalid request | Lower | Monitor trends; investigate only on sustained spikes |

A good SLI definition for errors counts only server-side failures (5xx) against your reliability budget. A sudden 400-error spike may signal a client SDK bug, a bot campaign, or a broken authentication flow—all worth investigating, but none of them are a service outage.
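A sketch of that split, assuming you can see raw status codes: 5xx responses count against the availability SLI, while 4xx responses are tracked separately and only flagged when they rise well above a baseline. The `factor=3.0` spike multiplier and the sample data are hypothetical.

```python
def classify(statuses):
    """Bucket HTTP status codes into good, client errors, and server errors."""
    counts = {"good": 0, "client_error": 0, "server_error": 0}
    for code in statuses:
        if code >= 500:
            counts["server_error"] += 1
        elif code >= 400:
            counts["client_error"] += 1
        else:
            counts["good"] += 1
    return counts


def availability_sli(counts):
    """Only 5xx responses count against the reliability budget."""
    total = sum(counts.values())
    return 1.0 if total == 0 else 1 - counts["server_error"] / total


def client_error_spike(counts, baseline_rate, factor=3.0):
    """Flag a 4xx rate well above baseline for investigation, not paging."""
    total = sum(counts.values())
    return total > 0 and counts["client_error"] / total > factor * baseline_rate


counts = classify([200] * 90 + [401] * 8 + [503] * 2)
print(availability_sli(counts))  # 4xx excluded from the SLI
print(client_error_spike(counts, baseline_rate=0.02))
```

Counting the 401s as failures would drop this SLI from 98% to 90%, even though the service itself behaved correctly; the spike check still surfaces them as something worth a look.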

SRE Monitoring Dashboard Best Practices

A well-structured SRE dashboard makes or breaks incident response. It is not about displaying all available data—it is about surfacing the right insights at the right time. See the official Google SRE Book on monitoring for the principles that underpin these practices.

1. Prioritize Golden Signals and SLO Burn Rate at the Top

Place latency (P50/P95), error rate (%), traffic (RPS), and saturation front and center. Add SLO burn rate immediately below so engineers can assess reliability impact at a glance without scrolling.

2. Use Visual Cues Consistently

Color-code thresholds (green / yellow / red), use sparklines for trend visualization, and heatmaps to identify saturation patterns across clusters or availability zones.

3. Segment by Environment and Service

Separate production, staging, and dev views. Within production, segment by service or team ownership and by availability zone. This isolation dramatically reduces the time to pinpoint which service is responsible during an incident.

4. Link Metrics to Logs and Traces

Make your dashboards navigable: a latency spike should be one click away from the related trace in Jaeger, and a spike in errors should link directly to filtered log output in Kibana or Grafana Loki.

5. Provide Role-Appropriate Views

Use templating (Grafana variables, Datadog template variables) to serve multiple audiences from a single dashboard: SRE/on-call engineers need real-time signal detail; engineering teams need per-service deep dives; leadership needs SLO health summaries.

6. Treat Dashboards as Living Documents

Prune panels that nobody uses, reassess thresholds quarterly against updated baselines, and add deployment or incident annotations so that future engineers understand historical anomalies in context.

How Gart Implements SRE Monitoring in 30–60 Days

Generic best practices are helpful, but implementation details are where most teams struggle. Here is how Gart's SRE team approaches application monitoring engagements from day one, based on hands-on delivery experience across SaaS, cloud-native, and distributed environments—reviewed by Fedir Kompaniiets, Co-founder at Gart Solutions, who has designed monitoring and observability systems across multiple industries.

Days 1–14: Baseline and Instrumentation

Audit existing telemetry: what is already collected, what is missing, what is noisy.

Instrument all services with OpenTelemetry or native exporters for all four Golden Signals.

Deploy Prometheus + Grafana or connect to the client's existing observability platform.

Establish baseline latency, error rate, and saturation profiles per service under normal load.

Days 15–30: SLIs, SLOs, and Initial Alerting

Define SLIs for each critical service in collaboration with product and engineering stakeholders.

Draft SLOs and calculate initial error budgets based on business risk tolerance.

Configure symptom-based alerts (burn rate, not raw thresholds) with PagerDuty or Opsgenie routing.

Stand up the first three dashboards: overall service health, per-service Golden Signals, SLO burn rate.

Days 31–60: Noise Reduction and Handover

Tune alert thresholds against the observed baseline to eliminate alert fatigue.

Remove noisy, low-signal alerts that were generating false pages.

Integrate distributed tracing for the highest-traffic services.

Run a simulated incident to validate the monitoring stack end-to-end before handover.

Deliver runbooks and on-call documentation tied to each alert condition.

Real outcome: After implementing Golden Signals and SLO-based alerting for a B2C SaaS platform, the client reduced MTTR by 60% within two months. The primary driver was eliminating alert fatigue (previously 80+ daily alerts, reduced to 8 actionable ones) and linking every alert to a runbook with a clear first-responder action. Read the full context: Centralized Monitoring for a B2C SaaS Music Platform.


Conclusion

Ready to take your system's reliability and performance to the next level? Gart Solutions offers top-tier SRE Monitoring services to ensure your systems are always running smoothly and efficiently. Our experts can help you identify and address potential issues before they impact your business, ensuring minimal downtime and optimal performance.

Gart Solutions · Expert SRE Services

Is Your Application Monitoring Ready for Production?

Engineering teams that invest in proper SRE monitoring and application monitoring reduce MTTR, protect error budgets, and ship with confidence. Gart's SRE team has designed and deployed monitoring stacks for SaaS platforms, Kubernetes-native environments, fintech, and healthcare systems.

60% MTTR reduction for SaaS clients

30 days to working SLO dashboards

99.9% availability target for managed clients

Our services cover the full monitoring lifecycle — from telemetry instrumentation and Golden Signal dashboards to SLO definition, alert tuning, and on-call runbooks.

Golden Signals Setup

SLI / SLO Definition

Prometheus + Grafana

Alert Tuning

Distributed Tracing

Kubernetes Monitoring

Incident Runbooks


B2C SaaS Music Platform

Centralized monitoring across global infrastructure — 60% MTTR reduction in 2 months.

Digital Landfill Platform

Cloud-agnostic monitoring for IoT emissions data with multi-country compliance.

Fedir Kompaniiets

Co-founder & CEO, Gart Solutions · Cloud Architect & DevOps Consultant

Fedir is a technology enthusiast with over a decade of diverse industry experience. He co-founded Gart Solutions to address complex tech challenges related to Digital Transformation, helping businesses focus on what matters most: scaling. Fedir is committed to driving sustainable IT transformation, helping SMBs innovate, plan future growth, and navigate the "tech madness" through expert DevOps and Cloud managed services.

In my experience optimizing cloud costs, especially on AWS, I often find that many quick wins are in the "easy to implement - good savings potential" quadrant.


That's why I've decided to share some straightforward methods for optimizing expenses on AWS that will help you save over 80% of your budget.

Choose reserved instances

Potential Savings: Up to 72%

Reserved instances require committing to a one- or three-year term, paid fully, partially, or not at all upfront, in exchange for a discounted rate compared to On-Demand pricing. For many companies, especially in Ukraine, even one year counts as long-term planning, and reserving resources for 1-3 years carries risk, but the reward is a discount of up to 72%.

You can check all the current pricing details on the official website - Amazon EC2 Reserved Instances

Purchase Savings Plans (Instead of On-Demand)

Potential Savings: Up to 72%

There are three types of Savings Plans: Compute Savings Plans, EC2 Instance Savings Plans, and SageMaker Savings Plans.

AWS Compute Savings Plan gives you discounted rates on compute in exchange for committing to a consistent amount of usage, measured in USD per hour, for a one- or three-year term. The discount applies flexibly across EC2, Fargate, and Lambda, regardless of instance family, size, or region.

AWS EC2 Instance Savings Plan offers discounted rates exclusively for EC2 instances. It is tied to a specific instance family in a chosen region, but applies regardless of instance size, operating system, or tenancy within that family.

AWS SageMaker Savings Plan gives discounts on SageMaker usage in exchange for committing to a consistent amount of usage (USD per hour) for a one- or three-year term.

All three are available for one or three years with full, partial, or no upfront payment. The EC2 Instance Savings Plan offers the deepest discount, up to 72%, but applies exclusively to EC2 instances; the more flexible Compute Savings Plan offers up to 66%.
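Because a reservation or Savings Plan commitment is billed whether or not you use it, the useful question is how many hours per month the resource actually runs. A minimal sketch with a hypothetical $0.10/hour price and the maximum 72% discount; it ignores upfront-payment timing and partial coverage.

```python
HOURS_PER_MONTH = 730  # common AWS billing approximation


def monthly_costs(on_demand_hourly, discount, hours_used):
    """Compare On-Demand spend with a commitment billed for every hour."""
    on_demand = on_demand_hourly * hours_used
    committed = on_demand_hourly * (1 - discount) * HOURS_PER_MONTH
    return on_demand, committed


def breakeven_hours(discount):
    """Usage (hours/month) above which the commitment is cheaper."""
    return (1 - discount) * HOURS_PER_MONTH


# Hypothetical $0.10/hour instance with the maximum 72% discount.
for hours in (730, 150):
    od, committed = monthly_costs(0.10, 0.72, hours)
    print(hours, round(od, 2), round(committed, 2))
print(round(breakeven_hours(0.72), 1))
```

At full utilization the commitment costs about $20.44 versus $73.00 On-Demand, but a workload running only 150 hours a month would be cheaper On-Demand; with a 72% discount the break-even is roughly 204 hours (28% utilization).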

Utilize Various Storage Classes for S3 (Including Intelligent-Tiering)

Potential Savings: 40% to 95%

AWS offers numerous options for storing data at different access levels. For instance, S3 Intelligent-Tiering automatically stores objects at three access levels: one tier optimized for frequent access, 40% cheaper tier optimized for infrequent access, and 68% cheaper tier optimized for rarely accessed data (e.g., archives).

The Frequent Access tier of S3 Intelligent-Tiering costs the same per GB as S3 Standard: $0.023 USD per GB-month (first 50 TB, US East (N. Virginia)), plus a small per-object monitoring and automation charge.

However, the key advantage of Intelligent-Tiering is its ability to automatically move objects that haven't been accessed for a specific period to lower-cost access tiers.

After 30 and 90 consecutive days without access (and after 180 days, if the optional Deep Archive Access tier is activated), Intelligent-Tiering automatically shifts an object to the next lower-cost tier, potentially saving companies from 40% to 95%. This means that for certain objects (e.g., archives), it may be appropriate to pay only $0.0125 USD or $0.004 USD per GB compared to the standard price of $0.023 USD.
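To estimate what tiering could save on a given data set, the sketch below assigns each object a tier from its days since last access and sums the monthly storage cost. The first three prices come from the text above; the Deep Archive Access price is an assumption to verify on the S3 pricing page, and monitoring, request, and retrieval fees are left out.

```python
# (minimum days without access, tier, USD per GB-month)
TIERS = [
    (180, "Deep Archive Access (opt-in)", 0.00099),  # assumed price; verify
    (90, "Archive Instant Access", 0.004),
    (30, "Infrequent Access", 0.0125),
    (0, "Frequent Access", 0.023),
]


def tier_for(days_since_access, deep_archive_enabled=False):
    """Return (tier name, price), checking the coldest eligible tier first."""
    for min_days, name, price in TIERS:
        if min_days == 180 and not deep_archive_enabled:
            continue
        if days_since_access >= min_days:
            return name, price
    raise ValueError("days_since_access must be non-negative")


def monthly_storage_cost(objects, deep_archive_enabled=False):
    """objects: (size_gb, days_since_last_access) pairs; excludes monitoring fees."""
    return sum(size_gb * tier_for(days, deep_archive_enabled)[1]
               for size_gb, days in objects)


objects = [(100, 5), (100, 45), (100, 120)]  # hypothetical data set
print(round(monthly_storage_cost(objects), 2), round(300 * 0.023, 2))
```

For this hypothetical 300 GB, the tiered estimate is about $3.95 per month versus $6.90 if everything stayed in S3 Standard.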

Information regarding the pricing of Amazon S3

AWS Compute Optimizer

Potential Savings: quite significant

The AWS Compute Optimizer dashboard is a tool that lets users assess and prioritize optimization opportunities for their AWS resources.

The dashboard provides detailed information about potential cost savings and performance improvements, as the recommendations are based on an analysis of resource specifications and usage metrics.

The dashboard covers various types of resources, such as EC2 instances, Auto Scaling groups, Lambda functions, Amazon ECS services on Fargate, and Amazon EBS volumes.

For example, AWS Compute Optimizer reports underutilized or overutilized resources allocated to ECS services on Fargate or to Lambda functions. Regularly keeping an eye on this dashboard can help you make informed decisions to optimize costs and enhance performance.

Use Fargate in EKS for underutilized EC2 nodes

If your EKS nodes aren't fully used most of the time, consider using Fargate profiles. With AWS Fargate, you pay for the vCPU and memory resources your pod requests, rather than for an entire EC2 virtual machine.

For example, say you have an application deployed in a Kubernetes cluster managed by Amazon EKS (Elastic Kubernetes Service). The application experiences variable traffic, with peak loads during specific hours of the day or week (like a marketplace or an online store), and you want to optimize infrastructure costs. To address this, create a Fargate profile that defines which pods should run on Fargate, then configure the Kubernetes Horizontal Pod Autoscaler (HPA) to scale the number of pod replicas automatically based on their resource usage (such as CPU or memory usage).
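On Fargate each replica is billed as its own pod, so the HPA's replica count translates fairly directly into cost. The Kubernetes documentation describes the core HPA calculation as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, skipping changes while the ratio stays within a tolerance (0.1 by default). The sketch below reproduces only that formula plus min/max clamping; the real controller also applies stabilization windows and other behaviors not modeled here.

```python
import math


def hpa_desired_replicas(current_replicas, current_metric, target_metric,
                         min_replicas=1, max_replicas=10, tolerance=0.1):
    """Core HPA formula, clamped to [min_replicas, max_replicas]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # within tolerance: no scaling
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


# Hypothetical: 4 pods with a 60% CPU utilization target.
print(hpa_desired_replicas(4, current_metric=90, target_metric=60))  # peak hours
print(hpa_desired_replicas(4, current_metric=20, target_metric=60))  # quiet night
```

During the peak the Deployment grows to 6 pods; overnight it shrinks to 2, and with Fargate you stop paying for the pods that were removed instead of for idle node capacity.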

Manage Workload Across Different Regions

Potential Savings: significant in most cases

When handling workloads across multiple regions, it's crucial to consider aspects such as cost allocation tags, budgets, notifications, and remediation.

Cost Allocation Tags: Classify and track expenses based on different labels like program, environment, team, or project.

AWS Budgets: Define spending thresholds and receive notifications when expenses exceed set limits. Create budgets specifically for your workload or allocate budgets to specific services or cost allocation tags.

Notifications: Set up alerts when expenses approach or surpass predefined thresholds. Timely notifications help take actions to optimize costs and prevent overspending.

Remediation: Implement mechanisms to correct overspending based on your workload requirements. This may involve automated actions or manual interventions to address cost-related issues.

Regional Variances: Consider regional differences in pricing and data transfer costs when designing workload architectures.

Reserved Instances and Savings Plans: Utilize reserved instances or savings plans to achieve cost savings.

AWS Cost Explorer: Use this tool for visualizing and analyzing your expenses. Cost Explorer provides insights into your usage and spending trends, enabling you to identify areas of high costs and potential opportunities for cost savings.
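Tags and budgets work together: spend is grouped by a tag, and each group is checked against its budget thresholds. The sketch below shows that logic on hypothetical line items; it does not call any AWS API, and the 80%/100% thresholds are assumptions you would set in AWS Budgets.

```python
from collections import defaultdict


def cost_by_tag(line_items, tag_key):
    """Group cost line items by one tag; untagged spend is kept visible."""
    totals = defaultdict(float)
    for item in line_items:
        totals[item["tags"].get(tag_key, "untagged")] += item["cost"]
    return dict(totals)


def budget_alerts(totals, budgets, thresholds=(0.8, 1.0)):
    """List (tag value, threshold) pairs whose spend crossed a budget threshold."""
    alerts = []
    for value, spent in totals.items():
        limit = budgets.get(value)
        if limit is None:
            continue
        alerts.extend((value, t) for t in thresholds if spent >= t * limit)
    return alerts


items = [  # hypothetical monthly line items
    {"cost": 420.0, "tags": {"team": "payments", "env": "prod"}},
    {"cost": 130.0, "tags": {"team": "payments", "env": "staging"}},
    {"cost": 300.0, "tags": {"team": "search", "env": "prod"}},
    {"cost": 75.0, "tags": {}},
]
totals = cost_by_tag(items, "team")
print(totals)
print(budget_alerts(totals, {"payments": 600, "search": 500}))
```

Keeping an explicit "untagged" bucket is a deliberate choice: spend that no tag explains is often the first thing worth cleaning up.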

Transition to Graviton (ARM)

Potential Savings: Up to 30%

Graviton processors are server-grade Arm processors developed in-house by Amazon. Graviton-based instances suit a range of workloads, including high-performance computing, batch processing, electronic design automation (EDA), multimedia encoding, scientific modeling, distributed analytics, and CPU-based machine learning inference.

The processor family is based on the Arm architecture and built as a system on a chip (SoC). This translates to lower power consumption and cost while still offering satisfactory performance for most workloads. Key advantages of AWS Graviton include cost reduction, low latency, improved scalability, enhanced availability, and security.

Spot Instances Instead of On-Demand

Potential Savings: Up to 90%

Spot Instances are essentially a market for spare capacity: when Amazon has idle EC2 resources, you can use them at a steep discount compared to On-Demand. The catch is that if no spare capacity is available, your request won't be fulfilled.

There is also a risk of interruption: AWS can reclaim a Spot Instance with a two-minute warning when it needs the capacity back, or when the Spot price rises above the maximum price you set.

The Spot price is not fixed; AWS adjusts it gradually based on long-term supply and demand. You can optionally specify the maximum price you're willing to pay, but you are charged the current Spot price, not your maximum. If we are willing to pay $0.1 per hour and the Spot price is $0.05, we pay exactly $0.05.
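A toy model makes the pricing rule concrete: each hour you pay the current Spot price, never your maximum, until the price exceeds your maximum. The hourly prices are hypothetical, and the model is simplified: Linux Spot Instances are billed per second, and AWS can also reclaim capacity regardless of price.

```python
def spot_run(hourly_prices, max_price):
    """Simplified Spot model: returns (total cost, interruption hour or None)."""
    cost = 0.0
    for hour, price in enumerate(hourly_prices):
        if price > max_price:
            return cost, hour  # interrupted at the start of this hour
        cost += price  # charged the Spot price, not max_price
    return cost, None  # ran to completion


# Hypothetical hourly Spot prices with a $0.10 maximum.
cost, interrupted_at = spot_run([0.05, 0.05, 0.06, 0.12], max_price=0.10)
print(round(cost, 2), interrupted_at)
```

The run costs $0.16 for three hours and is interrupted in hour 3, which is why Spot suits workloads that can checkpoint or be retried.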

Use Interface Endpoints or Gateway Endpoints to save on traffic costs (S3, SQS, DynamoDB, etc.)

Potential Savings: Depends on the workload

Interface Endpoints are powered by AWS PrivateLink and let you reach AWS services from your Amazon Virtual Private Cloud (VPC) over a private connection, without going through the internet. Gateway Endpoints serve the same purpose for Amazon S3 and Amazon DynamoDB by adding routes to your VPC route tables.

Either type can help save on traffic costs when accessing services like Amazon S3, Amazon SQS, and Amazon DynamoDB from private subnets, mainly by keeping that traffic off NAT gateways, which charge per GB processed.

Key points:

Amazon S3: A Gateway Endpoint for S3 has no additional charge, so S3 traffic from your VPC avoids NAT gateway data processing costs. Interface Endpoints for S3 are also available but are billed per hour and per GB processed.

Amazon SQS: SQS is reachable through Interface Endpoints, which keep queue traffic inside your VPC. Their per-GB charge is lower than NAT gateway processing, though they also add an hourly fee per Availability Zone.

Amazon DynamoDB: A Gateway Endpoint for DynamoDB lets you access tables from your VPC at no additional charge, again bypassing the NAT gateway.

Additionally, both endpoint types let instances with only private IP addresses reach these services without an internet gateway or NAT device, which is where most of the traffic savings come from.
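Whether an interface endpoint pays off depends on traffic volume, because it adds an hourly charge per Availability Zone. A back-of-the-envelope sketch; the per-unit prices below are assumptions (example list prices), so check the VPC pricing page for your region, and the NAT gateway's own hourly charge is left out because it is usually paid anyway for other traffic.

```python
HOURS_PER_MONTH = 730

# Example per-unit prices (assumptions; verify for your region).
NAT_PER_GB = 0.045           # NAT gateway data processing
ENDPOINT_PER_AZ_HOUR = 0.01  # interface endpoint, per AZ
ENDPOINT_PER_GB = 0.01       # interface endpoint data processing


def nat_processing_cost(gb):
    return gb * NAT_PER_GB


def interface_endpoint_cost(gb, azs):
    return azs * ENDPOINT_PER_AZ_HOUR * HOURS_PER_MONTH + gb * ENDPOINT_PER_GB


def gateway_endpoint_cost(_gb):
    return 0.0  # S3 and DynamoDB gateway endpoints carry no extra charge


gb = 5_000  # hypothetical monthly traffic from private subnets
print(round(nat_processing_cost(gb), 2),
      round(interface_endpoint_cost(gb, azs=2), 2),
      gateway_endpoint_cost(gb))
```

Under these assumptions, 5 TB a month of SQS traffic drops from about $225 through the NAT gateway to about $65 through a two-AZ interface endpoint, and S3 or DynamoDB traffic through a gateway endpoint adds nothing.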

Optimize Image Sizes for Faster Loading

Potential Savings: Depends on the workload

Optimizing image sizes can help you save in various ways.

Reduce ECR Costs: Storing smaller images cuts your Amazon Elastic Container Registry (ECR) storage costs.

Minimize EBS Volumes on EKS Nodes: Keeping smaller volumes on Amazon Elastic Kubernetes Service (EKS) nodes helps in cost reduction.

Accelerate Container Launch Times: Faster container launch times ultimately lead to quicker task execution.

Optimization Methods:

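Use the Right Image: Choose the smallest base image that does the job; for instance, Alpine may be sufficient in certain scenarios.

Remove Unnecessary Data: Trim excess data and packages from the image.

Multi-Stage Image Builds: Use multiple FROM instructions to build in one stage and copy only the final artifacts into a slim runtime stage.

Use .dockerignore: Keep unnecessary files out of the build context with a .dockerignore file.

Reduce Instruction Count: Minimize the number of layer-creating instructions (RUN, COPY, ADD). Each one adds a layer to the image, and files deleted in a later layer still take up space in the earlier one. Group related commands in a single RUN instruction using the && operator. For example, install packages and clean the package cache in the same step.

Layer Ordering: Move frequently changing instructions, such as copying application source code, to the end of the Dockerfile. Earlier layers can then be reused from the build cache.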

These optimization methods can contribute to faster image loading, reduced storage costs, and improved overall performance in containerized environments.
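A few of these checks can be automated. The hypothetical `audit_dockerfile` helper below is a rough heuristic, not a full Dockerfile parser. It does three things:

- counts build stages;
- checks whether the final base image looks minimal (Alpine, slim, distroless, or scratch);
- flags back-to-back RUN instructions that could be chained with &&.

```python
import re

MINIMAL_BASES = ("alpine", "slim", "distroless", "scratch")


def audit_dockerfile(text: str) -> list[str]:
    """Return size-related hints for a Dockerfile (heuristic, not a full parser)."""
    # Join backslash-continued lines so each instruction sits on one line.
    joined = re.sub(r"\\[ \t]*\n", " ", text)
    keywords: list[str] = []
    bases: list[str] = []
    for raw in joined.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        keyword, _, rest = line.partition(" ")
        keywords.append(keyword.upper())
        if keyword.upper() == "FROM":
            # Skip flags such as --platform=... to find the image name.
            bases.append(next((t for t in rest.split() if not t.startswith("--")), ""))

    hints = []
    if keywords.count("FROM") < 2:
        hints.append("single-stage build: consider a multi-stage build")
    final_base = bases[-1] if bases else ""
    if not any(tag in final_base for tag in MINIMAL_BASES):
        hints.append(f"final base image '{final_base}' may not be minimal")
    back_to_back = sum(1 for a, b in zip(keywords, keywords[1:]) if a == b == "RUN")
    if back_to_back:
        hints.append(f"{back_to_back} back-to-back RUN pair(s): consider chaining with &&")
    return hints


sample = """FROM python:3.12
RUN apt-get update
RUN apt-get install -y curl
COPY . /app
"""
for hint in audit_dockerfile(sample):
    print("-", hint)
```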

Use Load Balancers to Save on IP Address Costs

Potential Savings: Depends on the workload

Since February 1, 2024, AWS charges for every public IPv4 address. A load balancer helps reduce these costs. Multiple services and instances can sit behind the load balancer's shared public addresses instead of each needing its own. The load balancer routes traffic by port, host, or path and handles SSL/TLS termination.

By consolidating multiple services and instances under a single IP address, you can achieve cost savings while effectively managing incoming traffic.

Optimize Database Services for Higher Performance (MySQL, PostgreSQL, etc.)

Potential Savings: Depends on the workload

AWS provides default settings for databases that are suitable for average workloads. If a significant portion of your monthly bill is related to AWS RDS, it's worth paying attention to parameter settings related to databases.

Some of the most effective settings may include:

Use Database-Optimized Instances: For example, memory-optimized instances in the R5 or X1 families are well suited to database workloads.

Choose Storage Type: General Purpose SSD (gp2 or gp3) is typically cheaper than Provisioned IOPS SSD (io1/io2).

RDS Storage Auto Scaling: Automatically increase storage when free space runs low, so you don't have to over-provision it up front. Note that storage only grows; it is never scaled back down.

If you can optimize the database workload, it may allow you to use smaller instance sizes without compromising performance.

Regularly Update Instances for Better Performance and Lower Costs

Potential Savings: Minor

AWS regularly introduces new instance generations built on newer hardware. These typically deliver better performance at the same or a lower price than previous generations. Older generations often remain available for some time, but the newest two or three usually offer the best price-performance, so review and upgrade your instance types regularly.

Take general-purpose M-family instances, for example, and compare their On-Demand prices across generations. Regular upgrades help ensure that you are using resources efficiently.

| Instance | Generation | Description | On-Demand Price (USD/hour) |
| --- | --- | --- | --- |
| m6g.large | 6th | Instances based on ARM processors offer improved performance and energy efficiency. | $0.077 |
| m5.large | 5th | General-purpose instances with a balanced combination of CPU and memory, designed to support high-speed network access. | $0.096 |
| m4.large | 4th | A good balance between CPU, memory, and network resources. | $0.10 |
| m3.large | 3rd | One of the previous generations, less efficient than m5 and m4. | Not available |
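Using the On-Demand prices from the table, the sketch below estimates monthly savings from moving to a newer generation. It makes two assumptions: 730 hours per month and always-on instances. The prices are those listed above and may differ by region and over time. Moving to m6g also means switching to ARM (arm64) builds of your software. The helper name is illustrative.

```python
HOURS_PER_MONTH = 730

# On-Demand prices from the table above (USD/hour); check current regional pricing.
PRICES = {"m6g.large": 0.077, "m5.large": 0.096, "m4.large": 0.10}


def upgrade_savings(current: str, target: str, instances: int = 1) -> tuple[float, float]:
    """Return (USD saved per month, percent saved) for always-on instances."""
    diff = PRICES[current] - PRICES[target]
    monthly = round(diff * HOURS_PER_MONTH * instances, 2)
    percent = round(100 * diff / PRICES[current], 1)
    return monthly, percent


for current, target in [("m4.large", "m5.large"), ("m5.large", "m6g.large")]:
    usd, pct = upgrade_savings(current, target, instances=10)
    print(f"10 x {current} -> {target}: ${usd:,.2f}/month ({pct}%)")
```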

Use RDS Proxy to reduce the load on RDS

Potential Savings: Low

RDS Proxy relieves the load on RDS database servers by pooling and reusing existing connections instead of creating new ones. It also speeds up failover in Multi-AZ deployments. The proxy keeps client connections open and redirects them to the new primary instance when the standby is promoted.

Imagine you have a web application that uses Amazon RDS to manage the database. This application experiences variable traffic intensity, and during peak periods, such as advertising campaigns or special events, it undergoes high database load due to a large number of simultaneous requests.

During peak loads, the RDS database may encounter performance and availability issues due to the high number of concurrent connections and queries. This can lead to delays in responses or even service unavailability.

RDS Proxy manages connection pools to the database, significantly reducing the number of direct connections to the database itself.

By efficiently managing connections, RDS Proxy provides higher availability and stability, especially during peak periods.

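Using RDS Proxy reduces the load on RDS, which can let you run a smaller database instance and lower costs. Weigh this against the proxy's own charges, which are based on the vCPU capacity of the underlying database instance.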
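Connection pooling is the core idea behind RDS Proxy. The toy pool below is a conceptual sketch, not RDS Proxy's implementation or API. In it, 50 concurrent workers serve 1,000 requests while opening at most 10 "connections". The connections are plain Python objects standing in for real database connections, and all names are hypothetical.

```python
import queue
import threading


class ConnectionPool:
    """Toy pool that opens at most `size` connections and reuses them."""

    def __init__(self, size: int) -> None:
        self._idle: "queue.Queue[object]" = queue.Queue()
        self._lock = threading.Lock()
        self._size = size
        self.opened = 0  # number of "real" connections ever created

    def acquire(self) -> object:
        with self._lock:
            if self._idle.empty() and self.opened < self._size:
                self.opened += 1
                return object()  # stands in for an expensive new DB connection
        return self._idle.get()  # otherwise wait for a released connection

    def release(self, conn: object) -> None:
        self._idle.put(conn)


def worker(pool: ConnectionPool, requests: int) -> None:
    for _ in range(requests):
        conn = pool.acquire()
        try:
            pass  # run the query with `conn` here
        finally:
            pool.release(conn)


pool = ConnectionPool(size=10)
threads = [threading.Thread(target=worker, args=(pool, 20)) for _ in range(50)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(f"1,000 requests served by {pool.opened} connection(s)")
```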

Define the retention policy in CloudWatch Logs

Potential Savings: Depends on the workload, could be significant.

The retention policy in Amazon CloudWatch Logs determines how long log events are kept in a log group before they are automatically deleted.

Setting the right retention policy is crucial for efficient data management and cost optimization. New log groups default to "Never expire", which is not recommended for most use cases: stored logs keep accruing charges and become harder to manage.

Typically, best practice involves defining a specific retention period based on your organization's requirements, compliance policies, and needs.

Avoid leaving the retention period undefined unless there is a specific reason. This alone can noticeably reduce storage costs.
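Setting a retention period on existing log groups can be scripted. This sketch assumes a boto3 CloudWatch Logs client (`boto3.client("logs")`) and uses the `describe_log_groups` paginator and the `put_retention_policy` call. The helper name and the 30-day default are illustrative choices, not a recommendation for every workload. Log groups without a `retentionInDays` key are the ones set to "Never expire".

```python
def apply_default_retention(logs_client, days: int = 30) -> list[str]:
    """Set `days` of retention on every log group that currently never expires.

    `logs_client` is a boto3 CloudWatch Logs client. CloudWatch only accepts
    specific retention values (for example 1, 7, 14, 30, 90, or 365 days).
    """
    updated = []
    for page in logs_client.get_paginator("describe_log_groups").paginate():
        for group in page["logGroups"]:
            # Log groups set to "Never expire" have no retentionInDays key.
            if "retentionInDays" not in group:
                logs_client.put_retention_policy(
                    logGroupName=group["logGroupName"], retentionInDays=days
                )
                updated.append(group["logGroupName"])
    return updated


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   changed = apply_default_retention(boto3.client("logs"), days=30)
#   print(f"Retention set on {len(changed)} log group(s)")
```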

Configure AWS Config to monitor only the events you need

Potential Savings: Depends on the workload

AWS Config allows you to track and record changes to AWS resources, helping you maintain compliance, security, and governance. AWS Config provides compliance reports based on rules you define. You can access these reports on the AWS Config dashboard to see the status of tracked resources.

You can set up Amazon SNS notifications to receive alerts when AWS Config detects non-compliance with your defined rules, so you can act on the issue immediately. AWS Config charges for each configuration item it records and for each rule evaluation. Recording only the resource types and rules you actually need keeps you compliant without paying for data you don't use.
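Limiting what AWS Config records can be done with `put_configuration_recorder`. This is a sketch assuming a boto3 AWS Config client (`boto3.client("config")`). The helper name, recorder name, role ARN, and resource types are placeholders to adapt to your own compliance needs.

```python
def record_only(config_client, role_arn: str, resource_types: list[str],
                recorder_name: str = "default") -> dict:
    """Restrict the AWS Config recorder to the listed resource types."""
    recorder = {
        "name": recorder_name,
        "roleARN": role_arn,
        "recordingGroup": {
            "allSupported": False,  # do not record every supported resource type
            # includeGlobalResourceTypes may only be True when allSupported is True.
            "includeGlobalResourceTypes": False,
            "resourceTypes": resource_types,
        },
    }
    config_client.put_configuration_recorder(ConfigurationRecorder=recorder)
    return recorder


# Usage (requires boto3 and AWS credentials; the role ARN is a placeholder):
#   import boto3
#   record_only(boto3.client("config"),
#               "arn:aws:iam::123456789012:role/aws-config-recorder",
#               ["AWS::EC2::Instance", "AWS::EC2::SecurityGroup", "AWS::S3::Bucket"])
```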

Use lifecycle policies for S3 and ECR

Potential Savings: Depends on the workload

S3 Lifecycle lets you automatically transition objects to cheaper storage classes, or delete them, based on specified conditions and schedules. You can set up lifecycle policies for each bucket, defining the lifecycle of your objects to reduce storage costs.

Lifecycle rules are based on object age. For example, you can move objects to S3 Standard-IA after 30 days and to S3 Glacier Flexible Retrieval after 90 days, then delete them after a year. A rule can apply to the entire bucket or to specific prefixes. Keep in mind that transitions are billed per request, so moving large numbers of small objects can cost more than it saves. Similarly, a lifecycle policy for ECR automatically expires Docker images you no longer need, such as untagged images or all but the most recent versions.
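The lifecycle rules described above can be expressed as the JSON structures that S3 and ECR accept. This sketch only builds the policies. The helper names, the 30/90/365-day thresholds, and the bucket and repository names in the comments are illustrative assumptions. The commented boto3 calls (`put_bucket_lifecycle_configuration` and `put_lifecycle_policy`) show where the policies would be applied.

```python
import json


def s3_lifecycle(prefix: str, ia_days: int = 30, glacier_days: int = 90,
                 expire_days: int = 365) -> dict:
    """S3 lifecycle configuration: tier objects under `prefix` by age, then expire them."""
    return {
        "Rules": [
            {
                "ID": f"tier-and-expire-{prefix.strip('/') or 'all'}",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    {"Days": ia_days, "StorageClass": "STANDARD_IA"},
                    {"Days": glacier_days, "StorageClass": "GLACIER"},  # Glacier Flexible Retrieval
                ],
                "Expiration": {"Days": expire_days},
            }
        ]
    }


def ecr_keep_last(count: int = 10) -> str:
    """ECR lifecycle policy text: expire all but the `count` most recent images."""
    return json.dumps({
        "rules": [{
            "rulePriority": 1,
            "description": f"Keep only the {count} most recent images",
            "selection": {"tagStatus": "any", "countType": "imageCountMoreThan",
                          "countNumber": count},
            "action": {"type": "expire"},
        }]
    })


# Applying them with boto3 (requires credentials; names are placeholders):
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="my-bucket", LifecycleConfiguration=s3_lifecycle("logs/"))
#   boto3.client("ecr").put_lifecycle_policy(
#       repositoryName="my-repo", lifecyclePolicyText=ecr_keep_last(10))
print(json.dumps(s3_lifecycle("logs/"), indent=2))
```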

Switch to using GP3 storage type for EBS

Potential Savings: 20%

AWS often defaults to gp2 EBS volumes, but it is almost always preferable to choose gp3, the latest generation of General Purpose SSD volumes. gp3 costs less per gigabyte and provides a baseline of 3,000 IOPS and 125 MiB/s regardless of volume size, whereas gp2 performance scales with volume size.

For example, in the us-east-1 region, a gp2 volume costs $0.10 per GB-month of provisioned storage, while gp3 costs $0.08 per GB-month. If you have 5 TB of EBS volumes in your account, switching from gp2 to gp3 saves about $100 per month (5,000 GB × $0.02).
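The $100 figure assumes gp3's baseline performance is enough. Large gp2 volumes get more than 3,000 baseline IOPS (3 IOPS per GB). Matching that on gp3 means provisioning extra IOPS, which reduces the savings. The sketch below models this with assumed us-east-1 list prices, including $0.005 per provisioned IOPS-month above 3,000. It ignores throughput, which may also need to be provisioned for large, busy volumes, and the helper names are hypothetical.

```python
# Assumed us-east-1 list prices (USD); check the current EBS pricing page.
GP2_PER_GB = 0.10
GP3_PER_GB = 0.08
GP3_PER_EXTRA_IOPS = 0.005   # per provisioned IOPS-month above the included 3,000
GP3_BASELINE_IOPS = 3000


def gp2_baseline_iops(size_gb: int) -> int:
    # gp2 delivers 3 IOPS per GB, with a floor of 100 and a ceiling of 16,000.
    return max(100, min(16_000, 3 * size_gb))


def gp3_switch_savings(volume_sizes_gb: list[int]) -> float:
    """Monthly savings from gp2 -> gp3, provisioning gp3 IOPS to match gp2 baselines."""
    gp2 = sum(size * GP2_PER_GB for size in volume_sizes_gb)
    gp3 = sum(
        size * GP3_PER_GB
        + max(0, gp2_baseline_iops(size) - GP3_BASELINE_IOPS) * GP3_PER_EXTRA_IOPS
        for size in volume_sizes_gb
    )
    return round(gp2 - gp3, 2)


print(gp3_switch_savings([100] * 50))  # 5 TB as fifty 100 GB volumes
print(gp3_switch_savings([5000]))      # 5 TB as one volume with 15,000 baseline IOPS
```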

Switch public IP addresses from IPv4 to IPv6

Potential Savings: Depends on the workload

Since February 1, 2024, AWS charges $0.005 per hour for each public IPv4 address. For example, 100 public IPv4 addresses on EC2 × $0.005 per address per hour × 730 hours = $365.00 per month.

This figure might not seem large for a single company, but it adds up quickly at scale and can become a significant share of network costs. Public IPv6 addresses carry no such charge. The best time to transition to IPv6 was a couple of years ago; the next best time is now.

Here are some resources about this recent update that will guide you on how to use IPv6 with widely-used services — AWS Public IPv4 Address Charge.

Collaborate with AWS professionals and partners for expertise and discounts

Potential Savings: ~5% of the contract amount through discounts.

AWS Partner Network (APN) Discounts: Companies that are members of the AWS Partner Network (APN) can access special discounts, which they can pass on to their clients. Partners reaching a certain level in the APN program often have access to better pricing offers.

Custom Pricing Agreements: Some AWS partners may have the opportunity to negotiate special pricing agreements with AWS, enabling them to offer unique discounts to their clients. This can be particularly relevant for companies involved in consulting or system integration.

Reseller Discounts: As resellers of AWS services, partners can purchase services at wholesale prices and sell them to clients with a markup, still offering a discount from standard AWS prices. They may also provide bundled offerings that include AWS services and their own additional services.

Credit Programs: AWS frequently offers credit programs or vouchers that partners can pass on to their clients. These could be promo codes or discounts for a specific period.

Seek assistance from AWS professionals and partners. This is often more cost-effective than purchasing and configuring everything independently. Given the intricacies of cloud cost optimization, expertise in this area can save you tens or hundreds of thousands of dollars.

More valuable tips for optimizing costs and improving efficiency in AWS environments:

Scheduled TurnOff/TurnOn for NonProd environments: If the development team works in a single time zone, you can achieve significant savings by turning environments off at night and on weekends when they are not in use. For example, scale Auto Scaling groups and clusters to zero and stop RDS instances.

Move static content to an S3 Bucket & CloudFront: Instead of paying for compute to serve static files, store them in Amazon S3 and deliver them through CloudFront.

Use API Gateway/Lambda/Lambda@Edge where possible: In such setups, you only pay for the actual usage of the service. This is especially noticeable in NonProd environments where resources are often underutilized.

If your CI/CD agents are on EC2, migrate to CodeBuild: AWS CodeBuild can be a more cost-effective and scalable solution for your continuous integration and delivery needs.

CloudWatch covers the needs of 99% of projects for Monitoring and Logging: Avoid using third-party solutions if AWS CloudWatch meets your requirements. It provides comprehensive monitoring and logging capabilities for most projects.
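For Auto Scaling groups, the scheduled TurnOff/TurnOn tip above can be implemented with scheduled actions. The sketch assumes a boto3 Auto Scaling client (`boto3.client("autoscaling")`). It uses `put_scheduled_update_group_action` with cron recurrences evaluated in the given time zone. The helper names, office hours, and group sizes are illustrative. RDS instances need separate handling (for example, `stop_db_instance`), and AWS automatically restarts a stopped RDS instance after seven days.

```python
HOURS_PER_WEEK = 168


def schedule_office_hours(asg_client, group: str, min_size: int, max_size: int,
                          tz: str = "UTC") -> None:
    """Run the group 08:00-20:00 on weekdays and scale it to zero otherwise."""
    common = {"AutoScalingGroupName": group, "TimeZone": tz}
    # Scale up every weekday morning.
    asg_client.put_scheduled_update_group_action(
        ScheduledActionName=f"{group}-office-hours-start",
        Recurrence="0 8 * * 1-5",
        MinSize=min_size, MaxSize=max_size, DesiredCapacity=min_size,
        **common,
    )
    # Scale to zero every weekday evening; weekends stay at zero until Monday 08:00.
    asg_client.put_scheduled_update_group_action(
        ScheduledActionName=f"{group}-office-hours-stop",
        Recurrence="0 20 * * 1-5",
        MinSize=0, MaxSize=0, DesiredCapacity=0,
        **common,
    )


def idle_fraction(start_hour: int = 8, end_hour: int = 20, workdays: int = 5) -> float:
    """Share of weekly hours the environment is switched off."""
    return 1 - (end_hour - start_hour) * workdays / HOURS_PER_WEEK


# Usage (requires boto3 and AWS credentials; the group name is a placeholder):
#   import boto3
#   schedule_office_hours(boto3.client("autoscaling"), "dev-web", min_size=2, max_size=4)
print(f"Instance-hours saved: {idle_fraction():.0%}")  # running 60 of 168 hours
```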

Feel free to reach out to me or other specialists for an audit, a comprehensive optimization package, or just advice.

Cost-effectiveness in DevOps and cloud strategy isn’t about finding the cheapest provider — it's about building scalable, sustainable, and efficient systems that reduce total cost of ownership while supporting long-term business growth.

What Does Cost-Effectiveness Mean in DevOps and Cloud?

Cost-effectiveness in this context refers to balancing investment with long-term value, not cutting corners. Instead of opting for the cheapest service or tool available, it’s about making strategic decisions that improve performance, reliability, and scalability over time.

Too often, organizations assume cutting IT spend or chasing free cloud credits is “efficient.” But this can backfire when hidden costs, performance bottlenecks, or non-scalable infrastructure come into play.

Why the Cheapest Option Isn’t Always the Best Long-Term Choice

Cloud startup programs offer free credits, but it's essential to approach them carefully. Businesses often make mistakes in network design and service selection while using free credits. These mistakes lead to significant additional infrastructure costs once the free period ends.

One startup leveraged free credits from the Google Cloud Startup Program to quickly build its product. However, when the free period ended, they faced crippling infrastructure costs due to a lack of optimization. Check this case study: DevOps for Microsoft HoloLens Application Run on GCP

Summary:

Choosing the lowest-cost IT or cloud option often leads to technical debt, downtime, and scalability issues, costing more in the long run.

While it's tempting to lean into "free tiers" and minimal upfront expenses, these choices frequently come with hidden costs:

Limited functionality

Lack of support or SLAs

High overage charges after trial periods end

At Gart Solutions, we promote a sustainable approach that maximizes ROI while aligning with business goals, ensuring that every IT dollar contributes to performance, stability, and growth.

Sustainable IT Cost Reductions vs. Short-Term Cuts

Summary:

Cutting costs for immediate savings often leads to long-term inefficiencies. True cost-effectiveness means aligning IT spending with business strategy and future-readiness.

In economic downturns, it’s natural for CIOs and IT leaders to seek cost savings. But reckless budget slashing can do more harm than good.

Avoid These 3 Common Mistakes:

Short-term focus: Cutting across the board can hinder future growth and innovation.

Overreliance on consultants: Consultants often suggest low-hanging fruit, leaving limited potential for long-term savings.

Neglecting stakeholders: Ignoring the impact of IT cuts on business operations can damage relationships and hinder outcomes.

Our Strategy for Cost-Effective DevOps and Cloud Solutions

Summary:

We combine smart savings with strategic investments, helping clients avoid over-engineering while investing wisely in scalable, future-ready infrastructure.

Not every component of your infrastructure needs premium tools or enterprise licenses. At Gart Solutions, we guide clients through intelligent decision-making:

Where to optimize for cost (e.g., Spot VMs, autoscaling, open-source tools)

Where to invest for growth (e.g., security, automation, compliance tooling)

Our goal: make sure every dollar contributes to uptime, user experience, or innovation.

By carefully analyzing your needs and implementing smart strategies, we ensure that you get the most out of your IT investments while reducing waste.

Read more: 20 Easy Ways to Optimize Expenses on AWS and Save Over 80% of Your Budget

Strategic Product Design as a Foundation for Cost Savings

The cornerstone of our cost-effective approach is strategic product design. We focus on laying down the right basic architecture from the start, emphasizing long-term stability and scalability. This ensures that your IT solutions can adapt and grow with your business without encountering major issues or requiring extensive reworks.

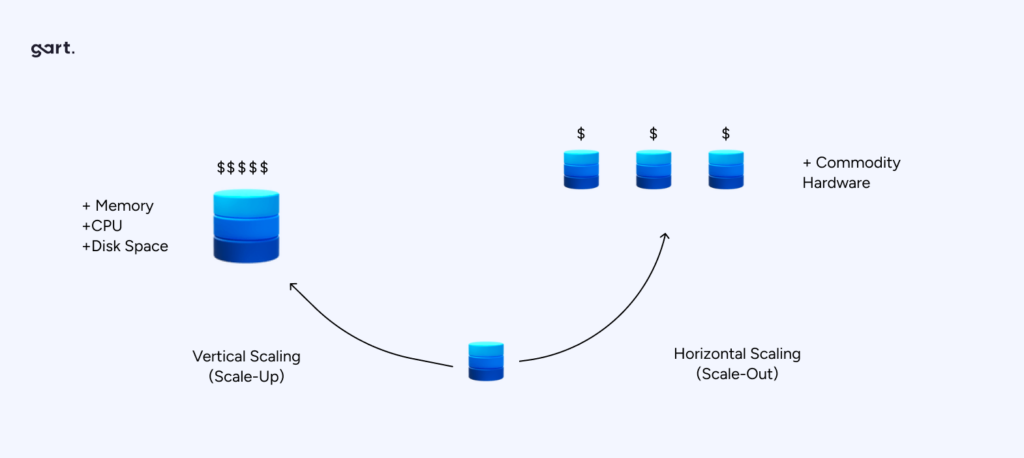

Our solutions are designed with your future in mind. We create systems that can scale seamlessly as your business grows, allowing you to manage costs effectively at every stage of your journey. One of the key benefits of our approach is the ability to avoid future technological problems related to growth, migration, or other common challenges.

This forward-thinking approach prevents the need for costly overhauls down the line and provides a stable foundation for your ongoing success.

Case Study: Azure Spot VMs for Jewelry AI Vision

In one example, we helped a visual AI platform for the jewelry industry cut cloud costs by 81% using Azure Spot VMs. By redesigning workloads for elasticity and resilience, we optimized compute consumption without compromising performance.

Lesson: Design choices made early unlock compounding savings over time.

Check this cost optimization case study: Cutting Costs by 81%: Azure Spot VMs Drive Cost Efficiency for Jewelry AI Vision.


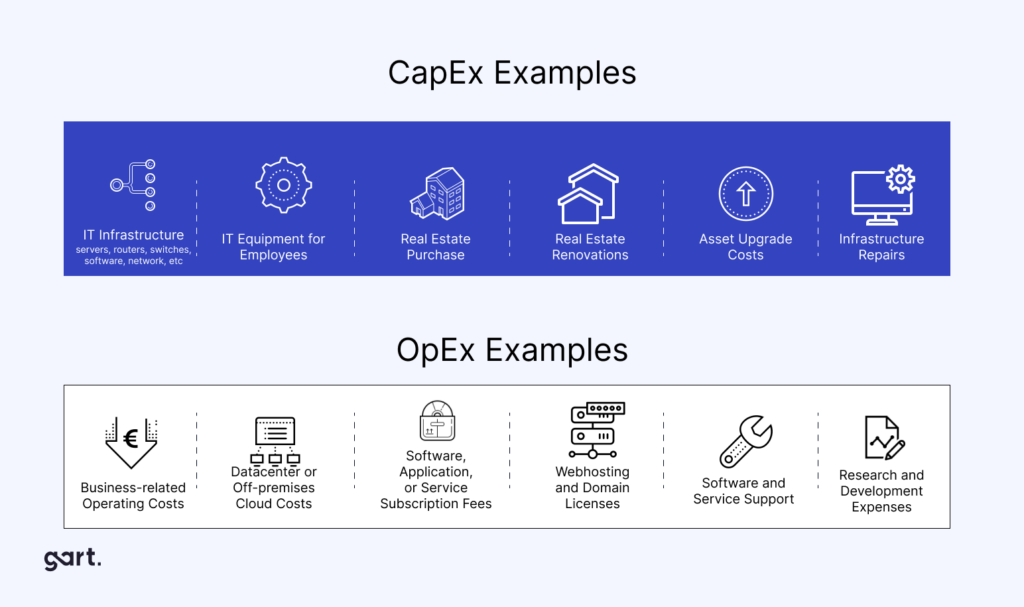

Understanding Cloud Costs in DevOps: OpEx vs. CapEx

Summary:

DevOps-related cloud costs fall into two main categories: Operational Expenses (OpEx) and Capital Expenses (CapEx). Knowing the difference helps you budget and optimize more effectively.

Operational Expenses (OpEx)

OpEx refers to ongoing costs of running DevOps workloads in the cloud, such as:

Cloud instance runtime (compute)

Storage usage

Managed services (like databases or monitoring tools)

Traffic and bandwidth

These costs are typically pay-as-you-go and vary month-to-month.

Capital Expenses (CapEx)

CapEx refers to one-time or upfront investments, such as:

Reserved cloud capacity (e.g., AWS Reserved Instances)

On-premise infrastructure purchases

Software licenses or setup fees

Choosing CapEx can reduce monthly spending, but it requires commitment and forecasting.
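Whether a commitment pays off depends on how many hours the capacity will actually be used, which is where forecasting comes in. The sketch below computes the break-even utilization for a commitment billed every hour of its term, whether the capacity is used or not. The On-Demand price is the m5.large figure quoted earlier in this article. The $0.060 committed rate is a hypothetical placeholder, not an AWS quote, and the helper names are illustrative.

```python
def break_even_utilization(on_demand_hourly: float, committed_hourly: float) -> float:
    """Fraction of hours capacity must be used for a commitment to beat On-Demand.

    A commitment is paid for every hour of the term; On-Demand is paid only
    for hours actually used. They cost the same when
    utilization * on_demand_hourly == committed_hourly.
    """
    return committed_hourly / on_demand_hourly


def cheaper_option(utilization: float, on_demand_hourly: float, committed_hourly: float) -> str:
    """Pick the cheaper model for an expected utilization between 0 and 1."""
    if utilization > break_even_utilization(on_demand_hourly, committed_hourly):
        return "commitment"
    return "on-demand"


ON_DEMAND = 0.096   # m5.large On-Demand price quoted earlier (USD/hour)
COMMITTED = 0.060   # hypothetical effective committed rate (USD/hour)

print(f"Break-even utilization: {break_even_utilization(ON_DEMAND, COMMITTED):.1%}")
for u in (0.4, 0.9):
    print(f"{u:.0%} utilization -> {cheaper_option(u, ON_DEMAND, COMMITTED)}")
```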

What Is FinOps and Why Does It Matter in Cost Optimization?

Summary:

FinOps (Financial Operations) is a framework that brings financial discipline into DevOps, ensuring cloud spending is aligned with business value and usage.

Defining FinOps in Simple Terms

FinOps helps teams:

Understand where cloud dollars are going

Predict costs before deploying

Optimize spend without stalling innovation

It's the bridge between engineering, finance, and operations.

Why FinOps is a Game-Changer

In traditional IT, budgets are fixed. But in the cloud, expenses are variable and usage-driven. That makes cost control harder, unless teams actively manage and monitor costs.

FinOps brings visibility and accountability across:

Engineers (who build infrastructure)

Finance teams (who manage budgets)

Product managers (who track business value)

Key FinOps Practices:

Real-time cloud cost reporting

Cost forecasting by team/project

Tagging resources for accountability

Optimization sprints focused on spend reduction.

FinOps, or Financial Operations, is an evolving cloud financial management discipline that brings financial accountability to the variable spend model of cloud, enabling distributed teams to make business trade-offs between speed, cost, and quality.
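Tagging resources for accountability only pays off when someone reports on the tags. The sketch below sums monthly cost per tag value using the Cost Explorer `get_cost_and_usage` API. It assumes:

- a boto3 Cost Explorer client (`boto3.client("ce")`);
- a tag key such as `team` that has already been activated as a cost allocation tag;
- a hypothetical helper name.

Note that Cost Explorer API requests are themselves billed per request.

```python
from collections import defaultdict


def cost_by_tag(ce_client, tag_key: str, start: str, end: str) -> dict[str, float]:
    """Sum unblended cost per value of a cost allocation tag.

    `ce_client` is a boto3 Cost Explorer client; dates are "YYYY-MM-DD" and
    `end` is exclusive.
    """
    totals: dict[str, float] = defaultdict(float)
    request = {
        "TimePeriod": {"Start": start, "End": end},
        "Granularity": "MONTHLY",
        "Metrics": ["UnblendedCost"],
        "GroupBy": [{"Type": "TAG", "Key": tag_key}],
    }
    while True:
        response = ce_client.get_cost_and_usage(**request)
        for period in response["ResultsByTime"]:
            for group in period["Groups"]:
                # Group keys look like "team$backend"; "team$" means the cost is untagged.
                _, _, value = group["Keys"][0].partition("$")
                amount = float(group["Metrics"]["UnblendedCost"]["Amount"])
                totals[value or "(untagged)"] += amount
        token = response.get("NextPageToken")
        if not token:
            return dict(totals)
        request["NextPageToken"] = token  # fetch the next page of results


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   print(cost_by_tag(boto3.client("ce"), "team", "2024-01-01", "2024-02-01"))
```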

How We Integrate FinOps Into Our DevOps Services

At Gart Solutions, we bake FinOps principles directly into our DevOps pipelines, so clients gain both infrastructure automation and cost control from day one.

Our FinOps Integration Approach Includes:

Cloud cost dashboards visible to stakeholders

Automated alerts for budget thresholds

Resource tagging and cost attribution per environment

Collaboration between engineers and finance on priorities

At Gart Solutions, we integrate FinOps practices into our DevOps and cloud services to further enhance cost-effectiveness and sustainability.
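Automated budget threshold alerts like those listed above can be created with AWS Budgets. This sketch assumes a boto3 Budgets client (`boto3.client("budgets")`). The helper name, thresholds, account ID, and e-mail address are placeholders. It sets one alert on actual spend and one on forecasted spend.

```python
def create_budget_alerts(budgets_client, account_id: str, name: str, monthly_usd: float,
                         email: str, actual_pct: float = 80.0,
                         forecast_pct: float = 100.0) -> dict:
    """Create a monthly cost budget that e-mails when actual or forecasted spend crosses a threshold."""

    def alert(kind: str, pct: float) -> dict:
        return {
            "Notification": {
                "NotificationType": kind,  # "ACTUAL" or "FORECASTED"
                "ComparisonOperator": "GREATER_THAN",
                "Threshold": pct,
                "ThresholdType": "PERCENTAGE",
            },
            "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
        }

    request = {
        "AccountId": account_id,
        "Budget": {
            "BudgetName": name,
            "BudgetLimit": {"Amount": f"{monthly_usd:.2f}", "Unit": "USD"},
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
        },
        "NotificationsWithSubscribers": [alert("ACTUAL", actual_pct),
                                         alert("FORECASTED", forecast_pct)],
    }
    budgets_client.create_budget(**request)
    return request


# Usage (requires boto3 and AWS credentials; values are placeholders):
#   import boto3
#   create_budget_alerts(boto3.client("budgets"), "123456789012", "dev-monthly",
#                        1500, "finops@example.com")
```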

Case Studies: Cost-Effective DevOps in Action

Case Study 1: DevOps for Microsoft HoloLens Application on GCP

Challenge: A startup used Google Cloud's free startup credits to launch an ambitious product. But when the credits expired, they faced massive costs due to inefficient network design and a lack of resource planning.

Solution: Gart audited the infrastructure, implemented CI/CD pipelines, and restructured the architecture to reduce dependency on costly services.

Outcome:

48% reduction in monthly infrastructure spend

Improved performance and deployment speed

A scalable setup ready for product launch

Lesson: Free credits can create hidden risks. A strategic DevOps partner can turn short-term wins into sustainable growth.

Case Study 2: Cutting 81% Cloud Costs with Azure Spot VMs for AI Vision

Challenge: A jewelry AI startup faced high compute bills due to heavy visual processing and machine learning workloads.

Solution: Gart moved workloads to Azure Spot VMs, refactored pipelines for fault tolerance, and automated cost monitoring.

Outcome:

81% reduction in compute costs

Zero downtime during migration

Flexible scaling for future growth

Lesson: Cost savings don’t require cutting features, just smart architecture.

Long-Term Benefits of a Cost-Effective DevOps Strategy

Summary:

Sustainable DevOps isn’t just about saving money now. It helps your business scale smarter, reduce risk, and outperform competitors over time.

1. Lower Total Cost of Ownership (TCO)

You avoid patchwork fixes, re-platforming, and costly downtime. Efficient systems cost less to operate over years, not just months.

2. Greater Reliability

Fewer outages. Better performance. Happier users. And less stress for your team.

3. Future-Proof Architecture

With scalable infrastructure, your systems evolve with your needs, not against them.

4. Better Use of Internal Resources

Your team focuses on innovation instead of fixing things or firefighting budget issues.

DevOps Cost Decision Table – Cheap vs Sustainable

Understanding the difference between cost-cutting and cost-effectiveness is key. Here’s a side-by-side comparison that outlines why strategic investment outperforms bargain-basement decisions over time.

| Criteria | Cheap DevOps Solution | Sustainable DevOps Solution |
| --- | --- | --- |
| Initial Cost | Low upfront spend | Moderate, aligned with needs and future goals |
| Scalability | Poor – requires rebuild | Built to scale |
| Compliance Readiness | Lacks safeguards | Aligned with HIPAA, GDPR, etc. |
| Maintenance & Support | Limited or absent | Included, proactive monitoring |
| Total Cost Over 12–24 Months | High due to technical debt and rework | Lower due to long-term savings |
| Business Impact | Risk of downtime, slower innovation | Faster delivery, greater stability |

Conclusion: The sustainable path pays off, not just financially but in operational resilience, scalability, and growth enablement.

Cost Optimization Checklist for IT Leaders

Use this checklist to review your DevOps and cloud setup for waste, inefficiencies, and untapped savings.

✅ Infrastructure & Cloud Usage

Are we using reserved instances or spot pricing effectively?

Are workloads appropriately sized and scheduled?

Are we auto-scaling based on demand?

✅ Monitoring & Observability

Do we track cloud costs by team or project?

Are alert thresholds in place for spending anomalies?

Are we logging usage by service tags?

✅ DevOps & Automation

Are pipelines automated to prevent manual errors?

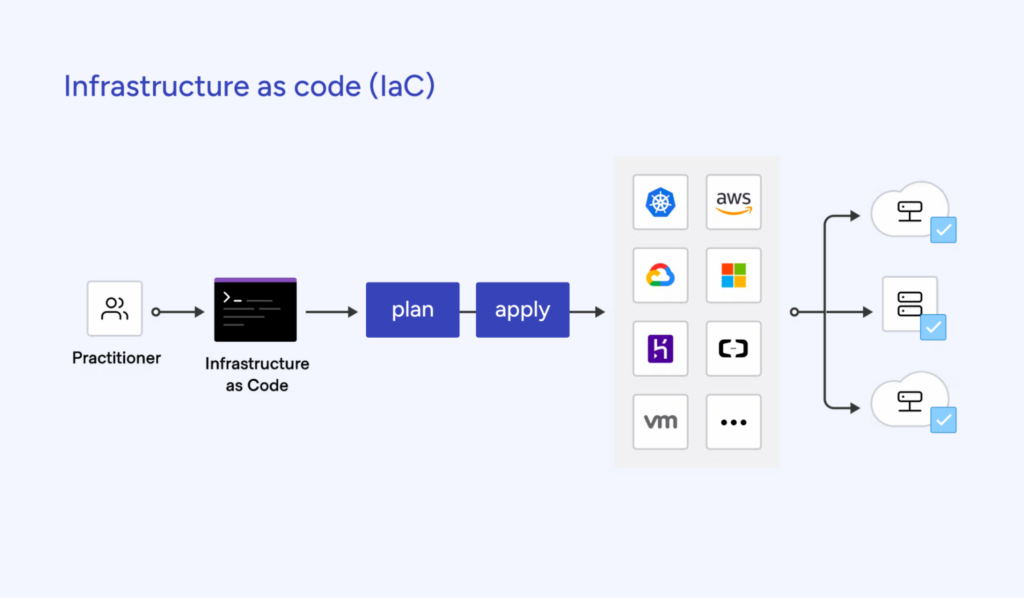

Are we deploying only what’s needed with IaC?

Are environments automatically shut down when idle?

✅ FinOps & Financial Governance

Do we review cloud spend weekly or monthly?

Are budgets and forecasts visible to Dev and Finance?

Have we assigned ownership for each cloud resource?

Conclusion

Sustainable DevOps isn't about spending less — it’s about spending smarter. At Gart Solutions, we believe that true cost-effectiveness is about creating sustainable, high-quality solutions that provide long-term value. By focusing on strategic design, smart resource utilization, and future-proofing your systems, we help you build a robust IT infrastructure that supports your business goals while keeping costs under control.

At Gart Solutions, our mission is to help you achieve IT sustainability and financial efficiency together.

Let’s build something that lasts, without overextending your budget.

Remember, indiscriminate cost-cutting can do more harm than good. A well-planned approach focused on long-term value is key to achieving sustainable IT cost reductions.