Multi-cloud Kubernetes management has moved from an experiment to a core operational concern for enterprise engineering teams. By 2026, over 87% of organizations running containers span at least two cloud providers, yet fewer than a third report feeling in control of that complexity. This guide cuts through the noise to give you a clear-eyed view of what effective multi-cloud Kubernetes management actually looks like, where the real risks live, and how to build an operating model that scales. If your team is struggling with cross-cloud visibility, cost sprawl, or inconsistent governance, Gart’s Kubernetes managed services can help you regain control without a platform rewrite.

- What Is Multi-Cloud Kubernetes Management?

- Why Enterprises Choose Multi-Cloud Kubernetes — The Real Business Case

- Cloud Adoption Soars, Multi-Cloud Reigns Supreme

- Challenges in Multi-Cloud Kubernetes Deployments

- Multi-Cloud Kubernetes Challenges

- Security Concerns

- Multi-cloud Kubernetes Use Cases

- The Real Perils of Multi-Cloud Kubernetes Management

- Multi-Cloud Kubernetes Management: The Five Core Disciplines

- Multi-Cloud Kubernetes Management Tools: Comparison Matrix (2026)

- Best Practices for Multi-Cloud Kubernetes Management in 2026

- Building a Multi-Cloud Kubernetes Operating Model

- Need Help Managing Kubernetes Across Clouds?

- Conclusion

What Is Multi-Cloud Kubernetes Management?

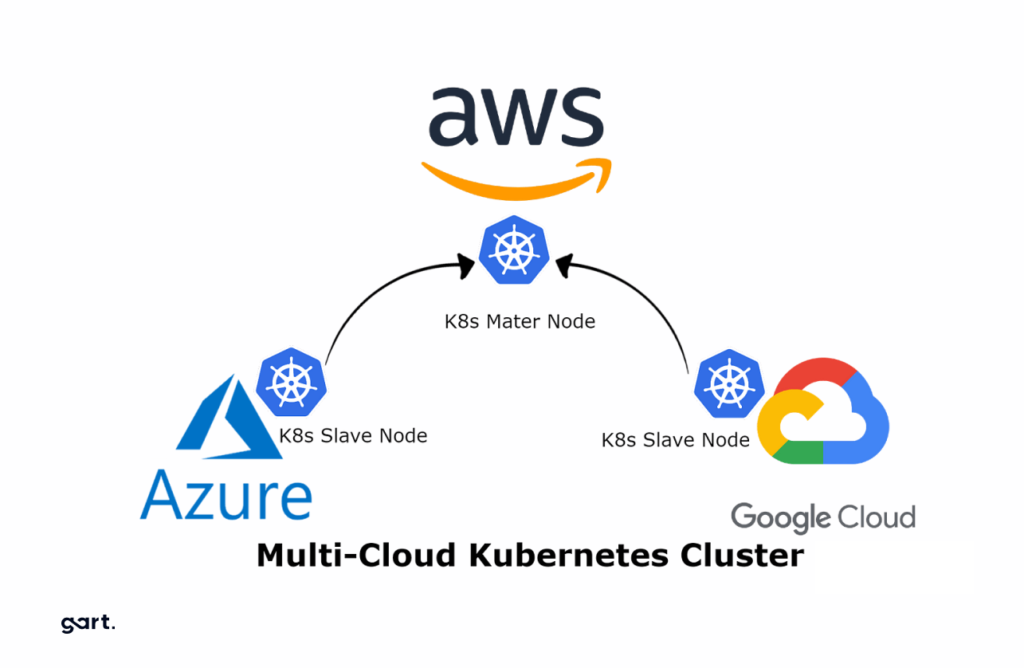

Multi-cloud Kubernetes management is the discipline of deploying, operating, observing, and governing containerized workloads across clusters that run on two or more public clouds – typically some combination of Amazon EKS, Google GKE, Microsoft AKS, and managed distributions like Red Hat OpenShift or Rancher. It encompasses cluster provisioning, workload scheduling, policy enforcement, observability pipelines, cost allocation, and security posture – all applied consistently across an environment that is, by nature, heterogeneous.

The Cloud Native Computing Foundation (CNCF) defines multi-cloud Kubernetes not as a product but as a capability – a set of organizational and technical practices that allow teams to treat cloud-provider-specific managed Kubernetes services as a common substrate. That framing matters: you are not buying multi-cloud Kubernetes, you are building it.

Key distinction: Multi-cloud Kubernetes management is not the same as hybrid cloud Kubernetes. Hybrid cloud involves private on-premises infrastructure alongside public clouds. Multi-cloud is strictly about managing workloads across two or more public cloud providers. Many enterprises operate both simultaneously, compounding the management challenge.

Why Enterprises Choose Multi-Cloud Kubernetes — The Real Business Case

Before engineering leaders can build the right operating model, they need to be honest about why their organization ended up multi-cloud in the first place. There is often a gap between the stated rationale and the actual origin story.

Legitimate Strategic Reasons

- Vendor lock-in avoidance: Preventing a single cloud provider from controlling pricing, SLAs, and product roadmaps over a multi-year horizon.

- Geographic data residency: Some cloud providers have superior coverage in specific regions, making multi-cloud necessary for compliance with GDPR, data sovereignty laws, or latency SLAs.

- Best-of-breed services: GCP’s BigQuery for analytics, AWS’s SageMaker for ML, Azure’s Microsoft Entra ID (formerly Azure Active Directory) integration for enterprise identity. No single cloud wins every capability battle.

- Resilience and disaster recovery: True active-active DR across clouds means no single provider’s outage can take down the whole service, shrinking the blast radius of a provider-wide failure.

- M&A integration: Acquisitions that bring in workloads on a different cloud create multi-cloud by default.

How Organizations Actually End Up Multi-Cloud

In practice, most enterprises discover they are multi-cloud before they decide to be. A team spins up a GCP project because a new hire came from Google. A partner integration requires workloads on Azure. A startup acquisition brings its own AWS infrastructure. The result is unplanned, ungoverned multi-cloud Kubernetes sprawl – and that is the most dangerous form of it.

Cloud Adoption Soars, Multi-Cloud Reigns Supreme

According to a recent survey, a staggering 76% of organizations utilize multiple clouds, with industries like telecom, financial services, and software leading the charge. The reasons behind this shift are clear: reducing vendor dependency (53%), managing costs (45%), and expanding disaster recovery and cloud backup options (42%).

The landscape of cloud computing is rapidly evolving, with a clear preference for multi-cloud deployments emerging. This trend is driven by a desire to avoid vendor lock-in, optimize costs, and leverage the unique strengths of different cloud providers.

As organizations embrace multi-cloud, Kubernetes has emerged as a crucial orchestration tool, enabling seamless application deployment and management across different cloud environments. However, this transition is not without its challenges.

Challenges in Multi-Cloud Kubernetes Deployments

Expertise and Experience

The share of organizations citing inadequate internal experience and expertise as a major hurdle rose 6 percentage points to 58%, indicating that IT teams are struggling to keep up with a rapidly growing Kubernetes footprint and multi-cloud operations.

Infrastructure Integration

Difficult integration with current infrastructure emerged as another significant challenge, rising 12 percentage points to 50% and highlighting the complexity of harmonizing Kubernetes with existing systems.

Application Mobility

While one of the key benefits of Kubernetes is application mobility, 21% of respondents reported lack of app mobility as a concern, likely due to the use of proprietary cloud services or unique features that hinder portability across multiple clouds.

Security Concerns

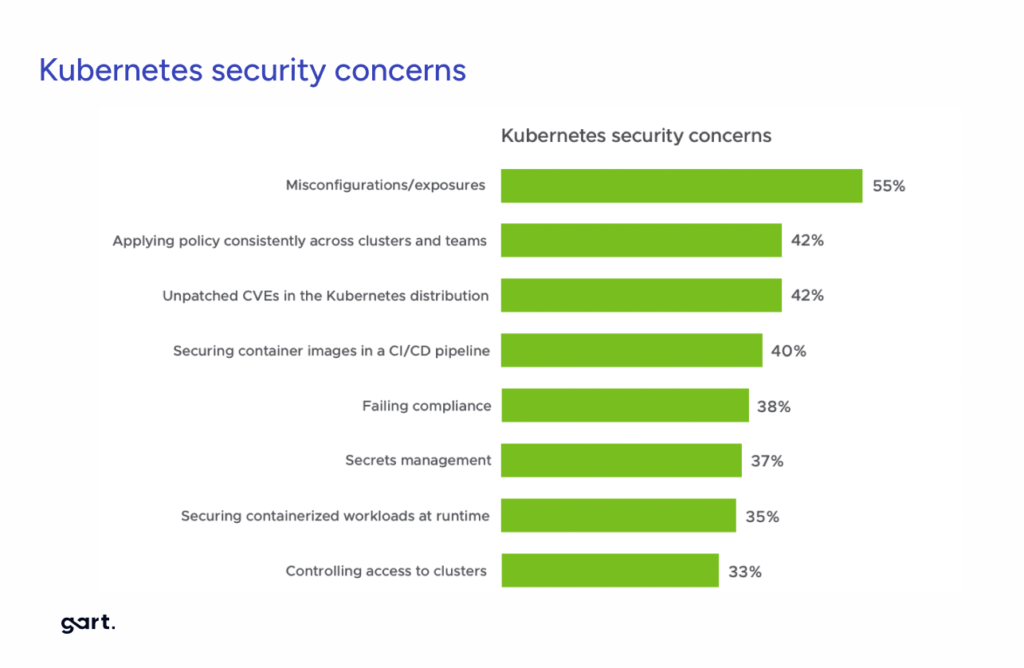

A staggering 97% of stakeholders reported ongoing security challenges, with misconfigurations and exposures (55%) the top concern. Applying consistent policies across clusters and teams (42%), unpatched CVEs (42%), failing compliance (38%), and controlling access to clusters (33%) were also significant worries.

Multi-Cloud Kubernetes Challenges

Kubernetes is well suited to multi-cloud environments, but selecting the right Kubernetes distribution is crucial. This year’s survey reveals a growing focus on distributions that:

- Are easy to deploy, operate, and maintain (72%)

- Function well in hybrid environments (55%)

- Offer commercial support (49%)

Choosing the Right Kubernetes Distribution

Organizations are increasingly prioritizing ease of deployment, operation, and maintenance (72%), hybrid cloud compatibility (55%), and availability of commercial support (55%) when selecting a Kubernetes distribution. Vendor maturity, trust, and modularity are also crucial considerations.

Embracing Automation and Policy-Based Management

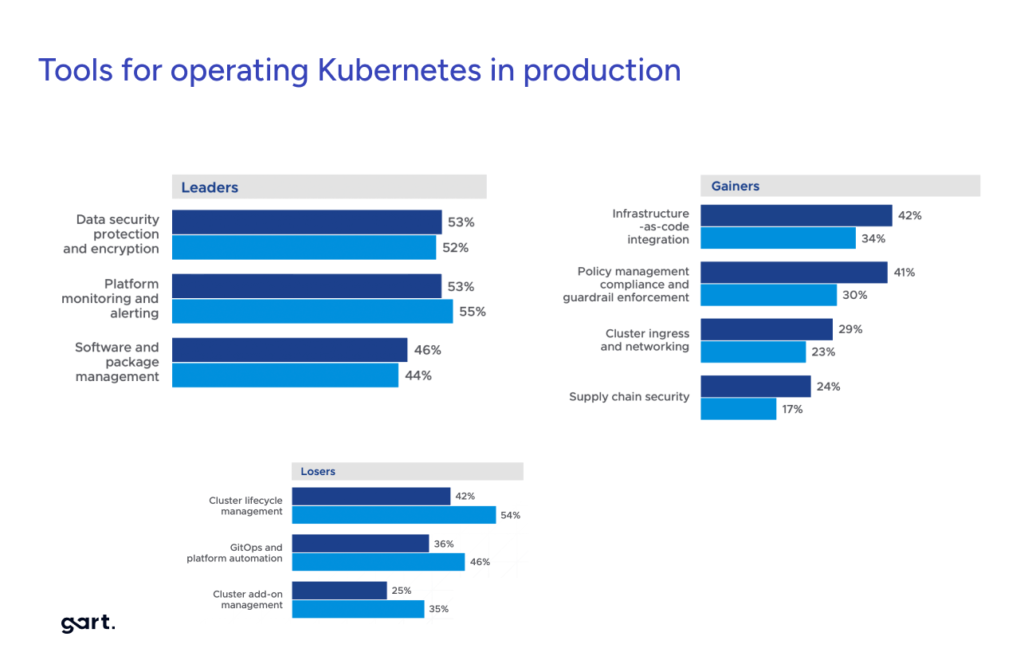

Three capabilities have gained significant traction as organizations automate and streamline multi-cloud Kubernetes operations: infrastructure-as-code integration (42%); policy management, compliance, and guardrail enforcement (41%); and cluster ingress and networking (29%).

Investing in Security Tools

With 53% of respondents willing to pay for data security, protection, and encryption tools, organizations are recognizing the importance of robust security solutions in the multi-cloud landscape.

Adopting Service Mesh

The survey revealed that 92% of organizations have deployed some type of service mesh, underscoring its growing importance for enterprise application connectivity in multi-cloud environments.

Security Concerns

Security remains the top concern. The focus has shifted from securing individual deployments to maintaining security across multi-cluster, multi-cloud environments, where misconfigurations and exposures (55%) remain the primary threat.

Tools for Success

To succeed with multi-cloud Kubernetes, you need the right tools for the job. The tools stakeholders view as useful shifted significantly this year: policy-based management, infrastructure as code, and cluster ingress gained the most ground, and stakeholders are increasingly willing to pay for critical tools.

Organizations are increasingly prioritizing:

- Data Security Tools (53%)

- Platform Monitoring and Alerting (53%)

- Infrastructure as Code (42%)

- Policy-Based Management (41%)

- Service Mesh (92% have already deployed one)

The move towards multi-cloud and Kubernetes is transforming the way organizations approach application development and deployment. By addressing challenges like skills gaps and security concerns, and leveraging the right tools, businesses can unlock the full potential of this powerful combination.

Multi-cloud Kubernetes Use Cases

High Availability and Disaster Recovery

Organizations can leverage multi-cloud Kubernetes to distribute their applications and workloads across multiple cloud providers, ensuring high availability and resilience against provider-specific outages or disasters. This aligns with the stated reason of “expanding disaster recovery and cloud backup options” for adopting multi-cloud (42% of respondents).

Vendor Lock-in Avoidance

One of the top reasons cited for using multiple clouds is reducing vendor dependency (53% of respondents). By deploying applications on Kubernetes across multiple cloud providers, organizations can avoid vendor lock-in and maintain flexibility in their cloud strategy.

Cost Optimization

Managing costs was cited as a reason for multi-cloud adoption by 45% of respondents. Combined with multi-cluster scheduling and cost-allocation tooling, Kubernetes can help organizations optimize costs by shifting workloads across clouds based on resource availability, pricing, and performance requirements.

Global Presence and Data Sovereignty

For organizations with a global customer base or strict data sovereignty requirements, a multi-cloud Kubernetes approach can enable them to distribute their applications and data across multiple regions or cloud providers, ensuring compliance and minimizing latency.

Cloud Migration and Hybrid Environments

As organizations migrate workloads from on-premises to the cloud or between different cloud providers, Kubernetes can facilitate a smooth transition by providing a consistent platform for application deployment and management across hybrid and multi-cloud environments.

Edge Computing

The survey noted that 26% of respondents plan to add or increase distributed edge deployments in the next year. Kubernetes can manage and orchestrate edge workloads across multiple cloud providers and on-premises environments, enabling low-latency processing closer to where data is generated.

Kubernetes Distribution Selection for Multi-Cloud:

- Easy to deploy, operate and maintain (72%)

- Works in a hybrid cloud environment (55%)

- Availability of commercial support/professional services (55%)

- Vendor maturity and trust (46%)

- Leverage any Kubernetes across clouds without lock-in (37%)

- Modularity and works at the edge (around 25% each)

The Real Perils of Multi-Cloud Kubernetes Management

The “peril” framing is not hyperbole. Multi-cloud Kubernetes introduces compounding failure modes that would not exist in a single-cloud environment. Understanding them precisely is the first step to mitigating them.

Observability Fragmentation

Metrics, logs, and traces live in separate provider-native systems (CloudWatch, Google Cloud Monitoring, Azure Monitor). Building a unified view requires significant instrumentation work.

Security Posture Drift

IAM models differ fundamentally across AWS, GCP, and Azure. A policy that is secure on one cloud may create an exposure on another if your governance layer doesn’t abstract it correctly.

Cost Invisibility

Cloud providers use different billing dimensions, discount mechanisms, and tagging schemas. Cross-cloud cost attribution without a dedicated FinOps practice routinely results in 30–40% waste.
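Much of that attribution gap is mechanical: the same ownership metadata is spelled differently in each provider’s billing data. The sketch below is a minimal illustration, not a FinOps tool. It assumes hypothetical billing line items already exported into a common shape (`provider`, `tags`, `cost`) and a hypothetical list of ownership keys per provider, and it normalizes them into a single team dimension.

```python
# Hypothetical ownership keys seen in each provider's billing export.
# GCP label keys must be lowercase, which is one reason schemas drift apart.
OWNER_KEYS = {
    "aws": ["Team", "team", "CostCenter"],
    "azure": ["team", "Team", "cost-center"],
    "gcp": ["team", "cost_center"],
}


def owning_team(provider: str, tags: dict) -> str:
    """Return a canonical, lowercase team name, or 'unallocated' if none is found."""
    for key in OWNER_KEYS.get(provider, []):
        value = tags.get(key)
        if value:
            # Collapse 'Payments', 'payments ' and 'PAYMENTS' into one bucket.
            return value.strip().lower().replace(" ", "-")
    return "unallocated"


def allocate(line_items: list) -> dict:
    """Sum cost per canonical team across all providers."""
    totals: dict = {}
    for item in line_items:
        team = owning_team(item["provider"], item["tags"])
        totals[team] = totals.get(team, 0.0) + item["cost"]
    return totals


items = [
    {"provider": "aws", "tags": {"Team": "Payments"}, "cost": 120.0},
    {"provider": "gcp", "tags": {"team": "payments"}, "cost": 80.0},
    {"provider": "azure", "tags": {}, "cost": 40.0},
]
print(allocate(items))  # -> {'payments': 200.0, 'unallocated': 40.0}
```

Reporting untagged spend as an explicit `unallocated` bucket, rather than dropping it, keeps the size of the attribution gap visible week over week.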

API Inconsistency

Even within Kubernetes, managed services diverge on node autoscaling behavior, storage class defaults, networking plugins, and version update cadences.

Egress Cost Shock

Inter-cloud data transfer costs are among the most underestimated budget items. Workloads that communicate across provider boundaries can generate egress bills that dwarf compute costs.
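A back-of-the-envelope estimate is usually enough to spot a flow worth re-architecting. This minimal sketch assumes a flat placeholder rate of $0.09 per GB and hypothetical flows; real pricing is tiered and differs by provider, region, and destination, so substitute figures from your own price sheets.

```python
# Assumption: a flat placeholder rate. Real inter-cloud egress pricing is tiered
# and varies by provider, region, and destination; check your own price sheets.
ASSUMED_EGRESS_USD_PER_GB = 0.09


def monthly_egress_cost(gb_per_day: float,
                        usd_per_gb: float = ASSUMED_EGRESS_USD_PER_GB,
                        days: int = 30) -> float:
    """Estimate one month of egress for a flow that crosses a provider boundary."""
    return gb_per_day * days * usd_per_gb


# Hypothetical cross-cloud flows: (source service, destination, GB per day).
flows = [
    ("orders-api (AWS)", "analytics (GCP)", 500.0),
    ("search (GCP)", "catalog-db (AWS)", 40.0),
]

for src, dst, gb in flows:
    print(f"{src} to {dst}: ${monthly_egress_cost(gb):,.2f}/month")
```

At that assumed rate, a single service pushing 500 GB a day across a provider boundary adds roughly $1,350 a month before any compute is counted, which is why co-locating chatty services usually beats splitting them across clouds.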

Platform Engineering Overload

Each additional cloud multiplies the surface area that platform teams must support—CI/CD pipelines, IaC modules, operator playbooks, and runbooks all need cloud-specific variants.

Multi-Cloud Kubernetes Management: The Five Core Disciplines

Rather than thinking about multi-cloud Kubernetes as a single problem, experienced platform engineering leaders decompose it into five distinct disciplines, each requiring its own tooling decisions and team ownership.

1. Cluster Lifecycle Management

Provisioning, upgrading, and decommissioning clusters consistently across clouds. The challenge here is not creating clusters (that problem is largely solved) but managing the full lifecycle, including Kubernetes version upgrades, node pool rotation, and teardown without leaving orphaned cloud resources. Infrastructure-as-Code with Terraform or Pulumi, combined with GitOps workflows using Flux or Argo CD, provides the most reliable automation layer.
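Version upgrades are where fleet-wide consistency tends to break first. Kubernetes does not support skipping minor versions when upgrading a control plane, so a fleet on mixed versions needs a different number of hops per cluster. A minimal planning sketch, assuming hypothetical cluster names and that only the control-plane minor version matters (node pool and add-on compatibility are out of scope):

```python
def parse_minor(version: str) -> tuple:
    """'1.29' or 'v1.29.3' -> (1, 29)."""
    major, minor = version.lstrip("v").split(".")[:2]
    return int(major), int(minor)


def upgrade_path(current: str, target: str) -> list:
    """Sequential minor-version hops, since control planes cannot skip minors."""
    cur_major, cur_minor = parse_minor(current)
    tgt_major, tgt_minor = parse_minor(target)
    if cur_major != tgt_major:
        raise ValueError("major version changes are out of scope for this sketch")
    if tgt_minor < cur_minor:
        raise ValueError("downgrades are not supported")
    return [f"{cur_major}.{m}" for m in range(cur_minor + 1, tgt_minor + 1)]


def fleet_plan(clusters: dict, target: str) -> dict:
    """Per-cluster upgrade hops; an empty list means the cluster is already at target."""
    return {name: upgrade_path(version, target) for name, version in clusters.items()}


# Hypothetical fleet spread across three providers.
clusters = {"eks-prod": "1.28", "gke-prod": "1.30", "aks-prod": "v1.29.4"}
print(fleet_plan(clusters, "1.30"))
# -> {'eks-prod': ['1.29', '1.30'], 'gke-prod': [], 'aks-prod': ['1.30']}
```

Generating the plan from the fleet inventory, rather than tracking it by hand, makes the laggard clusters obvious: here the EKS cluster needs two upgrade windows while the others need one or none.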

2. Workload Placement and Scheduling

Deciding which workloads run where — and enabling automated placement decisions based on cost, latency, compliance zone, or cloud-specific service availability. Tools like Karmada and Open Cluster Management (part of the Linux Foundation ecosystem) provide federated scheduling capabilities that abstract cloud-specific APIs.
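Placement engines generally follow a filter-then-score pattern: discard clusters that violate hard constraints, then rank the rest. The sketch below illustrates that pattern in plain Python; it is not the Karmada or Open Cluster Management API, and the cluster attributes, prices, and weights are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Cluster:
    name: str
    cloud: str
    compliance_zone: str       # hypothetical label, e.g. "eu" or "us"
    usd_per_vcpu_hour: float   # blended compute price (assumed input)
    latency_ms: float          # measured latency to the workload's users


@dataclass(frozen=True)
class Workload:
    name: str
    allowed_zones: frozenset
    max_latency_ms: float


def place(workload: Workload, clusters: list, cost_weight: float = 0.5,
          latency_weight: float = 0.5) -> Optional[str]:
    """Filter on hard constraints, then pick the cluster with the lowest weighted score."""
    # Hard constraints: compliance zone and latency budget are never traded off.
    eligible = [c for c in clusters
                if c.compliance_zone in workload.allowed_zones
                and c.latency_ms <= workload.max_latency_ms]
    if not eligible:
        return None
    # Normalize each dimension to [0, 1] so the weights are comparable.
    max_cost = max(c.usd_per_vcpu_hour for c in eligible) or 1.0
    max_latency = max(c.latency_ms for c in eligible) or 1.0

    def score(c: Cluster) -> float:
        return (cost_weight * c.usd_per_vcpu_hour / max_cost
                + latency_weight * c.latency_ms / max_latency)

    return min(eligible, key=score).name


clusters = [
    Cluster("eks-eu", "aws", "eu", 0.040, 30.0),
    Cluster("gke-eu", "gcp", "eu", 0.035, 45.0),
    Cluster("aks-us", "azure", "us", 0.030, 20.0),
]
checkout = Workload("checkout", frozenset({"eu"}), max_latency_ms=50.0)
print(place(checkout, clusters))                                       # -> eks-eu
print(place(checkout, clusters, cost_weight=0.9, latency_weight=0.1))  # -> gke-eu
```

Keeping compliance and latency as filters rather than weighted terms is deliberate: no cost saving should be able to move a workload out of its allowed zone, even though the cheapest cluster here (`aks-us`) is never considered for an EU-only workload.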

3. Unified Observability

Building a single pane of glass for metrics, logs, traces, and events across all clusters regardless of where they run. OpenTelemetry has emerged as the de facto standard for instrumentation, with Prometheus + Thanos or Grafana Mimir handling federated metrics storage. The key architectural decision is whether to centralize observability data in a single cloud (with the associated egress cost) or to keep it distributed and federate queries.
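Whichever option you choose, every series must carry labels identifying its source cluster and cloud; without them, federated queries cannot tell otherwise identical series from different clusters apart. Prometheus handles this with `external_labels`, which Thanos relies on to distinguish sources. The sketch below mimics the idea on hypothetical in-memory samples rather than calling any real query API.

```python
from collections import defaultdict


def with_source_labels(samples: list, cluster: str, cloud: str) -> list:
    """Attach cluster/cloud labels, similar in spirit to Prometheus external_labels."""
    return [{"labels": {**s["labels"], "cluster": cluster, "cloud": cloud},
             "value": s["value"]} for s in samples]


def sum_by(samples: list, label: str) -> dict:
    """Aggregate values by one label, like PromQL's `sum by (label)`."""
    totals: dict = defaultdict(float)
    for s in samples:
        totals[s["labels"][label]] += s["value"]
    return dict(totals)


# Hypothetical request-rate samples scraped independently in two clouds.
eks = with_source_labels([{"labels": {"job": "api"}, "value": 120.0}], "eks-prod", "aws")
gke = with_source_labels([{"labels": {"job": "api"}, "value": 80.0},
                          {"labels": {"job": "worker"}, "value": 5.0}], "gke-prod", "gcp")
merged = eks + gke

print(sum_by(merged, "job"))    # -> {'api': 200.0, 'worker': 5.0}
print(sum_by(merged, "cloud"))  # -> {'aws': 120.0, 'gcp': 85.0}
```

The same merged data answers both the service view ("how busy is the API overall?") and the provider view ("how is load split across clouds?"), which is the practical payoff of labeling at the source.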

4. Security and Policy Governance

Enforcing consistent security policies (network policies, RBAC rules, admission control, secret management, and image scanning) across every cluster. Open Policy Agent (OPA) with Gatekeeper and Kyverno are the most widely adopted policy engines. Secrets management requires a vendor-agnostic layer such as HashiCorp Vault or the External Secrets Operator, so that workloads are not bound to any one cloud’s native secret store or KMS.
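The same decoupling applies inside application code: depend on a narrow interface and treat the backing store as a deployment detail. External Secrets Operator applies this idea at the cluster level by syncing from many backends into Kubernetes Secrets; the sketch below shows the pattern in application code instead. `SecretStore`, the in-memory backend, the fallback wrapper, and the secret path are all hypothetical.

```python
from typing import Protocol


class SecretStore(Protocol):
    """The only interface application code is allowed to depend on."""
    def get(self, path: str) -> str: ...


class InMemoryStore:
    """Test stand-in; a real backend might wrap Vault or a cloud secret manager."""
    def __init__(self, data: dict):
        self._data = dict(data)

    def get(self, path: str) -> str:
        if path not in self._data:
            raise KeyError(path)
        return self._data[path]


class FallbackStore:
    """Tries backends in order, e.g. a primary store and a replica in another cloud."""
    def __init__(self, *stores: SecretStore):
        self._stores = stores

    def get(self, path: str) -> str:
        for store in self._stores:
            try:
                return store.get(path)
            except KeyError:
                continue
        raise KeyError(path)


def database_url(store: SecretStore) -> str:
    # No provider SDK here: moving clouds means swapping the store, not this code.
    password = store.get("payments/db-password")
    return f"postgres://payments:{password}@db.internal:5432/payments"


primary = InMemoryStore({})
replica = InMemoryStore({"payments/db-password": "s3cret"})
print(database_url(FallbackStore(primary, replica)))
# -> postgres://payments:s3cret@db.internal:5432/payments
```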

5. FinOps and Cost Governance

Multi-cloud Kubernetes cost governance requires three layers: cloud-level cost allocation (tagging, commitment coverage), cluster-level showback (Kubecost, OpenCost), and workload-level optimization (right-sizing, spot/preemptible node usage). The FinOps Foundation’s FinOps Framework provides a maturity model that maps directly to multi-cloud Kubernetes environments and is worth adopting as an organizational standard.
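Cluster-level showback ultimately means dividing a node’s price among the workloads running on it. The sketch below uses a simplified, requests-only model with an assumed 50/50 CPU/memory price split and hypothetical figures. OpenCost and Kubecost use more detailed allocation models, so treat this as an illustration of the idea rather than a reproduction of either tool.

```python
def allocate_node_cost(node_usd_per_hour: float, node_vcpu: float, node_mem_gib: float,
                       pods: list, cpu_share: float = 0.5) -> dict:
    """Split one node's hourly cost across namespaces by resource requests.

    Assumes the node price splits cpu_share / (1 - cpu_share) between CPU and
    memory. Capacity nobody requested is reported as 'idle' instead of hidden.
    """
    cpu_cost = node_usd_per_hour * cpu_share
    mem_cost = node_usd_per_hour * (1 - cpu_share)
    totals: dict = {}
    requested_cpu = requested_mem = 0.0
    for pod in pods:
        cost = (cpu_cost * pod["cpu"] / node_vcpu
                + mem_cost * pod["mem_gib"] / node_mem_gib)
        totals[pod["namespace"]] = totals.get(pod["namespace"], 0.0) + cost
        requested_cpu += pod["cpu"]
        requested_mem += pod["mem_gib"]
    totals["idle"] = (cpu_cost * (node_vcpu - requested_cpu) / node_vcpu
                      + mem_cost * (node_mem_gib - requested_mem) / node_mem_gib)
    return totals


# Hypothetical node: $0.40/hour, 4 vCPU, 16 GiB, running two namespaces' pods.
pods = [
    {"namespace": "checkout", "cpu": 1.0, "mem_gib": 4.0},
    {"namespace": "search", "cpu": 2.0, "mem_gib": 2.0},
]
for ns, usd in allocate_node_cost(0.40, 4, 16, pods).items():
    print(f"{ns}: ${usd:.3f}/h")
```

Surfacing `idle` as its own line item is the useful design choice here: in this example it is the largest single bucket, which is exactly the signal right-sizing work needs.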

Multi-Cloud Kubernetes Management Tools: Comparison Matrix (2026)

The tooling landscape has matured significantly. Below is a practical comparison of the leading platforms for multi-cloud Kubernetes management, across the dimensions that matter most to engineering leaders.

| Tool / Platform | Primary Function | Multi-Cloud Support | Open Source? | Best For |
|---|---|---|---|---|
| Rancher (SUSE) | Cluster lifecycle & management UI | EKS, GKE, AKS, on-prem | ✅ Apache 2.0 | Teams wanting a single control plane with strong UI |
| Karmada | Federated workload scheduling | Any K8s-conformant cluster | ✅ Apache 2.0 | Advanced multi-cluster placement policies |
| Anthos / GKE Enterprise (Google) | Managed multi-cloud platform | GKE, EKS, AKS, on-prem | ❌ Commercial | GCP-primary orgs extending to other clouds |
| Azure Arc | Governance & policy projection | Any K8s cluster | ❌ Commercial | Azure-primary orgs, strong Azure Policy integration |
| Flux CD | GitOps continuous delivery | Any K8s cluster | ✅ Apache 2.0 | Multi-cluster GitOps with minimal operator overhead |
| Kubecost / OpenCost | Kubernetes cost allocation | Any K8s cluster | OpenCost: ✅ Apache 2.0; Kubecost: ❌ Commercial (built on OpenCost) | Namespace/team-level cost showback |
| Crossplane | Cloud infrastructure as K8s APIs | AWS, GCP, Azure, others | ✅ Apache 2.0 | Teams building an internal developer platform on top of K8s |
| Istio / Cilium | Service mesh & networking | Any K8s cluster | ✅ Apache 2.0 | mTLS, traffic management, and network policy across clusters |

Best Practices for Multi-Cloud Kubernetes Management in 2026

These are the practices that separate organizations that have tamed multi-cloud Kubernetes complexity from those that are continuously firefighting.

Establish a “Golden Path” for Each Cloud Provider

Rather than allowing teams to make arbitrary technology choices, define an opinionated default stack for each cloud: which CNI plugin, which storage class, which logging agent, which autoscaler configuration. Document deviations through a formal RFC process. The goal is not rigidity – it is reducing the number of permutations your platform team must support.
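A golden path is easiest to enforce when it is written down as data that can be checked automatically. In this minimal sketch the per-cloud defaults are hypothetical placeholders, and `approved_rfcs` stands in for whatever record your RFC process produces.

```python
# Hypothetical golden-path defaults. The values are illustrative placeholders,
# not recommendations; substitute whatever your platform team standardizes on.
GOLDEN_PATHS = {
    "aws": {"cni": "aws-vpc-cni", "storage_class": "gp3", "log_agent": "fluent-bit"},
    "gcp": {"cni": "dataplane-v2", "storage_class": "standard-rwo", "log_agent": "fluent-bit"},
    "azure": {"cni": "azure-cni", "storage_class": "managed-csi", "log_agent": "fluent-bit"},
}


def deviations(cloud: str, cluster_config: dict,
               approved_rfcs: frozenset = frozenset()) -> dict:
    """Settings that differ from the golden path and are not covered by an approved RFC."""
    golden = GOLDEN_PATHS[cloud]
    return {
        key: {"actual": cluster_config.get(key), "expected": expected}
        for key, expected in golden.items()
        if cluster_config.get(key) != expected and key not in approved_rfcs
    }


eks_prod = {"cni": "calico", "storage_class": "gp3", "log_agent": "fluent-bit"}
print(deviations("aws", eks_prod))
# -> {'cni': {'actual': 'calico', 'expected': 'aws-vpc-cni'}}
print(deviations("aws", eks_prod, approved_rfcs=frozenset({"cni"})))  # -> {}
```

Run in CI against every cluster definition, a check like this turns "document deviations through an RFC" from a convention into a gate: an undocumented deviation fails the build, while an approved one passes.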

Treat GitOps as Non-Negotiable

Every cluster state change in any cloud should flow through a Git repository, reviewed as code, and reconciled by a GitOps operator. This is the single most effective way to prevent configuration drift between clusters and maintain an auditable change history. Argo CD and Flux are both strong choices; the key is picking one and enforcing it consistently. For more on building a robust GitOps foundation, see our guide on GitOps best practices for enterprise Kubernetes.
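Drift detection is, at its core, a diff between the state declared in Git and the state observed in the cluster. Argo CD and Flux implement this with far more care (for example, ignoring fields the API server populates), so the recursive comparison below is only a sketch of the concept on hypothetical manifests.

```python
def diff_state(desired: dict, live: dict, path: str = "") -> list:
    """Recursively list differences between desired (Git) and live (cluster) state."""
    drift = []
    for key in sorted(set(desired) | set(live)):
        here = f"{path}.{key}" if path else key
        if key not in live:
            drift.append(f"missing in cluster: {here}")
        elif key not in desired:
            drift.append(f"not in Git: {here}")
        elif isinstance(desired[key], dict) and isinstance(live[key], dict):
            drift.extend(diff_state(desired[key], live[key], here))
        elif desired[key] != live[key]:
            drift.append(f"changed: {here} (git={desired[key]!r}, live={live[key]!r})")
    return drift


# Hypothetical: someone scaled the Deployment by hand and added a "hotfix" annotation.
desired = {"spec": {"replicas": 3, "image": "api:1.4.2"}}
live = {"spec": {"replicas": 5, "image": "api:1.4.2"},
        "metadata": {"annotations": {"hotfix": "true"}}}
for line in diff_state(desired, live):
    print(line)
# -> not in Git: metadata
# -> changed: spec.replicas (git=3, live=5)
```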

Adopt a FinOps Practice from Day One

The organizations that manage multi-cloud Kubernetes costs effectively share one trait: they instrument costs at the workload level before they have a cost problem, not after. Deploy Kubecost or OpenCost into every cluster on day one. Define team-level budgets and automate alerts. Establish a weekly FinOps review cadence that includes both platform engineers and application owners.
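Budget alerting can start simple: compare month-to-date spend per team against budget thresholds. A minimal sketch, assuming spend has already been aggregated per team (for example, by a cost-allocation tool) and using hypothetical budgets with 80% and 100% thresholds:

```python
def budget_alerts(spend_by_team: dict, budgets: dict, thresholds=(0.8, 1.0)) -> list:
    """Return (team, highest threshold crossed, spend/budget ratio) for each alerting team."""
    alerts = []
    for team, budget in budgets.items():
        spent = spend_by_team.get(team, 0.0)
        crossed = [t for t in thresholds if spent >= budget * t]
        if crossed:
            alerts.append((team, max(crossed), round(spent / budget, 2)))
    return alerts


# Hypothetical month-to-date spend and monthly budgets per team, in USD.
spend = {"payments": 9000.0, "search": 4100.0, "ml": 12500.0}
budgets = {"payments": 10000.0, "search": 8000.0, "ml": 12000.0}
print(budget_alerts(spend, budgets))
# -> [('payments', 0.8, 0.9), ('ml', 1.0, 1.04)]
```

The output doubles as the agenda for the weekly FinOps review: each tuple names a team, how serious the overrun is, and by how much.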

Use Admission Controllers as Your Last Line of Defense

Network policies, RBAC, and image scanning catch problems at specific layers. Admission controllers, implemented via OPA Gatekeeper or Kyverno, enforce policies at the API server level and are cloud-agnostic by design. Define your policies as code in a shared repository, test them in CI, and promote them through the same GitOps workflow as your application manifests.
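Because policies live in a repository, they can be unit-tested like any other code. Gatekeeper expresses policies in Rego and Kyverno in YAML; the Python below uses neither syntax. It is only a sketch of the kind of rules these engines typically enforce (a required `team` label, pinned image tags, resource limits), written so the logic and its CI tests are easy to read. The rules and the label name are hypothetical examples.

```python
def violations(pod: dict) -> list:
    """Check a Pod manifest (parsed into a dict) against a few example rules."""
    problems = []
    if "team" not in pod.get("metadata", {}).get("labels", {}):
        problems.append("metadata.labels.team is required")
    for c in pod.get("spec", {}).get("containers", []):
        name = c.get("name", "<unnamed>")
        # Look only at the last path segment so a registry port ("host:5000/x")
        # is not mistaken for a tag. No tag, or ':latest', is not reproducible.
        last = c.get("image", "").rsplit("/", 1)[-1]
        if "@" not in last and (":" not in last or last.endswith(":latest")):
            problems.append(f"container {name}: pin an image tag or digest")
        limits = c.get("resources", {}).get("limits", {})
        if "cpu" not in limits or "memory" not in limits:
            problems.append(f"container {name}: cpu and memory limits are required")
    return problems


def admit(pod: dict) -> tuple:
    """Admission decision: allowed only if there are no violations."""
    problems = violations(pod)
    return (not problems, problems)


good = {"metadata": {"labels": {"team": "payments"}},
        "spec": {"containers": [{"name": "api",
                                 "image": "registry.example.com:5000/api:1.4.2",
                                 "resources": {"limits": {"cpu": "500m", "memory": "256Mi"}}}]}}
bad = {"metadata": {}, "spec": {"containers": [{"name": "api", "image": "api:latest"}]}}

print(admit(good))     # -> (True, [])
print(admit(bad)[0])   # -> False
```

Fixtures like `good` and `bad` are the CI tests in miniature: every new rule ships with at least one manifest it must accept and one it must reject.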

Design for Cross-Cloud Failure, Not Just Cloud Failure

Most DR planning addresses single-cloud failures. Multi-cloud Kubernetes enables a qualitatively different resilience posture: workloads can shift not just to a different region, but to a different provider. This requires investment in provider-agnostic service discovery (for example, via a shared service mesh or a federated DNS layer) and rigorous runbook testing that simulates a full provider outage, not just an AZ outage.
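Two questions drive a provider-level failover design: where does traffic go when a provider fails, and can the survivors carry it? The sketch below answers both for hypothetical weights and capacities. It models a weighted DNS layer conceptually and does not call any real DNS or service mesh API.

```python
def route_weights(configured: dict, healthy: set) -> dict:
    """Recompute per-provider traffic shares after health checks.

    Unhealthy providers drop to 0 and their share is redistributed
    proportionally to the remaining healthy providers.
    """
    live = {p: w for p, w in configured.items() if p in healthy and w > 0}
    total = sum(live.values())
    if total == 0:
        raise RuntimeError("no healthy provider available")
    return {p: live.get(p, 0) / total for p in configured}


def survives_provider_loss(capacity_rps: dict, peak_rps: float) -> dict:
    """For each provider, can the others absorb peak load if it disappears?"""
    return {down: sum(c for p, c in capacity_rps.items() if p != down) >= peak_rps
            for down in capacity_rps}


weights = {"aws": 60, "gcp": 40}
print(route_weights(weights, healthy={"aws", "gcp"}))  # -> {'aws': 0.6, 'gcp': 0.4}
print(route_weights(weights, healthy={"gcp"}))         # -> {'aws': 0.0, 'gcp': 1.0}

# Hypothetical capacity per provider vs. a peak of 800 requests per second.
print(survives_provider_loss({"aws": 600, "gcp": 400, "azure": 300}, peak_rps=800))
# -> {'aws': False, 'gcp': True, 'azure': True}
```

The second check is the one that full-provider outage drills tend to expose: in this example, rerouting works perfectly, yet losing AWS leaves only 700 rps of capacity for an 800 rps peak.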

Building a Multi-Cloud Kubernetes Operating Model

Technology choices are only half the challenge. The organizations that succeed with multi-cloud Kubernetes management invest equally in the operating model that surrounds the technology.

Platform Engineering Team Structure

The most effective structure is a centralized Platform Engineering team that owns the cluster lifecycle, the developer platform, and the “golden paths,” with embedded cloud specialists who maintain deep expertise in each provider’s managed Kubernetes service. Avoid the model where each product team owns its own clusters: it leads to divergence, duplicated effort, and significantly higher operational cost.

For organizations moving in this direction, our platform engineering services provide the team augmentation and architecture guidance needed to establish this model quickly.

Runbook Standardization

Every operational procedure that a platform team must perform (cluster upgrade, node drain, incident response, capacity scaling) should have a cloud-generic runbook with cloud-specific appendices. This significantly reduces MTTD (mean time to detect) and MTTR (mean time to recover) when incidents occur on clouds where the responding engineer has less daily experience.

Metrics That Actually Matter

Stop measuring cluster count. Start measuring these outcomes across your entire multi-cloud Kubernetes estate:

- P95 deployment lead time — from commit to production, across all clusters

- Policy compliance rate — percentage of namespaces passing all admission controller checks

- Node utilization — CPU and memory requests vs. allocatable capacity, per cloud

- Cross-cloud egress cost — tracked weekly, attributed by service

- MTTR per cluster — identifies which cloud environments are systematically harder to recover
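These metrics are simple to compute once the raw data is collected; the hard part is collecting it consistently across clouds. A minimal sketch with hypothetical inputs, using the nearest-rank definition of the 95th percentile (interpolating definitions can give slightly different values):

```python
def p95(values: list) -> float:
    """Nearest-rank 95th percentile."""
    if not values:
        raise ValueError("no data")
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n), 1-based
    return ordered[rank - 1]


def compliance_rate(namespace_passed: dict) -> float:
    """Share of namespaces whose workloads passed every admission check."""
    return sum(1 for ok in namespace_passed.values() if ok) / len(namespace_passed)


def request_utilization(requested: float, allocatable: float) -> float:
    """Requested resources as a share of allocatable capacity."""
    return requested / allocatable


# Hypothetical commit-to-production lead times, in minutes, across all clusters.
lead_times = [12, 15, 9, 30, 22, 18, 14, 11, 95, 16, 13, 17, 20, 19, 10, 21, 25, 28, 40, 12]
print(p95(lead_times))  # -> 40

print(compliance_rate({"payments": True, "search": True, "legacy": False, "ml": True}))  # -> 0.75

# Hypothetical (requested vCPU, allocatable vCPU) per cloud.
for cloud, (req, alloc) in {"aws": (300, 400), "gcp": (90, 200)}.items():
    print(cloud, request_utilization(req, alloc))
# -> aws 0.75
# -> gcp 0.45
```

Note how the single 95-minute outlier does not set the P95 here, while a per-cloud utilization split immediately shows which provider is carrying idle capacity.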

Need Help Managing Kubernetes Across Clouds?

Gart Solutions works with enterprise engineering teams to design, implement, and operate multi-cloud Kubernetes environments—without the years of trial and error. We bring proven architecture patterns, hands-on platform engineering expertise, and managed support so your team can focus on delivering product value.

Whether you’re just starting to plan a multi-cloud strategy or you’re inheriting a sprawling environment that needs governance and cost control, we’ve done this before.

Conclusion

As the multi-cloud and Kubernetes trends continue to gain momentum, organizations must prioritize upskilling their IT teams, streamlining infrastructure integration, ensuring application portability, and adopting advanced security and automation tools. By addressing these challenges head-on, businesses can unlock the full potential of multi-cloud Kubernetes deployments and stay ahead in the ever-evolving cloud computing landscape.
