Key Takeaways

Cloud migration delivers real financial benefits — but only when you migrate the right workloads the right way.

The CAPEX→OPEX shift frees capital and aligns IT costs with actual business demand.

TCO analysis across lift-and-shift, replatforming, and staying on-prem shows significant variance.

DevOps integration amplifies savings through autoscaling, rightsizing, and CI/CD efficiency.

Hidden costs — egress, idle reserved capacity, observability, and training — can erode 20–40% of expected savings.

Some workloads are better on-prem. A balanced framework avoids overspending.

Why companies move to the cloud

Cloud migration has moved far beyond a technology trend. For most organizations, it is a fundamental financial and operational restructuring — one that affects balance sheets, team productivity, speed-to-market, and carbon reporting simultaneously.

The shift to cloud is driven by a convergence of pressures: hardware refresh cycles that force capital decisions every 3–5 years, developer productivity expectations shaped by modern tooling, and investor and board-level scrutiny on sustainability commitments.

But these aggregate numbers hide important nuance. The financial benefits of cloud migration are real — but they are not automatic. They depend on workload type, migration approach, team readiness, and how closely you monitor spend post-migration. This guide gives you the frameworks to make an informed decision.

87% of business leaders plan to increase sustainability investment over the next 2 years (Gartner)

80%+ potential workload carbon footprint reduction by migrating on-premises workloads to AWS (451 Research)

40–60% typical infrastructure cost reduction reported by well-optimized cloud migrations

2.5% share of global CO₂ emissions attributable to data centers, more than aviation (World Economic Forum)

When cloud migration improves ROI — a 6-question decision framework

Before moving a workload, every CFO and CTO should be able to answer these six questions. The answers determine whether cloud migration is a financial win or a costly mistake for that specific workload.

Question 1

How volatile is utilization?

Workloads with high utilization variance (e.g., seasonal e-commerce, event-driven processing) benefit most from elastic scaling. Flat, predictable workloads gain less.

Question 2

Are there licensing constraints?

Some enterprise software (Oracle, Microsoft) carries licensing models that become significantly more expensive in the cloud. Model costs before committing.

Question 3

What are latency & data gravity requirements?

Workloads requiring ultra-low latency or tightly coupled to large on-prem datasets may generate unexpected egress and latency costs.

Question 4

Where are you in the hardware lifecycle?

If hardware was refreshed 18 months ago, breakeven extends significantly. If refresh is due in 12–18 months, timing is ideal.

Question 5

What are the compliance requirements?

Regulated industries face specific data residency and sovereignty requirements that require carefully planned architecture.

Question 6

Is the team ready for cloud-native operations?

Financial benefits compound when teams use FinOps, IaC, and autoscaling. "Lift and shift" without behavior change yields limited ROI.

💡

Expert Insight from Roman Burdiuzha, CTO at Gart Solutions

"In our experience, the biggest mistake companies make is treating cloud migration as a single decision. It's actually a portfolio of decisions, workload by workload. The organizations that get the best ROI are those that migrate selectively..."

CAPEX vs OPEX: what actually changes financially

The financial model of cloud is fundamentally different from on-premises infrastructure. Understanding this shift is not just about accounting treatment — it reshapes how your finance team budgets, forecasts, and allocates capital.

The core shift: from owning to consuming

Traditional IT is built on capital expenditures (CAPEX): servers, storage, networking equipment, and data center facilities purchased or leased with significant upfront investment. Cloud replaces most of this with operational expenditures (OPEX): subscription fees, usage-based charges, and managed service fees incurred as services are consumed.

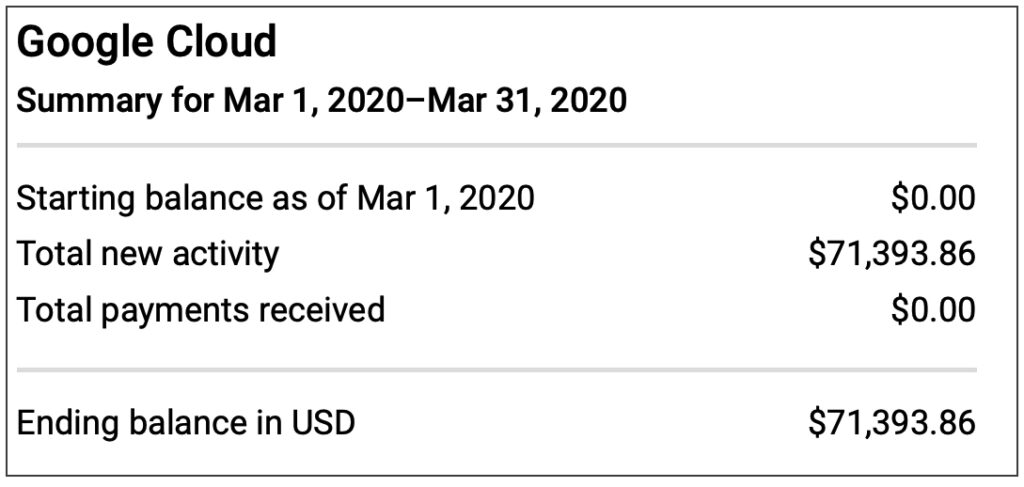

| Criteria | CAPEX (On-premises) | OPEX (Cloud) |
| --- | --- | --- |
| Nature of expense | Large upfront investments | Regular, usage-based costs |
| Tax treatment | Depreciated over asset life (3–7 years) | Fully deductible in the year incurred |
| Balance sheet impact | Increases fixed assets; impacts depreciation | Operating expense; no capitalization |
| Cash flow timing | Large outflows at purchase; benefits spread over years | Costs align with revenue-generating periods |
| Capacity flexibility | Sized for peak; most capacity often idle | Elastic; scales with actual demand |
| Refresh cycle risk | Technology obsolescence every 3–5 years | Always on current-generation hardware |
| Budget predictability | Predictable after purchase; opaque ongoing costs | Variable; requires FinOps discipline |
| Team responsibility | Internal IT manages hardware lifecycle | Vendor manages infrastructure; team manages configuration |

CAPEX (on premises) vs OPEX (cloud)

Key risk: The OPEX model's flexibility is also its risk. Without FinOps discipline and governance guardrails, cloud costs can grow unchecked. Organizations moving from CAPEX to OPEX must build new financial muscle: tagging standards, cost allocation by team and product, budget alerts, and regular rightsizing reviews.

TCO comparison: 3 migration scenarios for a mid-size workload

To make the financial case concrete, here is an illustrative TCO comparison across three scenarios for a typical mid-size organization running a business-critical application on aging infrastructure. The numbers are directional — actual outcomes vary by workload, region, and provider negotiation.

Scenario baseline: A 100-person SaaS company running a production application on 20 physical servers in a co-location facility, approaching a hardware refresh cycle in 18 months.

Scenario A: Stay on-prem

Hardware refresh + licensing + co-lo fees + staffing to manage infrastructure.

Typical 24-month spend

$480K–$620K

High upfront capital. Full control. Limited elasticity. Team spends ~30% of time on infrastructure ops.

Scenario B: Lift-and-shift

Direct migration of existing VMs. Minimal re-architecture. Quick path.

Typical 24-month spend

$420K–$560K

Moderate savings from CAPEX elimination. Limited elasticity benefits. Risk: migrating waste.

Scenario C: Replatforming

Containerization, CI/CD, rightsizing, and reserved capacity.

Typical 24-month spend

$280K–$380K

Best long-term ROI. Requires more investment upfront. Team focused on product, not infrastructure.

Note: Figures are illustrative only. Actual outcomes depend on workload architecture, cloud region, and engineering scope. Gart recommends a workload-level cost model before committing. Contact us for a tailored assessment.
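To compare the three scenarios on one scale, the ranges above can be reduced to midpoints and expressed as savings against the on-prem baseline. This is a minimal sketch: using the midpoint of each range is a simplifying assumption, and the function names are illustrative rather than part of any Gart tooling.

```python
# Illustrative 24-month spend ranges in USD thousands, copied from the scenarios above.
SCENARIOS = {
    "A: stay on-prem": (480, 620),
    "B: lift-and-shift": (420, 560),
    "C: replatforming": (280, 380),
}


def midpoint(low, high):
    """Midpoint of a spend range (a simplification, not a forecast)."""
    return (low + high) / 2


def savings_vs_baseline(scenarios, baseline):
    """Percentage saved by each scenario relative to the baseline midpoint."""
    base = midpoint(*scenarios[baseline])
    return {
        name: round((base - midpoint(*spend_range)) / base * 100, 1)
        for name, spend_range in scenarios.items()
    }


if __name__ == "__main__":
    for name, pct in savings_vs_baseline(SCENARIOS, "A: stay on-prem").items():
        print(f"{name}: {pct}% below the on-prem midpoint")
```

On these illustrative figures, lift-and-shift lands roughly 11% below the on-prem midpoint and replatforming roughly 40% below it, which is why the choice of migration approach matters more than the decision to migrate.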

Hidden cloud costs to model before you migrate

The most common reason cloud migrations underdeliver on their financial promise is that the business case modeled cloud costs in isolation — without accounting for the costs that only appear after go-live.

| Hidden cost category | What to model | Typical impact |
| --- | --- | --- |
| Data egress fees | Volume of data transferred out of the cloud per month × egress rate by region | 5–20% of compute bill |
| Idle reserved capacity | Reserved instances purchased but underutilized | 10–30% of reserved spend wasted |
| Observability & logging growth | Log volume × CloudWatch/Datadog pricing; scales with traffic | Can double in 12 months |
| Managed service premium | RDS vs self-managed DB; EKS vs self-managed Kubernetes | 30–50% markup vs self-managed |
| Licensing in the cloud | BYOL vs included; Oracle, Windows Server, SQL Server in cloud | Can exceed compute cost |
| Application refactoring | Engineering hours to re-architect for cloud-native patterns | 3–9 months of team time |
| Training & certification | Cloud practitioner, architect, DevOps certifications per team member | $2K–$8K per engineer |
| Support tiers | Business/Enterprise support on top of compute costs | 3–10% of monthly bill |

Hidden cloud costs to model before you migrate

⚡

Quick win

Use AWS Migration Evaluator or Azure Migrate to baseline your actual on-premises utilization before scoping the cloud bill. Organizations consistently find they are running at 15–25% average CPU utilization on-prem — meaning they need significantly less cloud capacity than a 1:1 lift would suggest.
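The effect of low on-prem utilization on cloud sizing can be sketched with simple arithmetic. The example below assumes a target average utilization of 60% in the cloud and a hypothetical 320-core estate; both values are illustrative assumptions, and the calculation ignores peak load, memory, and I/O, which a real assessment must include.

```python
import math


def cloud_vcpus_needed(onprem_cores, avg_utilization, target_utilization=0.6):
    """Estimate vCPUs needed if the same work runs at a higher target utilization.

    avg_utilization and target_utilization are fractions between 0 and 1.
    """
    if not 0 < avg_utilization <= 1 or not 0 < target_utilization <= 1:
        raise ValueError("utilization values must be in the range (0, 1]")
    busy_cores = onprem_cores * avg_utilization  # cores actually doing work
    return math.ceil(busy_cores / target_utilization)


if __name__ == "__main__":
    # Hypothetical estate: 320 cores running at 20% average CPU utilization.
    print(cloud_vcpus_needed(320, 0.20))
```

Under these assumptions the estimate is about a third of the capacity a 1:1 lift would provision.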

How DevOps multiplies the financial benefits of cloud migration

Cloud infrastructure alone does not deliver savings. The organizations that achieve 40–60% cost reductions are those that pair cloud migration with modern DevOps practices. Here is how each practice maps to a financial outcome.

| DevOps practice | Financial mechanism | Measurable outcome |
| --- | --- | --- |
| Autoscaling | Resources provision and deprovision based on real demand | Eliminate idle capacity costs (typically 30–50% of compute) |
| Rightsizing | Continuously match instance types to actual workload metrics | 15–40% compute cost reduction |
| CI/CD pipelines | Shorter release cycles, fewer rollback events, reduced defect costs | Faster time-to-value; engineering time on features, not firefighting |
| Infrastructure as Code (IaC) | Eliminate manual provisioning drift; reproducible environments | Reduce environment provisioning time from days to minutes |
| Environment scheduling | Auto-shut non-production environments evenings and weekends | Up to 65% reduction in dev/test environment costs |
| FinOps tagging | Attribute every dollar of spend to a team, service, or product | Accountability that reduces waste by 20–35% over 12 months |
| Container optimization | Smaller images, Fargate for variable workloads, node efficiency | 15–30% reduction in container infrastructure costs |

How DevOps multiplies the financial benefits of cloud migration
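The environment scheduling figure is easy to sanity-check. The sketch below assumes costs scale linearly with running hours, which holds for compute billed per hour but not for storage or other always-on charges.

```python
HOURS_PER_WEEK = 7 * 24


def scheduled_savings(hours_per_day, days_per_week):
    """Fraction of always-on compute cost avoided by running only on a schedule."""
    running_hours = hours_per_day * days_per_week
    return 1 - running_hours / HOURS_PER_WEEK


if __name__ == "__main__":
    # Dev/test environment kept up 12 hours a day, Monday to Friday.
    print(f"{scheduled_savings(12, 5):.1%}")
```

A 12-hour weekday schedule runs 60 of 168 weekly hours, which is where a reduction of roughly 64% comes from.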

"If you only move infrastructure without changing release practices, you may gain flexibility, but not meaningful cost efficiency. The financial benefits of cloud migration compound when engineering teams operate cloud-natively: they stop paying for idle time, they ship faster, and they build institutional knowledge that makes every future optimization easier."

Roman Burdiuzha, Co-founder & CTO, Gart Solutions. 15+ years in DevOps and cloud architecture.

What Gart measures after migration

In our client environments, we track these metrics post-migration to quantify DevOps-driven financial impact:

Environment idle time (target: <5% of provisioned time)

Deployment frequency (from weekly to multiple times per day)

Cost per environment (should decrease 20–40% within 6 months)

Reserved capacity utilization (target: >80%)

Workload carbon intensity per transaction

Mean time to recovery (MTTR) — directly impacts incident cost

When cloud migration does NOT save money

A balanced, trustworthy business case acknowledges where cloud migration is the wrong choice — or where hybrid is better. Here are the most common scenarios where staying partly on-prem is the more financially sound decision.

3 migration mistakes we see most often at Gart

1. Lifting waste into the cloud

Organizations that migrate oversized, underutilized VMs without rightsizing pay more in the cloud than on-prem. Always rightsize before you migrate.

2. Ignoring egress costs

A data-intensive application with significant read traffic to external users can generate egress bills that offset compute savings entirely.

3. Overbuying managed services

Managed Kubernetes, databases, and caches carry a premium. Evaluate whether that premium buys real productivity or is just a "convenience tax."

| Scenario | Better approach | Why |
| --- | --- | --- |
| Stable, flat workloads (e.g., legacy ERP) | Stay on-prem or re-evaluate at next hardware cycle | No elasticity benefit; cloud premium exceeds on-prem OpEx |
| High egress, read-heavy applications | Hybrid: origin on-prem, CDN + edge caching in cloud | Egress costs can exceed all other cloud savings |
| Oracle or legacy licensed workloads | Stay on-prem or negotiate BYOL explicitly | Licensing in cloud can cost 2–4x on-prem |
| Extreme latency-sensitive processing | Edge/colocation + cloud for non-latency-critical tiers | Network latency in cloud may not meet SLA requirements |
| Team not ready for cloud operations | Invest in training and FinOps before migrating | Without cloud-native operations, costs will spiral post-migration |

When cloud migration does NOT save money

Measuring sustainability impact after migration

Sustainability is no longer a soft benefit of cloud migration — it is a measurable, reportable outcome that increasingly matters to investors, enterprise customers, and regulators. However, the financial benefits of cloud migration for carbon reduction are only realized if migration is paired with the right architecture choices.

How cloud providers support sustainability goals

The world's largest cloud providers operate at a scale of energy procurement and efficiency that no individual organization can match. This translates into material carbon reduction potential for migrating workloads.

AWS became the world's largest corporate buyer of renewable energy, with all electricity across 19 AWS Regions sourced from 100% renewable energy as of 2022. Research from 451 Research indicates that migrating on-premises workloads to AWS can reduce workload carbon footprints by at least 80%, with the potential to reach 96% once AWS achieves its 100% renewable energy goal.

Microsoft Azure publishes datacenter Power Usage Effectiveness (PUE) and Water Usage Effectiveness (WUE) metrics, enabling organizations to measure and compare energy efficiency. Through the Microsoft Cloud for Sustainability platform, organizations can consolidate environmental data and track progress against reduction targets. More details are available in Microsoft's sustainability reporting.

⚠️ Important distinction: For many workloads, cloud migration can reduce emissions, but the outcome depends on region, utilization, modernization depth, and the provider's energy mix. Broad claims that "migrating to the cloud reduces your carbon footprint" are true on average, but should be validated with workload-level data for any public sustainability reporting. Distinguishing between provider-level renewable energy goals and your specific workload's realized reduction is critical for accurate ESG reporting.

How we estimate cost and carbon impact

Transparency in methodology builds trust. When Gart builds a cloud migration business case, we use the following inputs to model financial and carbon outcomes:

Workload utilization data — actual CPU, memory, and I/O metrics from on-prem monitoring, not nameplate capacity

Hardware lifecycle stage — time since last refresh, expected end-of-life date, maintenance cost trajectory

Region mix — cloud region selection affects both cost (varies up to 30% across regions) and renewable energy availability

Egress volume modeling — estimated monthly data transfer out of cloud, by traffic pattern

Licensing audit — current software licenses, cloud eligibility, BYOL vs included

Reserved capacity assumptions — 1-year vs 3-year reservations, upfront vs monthly payments

Modernization scope — lift-and-shift, replatforming, or re-architecture, each with different cost and savings profiles

Sustainability estimates follow provider methodologies: AWS Carbon Footprint Tool for AWS workloads, and Microsoft Emissions Impact Dashboard for Azure. Carbon reduction projections are presented as ranges, not point estimates, to reflect genuine uncertainty.

Reduced Data Center Footprint and Increased Productivity

Moving to the cloud reduces the need for big on-site data centers, saving costs and making operations more efficient. It also allows quick adjustments to resources, matching IT needs with actual demand, boosting productivity.

DevOps Integration for Efficiency and Time-to-Market

The cloud and DevOps work together to improve how businesses operate. Combining DevOps practices with cloud technology makes processes more efficient, speeds up bringing products to market, and encourages collaboration between development and operations teams. This teamwork streamlines growth, especially for startups, by providing scalable resources in the cloud.

This combination also cuts operating costs through automation, which is crucial for business leaders focused on digital transformation. It encourages innovation, saves money, motivates employees, and aligns with the need for efficient processes to deliver top-notch goods and services. Overall, blending DevOps and the cloud accelerates important technological changes that affect business goals.


Roman Burdiuzha

Co-founder & CTO, Gart Solutions · Cloud Architecture Expert

Roman has 15+ years of experience in DevOps and cloud architecture, with prior leadership roles at SoftServe and lifecell Ukraine. He co-founded Gart Solutions, where he leads cloud transformation and infrastructure modernization engagements across Europe and North America. In one recent client engagement, Gart reduced infrastructure waste by 38% through consolidating idle resources and introducing usage-aware automation. Read more on Startup Weekly.

In my experience optimizing cloud costs, especially on AWS, I often find that many quick wins are in the "easy to implement - good savings potential" quadrant.


That's why I've decided to share some straightforward methods for optimizing expenses on AWS. Combined, they can cut a large share of your bill, in some cases by as much as 80%.

Choose reserved instances

Potential Savings: Up to 72%

A Reserved Instance is a commitment to a specific instance configuration for a one- or three-year term, paid fully upfront, partially upfront, or with no upfront payment, in exchange for a discount over On-Demand pricing. A one-year horizon already counts as long-term planning for many companies, especially in Ukraine, so a 1–3 year commitment carries risk, but it is rewarded with a discount of up to 72%.

You can check all the current pricing details on the official website - Amazon EC2 Reserved Instances
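Whether a reservation pays off depends on how many hours the instance actually runs, because a Reserved Instance is billed for every hour of the term while On-Demand is billed only for hours used. The sketch below makes that break-even explicit; the hourly price and discount in the example are illustrative assumptions, not quoted AWS prices.

```python
def breakeven_utilization(discount):
    """Fraction of the term an instance must run for a reservation to break even."""
    return 1 - discount


def cheaper_option(on_demand_hourly, discount, expected_utilization):
    """Compare the effective hourly cost of reserving against paying On-Demand."""
    reserved = on_demand_hourly * (1 - discount)  # paid for every hour of the term
    on_demand = on_demand_hourly * expected_utilization  # paid only while running
    return "reserved" if reserved < on_demand else "on-demand"


if __name__ == "__main__":
    print(f"Break-even at a 72% discount: {breakeven_utilization(0.72):.0%} of hours")
    print(cheaper_option(0.096, 0.40, expected_utilization=0.5))
    print(cheaper_option(0.096, 0.40, expected_utilization=0.9))
```

The deeper the discount, the lower the utilization at which the commitment becomes worthwhile, so the largest discounts are also the most forgiving of idle time.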

Purchase Savings Plans (Instead of On-Demand)

Potential Savings: Up to 72%

There are three types of Savings Plans: Compute Savings Plans, EC2 Instance Savings Plans, and SageMaker Savings Plans.

A Compute Savings Plan gives discounts on compute in exchange for committing to a consistent amount of usage, measured in USD per hour, over a one- or three-year term. It is the most flexible option: it applies across EC2, Fargate, and Lambda, regardless of instance family, size, or Region, with savings of up to 66%.

An EC2 Instance Savings Plan offers discounted rates exclusively for EC2 instances. The commitment is tied to a specific instance family in a chosen Region and applies regardless of Availability Zone, instance size, operating system, or tenancy.

A SageMaker Savings Plan gives discounts on SageMaker usage in exchange for the same kind of hourly spend commitment over one or three years.

All plans are available for one- and three-year terms with full upfront, partial upfront, or no upfront payment. The EC2 Instance Savings Plan offers the deepest discount, up to 72%, but it applies exclusively to EC2 instances.

Utilize Various Storage Classes for S3 (Including Intelligent-Tiering)

Potential Savings: 40% to 95%

AWS offers numerous storage classes for data with different access patterns. For instance, S3 Intelligent-Tiering automatically places objects in one of three access tiers: a tier optimized for frequent access, a 40% cheaper tier optimized for infrequent access, and a 68% cheaper tier optimized for rarely accessed data (e.g., archives).

S3 Intelligent-Tiering has the same price per 1 GB as S3 Standard — $0.023 USD.

However, the key advantage of Intelligent-Tiering is its ability to automatically move objects that haven't been accessed for a specific period to lower-cost access tiers.

Objects move to the infrequent access tier after 30 consecutive days without access and to the archive instant access tier after 90 days. Optional, opt-in archive tiers can be enabled for objects not accessed for 90 and 180 days or more. This can save companies from 40% to 95%. For certain objects (e.g., archives), you may pay only $0.0125 USD per 1 GB or $0.004 USD per 1 GB instead of the standard price of $0.023 USD.

Information regarding the pricing of Amazon S3
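The per-GB prices quoted above can be turned into a rough monthly estimate. This sketch uses only those three prices and a hypothetical 10 TB data set; it deliberately ignores the per-object monitoring charge and request costs, so treat the result as an upper bound on the saving.

```python
# USD per GB-month, taken from the prices quoted in this section.
PRICE_PER_GB = {"frequent": 0.023, "infrequent": 0.0125, "archive": 0.004}


def monthly_storage_cost(gb_by_tier):
    """Monthly storage cost for data spread across access tiers."""
    return sum(PRICE_PER_GB[tier] * gb for tier, gb in gb_by_tier.items())


def savings_vs_standard(gb_by_tier):
    """Fraction saved compared with keeping everything at the frequent-access price."""
    total_gb = sum(gb_by_tier.values())
    standard_cost = total_gb * PRICE_PER_GB["frequent"]
    return 1 - monthly_storage_cost(gb_by_tier) / standard_cost


if __name__ == "__main__":
    # Hypothetical distribution: most data has gone cold.
    data = {"frequent": 1_000, "infrequent": 3_000, "archive": 6_000}
    print(f"${monthly_storage_cost(data):.2f} per month, "
          f"{savings_vs_standard(data):.0%} below S3 Standard")
```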

AWS Compute Optimizer

Potential Savings: quite significant

The AWS Compute Optimizer dashboard is a tool that lets users assess and prioritize optimization opportunities for their AWS resources.

The dashboard provides detailed information about potential cost savings and performance improvements, as the recommendations are based on an analysis of resource specifications and usage metrics.

The dashboard covers various types of resources, such as EC2 instances, Auto Scaling groups, Lambda functions, Amazon ECS services on Fargate, and Amazon EBS volumes.

For example, AWS Compute Optimizer reports whether the resources allocated to ECS services on Fargate or to Lambda functions are under- or over-provisioned. Checking this dashboard regularly helps you make informed decisions to optimize costs and enhance performance.

Use Fargate in EKS for underutilized EC2 nodes

If your EKS nodes aren't fully used most of the time, it makes sense to consider using Fargate profiles. With AWS Fargate, you pay for the specific amount of memory and CPU your pod requests, rather than for an entire EC2 virtual machine.

For example, let's say you have an application deployed in a Kubernetes cluster managed by Amazon EKS (Elastic Kubernetes Service). The application experiences variable traffic, with peak loads during specific hours of the day or week (like a marketplace or an online store), and you want to optimize infrastructure costs. To address this, create a Fargate profile that defines which pods should run on Fargate, and configure the Kubernetes Horizontal Pod Autoscaler (HPA) to automatically scale the number of pod replicas based on their resource usage (such as CPU or memory).
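To see how the HPA reacts to load, it helps to look at the scaling rule described in the Kubernetes documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to the target, rounded up. The sketch below reproduces that rule in plain Python. The replica limits and sample values are illustrative assumptions, and the real controller adds stabilization windows and readiness handling that are not modeled here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10, tolerance=0.1):
    """Approximate the HPA rule: ceil(current_replicas * current / target)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target, no scaling
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Four pods targeting 60% CPU.
    print(desired_replicas(4, current_metric=90, target_metric=60))  # peak hours
    print(desired_replicas(4, current_metric=20, target_metric=60))  # quiet hours
```

On Fargate each removed replica stops being billed, so scaling in during quiet hours translates directly into lower cost.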

Manage Workload Across Different Regions

Potential Savings: significant in most cases

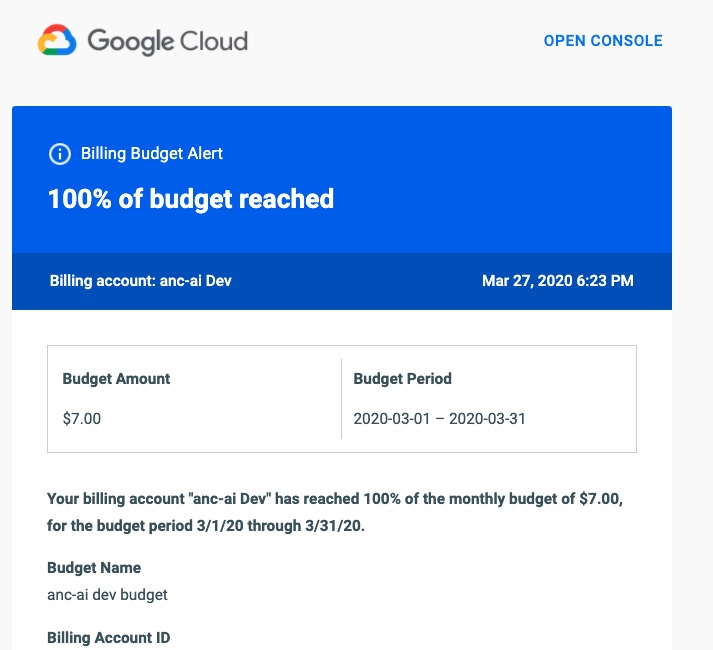

When handling workload across multiple regions, it's crucial to consider various aspects such as cost allocation tags, budgets, notifications, and data remediation.

Cost Allocation Tags: Classify and track expenses based on different labels like program, environment, team, or project.

AWS Budgets: Define spending thresholds and receive notifications when expenses exceed set limits. Create budgets specifically for your workload or allocate budgets to specific services or cost allocation tags.

Notifications: Set up alerts when expenses approach or surpass predefined thresholds. Timely notifications help take actions to optimize costs and prevent overspending.

Remediation: Implement mechanisms to rectify expenses based on your workload requirements. This may involve automated actions or manual interventions to address cost-related issues.

Regional Variances: Consider regional differences in pricing and data transfer costs when designing workload architectures.

Reserved Instances and Savings Plans: Utilize reserved instances or savings plans to achieve cost savings.

AWS Cost Explorer: Use this tool for visualizing and analyzing your expenses. Cost Explorer provides insights into your usage and spending trends, enabling you to identify areas of high costs and potential opportunities for cost savings.

Transition to Graviton (ARM)

Potential Savings: Up to 30%

Graviton is Amazon's family of server-grade ARM processors developed in-house. Graviton-based instances suit a wide range of applications, including high-performance computing, batch processing, electronic design automation (EDA), multimedia encoding, scientific modeling, distributed analytics, and CPU-based machine learning inference.

The processor family is based on the ARM architecture and designed as a system on a chip (SoC). This translates to lower power consumption and lower prices while still offering satisfactory performance for the majority of clients. Keep in mind that your applications and container images must be built for the arm64 architecture. Key advantages of AWS Graviton include cost reduction, low latency, improved scalability, enhanced availability, and security.

Spot Instances Instead of On-Demand

Potential Savings: Up to 90%

Spot Instances let you use spare EC2 capacity at a steep discount. You can optionally set the maximum price you are willing to pay (by default, the On-Demand price). The catch is that if no spare capacity is available, your request won't be fulfilled.

There is also the risk of interruption: if AWS needs the capacity back, or the Spot price rises above your maximum price, your Spot Instance is interrupted with a two-minute warning.

Spot pricing used to work like an auction, but since late 2017 AWS adjusts Spot prices gradually based on longer-term supply and demand rather than on bids. You still pay the current Spot price, not your maximum: if you are willing to pay $0.10 per hour and the Spot price is $0.05, you pay exactly $0.05.
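The pricing rule in the example above can be written down in a few lines. This is a simplified sketch: the function name is illustrative, and it models only the price condition, not interruptions caused by AWS reclaiming capacity.

```python
from typing import Optional


def spot_hourly_charge(spot_price: float, max_price: float) -> Optional[float]:
    """Return the hourly charge, or None if the instance would not run.

    You pay the current Spot price, never your maximum. If the Spot price
    is above your maximum, the request is not fulfilled (or the running
    instance is interrupted).
    """
    if spot_price > max_price:
        return None
    return spot_price


if __name__ == "__main__":
    print(spot_hourly_charge(spot_price=0.05, max_price=0.10))
    print(spot_hourly_charge(spot_price=0.12, max_price=0.10))
```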

Use Interface Endpoints or Gateway Endpoints to save on traffic costs (S3, SQS, DynamoDB, etc.)

Potential Savings: Depends on the workload

Interface endpoints are built on AWS PrivateLink and give your VPC a private network path to AWS services without going through the internet. Gateway endpoints do the same for Amazon S3 and DynamoDB through route table entries.

The saving comes from the path the traffic no longer takes: requests from private subnets that would otherwise pass through a NAT gateway, and incur its per-GB data processing charge, go through the endpoint instead.

Key points:

Amazon S3: Use a gateway endpoint. It carries no additional charge, so S3 traffic from your VPC no longer incurs NAT gateway data processing costs.

Amazon SQS: SQS is reached through an interface endpoint. Interface endpoints are billed per hour and per GB processed, which is usually cheaper than sending the same traffic through a NAT gateway.

Amazon DynamoDB: Use a gateway endpoint, which, as with S3, carries no additional charge.

Additionally, endpoints keep traffic on private IP addresses within the AWS network, so instances in private subnets can reach services like S3, SQS, and DynamoDB without an internet gateway or NAT path.
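How much an endpoint saves depends on traffic volume and on what the traffic would otherwise pass through. The sketch below compares three paths for the same monthly volume. All rates are illustrative assumptions passed in as parameters (check the current VPC pricing page for your Region), and the NAT gateway's own hourly charge is left out on the assumption that the gateway is still needed for other traffic.

```python
def nat_gateway_cost(gb, per_gb=0.045):
    """Data processing cost when traffic to AWS services flows through a NAT gateway."""
    return gb * per_gb


def interface_endpoint_cost(gb, per_gb=0.01, hourly=0.01, azs=2, hours=730):
    """Interface endpoint cost: per-GB processing plus an hourly charge per AZ."""
    return gb * per_gb + hourly * azs * hours


def gateway_endpoint_cost(gb):
    """Gateway endpoints (S3, DynamoDB) carry no additional charge."""
    return 0.0


if __name__ == "__main__":
    monthly_gb = 5_000  # hypothetical monthly traffic to the service
    print(f"NAT gateway:        ${nat_gateway_cost(monthly_gb):.2f}")
    print(f"Interface endpoint: ${interface_endpoint_cost(monthly_gb):.2f}")
    print(f"Gateway endpoint:   ${gateway_endpoint_cost(monthly_gb):.2f}")
```

At low volumes the fixed hourly charge of an interface endpoint can outweigh the per-GB saving, so it is worth running the numbers per service.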

Optimize Image Sizes for Faster Loading

Potential Savings: Depends on the workload

Optimizing image sizes can help you save in various ways.

Reduce ECR Costs: By storing smaller images, you can cut down expenses on Amazon Elastic Container Registry (ECR).

Minimize EBS Volumes on EKS Nodes: Keeping smaller volumes on Amazon Elastic Kubernetes Service (EKS) nodes helps in cost reduction.

Accelerate Container Launch Times: Faster container launch times ultimately lead to quicker task execution.

Optimization Methods:

Use the Right Image: Employ the most efficient image for your task; for instance, Alpine may be sufficient in certain scenarios.

Remove Unnecessary Data: Trim excess data and packages from the image.

Multi-Stage Image Builds: Utilize multi-stage image builds by employing multiple FROM instructions.

Use .dockerignore: Prevent the addition of unnecessary files by employing a .dockerignore file.

Reduce Layer Count: Each RUN, COPY, and ADD instruction adds a layer to the image. Chain related shell commands into a single RUN instruction using the && operator.

Layer Ordering: Move frequently changing layers to the end of the Dockerfile so that earlier layers stay cached and are not rebuilt or pushed again.

These optimization methods can contribute to faster image loading, reduced storage costs, and improved overall performance in containerized environments.

Use Load Balancers to Save on IP Address Costs

Potential Savings: depends on the workload

Since February 1, 2024, AWS has billed for every public IPv4 address. Placing services behind a load balancer reduces the number of addresses you need: many services share the load balancer's addresses, traffic is routed to them by port, host name, or path, and TLS is terminated at the load balancer.

By consolidating multiple services and instances under a single IP address, you can achieve cost savings while effectively managing incoming traffic.
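The per-address charge is small, but it adds up across a fleet. The sketch below assumes the $0.005 per address per hour rate that AWS announced for public IPv4 addresses and a 730-hour month. The fleet sizes are hypothetical, and the cost of the load balancer itself is not modeled.

```python
IPV4_HOURLY_USD = 0.005  # assumed rate per public IPv4 address per hour


def monthly_ipv4_cost(addresses, hours=730):
    """Monthly charge for a number of public IPv4 addresses."""
    return addresses * IPV4_HOURLY_USD * hours


if __name__ == "__main__":
    direct = monthly_ipv4_cost(20)   # 20 instances, each with its own public IP
    shared = monthly_ipv4_cost(2)    # one load balancer spanning 2 AZs
    print(f"Direct: ${direct:.2f}, behind a load balancer: ${shared:.2f}")
```

The saving only materializes if the load balancer's own hourly and usage charges stay below the address charges it replaces, or if you need the load balancer anyway.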

Optimize Database Services for Higher Performance (MySQL, PostgreSQL, etc.)

Potential Savings: depends on the workload

AWS provides default settings for databases that are suitable for average workloads. If a significant portion of your monthly bill is related to AWS RDS, it's worth paying attention to parameter settings related to databases.

Some of the most effective settings may include:

Use Database-Optimized Instances: For example, instances in the R5 or X1 class are memory-optimized, which suits database workloads.

Choose Storage Type: General Purpose SSD (gp2 or gp3) is typically cheaper than Provisioned IOPS SSD (io1/io2).

RDS Storage Auto Scaling: Automatically increases storage size as demand grows, so you can start with a smaller allocation instead of over-provisioning. Note that it only scales up; it does not shrink storage.

If you can optimize the database workload, it may allow you to use smaller instance sizes without compromising performance.

Regularly Update Instances for Better Performance and Lower Costs

Potential Savings: Minor

As AWS deploys new servers in its data centers, each new instance generation typically offers better hardware than the previous one, often at a lower price. Usually, the latest two to three generations are available. Moving to a current generation regularly lets you benefit from these improvements.

Take the general-purpose M family as an example and compare how the price changes from one generation to the next.

| Instance | Generation | Description | On-Demand Price (USD/hour) |
| --- | --- | --- | --- |
| m6g.large | 6th | Instances based on ARM processors offer improved performance and energy efficiency. | $0.077 |
| m5.large | 5th | General-purpose instances with a balanced combination of CPU and memory, designed to support high-speed network access. | $0.096 |
| m4.large | 4th | A good balance between CPU, memory, and network resources. | $0.10 |
| m3.large | 3rd | One of the previous generations, less efficient than m5 and m4. | Not available |
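The generation-to-generation difference is easier to judge as a percentage. This sketch uses only the On-Demand prices from the table above; actual prices vary by Region and change over time.

```python
# On-Demand prices in USD per hour, taken from the table above.
PRICES = {"m6g.large": 0.077, "m5.large": 0.096, "m4.large": 0.10}


def saving_pct(old, new):
    """Percentage saved per hour by moving from one instance type to another."""
    return round((PRICES[old] - PRICES[new]) / PRICES[old] * 100, 1)


if __name__ == "__main__":
    print(f"m4.large -> m5.large:  {saving_pct('m4.large', 'm5.large')}%")
    print(f"m5.large -> m6g.large: {saving_pct('m5.large', 'm6g.large')}%")
```

The step to the ARM-based generation is the larger one, but it also requires the workload to run on arm64.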

Use RDS Proxy to reduce the load on RDS

Potential for savings: Low

RDS Proxy relieves load on application servers and RDS databases by pooling and reusing existing connections instead of opening new ones. It also shortens failover when a standby instance is promoted to primary, because applications stay connected to the proxy.

Imagine you have a web application that uses Amazon RDS to manage the database. This application experiences variable traffic intensity, and during peak periods, such as advertising campaigns or special events, it undergoes high database load due to a large number of simultaneous requests.

During peak loads, the RDS database may encounter performance and availability issues due to the high number of concurrent connections and queries. This can lead to delays in responses or even service unavailability.

RDS Proxy manages connection pools to the database, significantly reducing the number of direct connections to the database itself.

By efficiently managing connections, RDS Proxy provides higher availability and stability, especially during peak periods.

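Because RDS Proxy reduces connection overhead on the database, you may be able to run a smaller RDS instance. The proxy is billed separately, so compare its cost against the expected saving.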

Define the storage policy in CloudWatch

Potential for savings: depends on the workload, could be significant.

The storage (retention) policy in Amazon CloudWatch determines how long data is kept in CloudWatch Logs before it is automatically deleted.

Setting the right retention policy is crucial for efficient data management and cost optimization. Although the "Never expire" option is available (and is the default for new log groups), it is generally not recommended, because the volume of stored logs and its cost keep growing indefinitely.

Best practice is to define a specific retention period based on your organization's operational requirements and compliance policies.

Avoid leaving the retention period undefined unless there is a specific reason to. Setting it is often enough on its own to start saving on storage costs.
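As an illustration of setting a retention period programmatically, here is a minimal sketch. It assumes you pass in a boto3 CloudWatch Logs client (created with boto3.client("logs")) and calls its put_retention_policy operation. The list of accepted retention values is an assumption based on the API at the time of writing, so check the current documentation; the helper names are hypothetical.

```python
import bisect

# Retention values (days) accepted by CloudWatch Logs at the time of writing.
# Assumption: verify against the current API reference before relying on it.
ALLOWED_RETENTION_DAYS = [
    1, 3, 5, 7, 14, 30, 60, 90, 120, 150, 180, 365, 400,
    545, 731, 1096, 1827, 2192, 2557, 2922, 3288, 3653,
]


def nearest_allowed_retention(requested_days: int) -> int:
    """Return the smallest allowed retention that covers the requested period."""
    if requested_days < 1:
        raise ValueError("retention must be at least 1 day")
    idx = bisect.bisect_left(ALLOWED_RETENTION_DAYS, requested_days)
    if idx == len(ALLOWED_RETENTION_DAYS):
        raise ValueError("requested retention exceeds the maximum allowed value")
    return ALLOWED_RETENTION_DAYS[idx]


def apply_retention(logs_client, log_group_name: str, requested_days: int) -> int:
    """Set the retention policy on one log group and return the value used.

    `logs_client` is expected to behave like boto3.client("logs").
    """
    days = nearest_allowed_retention(requested_days)
    logs_client.put_retention_policy(
        logGroupName=log_group_name,
        retentionInDays=days,
    )
    return days
```

Rounding up rather than down is a deliberate choice here: a compliance requirement of 45 days is satisfied by 60 days of retention, but not by 30.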

Configure AWS Config to monitor only the events you need

Potential for savings: depends on the workload

AWS Config allows you to track and record changes to AWS resources, helping you maintain compliance, security, and governance. AWS Config provides compliance reports based on rules you define. You can access these reports on the AWS Config dashboard to see the status of tracked resources.

You can set up Amazon SNS notifications to receive alerts when AWS Config detects non-compliance with your defined rules. This can help you take immediate action to address the issue. By configuring AWS Config with specific rules and resources you need to monitor, you can efficiently manage your AWS environment, maintain compliance requirements, and avoid paying for rules you don't need.

Use lifecycle policies for S3 and ECR

Potential for savings: depends on the workload

S3 Lifecycle lets you automatically transition objects to cheaper storage classes or delete them, based on conditions and schedules you specify. Lifecycle rules are configured per bucket, and they reduce storage costs by moving or expiring data that no longer needs its current storage class.

Rules are defined by object age (the storage period). You can apply a rule to the entire S3 bucket or only to specific prefixes. Transitions are not free: each lifecycle transition is billed as a request, so factor that in for buckets with many small objects. Likewise, by configuring a lifecycle policy for ECR, you can avoid unnecessary expenses on storing Docker images that you no longer need.
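The sketch below shows what such policies can look like as data. The S3 rule follows the structure accepted by the S3 lifecycle configuration API, and the ECR policy follows the ECR lifecycle policy JSON format. The prefix, day counts, and function names are hypothetical examples, and the validation is only a basic sanity check.

```python
import json


def s3_lifecycle_rule(prefix: str, ia_after_days: int,
                      glacier_after_days: int, expire_after_days: int) -> dict:
    """Build one S3 lifecycle rule: Standard -> Standard-IA -> Glacier -> delete."""
    # S3 requires objects to be at least 30 days old before moving to Standard-IA.
    if ia_after_days < 30:
        raise ValueError("Standard-IA transition needs at least 30 days")
    if not ia_after_days < glacier_after_days < expire_after_days:
        raise ValueError("day counts must increase from IA to Glacier to expiry")
    return {
        "ID": "tiering-" + (prefix.strip("/") or "all"),
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
            {"Days": glacier_after_days, "StorageClass": "GLACIER"},
        ],
        "Expiration": {"Days": expire_after_days},
    }


def ecr_untagged_expiry_policy(days: int) -> str:
    """Build an ECR lifecycle policy (JSON text) that expires old untagged images."""
    policy = {
        "rules": [
            {
                "rulePriority": 1,
                "description": f"Expire untagged images older than {days} days",
                "selection": {
                    "tagStatus": "untagged",
                    "countType": "sinceImagePushed",
                    "countUnit": "days",
                    "countNumber": days,
                },
                "action": {"type": "expire"},
            }
        ]
    }
    return json.dumps(policy)


if __name__ == "__main__":
    config = {"Rules": [s3_lifecycle_rule("logs/", 30, 90, 365)]}
    print(json.dumps(config, indent=2))
    print(ecr_untagged_expiry_policy(14))
```

The resulting dictionary can be passed as the lifecycle configuration of a bucket, and the JSON string as the policy text of an ECR repository.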

Switch to using GP3 storage type for EBS

Potential for savings: 20%

By default, AWS creates gp2 EBS volumes, but it's almost always preferable to choose gp3 — the latest generation of EBS volumes, which provides more IOPS by default and is cheaper.

For example, in the us-east-1 region, the price for a gp2 volume is $0.10 per gigabyte-month of provisioned storage, while for gp3, it's $0.08/GB per month. If you have 5 TB (5,000 GB) of EBS volumes on your account, you can save $100 per month by simply switching from gp2 to gp3.
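The arithmetic behind this example, as a short sketch. It uses the us-east-1 prices quoted above and covers storage only; any IOPS or throughput provisioned above the gp3 baseline is billed separately and is not modeled here.

```python
from decimal import Decimal

GP2_PER_GB_MONTH = Decimal("0.10")  # us-east-1 price quoted in the text
GP3_PER_GB_MONTH = Decimal("0.08")  # us-east-1 price quoted in the text


def gp3_monthly_saving(provisioned_gb: int) -> Decimal:
    """Monthly storage saving from moving `provisioned_gb` from gp2 to gp3."""
    return provisioned_gb * (GP2_PER_GB_MONTH - GP3_PER_GB_MONTH)


def gp3_saving_percent() -> Decimal:
    """Storage price reduction of gp3 relative to gp2, in percent."""
    return (GP2_PER_GB_MONTH - GP3_PER_GB_MONTH) / GP2_PER_GB_MONTH * 100


if __name__ == "__main__":
    print(gp3_monthly_saving(5000))  # 5 TB counted as 5,000 GB
    print(gp3_saving_percent())
```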

Switch the format of public IP addresses from IPv4 to IPv6

Potential for savings: depending on the workload

Since February 1, 2024, AWS has charged $0.005 per hour for each public IPv4 address. For example, 100 public IPv4 addresses on EC2 x $0.005 per address per hour x 730 hours = $365.00 per month.
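The same calculation as a small sketch, so you can plug in your own address count. The 730-hour month is the approximation used in the example above; the function names are illustrative.

```python
from decimal import Decimal

IPV4_HOURLY_RATE = Decimal("0.005")  # USD per public IPv4 address per hour
HOURS_PER_MONTH = 730                # approximation used in the example above


def ipv4_monthly_charge(address_count: int) -> Decimal:
    """Monthly charge for `address_count` public IPv4 addresses."""
    return address_count * IPV4_HOURLY_RATE * HOURS_PER_MONTH


def ipv4_annual_charge(address_count: int) -> Decimal:
    """Annual charge, as twelve of the approximate months above."""
    return ipv4_monthly_charge(address_count) * 12


if __name__ == "__main__":
    print(ipv4_monthly_charge(100))
    print(ipv4_annual_charge(100))
```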

While this figure might not seem huge on its own, it adds up to a significant network cost as the number of addresses grows. Thus, the best time to transition to IPv6 was a couple of years ago; the second-best time is now.

For guidance on using IPv6 with widely used services, see the AWS announcement: AWS Public IPv4 Address Charge.

Collaborate with AWS professionals and partners for expertise and discounts

Potential for savings: ~5% of the contract amount through discounts.

AWS Partner Network (APN) Discounts: Companies that are members of the AWS Partner Network (APN) can access special discounts, which they can pass on to their clients. Partners reaching a certain level in the APN program often have access to better pricing offers.

Custom Pricing Agreements: Some AWS partners may have the opportunity to negotiate special pricing agreements with AWS, enabling them to offer unique discounts to their clients. This can be particularly relevant for companies involved in consulting or system integration.

Reseller Discounts: As resellers of AWS services, partners can purchase services at wholesale prices and sell them to clients with a markup, still offering a discount from standard AWS prices. They may also provide bundled offerings that include AWS services and their own additional services.

Credit Programs: AWS frequently offers credit programs or vouchers that partners can pass on to their clients. These could be promo codes or discounts for a specific period.

Seek assistance from AWS professionals and partners. Often, this is more cost-effective than purchasing and configuring everything independently. Given the intricacies of cloud cost optimization, expertise in this matter can save you tens or hundreds of thousands of dollars.

More valuable tips for optimizing costs and improving efficiency in AWS environments:

Scheduled TurnOff/TurnOn for NonProd environments: If the Development team is in the same timezone, significant savings can be achieved by, for example, scaling the AutoScaling group of instances/clusters/RDS to zero during the night and weekends when services are not actively used.

Move static content to an S3 Bucket & CloudFront: To avoid paying for compute to serve static content, store static files in Amazon S3 and deliver them through CloudFront.

Use API Gateway/Lambda/Lambda Edge where possible: In such setups, you only pay for the actual usage of the service. This is especially noticeable in NonProd environments where resources are often underutilized.

If your CI/CD agents are on EC2, migrate to CodeBuild: AWS CodeBuild can be a more cost-effective and scalable solution for your continuous integration and delivery needs.

CloudWatch covers the Monitoring and Logging needs of most projects: Avoid paying for third-party solutions if AWS CloudWatch meets your requirements. It provides comprehensive monitoring and logging capabilities for most projects.

Feel free to reach out to me or other specialists for an audit, a comprehensive optimization package, or just advice.

FinOps culture empowers engineers and architects to think as business owners about cost and value at all stages of the application life cycle.

In this article, as a co-founder and cloud architect of Gart, I want to share my hands-on experience with FinOps automation and its role in creating an outsized impact on business outcomes (here is my LinkedIn page)

This material will be useful for anyone who works in cloud engineering, finance, or procurement, or who is interested in product ownership and company leadership.

Why Does Cost Management Matter?

In practice, most organizations have an unbalanced cost/resource structure that was created during the planning, deployment, and subsequent launch stages of a project. An unbalanced structure leads to additional margin loss and, in some cases, quality loss.

But with FinOps practice, each operational group can access the data they need to influence their costs in near real-time and make decisions based on it that will lead to efficient cloud costs balanced with service speed or performance.

Thus, FinOps as a service has a direct impact on the margins of an organization or project: it allows cross-functional teams (project owners, engineers, and management) to get the most out of resources within a budget, in real time.

Who Is Involved in FinOps?

From finance to operations, developers, architects, and executives, everyone in an organization has a role to play.

Whether it's a small business with a few employees deploying cloud resources or a large enterprise with thousands of employees, FinOps practices can be implemented throughout the organization at different levels.

The FinOps team generates recommendations, such as reconfiguring resources or committing to cloud service providers, that need to be considered by the organization.

Top FinOps Practices to Manage Cloud Costs

FinOps is an evolving practice that empowers organizations to manage their cloud expenses efficiently and fine-tune their financial operations. Below, we present some of the prime FinOps practices for proficiently controlling cloud costs:

1. Monitoring and Tracking Cloud Expenditure

The initial step in effectively overseeing cloud expenses is the vigilant monitoring and tracking of cloud spending. This entails gaining a deep understanding of the utilization patterns of various services, pinpointing the primary drivers of costs, and closely observing user trends. These actions are instrumental in uncovering areas ripe for cost optimization, identifying redundant resources, and recognizing underutilized services.

2. Implementing Cost Optimization Strategies

Once the key cost drivers have been pinpointed, the implementation of cost-efficiency strategies can commence. This involves harnessing discounts, making judicious use of spot instances, downsizing underused services, and eliminating superfluous resources. Here are some recommendations to initiate this process:

Scrutinize Your Company’s Expenditures

Identify Sources of Waste and Inefficiency

Rationalize Operational Procedures

3. Automating Management of Cloud Costs

Automation stands as the linchpin of cost control in the realm of cloud services. By automating key processes, organizations can expedite the discovery of cost-saving opportunities, automate the provisioning of resources, and streamline billing procedures. Automation plays a pivotal role in helping companies uncover and rectify inefficiencies in cloud cost management. For instance, it can facilitate real-time tracking of cloud resource utilization, enabling the identification and repurposing or termination of redundant or underutilized assets. Moreover, it can flag cost optimization prospects, such as discounts or incentives from cloud providers and potential strategies for economizing, such as resource scaling.

4. Leverage Tools for Cost Control

A multitude of cost control tools is at your disposal to facilitate efficient management of cloud costs. These optimization tools are adept at tracking usage patterns, establishing budgetary thresholds, and flagging opportunities for cost efficiency. Their design caters to empowering businesses with the capability to scrutinize and dissect their financial outlays. These tools enable meticulous expense tracking, identification of areas with potential for optimization, and the execution of cost-cutting measures.

5. Implementing Resource Allocation Strategies

Resource allocation is pivotal to managing cloud costs effectively. The objective is to allocate resources as efficiently as possible, taking usage trends and cost-efficiency tactics into account.

6. Harnessing Cloud Cost Forecasting

The practice of cloud cost forecasting serves as a valuable resource for comprehending future cloud expenses and pinpointing areas ripe for cost reduction. This forward-looking approach aids in strategic planning and fosters more precise budgeting.
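As one very simple way to produce such a forecast, the sketch below fits a straight-line trend to past monthly spend and extrapolates it. The article does not prescribe a forecasting method, so treat least-squares extrapolation as an assumption; real forecasts usually also account for seasonality, planned launches, and commitments.

```python
from typing import Sequence


def linear_forecast(monthly_spend: Sequence[float], months_ahead: int = 1) -> float:
    """Extrapolate spend with an ordinary least-squares line.

    monthly_spend: past monthly totals, oldest first.
    months_ahead: how many months after the last data point to forecast.
    """
    n = len(monthly_spend)
    if n < 2:
        raise ValueError("need at least two months of history")
    if months_ahead < 1:
        raise ValueError("months_ahead must be at least 1")

    xs = range(n)  # month index 0..n-1
    mean_x = sum(xs) / n
    mean_y = sum(monthly_spend) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, monthly_spend))

    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept + slope * (n - 1 + months_ahead)


if __name__ == "__main__":
    history = [100.0, 110.0, 120.0, 130.0]  # hypothetical monthly totals
    print(linear_forecast(history, months_ahead=1))
```

Comparing the forecast with the approved budget a few months out gives an early signal that a cost review is needed.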

7. Investing in Cloud Governance

Establishing comprehensive cloud governance protocols is a foundational element in the realm of cloud cost management. This entails the formulation of rules and policies governing cloud utilization, the delineation of roles and responsibilities, and the diligent monitoring of compliance.

How to Set Up FinOps in Your Business?

Stage 1: Planning FinOps in the Organization

1. Gather support: identify key stakeholders interested in increasing cloud margins, and familiarize yourself with the opportunities that better resource and expenditure analysis opens up for your organization.

2. Determine how much time monitoring and supporting FinOps will require in your organization, based on your reporting and data flow cycles.

3. Plan target actions and assemble a team with the relevant skills for FinOps.

4. Make decisions regarding the collection and storage of cloud consumption data.

5. Choose reporting tools and data delivery channels for FinOps stakeholders.

Stage 2: Adoption of FinOps

FinOps is a cultural change that requires the involvement of various teams and individuals throughout the organization. Communication and feedback cycles aimed at encouraging the practice are crucial. The goal of this stage is to present the FinOps plan created in Stage 1 to stakeholders. The following steps help communicate it clearly, easily, and quickly:

Share a high-level activity roadmap of FinOps and the value it brings to different teams and projects.

Understand cross-team challenges and explain/teach how FinOps can help address them.

Establish a collaboration model between FinOps and key partners (IT domains, controllers, program teams).

Create and implement a FinOps dashboard for key stakeholders and cross-functional teams.

Stage 3: Operational Phase

The FinOps lifecycle is built around a three-stage model, and the same principles apply at every stage:

Cross-functional teams must collaborate.

Decisions are made based on cloud value for the business.

Everyone takes responsibility for their cloud usage.

FinOps reports should be accessible and timely.

A centralized team manages FinOps.

Leverage the benefits of the cloud model with variable expenses.

To prepare for a successful FinOps practice, certain criteria need to be met:

Prepare a resource map or a list of resources in active projects, as specified in contracts and actively deployed environments.

Track complete and up-to-date consumption data from all cloud providers.

Enable cost analysis and expenditure forecasting for active projects.

Assess discrepancies between contractual (budgeted) and actual consumption levels.

Reporting is the only way to surface cloud consumption discrepancies and to offer recommendations for restructuring or resizing resources. The quality of the data collected through APIs or proprietary cloud solutions, as mentioned earlier, is a critical prerequisite for the reporting process.
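A discrepancy report of this kind can be sketched in a few lines. The example below compares budgeted and actual spend per project and keeps only the projects that deviate by more than a threshold. The data class, the 10% threshold, and the project names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class ProjectSpend:
    name: str
    budgeted: float  # contractual or budgeted monthly amount
    actual: float    # actual monthly consumption from billing data


def variance_report(projects: List[ProjectSpend],
                    threshold_pct: float = 10.0) -> List[Tuple[str, float]]:
    """Return (project, variance %) for projects deviating beyond the threshold.

    Positive variance means overspend, negative means underspend.
    Results are sorted by the size of the deviation, largest first.
    """
    report = []
    for p in projects:
        if p.budgeted <= 0:
            raise ValueError(f"project {p.name} has no positive budget")
        variance_pct = (p.actual - p.budgeted) / p.budgeted * 100
        if abs(variance_pct) >= threshold_pct:
            report.append((p.name, round(variance_pct, 1)))
    return sorted(report, key=lambda row: abs(row[1]), reverse=True)


if __name__ == "__main__":
    data = [
        ProjectSpend("checkout", 1000, 1300),
        ProjectSpend("search", 2000, 2050),
        ProjectSpend("batch", 500, 300),
    ]
    for name, pct in variance_report(data):
        print(f"{name}: {pct:+.1f}%")
```

Underspend is reported as well as overspend, since a project consistently far below budget may be holding commitments or reservations that could be reassigned.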

Top 3 FinOps Best Practices of Automation

1. Tag Management

After establishing a tagging standard for your organization, you can use automation to ensure compliance with this standard.

Start by identifying resources with missing or incorrectly applied tags, and then assign responsibility to rectify these tag violations. You can also proceed to stop or lock resources to compel owners to take action and potentially work on deletion or decommissioning policies for these resources.

However, resource deletion is a high-impact form of automation, so many companies will not reach this level of maturity immediately. It is advisable not to jump directly to resource deletion without first working through the earlier, less impactful levels of automation.
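The escalation described in this section (detect, assign, stop, and only then consider deletion) can be sketched as follows. The required tag set, the day thresholds, and the action names are hypothetical; your own tagging standard defines the real values.

```python
from typing import Dict, List

# Hypothetical tagging standard: every resource needs these tags.
REQUIRED_TAGS = ("owner", "project", "environment")


def find_tag_violations(resources: List[dict]) -> Dict[str, List[str]]:
    """Map resource id -> sorted list of required tags that are missing or empty."""
    violations = {}
    for resource in resources:
        tags = resource.get("tags") or {}
        missing = sorted(
            tag for tag in REQUIRED_TAGS
            if not (tags.get(tag) or "").strip()
        )
        if missing:
            violations[resource["id"]] = missing
    return violations


def next_action(days_in_violation: int) -> str:
    """Escalate gradually; deletion is only ever proposed for human review."""
    if days_in_violation < 7:
        return "notify_owner"
    if days_in_violation < 30:
        return "stop_resource"
    return "review_for_deletion"


if __name__ == "__main__":
    inventory = [
        {"id": "i-1", "tags": {"owner": "team-a", "project": "shop",
                               "environment": "dev"}},
        {"id": "i-2", "tags": {"owner": "team-b"}},
        {"id": "vol-3"},
    ]
    print(find_tag_violations(inventory))
    print(next_action(10))
```

Keeping the final step as a review rather than an automatic delete reflects the advice above about not jumping straight to the most impactful automation.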

2. Scheduled Resource Start/Stop

Automation lets you schedule resources to stop when they are not in use (e.g., outside of office hours) and bring them back online when needed.

The goal of this automation is to minimize impact on teams while saving significant costs during hours when their resources are idle. This automation is often deployed in development and testing environments, where resource unavailability is not noticed outside of working hours.

You should ensure that the implementation allows team members to bypass scheduled actions in case they need to keep a server active during off-hours. Additionally, canceling a scheduled task should not completely remove the resource from automation but merely skip the current execution.
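Here is a minimal sketch of that scheduling rule, including the bypass. It assumes working hours of 08:00 to 20:00, Monday to Friday, in the team's local time, and models the override as a timestamp that expires on its own, so the resource returns to the normal schedule without anyone re-enabling it. All names and hours are hypothetical.

```python
from datetime import datetime, time
from typing import Optional

WORK_START = time(8, 0)   # assumption: team starts at 08:00 local time
WORK_END = time(20, 0)    # assumption: team finishes by 20:00 local time


def should_be_running(now: datetime,
                      skip_until: Optional[datetime] = None) -> bool:
    """Decide whether a non-production resource should be up at `now`.

    skip_until: if set and still in the future, the scheduled stop is skipped.
    Once it passes, the normal schedule applies again automatically.
    """
    if skip_until is not None and now < skip_until:
        return True  # a team member asked to keep the resource up for now

    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    in_hours = WORK_START <= now.time() < WORK_END
    return is_weekday and in_hours


if __name__ == "__main__":
    monday_night = datetime(2024, 3, 4, 22, 0)
    override = datetime(2024, 3, 5, 6, 0)
    print(should_be_running(monday_night))            # normal schedule
    print(should_be_running(monday_night, override))  # one-off bypass
```

A scheduler job would call this function periodically and start or stop the resource when the result differs from its current state.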

3. Usage Reduction

Automation for usage reduction eliminates waste by notifying the responsible team members, so that they can act on cost optimization opportunities.

Automated resource data retrieval from services like Trusted Advisor (for AWS), third-party cost optimization platforms, or directly from resource metrics provides a straightforward way to send notifications to team members responsible for resources to investigate or, in some environments, allows for automatic resource termination or resizing.
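The notification step can be sketched as below. It assumes the utilization data has already been retrieved (from Trusted Advisor, a third-party platform, or raw metrics) into simple records; the field names and the 10% CPU threshold are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List


def build_idle_notifications(resources: List[dict],
                             cpu_threshold_pct: float = 10.0) -> Dict[str, List[str]]:
    """Group low-utilization findings by owner so each team gets one message.

    Each resource record is expected to contain:
    id, owner, avg_cpu_pct (average over the review window), monthly_cost.
    """
    notifications = defaultdict(list)
    for res in resources:
        if res["avg_cpu_pct"] < cpu_threshold_pct:
            notifications[res["owner"]].append(
                f"{res['id']}: avg CPU {res['avg_cpu_pct']:.1f}%, "
                f"${res['monthly_cost']:.2f}/month; "
                "consider resizing or terminating"
            )
    return dict(notifications)


if __name__ == "__main__":
    findings = [
        {"id": "i-aaa", "owner": "team-a", "avg_cpu_pct": 2.5, "monthly_cost": 70.08},
        {"id": "i-bbb", "owner": "team-a", "avg_cpu_pct": 55.0, "monthly_cost": 140.16},
        {"id": "i-ccc", "owner": "team-b", "avg_cpu_pct": 7.0, "monthly_cost": 56.21},
    ]
    for owner, messages in build_idle_notifications(findings).items():
        print(owner, messages)
```

Sending the grouped messages (by email, chat, or ticket) is left out on purpose, since the channel depends on your organization.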

Top Cloud FinOps KPIs

How do you measure the success of a FinOps program? From our experience, I can outline five main KPIs (but any KPI should be defined by your organization):

Cloud Spend

This metric shows how much money you spend on cloud services, giving you a clear picture of your cloud spending and helping you identify further areas to save money.

Cloud Utilization

This metric measures how efficiently you’re using your cloud resources.

Cloud Availability

This metric measures how reliable your cloud environment is and whether it meets performance expectations. Poor availability can lead to downtime and lost productivity.

Cloud Security

Cloud Security measures the security of your cloud environment and helps you identify any potential threats.

Cloud Adoption

Cloud Adoption measures the rate at which your organization is adopting cloud technologies.

Businesses can optimize their cloud investments and resource utilization by monitoring these five Cloud FinOps Key Performance Indicators (KPIs).

Furthermore, keeping an eye on these KPIs enables businesses to pinpoint cost-saving opportunities and enhance their cloud infrastructure.

Conclusion

In this article, we've covered the fundamentals of FinOps as well as how to set up Cloud FinOps practices in your business. By leveraging these capabilities, organizations can achieve greater cost visibility, financial control, and overall operational efficiency in their cloud environments.

Start your cloud FinOps journey with Gart's FinOps Assessment. You will get a roadmap and a completely executable plan wherever you are on your cloud journey.

So, whether you're implementing a full cloud operating model or just managing your cloud costs, collaborating with a Cloud FinOps partner like Gart can move your organization forward. Schedule a free consultation.