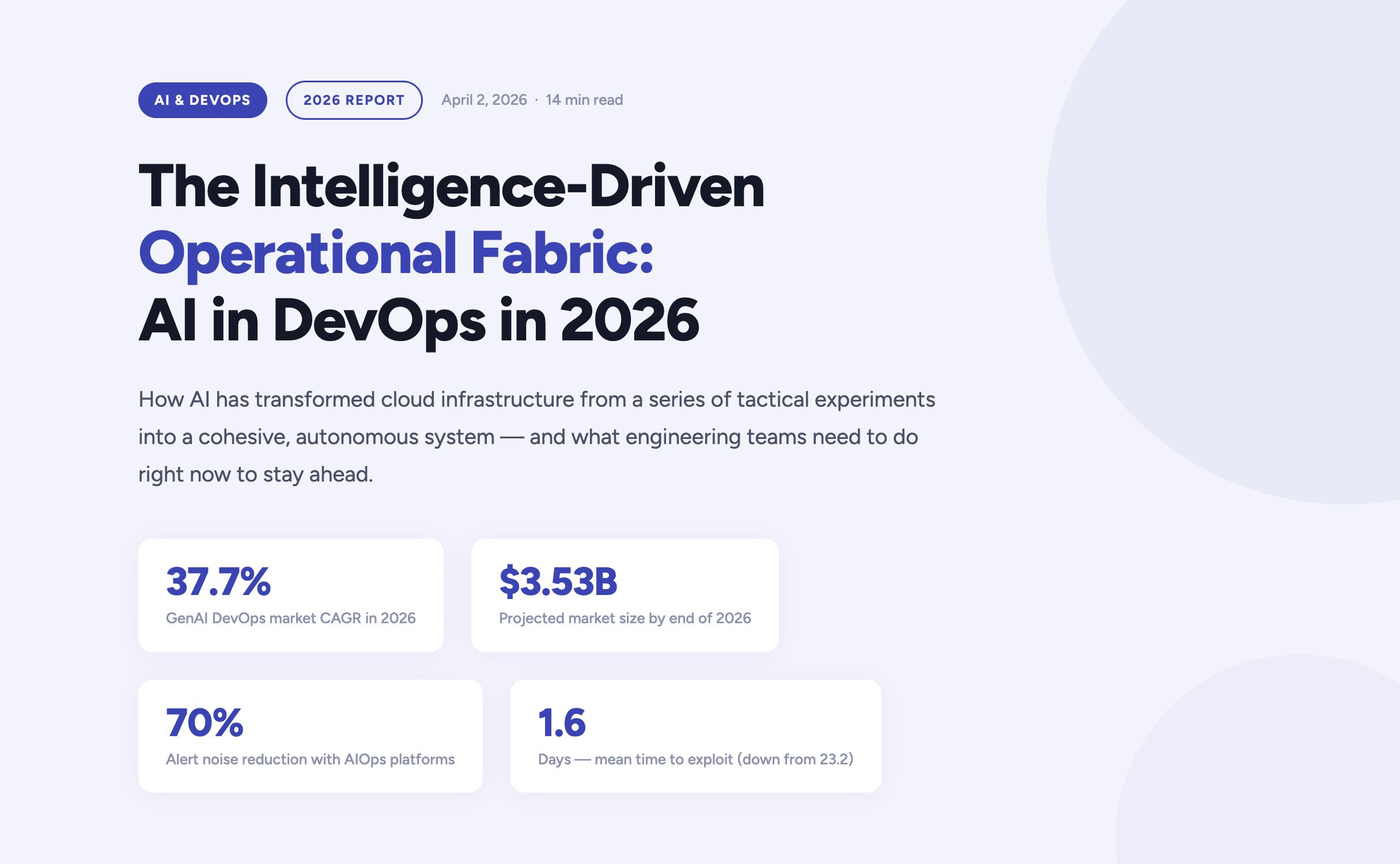

The year 2026 marks a definitive turning point in how enterprises build, deploy, and operate software. Artificial Intelligence has moved far beyond the experimental phase inside DevOps pipelines; it now forms the connective tissue of the entire software delivery lifecycle. According to current market analysis, the generative AI segment of the DevOps market is growing at a compound annual rate of 37.7% and is expected to reach $3.53 billion by the end of this year.

For engineering teams, platform engineers, and CTOs navigating this shift, the questions are no longer “should we adopt AI?” but rather “how do we govern it?”, “where does it amplify our strengths?”, and critically — “where does it expose our weaknesses?”. This article answers those questions, grounded in the realities of operating cloud infrastructure in 2026.

The AI velocity paradox — why more code isn’t always better

One of the most striking findings in the 2026 DevOps landscape is what researchers have begun calling the AI Velocity Paradox. AI-assisted coding tools have dramatically accelerated the code creation phase of the Software Development Life Cycle. However, the downstream delivery systems responsible for testing, securing, and deploying that code have often failed to keep pace, creating a structural mismatch between code production capacity and operational capacity.

The data tells a clear story. Teams that use AI coding tools daily are three times more likely to deploy frequently — but they also report significantly higher rates of quality failures, security incidents, and engineer burnout.

The AI DevOps maturity gap — occasional vs. daily AI tool users

| Performance Indicator | Occasional AI Usage | Daily AI Usage |
|---|---|---|
| Daily deployment frequency | 15% of teams | 45% of teams |
| Frequent deployment issues | Minimal | 69% of teams |
| Mean Time to Recovery (MTTR) | 6.3 hours | 7.6 hours |
| Quality / security problems | Baseline | 51% quality / 53% security |
| Engineers working overtime | 66% | 96% |

The root cause is structural: a “six-lane highway” of AI-accelerated code generation is funneling into a “two-lane bridge” of operational capacity. Engineers spend an average of 36% of their time on repetitive manual tasks — chasing tickets, rerunning failed jobs, manually validating AI-generated code — while developer burnout now affects 47% of the engineering workforce.

The implication is clear: AI does not automatically improve DevOps outcomes. Applied to brittle pipelines or fragmented telemetry, it accelerates instability. Applied to robust, standardized foundations, it becomes a force multiplier. The organizations that succeed in 2026 are those that modernize their entire delivery system — not just the IDE.

“Tech should do more than work: it should do good, and it should scale purposefully.”

Fedir Kompaniiets, CEO, Gart Solutions

Intent-to-Infrastructure — the evolution of IaC

Infrastructure as Code has been a DevOps cornerstone for years, but the model is undergoing a fundamental transformation in 2026. The industry is moving away from hand-crafted Terraform scripts and declarative state management toward what practitioners call Intent-to-Infrastructure — AI-powered platforms that interpret high-level business requirements and autonomously provision compliant, cost-optimized environments.

The evolution of Infrastructure as Code

| Generation | Primary Mechanism | Governance Model | Outcome Focus |
|---|---|---|---|
| IaC 1.0 (Legacy) | Manual scripting (Terraform, Ansible) | Periodic manual audits | Resource provisioning |
| IaC 2.0 (Standard) | Declarative state management | Automated policy checks | Environment consistency |
| Intent-Driven (2026) | AI translation of requirements | Continuous autonomous reconciliation | Business-aligned outcomes |

In the intent-driven model, a developer can express a requirement in plain language — for example, “provision a production-ready Kubernetes cluster with SOC 2-compliant networking for our EU-West workload” — and the platform autonomously generates, validates, and manages the resources. Compliance is no longer a retrospective audit exercise; it is embedded at the moment of generation.

This approach directly addresses one of the most persistent gaps in enterprise cloud governance: the Confidence Gap. While 77% of organizations report confidence in their AI-generated infrastructure, only 39% maintain the fully automated audit trails needed to actually verify those outputs. Intent-driven platforms close this gap by creating immutable, traceable records of every provisioning decision.
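
To make the idea of compliance embedded at generation time more concrete, here is a minimal Python sketch. It assumes the plain-language request has already been translated into a structured intent, checks that intent against policy, and writes a hash-chained record of the decision. The `Intent` fields, the `ALLOWED_REGIONS` set, and the two policy rules are all hypothetical illustrations and do not reflect any specific platform's schema.

```python
import hashlib
import json
from dataclasses import dataclass, asdict

# Hypothetical policy values, chosen only for illustration.
ALLOWED_REGIONS = {"eu-west-1", "eu-west-2"}


@dataclass(frozen=True)
class Intent:
    workload: str
    environment: str
    region: str
    compliance: tuple  # e.g. ("soc2",)


def validate(intent: Intent) -> list:
    """Return a list of policy violations; an empty list means compliant."""
    violations = []
    if intent.region not in ALLOWED_REGIONS:
        violations.append(f"region {intent.region} not allowed")
    if intent.environment == "production" and "soc2" not in intent.compliance:
        violations.append("production requires soc2 controls")
    return violations


def audit_record(intent: Intent, violations: list, prev_hash: str) -> dict:
    """Build a record chained to the previous one so tampering is detectable."""
    payload = {"intent": asdict(intent), "violations": violations, "prev": prev_hash}
    digest = hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()
    return {**payload, "hash": digest}


intent = Intent("checkout", "production", "eu-west-1", ("soc2",))
record = audit_record(intent, validate(intent), prev_hash="genesis")
```

Because each record includes the hash of the one before it, altering an earlier provisioning decision changes every later hash. That is one simple way to get the kind of traceability described above; a production system would also need durable, access-controlled storage for the chain.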

AIOps and the new era of observability

As cloud-native architectures scale in complexity, the challenge facing modern platform engineers is no longer the collection of telemetry data — it is the meaningful interpretation of it. According to Gartner, over 60% of production incidents in 2026 are caused by poor interpretation of existing data, not a lack of visibility. Teams are drowning in signals while missing the meaning.

This has driven the rapid maturation of AIOps — Artificial Intelligence for IT Operations — which shifts the operational model from reactive incident firefighting to predictive, self-healing systems. Modern AIOps platforms in 2026 are built on three core capabilities:

Predictive incident management

AI models trained on historical delivery patterns, change velocity data, and error logs can now surface probabilistic risk assessments hours before a service outage occurs. Rather than reacting to pages at 3am, platform teams receive prioritized warnings during business hours with recommended remediation paths.
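
As a rough illustration of early warning, the sketch below flags a service when its current error rate drifts far from its historical baseline. It is a deliberately simple statistical stand-in (a z-score), not the trained models described above, and the function names and the default threshold of 3.0 are assumptions made for this example.

```python
from statistics import mean, stdev


def risk_score(history, current):
    """Z-score of the current error rate against the historical baseline."""
    if len(history) < 2:
        return 0.0  # not enough data to estimate a baseline
    sigma = stdev(history)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is anomalous.
        return 0.0 if current == history[0] else float("inf")
    return (current - mean(history)) / sigma


def should_warn(history, current, threshold=3.0):
    """Raise a warning when the error rate is far above its usual range."""
    return risk_score(history, current) >= threshold


hourly_error_rates = [1.0, 1.2, 0.9, 1.1, 1.0]  # percent, illustrative values
warn = should_warn(hourly_error_rates, current=2.0)
```

A real platform would combine many more signals, such as change velocity and deployment history, but the shape is the same: score the deviation, compare against a threshold, and surface the warning before users notice.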

Autonomous remediation

For well-understood failure patterns — pod OOMKill events, connection pool exhaustion, SSL certificate expiry — AI agents can execute validated runbooks autonomously, patching or scaling systems within seconds of detection. Human intervention is reserved for novel or high-impact scenarios.
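
The split between known and novel failures can be sketched as a small dispatch table. The failure labels and runbook functions below are hypothetical placeholders that only return a description of what they would do; a real agent would call the relevant orchestration or cloud APIs.

```python
from typing import Callable, Dict


def restart_with_more_memory(target: str) -> str:
    return f"raised memory limit and restarted {target}"


def grow_connection_pool(target: str) -> str:
    return f"increased connection pool for {target}"


def renew_certificate(target: str) -> str:
    return f"renewed certificate for {target}"


# Only well-understood failure patterns get an automated runbook.
RUNBOOKS: Dict[str, Callable[[str], str]] = {
    "OOMKilled": restart_with_more_memory,
    "ConnectionPoolExhausted": grow_connection_pool,
    "CertificateExpiring": renew_certificate,
}


def remediate(failure: str, target: str, high_impact: bool = False) -> str:
    """Run the validated runbook, or escalate novel and high-impact cases."""
    runbook = RUNBOOKS.get(failure)
    if runbook is None or high_impact:
        return f"escalated {failure} on {target} to on-call engineer"
    return runbook(target)
```

Keeping the mapping explicit means the set of actions an agent may take on its own is reviewable in one place, and anything outside it falls through to a human by default.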

Intelligent alert prioritization

By correlating weak signals across application, infrastructure, and network layers, modern AIOps platforms reduce alert noise by up to 70%. Engineers no longer triage a wall of Slack notifications — they engage with a curated, context-rich incident queue.
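
One simple form of noise reduction is grouping related alerts into a single incident. The sketch below groups alerts from the same service that arrive within a time window of each other. This is a simplification: it correlates by service name only, not across application, infrastructure, and network layers, and the `Alert` fields and the 300-second window are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Alert:
    service: str
    timestamp: float  # seconds since some reference point
    message: str


def correlate(alerts: List[Alert], window: float = 300.0) -> List[List[Alert]]:
    """Group alerts per service when each follows the previous within `window`."""
    incidents: List[List[Alert]] = []
    open_incident = {}  # service name -> most recent incident for that service
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        group = open_incident.get(alert.service)
        if group is not None and alert.timestamp - group[-1].timestamp <= window:
            group.append(alert)
        else:
            group = [alert]
            incidents.append(group)
            open_incident[alert.service] = group
    return incidents


alerts = [
    Alert("api", 0, "latency high"),
    Alert("api", 60, "5xx rate rising"),
    Alert("db", 100, "replica lag"),
    Alert("api", 1000, "latency high"),
]
incidents = correlate(alerts)
```

Here four raw alerts collapse into three incidents, with the two early `api` alerts merged into one entry for the on-call engineer.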

DevSecOps 2.0 — when autonomous security becomes non-negotiable

The security landscape of 2026 is unforgiving. The mean time to exploit a known vulnerability has collapsed from 23.2 days in 2025 to just 1.6 days — faster than any human-speed security process can respond. This has driven a fundamental rearchitecting of DevSecOps, from a set of “shift left” practices to a fully autonomous, self-healing security model.

| Security Metric | Traditional DevSecOps | AI-Enhanced DevSecOps (2026) |
|---|---|---|
| Vulnerability identification | Periodic scanning of dependencies | Real-time scanning of code, containers, and runtimes |
| Threat response | Manual triage and incident response | Automated isolation of compromised resources |
| Compliance evidence | Manual spreadsheet collection | Automated, immutable audit trails |
| Risk assessment | Static CVSS vulnerability scoring | Contextual scoring based on reachability and blast radius |
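
The difference between static and contextual scoring in the last row can be shown with a small Python sketch. The weighting below (a 0.2 multiplier for unreachable code and a blast-radius factor capped at 1.0) is an arbitrary assumption chosen for illustration, not a standard formula, and the `Finding` fields are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    identifier: str
    cvss: float              # static base score, 0.0 to 10.0
    reachable: bool          # is the vulnerable code path actually invoked?
    dependent_services: int  # rough proxy for blast radius


def contextual_score(finding: Finding) -> float:
    """Scale the static CVSS score by reachability and blast radius."""
    reach_factor = 1.0 if finding.reachable else 0.2
    blast_factor = min(1.0, 0.5 + 0.1 * finding.dependent_services)
    return round(finding.cvss * reach_factor * blast_factor, 2)


unreachable_critical = Finding("finding-a", 9.8, reachable=False, dependent_services=10)
reachable_medium = Finding("finding-b", 6.5, reachable=True, dependent_services=5)
ranked = sorted(
    [unreachable_critical, reachable_medium], key=contextual_score, reverse=True
)
```

Under these assumed weights, the reachable medium-severity finding outranks the unreachable critical one, which is the kind of reprioritization contextual scoring is meant to produce.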

For regulated industries — healthcare, financial services, legal — compliance is no longer a quarterly exercise. In 2026, the most resilient organizations implement Compliance-by-Design infrastructure, where HIPAA, HITECH, SOC 2, and PCI-DSS controls are embedded directly into DevOps pipelines. Every commit, every deployment, every configuration change produces a verifiable, immutable compliance artifact — not as overhead, but as a natural byproduct of the engineering workflow.

The shift is cultural as well as technical: compliance is now understood as a growth enabler, not a hindrance. Organizations that can demonstrate real-time security posture attract enterprise customers, pass procurement audits, and move faster through regulated markets.

FinOps and the economics of intelligent infrastructure

Cloud spending has become a top-five P&L line item for most mid-to-large enterprises in 2026. Uncontrolled SaaS sprawl, over-provisioned Kubernetes clusters, and idle development environments have made AI-driven FinOps not just a cost-optimization strategy, but a boardroom-level priority.

The latest generation of FinOps tooling applies AI in two directions: reactive optimization (identifying and eliminating waste in existing infrastructure) and proactive cost governance (embedding unit cost constraints into provisioning workflows before resources are ever created). The results are significant — in some cases, organizations achieve savings of up to 80% on AWS compute budgets through spot instance migration, rightsizing, and automated idle resource termination.
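
Both directions can be sketched in a few lines of Python. The reactive half finds idle instances and estimates what they cost per month; the proactive half rejects a provisioning request whose unit cost would exceed a cap. The `Instance` fields, the 5% CPU threshold, and the 730-hour month are assumptions made for this example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Instance:
    name: str
    hourly_cost: float      # in dollars
    avg_cpu_percent: float  # average utilization over the review period


def find_idle(instances: List[Instance], cpu_threshold: float = 5.0) -> List[Instance]:
    """Reactive: instances whose average CPU sits below the idle threshold."""
    return [i for i in instances if i.avg_cpu_percent < cpu_threshold]


def monthly_waste(instances: List[Instance], hours: int = 730) -> float:
    """Estimated monthly spend on idle instances."""
    return sum(i.hourly_cost * hours for i in find_idle(instances))


def within_unit_cost(hourly_cost: float, requests_per_hour: float,
                     max_cost_per_request: float) -> bool:
    """Proactive: approve provisioning only if cost per request stays under the cap."""
    if requests_per_hour <= 0:
        return False
    return hourly_cost / requests_per_hour <= max_cost_per_request


fleet = [Instance("dev-sandbox", 0.10, 2.0), Instance("api-prod", 0.50, 40.0)]
idle_names = [i.name for i in find_idle(fleet)]
```

The proactive check is the more interesting of the two, because it runs before any resource exists and so prevents waste instead of cleaning it up afterwards.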

Increasingly, FinOps and sustainability are being treated as two sides of the same coin. By eliminating idle compute and over-provisioned infrastructure, organizations simultaneously reduce cloud spend and digital carbon footprint — what practitioners are calling Green FinOps. At Gart Solutions, 70% of client workloads are optimized to run on green cloud platforms as part of a carbon-neutral-by-default infrastructure strategy.

“Applied to brittle pipelines or fragmented telemetry, AI accelerates instability. Applied to robust, standardized foundations, it becomes the force multiplier that allows organizations to scale resilience at the speed of code.”

Roman Burdiuzha, CTO, Gart Solutions

Human-on-the-Loop governance — the new control model

As AI agents take over increasing portions of the operational layer, one of the defining debates of 2026 is where to draw the line on autonomy. The industry consensus has moved away from both extremes — fully manual “Human-in-the-Loop” (HITL) processes that create bottlenecks, and fully autonomous systems that introduce unacceptable risk — toward a middle path: Human-on-the-Loop (HOTL) governance.

In the HOTL model, AI agents operate autonomously within predefined guardrails. Humans shift from being operators to being overseers — setting policies, reviewing exceptions, and vetoing high-stakes decisions. The architecture is built on four pillars:

- Step and cost thresholds — Hard limits on the number of actions an agent can execute per session, or the total tokens consumed, prevent infinite loops and runaway infrastructure costs.

- The Veto Protocol — For high-risk decisions (budget reallocations, production changes above a defined blast radius), the agent surfaces a structured “Decision Summary” for asynchronous human review before proceeding.

- Identity and access control — Agents are granted short-lived, task-scoped credentials. They never hold standing access to production environments; every session is authenticated, logged, and time-bounded.

- Immutable audit trails — Every agent action generates a cryptographically signed record, ensuring full traceability for compliance and post-incident review.
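
Three of these pillars (thresholds, the veto protocol, and signed audit records) can be sketched together in Python. This is a minimal sketch under stated assumptions: the `GovernedAgent` class and its limits are hypothetical, records are signed with an HMAC from the standard library where a real deployment might prefer asymmetric signatures, and identity and access control is not modeled at all.

```python
import hashlib
import hmac
import json


class BudgetExceeded(Exception):
    """Raised when an agent would exceed its step or token limit."""


class GovernedAgent:
    def __init__(self, signing_key: bytes, max_steps: int = 20, max_tokens: int = 50_000):
        self._key = signing_key
        self.max_steps = max_steps
        self.max_tokens = max_tokens
        self.steps = 0
        self.tokens = 0
        self.audit_log = []
        self.pending_review = []  # decision summaries awaiting a human

    def _record(self, entry: dict) -> None:
        """Append a signed record so later edits to the log are detectable."""
        body = json.dumps(entry, sort_keys=True).encode()
        signature = hmac.new(self._key, body, hashlib.sha256).hexdigest()
        self.audit_log.append({**entry, "signature": signature})

    def act(self, action: str, tokens: int, high_risk: bool = False) -> str:
        # Threshold check: refuse before doing any work.
        if self.steps + 1 > self.max_steps or self.tokens + tokens > self.max_tokens:
            self._record({"action": action, "status": "blocked"})
            raise BudgetExceeded(action)
        self.steps += 1
        self.tokens += tokens
        # Veto protocol: high-risk actions wait for asynchronous human review.
        if high_risk:
            self.pending_review.append({"action": action, "reason": "high risk"})
            self._record({"action": action, "status": "awaiting_review"})
            return "awaiting_review"
        self._record({"action": action, "status": "executed"})
        return "executed"
```

A short usage example: `GovernedAgent(b"demo-key", max_steps=2).act("scale web tier", tokens=10)` returns `"executed"`, while the same call with `high_risk=True` returns `"awaiting_review"` and queues a decision summary without proceeding.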

This governance model is not a limitation on AI capability — it is what makes AI capability trustworthy enough to deploy at scale in regulated, high-stakes environments.

Industry-specific transformations

Manufacturing — the intelligent shop floor

Manufacturing organizations face a persistent challenge: deeply siloed data environments where Manufacturing Execution Systems (MES), ERP platforms, IoT sensor networks, and POS systems rarely communicate in real time. In 2026, cloud-native, AI-powered integration layers are dissolving these silos, enabling predictive maintenance, real-time production analytics, and supply chain transparency from raw material to finished product.

For one manufacturing client, a custom Green FinOps strategy eliminated over-provisioned infrastructure while a blockchain-based supply chain integration created end-to-end product traceability. The combined impact: measurable cost savings, improved regulatory compliance, and a more resilient operational model.

Healthcare — securing the patient data journey

In healthcare, the stakes of a misconfigured infrastructure are clinical as well as financial. DevOps practices in this sector are purpose-built around securing electronic health records, ensuring FDA and HIPAA compliance, and protecting medical device software against zero-day vulnerabilities. AI-driven monitoring continuously scans for “blind spots” that could lead to clinical data loss — not just at deployment time, but across the full runtime lifecycle.

SaaS and fintech — scaling without headcount sprawl

SaaS companies and fintech startups are increasingly turning to DevOps-as-a-Service to manage global availability and rapid iteration cycles without proportional growth in engineering headcount. By embedding automated security tasks, infrastructure-as-code provisioning, and AI-driven observability into every deployment, these teams can scale their products while maintaining the operational quality standards that enterprise customers demand.


Your 2026 AI DevOps roadmap

Organizations that are successfully navigating the AI transition in 2026 share a common pattern. They did not bolt AI onto existing processes — they built the foundations first, then amplified them. The roadmap has four distinct stages:

Data readiness audit

Ensure that observability data — logs, metrics, traces, events — is clean, normalized, and accessible across organizational silos. AI models are only as good as the telemetry they consume. Fragmented, noisy data produces fragmented, unreliable AI recommendations.

High-ROI use case selection

Start with workflows where AI delivers measurable, auditable value — automated testing, incident triage, IaC generation, cost anomaly detection. Build confidence and governance muscle before expanding to higher-risk autonomous operations.

Governance architecture

Establish the guardrails — HOTL oversight protocols, agent identity controls, immutable audit trails, cost thresholds — before deploying autonomous agents into production environments. Governance is not friction; it is what makes speed sustainable.

AI fluency across the engineering organization

Develop the skills required to oversee, interact with, and continuously improve intelligent agents. The competitive advantage in 2027 will belong to teams that can govern AI effectively — not just deploy it.

The 2026 AI-native DevOps toolchain

The toolchain of 2026 is defined by intelligence at every stage of the delivery pipeline. Unlike earlier generations of tooling that added AI as an afterthought, these platforms are AI-native — built from the ground up to learn, adapt, and act autonomously.

| Tool | Domain | Key AI Capability |
|---|---|---|
| Snyk | Security | Real-time AI scanning for dependencies, containers, and IaC |
| Spacelift | Infrastructure | Multi-tool IaC management with AI policy enforcement |
| Harness | CI/CD | Intelligent software delivery with autonomous deployment verification |
| Datadog | Monitoring | AI-augmented full-stack visibility, anomaly detection, log correlation |
| PagerDuty | Incident Management | ML-based event correlation and intelligent noise reduction |
| StackGen | Platform Eng. | AI-powered intent-to-infrastructure generation |
| K8sGPT | Kubernetes | Natural language explanation and diagnosis of cluster errors |
| Sysdig Sage | DevSecOps | AI analyst for runtime security threat detection and CNAPP |
| Cast AI | FinOps | Autonomous Kubernetes cost optimization and rightsizing |

Conclusion — from manual doers to intelligent orchestrators

The convergence of AI and DevOps in 2026 has redefined what is possible in software delivery. The organizations that thrive are not those that deploy the most AI tools — they are those that build the most resilient foundations and then amplify those foundations intelligently. Cloud infrastructure is no longer a hosting environment. It is an intelligent fabric that predicts, learns, and self-heals.

The transition is as cultural as it is technical. Engineering teams are moving from being manual operators to being intelligent orchestrators — governing not through a queue of tickets, but through the strategic definition of intent and the rigorous enforcement of outcomes. For those willing to make this shift, the competitive advantage is significant, durable, and compounding.

This is the principle Gart Solutions has built its entire practice around: tech should do more than work. It should do good, and it should scale purposefully.

Build your intelligent operational fabric with us

A boutique DevOps and cloud infrastructure partner for engineering teams that want to scale reliably, securely, and sustainably — without the overhead of a hyperscaler.

DevOps as a Service

Full-lifecycle CI/CD design, automation, and platform engineering for teams that need reliable, battle-tested delivery pipelines at startup speed.

Cloud migration & adoption

Strategic migration from on-premise or legacy cloud environments to modern, cost-optimized, and green cloud architectures on AWS, GCP, or Azure.

DevSecOps automation

Compliance-by-design infrastructure for regulated industries — embedding HIPAA, SOC 2, and PCI-DSS controls directly into your delivery pipeline.

AIOps & observability

End-to-end observability strategy — from eBPF telemetry and distributed tracing to AI-powered alerting, anomaly detection, and autonomous runbook execution.

FinOps & cloud cost optimization

Cloud cost audits, spot instance migration, idle resource termination, and Kubernetes rightsizing — achieving savings of up to 80% on cloud budgets.

Managed infrastructure

24/7 proactive management of your cloud infrastructure, with SLA-backed uptime guarantees, automated scaling, and continuous compliance monitoring.
