In 2026, the line between a thriving digital business and a stagnating one often comes down to how you allocate IT spend. Here's what every tech leader should know — and how Gart Solutions helps you get it right.

64%

Average cloud cost reduction via FinOps

81%

Compute savings on Azure Spot VMs (AI client)

200×

Operational efficiency gains in retail cloud migrations

Why this decision matters more than ever

Technology spending is no longer a back-office function managed quietly by IT. It's a board-level conversation that shapes cash flow, tax strategy, and competitive agility. The distinction between Capital Expenditure (CapEx) and Operational Expenditure (OpEx) has evolved from a simple accounting rule into a core lever for how fast your organization can move.

For most organizations, the shift is already underway — but moving from on-premise infrastructure to cloud isn't just a technical migration. It's a financial transformation that requires the right expertise to execute safely and sustainably.

"The question isn't whether to move to OpEx. It's whether you have the discipline and expertise to manage it well — and that's exactly where most teams underestimate the challenge."

CapEx vs. OpEx: the fundamentals

Before diving into strategy, let's establish clear definitions for how these models apply to IT in practice.

Traditional Model

Capital Expenditure

▪ Outright purchase of servers, switches, storage hardware

▪ Costs capitalized on the balance sheet (PP&E)

▪ Depreciated over 3–5 years

▪ Large upfront cash outlay in Year 1

▪ Organization owns & maintains all assets

▪ Rigid capacity planning; expensive to scale

Cloud-Native Model

Operational Expenditure

✓ Monthly subscriptions to AWS, Azure, GCP

✓ Fully expensed in the current fiscal year

✓ Immediate tax deductibility

✓ Zero upfront capital — pay only for what you use

✓ Provider handles maintenance & upgrades

✓ Elastic scaling: grow or shrink on demand

5-year TCO: the numbers don't lie

For a typical mid-market organization (50–150 users), the five-year total cost of ownership tells a compelling story. The on-premise model requires significant capital in Year 1 alone — before a single workload runs in production.

| Cost component (5-year window) | On-premise model | Cloud-hosted model |
|---|---|---|
| Year 1 capital outlay | $58,000 – $128,000 | $0 |
| Hardware refresh / upgrades | Significant periodic reinvestment | Included in subscription |
| Power, cooling & facilities | Fully borne by the organization | Included in service fees |
| IT staff (maintenance focus) | High headcount requirement | Shift to optimization focus |
| Total estimated TCO (5 years) | $553,000 – $1,138,000 | $350,000 – $820,000 |
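
To make the comparison concrete, the five-year totals can be turned into a savings range. The sketch below only does arithmetic on the two TCO ranges quoted in the table; the `CostRange` type and the choice to compare low estimate against low estimate (and high against high) are illustrative assumptions, not a costing methodology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CostRange:
    """A low/high estimate in US dollars."""
    low: int
    high: int


# Five-year TCO ranges copied from the comparison table.
ON_PREM_TCO = CostRange(553_000, 1_138_000)
CLOUD_TCO = CostRange(350_000, 820_000)


def savings(on_prem: CostRange, cloud: CostRange) -> CostRange:
    """Savings when comparing low estimate to low and high to high."""
    return CostRange(on_prem.low - cloud.low, on_prem.high - cloud.high)


def savings_pct(on_prem_cost: int, cloud_cost: int) -> float:
    """Savings as a percentage of the on-premise cost, one decimal place."""
    return round(100 * (on_prem_cost - cloud_cost) / on_prem_cost, 1)


five_year = savings(ON_PREM_TCO, CLOUD_TCO)
print(five_year)                                       # CostRange(low=203000, high=318000)
print(savings_pct(ON_PREM_TCO.low, CLOUD_TCO.low))     # 36.7
print(savings_pct(ON_PREM_TCO.high, CLOUD_TCO.high))   # 27.9
```

On these figures the cloud-hosted model comes out roughly 28% to 37% cheaper over five years, before any FinOps optimization is applied.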

The cloud advantage is real — but only if costs are actively managed. Without a FinOps discipline, cloud bills can grow faster than revenue. That's where Gart Solutions' expertise becomes a strategic asset, not just a vendor relationship.

FinOps: the discipline that makes OpEx work

Transitioning to OpEx without a financial operations framework is like switching from a fixed salary to freelance income without a budget — the flexibility is there, but the risk of overspending is real. FinOps closes that gap by aligning engineering, finance, and business teams around a shared goal: every cloud dollar tied to measurable business value.

1. Inform: full cost visibility & tagging

2. Optimize: right-size, reserve, spot

3. Operate: governance, automation, policy

64%

Average cloud cost optimization delivered by Gart's FinOps engagements.

In one AI-driven manufacturing project, Azure Spot VM optimization alone drove an 81% reduction in compute costs, with zero performance impact.

How Gart approaches cloud cost optimization

Rightsizing & auto-scaling. We analyze actual resource utilization across your entire cloud estate and match instance types to real workload needs — eliminating the most common and expensive mistake in cloud: over-provisioning. AWS Auto Scaling is configured to respond to live demand, not theoretical peaks.
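
A rightsizing rule of this kind can be sketched in a few lines. The example below is a simplified illustration, not Gart's tooling: the vCPU size ladder, the use of 95th-percentile CPU utilization as the only signal, and the 60% target utilization are all assumptions chosen for the example.

```python
# Hypothetical vCPU size ladder; real instance families differ by provider.
AVAILABLE_VCPUS = [2, 4, 8, 16, 32, 64]


def recommend_vcpus(current_vcpus: int, p95_cpu_utilization: float,
                    target_utilization: float = 0.6) -> int:
    """Return the smallest size that keeps p95 load at or below the target.

    p95_cpu_utilization and target_utilization are fractions between 0 and 1.
    """
    if not 0 <= p95_cpu_utilization <= 1:
        raise ValueError("p95_cpu_utilization must be between 0 and 1")
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    # vCPUs needed so that the observed load lands at the target utilization.
    needed = current_vcpus * p95_cpu_utilization / target_utilization
    for size in AVAILABLE_VCPUS:
        if size >= needed:
            return size
    return AVAILABLE_VCPUS[-1]  # load exceeds the largest size on the ladder


print(recommend_vcpus(16, 0.20))  # 8  (over-provisioned: halve the instance)
print(recommend_vcpus(16, 0.55))  # 16 (already sized sensibly)
```

In practice memory, network, and burst behaviour matter as much as CPU, so a rule like this is a starting point for review rather than an automatic resize.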

Leveraged pricing models. Steady-state workloads move to Reserved Instances or Savings Plans (up to 72% cheaper). Fault-tolerant batch processes and CI/CD agents run on Spot Instances — delivering savings of up to 90% vs on-demand pricing.
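
The effect of mixing pricing models can be estimated with simple arithmetic. The sketch below uses the "up to" discounts quoted above as best-case values; the workload split and the monthly baseline are hypothetical inputs, and real discounts depend on term, region, and instance type.

```python
# Best-case discounts quoted in the text ("up to" figures, not guarantees).
RESERVED_MAX_DISCOUNT = 0.72
SPOT_MAX_DISCOUNT = 0.90


def blended_monthly_cost(on_demand_cost: float,
                         reserved_share: float,
                         spot_share: float,
                         reserved_discount: float = RESERVED_MAX_DISCOUNT,
                         spot_discount: float = SPOT_MAX_DISCOUNT) -> float:
    """Estimate the bill after moving shares of a workload off on-demand.

    on_demand_cost is what the whole workload would cost at on-demand rates.
    Shares are fractions of that workload; the remainder stays on-demand.
    """
    if reserved_share < 0 or spot_share < 0 or reserved_share + spot_share > 1:
        raise ValueError("shares must be non-negative and sum to at most 1")
    on_demand_share = 1 - reserved_share - spot_share
    multiplier = (on_demand_share
                  + reserved_share * (1 - reserved_discount)
                  + spot_share * (1 - spot_discount))
    return on_demand_cost * multiplier


# Hypothetical mix: 60% steady-state, 20% fault-tolerant batch, 20% on-demand.
estimate = blended_monthly_cost(10_000, reserved_share=0.6, spot_share=0.2)
print(round(estimate, 2))  # 3880.0
```

Because the discounts are upper bounds, the result is a floor on the achievable bill for that mix, not a forecast.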

Storage lifecycle management. We enforce automated tiering policies that move infrequently accessed data from high-performance block storage to cold tiers like Amazon S3 Glacier — significantly reducing one of the fastest-growing cost categories in enterprise cloud.

How Gart Solutions accelerates your transition

Knowing the theory is one thing. Executing a CapEx-to-OpEx migration safely, at scale, and without disrupting production — that's where engineering expertise matters. Here's what we bring to the table.

DevOps & CI/CD automation

We integrate automation into every stage of your infrastructure lifecycle — reducing time-to-market and ensuring cloud resources are only provisioned when genuinely needed.

Fractional CTO leadership

For organizations without senior tech leadership, our Fractional CTO service ensures every infrastructure decision — CapEx or OpEx — is strategically sound and future-proof.

Compliance as Code

For regulated industries — healthcare (HIPAA, GDPR, NIS2), fintech, and beyond — we embed compliance directly into the infrastructure pipeline, not as an afterthought.

Cloud migration & IT audit

We begin every engagement with a comprehensive IT audit — identifying hidden costs, legacy dependencies, and optimization opportunities before a single workload moves.

Tax advantages of the OpEx model

One of the most underappreciated benefits of cloud adoption is its impact on tax strategy. When your organization pays $20,000 in cloud hosting fees, that entire sum is typically deductible in the current fiscal year — providing immediate relief rather than a multi-year depreciation schedule.
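
The timing difference is easy to see side by side. The sketch below compares the same $20,000 treated as an expense in year one versus depreciated on a straight-line basis over five years, the upper end of the 3–5 year window mentioned earlier. Straight-line depreciation with no salvage value is an assumption made for simplicity; actual schedules and allowances vary by jurisdiction.

```python
def straight_line_deductions(cost: float, useful_life_years: int) -> list[float]:
    """Equal annual deductions over the asset's useful life (no salvage value)."""
    if useful_life_years <= 0:
        raise ValueError("useful_life_years must be positive")
    return [cost / useful_life_years] * useful_life_years


def expensed_deductions(cost: float, horizon_years: int) -> list[float]:
    """The full cost deducted in year one, nothing afterwards."""
    if horizon_years <= 0:
        raise ValueError("horizon_years must be positive")
    return [float(cost)] + [0.0] * (horizon_years - 1)


capex = straight_line_deductions(20_000, 5)
opex = expensed_deductions(20_000, 5)

print(capex[0])                 # 4000.0  deductible in year one under CapEx
print(opex[0])                  # 20000.0 deductible in year one under OpEx
print(sum(capex) == sum(opex))  # True: same total, different timing
```

The total deduction is identical; the OpEx treatment simply brings it forward, which is where the cash-flow benefit comes from.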

In the UK, the reformed RDEC scheme now covers cloud computing and data costs as qualifying R&D expenditure (from April 2023). In the US, cloud compute rental expenses can qualify as Research Expenditures under the R&E tax credit. For engineering-led organizations running simulations, model training, or data-intensive R&D workloads, this represents significant annual savings that compound with scale.

Software development costs can also be capitalized as intangible assets once the application development stage begins, which lets an organization blend CapEx and OpEx treatment strategically to manage margins. A close CTO-CFO partnership is essential to classify engineering activity correctly.

Looking ahead: CapEx vs. OpEx

As AI and ML workloads become central to product strategy, the cost management challenge intensifies. GPU compute is structurally more expensive than standard instances, and without proactive governance, AI infrastructure costs can spiral rapidly. In this environment, FinOps evolves from an analytical function into an active control mechanism — tracking cost per inference, cost per model version, and optimizing training pipelines continuously.
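
Cost per inference is the simplest of these unit metrics to compute. The sketch below is a minimal illustration; the hourly GPU rate, the hours consumed, the request volume, and the budget threshold are all hypothetical values, and a real implementation would pull them from billing exports and serving logs.

```python
def cost_per_inference(gpu_hourly_rate: float, gpu_hours: float,
                       inference_count: int) -> float:
    """GPU spend divided by the number of inferences served in that period."""
    if inference_count <= 0:
        raise ValueError("inference_count must be positive")
    return gpu_hourly_rate * gpu_hours / inference_count


def over_budget(unit_cost: float, budget_per_inference: float) -> bool:
    """True when the measured unit cost exceeds the agreed budget."""
    return unit_cost > budget_per_inference


# Hypothetical day: 10 GPU-hours at $4.00/hour serving 200,000 requests.
unit = cost_per_inference(gpu_hourly_rate=4.0, gpu_hours=10, inference_count=200_000)
print(f"{unit:.6f}")               # 0.000200
print(over_budget(unit, 0.00015))  # True: this would trigger an alert
```

Tracking the same figure per model version makes it visible when a new release quietly becomes more expensive to serve.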

Gart Solutions is already working with AI-driven clients across manufacturing, healthcare, and retail to implement GPU rightsizing, spot instance strategies for training jobs, and automated cost alerting — keeping AI ambitions financially grounded.

The global financial services sector is at an inflection point. For decades, traditional banks have operated on aging mainframe infrastructure that was designed for a batch-processing world — a world without real-time payments, open APIs, or autonomous AI agents. Today, approximately 43% of financial institutions still run core banking systems built more than 20 years ago. These systems, largely written in COBOL and similar procedural languages, have become the single greatest constraint on innovation in banking.

This article provides a comprehensive look at core banking modernization — the strategic, technical, and organizational journey from monolithic legacy platforms to composable, cloud-native, AI-ready financial ecosystems. Whether you are a CIO evaluating migration strategies or a product leader planning a fintech digital transformation roadmap, this guide covers everything you need to make informed, high-stakes decisions.

The economic burden of legacy infrastructure

The most persistent myth in banking IT is that maintaining a legacy system is cheaper than replacing it. In reality, financial institutions consistently underestimate the true total cost of ownership of legacy systems by 70 to 80%. When compliance overhead, integration workarounds, and innovation opportunity cost are factored in, actual IT expenditure runs 3.4 times higher than initially budgeted.

70%

Of IT budgets spent maintaining legacy systems rather than building new products

4.7×

More spent on compliance for legacy systems vs. modern alternatives

$2.41T

Cost of poor software quality in the US alone—with $1.52T in accumulated technical debt

This is the compounding nature of technical debt in banking legacy system modernization. Every year a bank delays, the workarounds grow more complex, the specialist consultants grow more expensive, and the competitive gap versus digital-native challengers widens. The market has a term for this: the "Innovation Tax." For every dollar spent on IT, less than 30 cents reaches new product development.

The core banking modernization market is projected to grow from USD 1.9 billion in 2025 to USD 16.8 billion by 2035, representing a CAGR of 24.4%. This is not a technology trend — it is a survival imperative for traditional financial institutions facing competition from cloud-native challengers.
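
The growth rate follows directly from the two market-size figures. As a quick check, the standard compound annual growth rate formula applied over the ten years from 2025 to 2035 reproduces the quoted figure; only the function name below is introduced for illustration.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# USD 1.9 billion in 2025 growing to USD 16.8 billion by 2035.
rate = cagr(1.9, 16.8, 2035 - 2025)
print(f"{rate:.1%}")  # 24.4%
```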

The hidden costs that inflate legacy TCO include: custom coding for minor product updates, exorbitant consulting fees for COBOL specialists, extended QA and testing cycles on unstable codebases, and the maintenance of redundant parallel systems following mergers and acquisitions. Each of these cost drivers compounds the others, creating a system that consumes budget without generating strategic value.

The COBOL talent crisis and operational resilience

One of the least discussed — yet most urgent — aspects of legacy modernization in fintech is the impending collapse of the specialist workforce that keeps legacy systems running. An estimated 220 billion lines of COBOL code remain in active production globally. These systems process 95% of ATM swipes and the majority of daily financial transactions worldwide. The workforce keeping them alive is rapidly disappearing.

The average COBOL programmer is 55 years old. Approximately 10% of this workforce retires every year, with no meaningful pipeline of replacement talent.

60% of organizations using COBOL cite finding skilled developers as their primary operational challenge, leading to delayed security patches and extended system downtime.

"Black box" logic — business rules embedded in decades-old code that no current employee fully understands — poses a systemic risk to operational resilience.

This is not a distant risk. It is happening now. Banks that have not initiated structured knowledge-capture and code-analysis programs are accumulating operational fragility with every retirement notice. The question is not if your COBOL estate will become unmanageable, but when.

Modernization strategies: a practical comparison

There is no single correct approach to core banking modernization. The right strategy depends on institutional size, risk appetite, regulatory context, and existing technology investment. Below, we outline the three dominant approaches and their respective trade-offs.

Rip and replace — the high-risk path

The "Big Bang" or "Rip and Replace" strategy involves decommissioning the legacy system and cutting over to a new platform in a single compressed timeframe. While it promises the fastest path to a clean architecture, its failure rate is significant. If the new system fails at the point of cutover, the bank goes offline entirely — as witnessed in the TSB disaster of 2018, which cost the institution over £600 million in direct and remediation costs.

⚠ Legacy Risk

Rip & Replace

High-variance, binary outcome: Success is not guaranteed.

Catastrophic failure potential: Risk of total system blackout if cutover fails.

Massive upfront capital: Requires significant investment before value is realized.

Rules lost in translation: Legacy business logic often fails to migrate correctly.

Governance complexity: High-stakes Board-level oversight required.

Regulatory misalignment: Approval timelines rarely match the aggressive cutover.

✓ Recommended Strategy

Phased Modernization

Controlled progress: Reversible steps reduce systemic danger.

Parallel processing: Legacy systems run alongside new components.

Incremental migration: Business logic is preserved and moved slowly.

Safe rollbacks: Revert any stage without a full institution outage.

Continuous delivery: Unlock new capabilities every few months, not years.

Clear audit trails: Easier regulatory engagement via step-by-step validation.

Automated refactoring — preserving intellectual property

Automated refactoring uses specialized tooling to convert legacy code — typically COBOL — into modern languages such as Java or C#. Critically, this method preserves the unique business logic that has been refined over decades. Rather than rebuilding rules from scratch (and inevitably missing edge cases discovered only after the fact), refactoring transforms the codebase while retaining its institutional knowledge, moving the application to a cloud-native, microservices-compatible foundation.

Hollowing out the core — the pragmatic middle path

A growing approach among regional and community banks is "hollowing out the core." Rather than replacing the legacy system entirely, this strategy decouples critical real-time services — transaction authorization, balance management, payment processing — and moves them to a modern cloud-native layer. A "Digital Twin" high-performance ledger handles real-time operations, while the legacy core continues performing non-real-time functions such as regulatory reporting and historical statement management.

Key benefit: By moving transaction authorization to a Digital Twin, banks can participate in instant payment networks like FedNow and RTP even when the legacy core is offline for nightly batch processing — unlocking real-time capability without a complete replacement project.
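
The mechanics can be sketched as a small in-memory model: the Digital Twin authorizes debits against its own real-time balances, queues each posting, and replays the queue to the legacy core once the batch window closes. The class and method names are hypothetical, and a production ledger would add durability, idempotency keys, and reconciliation checks.

```python
from dataclasses import dataclass, field


@dataclass
class DigitalTwinLedger:
    """Hypothetical real-time ledger that fronts a batch-oriented legacy core."""
    balances: dict[str, int]  # account id -> balance in cents
    pending_for_core: list[tuple[str, int]] = field(default_factory=list)

    def authorize_debit(self, account: str, amount_cents: int) -> bool:
        """Approve or decline in real time, without calling the legacy core."""
        if amount_cents <= 0:
            raise ValueError("amount_cents must be positive")
        if self.balances.get(account, 0) < amount_cents:
            return False
        self.balances[account] -= amount_cents
        # Remember the posting so the core can catch up later.
        self.pending_for_core.append((account, -amount_cents))
        return True

    def replay_to_core(self, core_balances: dict[str, int]) -> int:
        """Apply queued postings to the core in order; return how many were applied."""
        applied = 0
        while self.pending_for_core:
            account, delta = self.pending_for_core.pop(0)
            core_balances[account] = core_balances.get(account, 0) + delta
            applied += 1
        return applied


core = {"acct-1": 50_000}               # legacy core, offline for nightly batch
twin = DigitalTwinLedger(balances=dict(core))

print(twin.authorize_debit("acct-1", 12_500))  # True, approved while core is offline
print(twin.authorize_debit("acct-1", 90_000))  # False, insufficient funds
print(twin.replay_to_core(core), core)         # 1 {'acct-1': 37500}
```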

Composable banking and the BIAN standard

The end-state of fintech digital transformation in core banking is not a new monolith — it is a composable architecture. Rather than replacing one rigid system with another, composable banking assembles best-of-breed capability blocks, interconnected via standardized APIs, that can be independently updated, scaled, or replaced without disrupting the whole.

The Banking Industry Architecture Network (BIAN) provides the industry-standard framework for this approach, offering a common service landscape and shared vocabulary that dramatically reduces integration complexity. BIAN-aligned architectures offer three compounding benefits: they reveal redundancies in existing systems that drive consolidation savings; they enable a "change-the-bank" rather than just "run-the-bank" mentality; and they provide the clean integration surface required for embedding advanced AI and autonomous agents.

| BIAN Domain | Strategic Outcome | Operational Benefit |
|---|---|---|
| KYC & Onboarding | Rationalized onboarding systems | Faster customer acquisition |
| Payments Processing | Real-time event architecture | Reduced settlement latency |
| Credit Decisioning | Continuous compliance layer | Improved risk scoring |
| Identity Management | Trust as a Service model | Monetizable API endpoints |
| Data & Analytics | Unified data fabric | AI-ready data foundation |

BIAN-aligned APIs are evolving from internal integration tools into externally exposed capability endpoints — the foundation of embedded finance and Banking-as-a-Service business models. Institutions that align to BIAN now are building the commercial infrastructure of the next decade.

AI as a transformation catalyst — from generative to agentic

The role of artificial intelligence in core banking modernization is dual: it accelerates the transformation process itself, and it defines the target state that transformation is building toward. Both dimensions deserve serious attention.

AI accelerating migration

CIOs and engineering leaders are increasingly deploying generative AI to analyze legacy codebases, extract embedded business rules, and automate the conversion of procedural COBOL logic into modern architectures. This approach directly addresses the highest-risk phase of any banking legacy system modernization: the knowledge-capture and code-translation work that, done manually, is slow, expensive, and error-prone. AI-driven development reduces manual effort and dramatically improves migration accuracy.

Agentic AI in core banking modernization

The shift from generative AI to agentic AI represents a fundamental change in how banking operations are conceived. While 2024 and early 2025 were characterized by chatbots and text summarization, 2026 marks the year of autonomous AI agents capable of executing complex multi-step workflows without continuous human oversight.

McKinsey estimates that banks leveraging AI at scale can achieve a 30 to 50% acceleration in development timelines and unlock up to $1 trillion in annual value globally.

McKinsey & Company Research

In the context of core banking, agentic AI systems can autonomously reconcile ledgers, pre-underwrite loans by reading financial statements in real time, detect fraud in instant payment flows, and prioritize relationship management at scale. However, all of these capabilities depend on one prerequisite: a clean, integrated, real-time data foundation. AI strategy is only as good as the underlying data architecture — and this is precisely what legacy modernization enables.

| AI Capability Level | Application in Banking | Strategic Impact |
|---|---|---|
| Traditional ML | Fraud pattern detection and transaction monitoring | Risk reduction |
| Generative AI | Automated code refactoring and legacy logic extraction | Modernization speed |
| Agentic AI | Autonomous loan underwriting and workflow execution | Operational efficiency |
| Multi-agent Mesh | Cross-department collaboration and autonomous orchestration | Enterprise agility |

Managing distributed transactions: consistency vs. scalability

One of the most technically challenging aspects of legacy modernization in banking is the transition from the atomic consistency of monolithic databases to the distributed nature of microservices. In a legacy mainframe, a transaction either commits everywhere or rolls back everywhere — an elegantly simple guarantee. In a distributed cloud architecture, achieving the same guarantee requires deliberate architectural patterns.

Two-Phase Commit (2PC)

The 2PC protocol ensures strong, immediate consistency across multiple nodes through a coordinated "prepare" and "commit" sequence. It guarantees that all systems reflect the same state, which is essential for core ledger operations such as money transfers where immediate consistency is non-negotiable. The trade-offs are added latency, a single point of failure at the coordinator node, and data locks that can impede throughput under high volume.
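
The prepare and commit sequence can be illustrated with a toy, single-process model. The `Participant` class and `two_phase_commit` function below are hypothetical names for this sketch; a real coordinator would also persist its decision log and handle timeouts and participant crashes.

```python
class Participant:
    """A toy account store that can vote on, then apply, a balance change."""

    def __init__(self, name: str, balance: int) -> None:
        self.name = name
        self.balance = balance
        self._pending = None  # change held (locked) between prepare and commit

    def prepare(self, delta: int) -> bool:
        """Phase 1: vote yes only if the change can be applied safely."""
        if self.balance + delta < 0:
            return False
        self._pending = delta
        return True

    def commit(self) -> None:
        """Phase 2: apply the change that was prepared."""
        if self._pending is not None:
            self.balance += self._pending
            self._pending = None

    def rollback(self) -> None:
        """Discard a prepared change without applying it."""
        self._pending = None


def two_phase_commit(changes: list[tuple[Participant, int]]) -> bool:
    """Coordinator: commit everywhere only if every participant votes yes."""
    prepared = []
    for participant, delta in changes:
        if participant.prepare(delta):
            prepared.append(participant)
        else:
            for p in prepared:  # any "no" vote aborts the whole transaction
                p.rollback()
            return False
    for participant, _ in changes:
        participant.commit()
    return True


payer, payee = Participant("payer", 100), Participant("payee", 0)
print(two_phase_commit([(payer, -30), (payee, +30)]))    # True
print(payer.balance, payee.balance)                      # 70 30
print(two_phase_commit([(payee, +500), (payer, -500)]))  # False, nothing changes
print(payer.balance, payee.balance)                      # 70 30
```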

The Saga Pattern

The Saga pattern decomposes a distributed transaction into a sequence of smaller, independent local transactions. Consistency is maintained through eventual consistency and compensating transactions — reversal actions triggered if any step in the sequence fails. Sagas are more scalable and resilient, making them the preferred choice for long-running workflows in cloud-native environments: loan origination, customer onboarding, complex payment routing.
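
A minimal orchestrated saga can be sketched as a list of (action, compensation) pairs. The step names below are hypothetical and the example runs in a single process; a real saga would persist its progress and retry or escalate failed compensations.

```python
from typing import Callable

# Each step pairs a local transaction with the action that undoes it.
Step = tuple[Callable[[], None], Callable[[], None]]


def run_saga(steps: list[Step]) -> bool:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    compensations: list[Callable[[], None]] = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(compensations):
                undo()
            return False
        compensations.append(compensate)
    return True


log: list[str] = []


def reserve_funds() -> None:
    log.append("funds reserved")


def release_funds() -> None:
    log.append("funds released")


def run_credit_check() -> None:
    raise RuntimeError("credit bureau unavailable")  # simulated failure


def nothing_to_undo() -> None:
    pass


succeeded = run_saga([(reserve_funds, release_funds),
                      (run_credit_check, nothing_to_undo)])
print(succeeded, log)  # False ['funds reserved', 'funds released']
```

Between the failed step and the compensation, other services can observe the reserved funds. That window is what "eventual consistency" means in practice.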

| Feature | Two-Phase Commit (2PC) | Saga Pattern |
|---|---|---|
| Consistency model | Strict / immediate | Eventual |
| Transaction type | Single global transaction | Sequence of local transactions |
| Failure handling | Global rollback | Compensating transactions |
| Latency | Higher (coordination overhead) | Lower (local execution) |
| Scalability | Limited (global locks) | High (loosely coupled) |
| Best suited for | Core ledger, fund transfers | Onboarding, credit, workflows |

The strategic decision is not either/or. Well-designed modern core banking architectures apply 2PC for the immutable ledger layer where immediate consistency is the gold standard, and Saga patterns for auxiliary services where agility and scale matter more than synchronous guarantees.

Regulatory evolution: FiDA, PSD3, and the BaaS reckoning

Core banking modernization is not only a technology imperative — it is increasingly a regulatory mandate. The European and US regulatory environments are actively reshaping what modern banking infrastructure must be capable of, at a speed that legacy systems cannot match.

FiDA and the open finance transition

The EU's Financial Data Access (FiDA) regulation, expected to become law in 2026, marks the formal transition from Open Banking to Open Finance. FiDA expands consent-based data sharing to cover mortgages, loans, investments, insurance, and pensions — not just payment accounts. For established banks, this means building secure, standardized data-sharing infrastructure capable of delivering complex financial histories to third parties in seconds, with granular customer consent controls centralized in a single dashboard.

PSD3 and fraud prevention responsibility

Parallel to FiDA, PSD3 and the Payment Services Regulation (PSR) harmonize EU payment services and shift liability for financial losses onto payment service providers that fail to implement adequate fraud prevention. This regulatory shift increases pressure on banks to modernize core systems to support real-time transaction monitoring and stronger multi-factor customer authentication — capabilities that most legacy platforms cannot deliver natively.

| Regulation | Primary Focus | Timeline |
|---|---|---|
| FiDA | Data access and open finance framework | 2026 / 2027 |
| PSD3 / PSR | Fraud prevention and payments harmonization | 2026 |
| DORA | Digital operational resilience, increased audit frequency | Active (2026 audits) |
| EU AI Act | Algorithmic transparency and risk-based AI governance | 2025 / 2026 |

The US BaaS regulatory reckoning

In the United States, 2024 and 2025 have seen a significant regulatory crackdown on the Sponsor Bank model underpinning Banking-as-a-Service. Federal agencies including the OCC and FDIC have issued consent orders to multiple banks, forcing them to substantially increase oversight of their fintech partners. The lesson is clear: BaaS is not a simple deposit-gathering product but a complex operational model requiring deep investment in integrated governance, third-party risk management, and real-time monitoring. Going into 2026, compliance is a competitive product feature — not just a back-office burden.

Case studies: the anatomy of failure and success

The disparity between transformations that succeed and those that fail is rarely technical at its root. It is almost always a function of governance, testing rigor, and the willingness to let technical readiness — not predetermined deadlines — drive the cutover decision.

⚠ Cautionary Tale

TSB Bank, 2018

The "Big Bang" Migration Failure

5 million customers migrated over a single weekend.

1.9 million customers locked out of accounts.

Mortgage accounts vanished; funds appeared in wrong accounts.

£613M total cost: Includes migration, remediation, and fines.

System went live with over 4,400 open defects.

Primary Failure: Timeline driven by business deadline, not technical readiness.

✓ Model Transformation

DBS & HSBC

The Digital-Native Rebuild

Phased strategy: Replaced monolith incrementally with a native cloud core.

50% cost reduction: Lowered cost-to-income ratio for digital customers.

AI deployment accelerated from 18 months to under 5 months.

Ranked Best Digital Bank globally multiple years running.

HSBC AI AML: Detects 2–4× more suspicious activity and reduces false positives by 60% across 1.2B monthly transactions.

The DBS and HSBC cases share a common thread: transformation was treated as an organizational capability-building exercise, not a one-time project. Leadership invested in data infrastructure, engineering culture, and modular architecture before attempting to harvest AI-driven outcomes — and the results compounded over years, not quarters.

Modern core banking providers: choosing the right platform

The provider landscape for core banking modernization has matured considerably over the past five years. The choice of platform is now among the most consequential strategic decisions a bank can make — and the right answer varies significantly by institution size, geography, and ambition.

| Provider & HQ | Target Market | Key Differentiation |
|---|---|---|
| Temenos (Switzerland) | Global Tier 1 & 2 banks | Composable banking cloud with deep global functional richness. |
| Mambu (Germany) | Neobanks & fintechs | Pure SaaS / composable structure for rapid market entry. |
| Thought Machine (UK) | Tier 1 retail banks | Cloud-native / smart contracts for infinite product flexibility. |
| 10x Banking (UK) | Tier 1 & global banks | Cloud-native core with AI-driven migration tooling. |
| Finxact (US) | Community & regional | US-localized cloud core tailored for American regulatory frameworks. |
| Fiserv / Jack Henry (US) | Community banks & CUs | Massive US market share and integrated TCO ecosystem. |

Tier 1 banks typically prioritize migration complexity management and global regulatory compliance, gravitating toward platforms like Thought Machine or 10x Banking for their ability to handle the transformation of aging mainframes. Community and regional banks focus on total cost of ownership and API flexibility, often choosing Finxact or Jack Henry to ensure open banking compatibility with fintech partners. The platform decision should always follow the architecture decision — not precede it.

The future: banking in 2030 and beyond

By 2030, the concept of a core banking system will have evolved from a monolithic booking engine into a distributed, orchestrating platform. The most significant shift will not be technical — it will be relational. Future banking infrastructure will move away from user-facing portals toward autonomous protocols that enable secure, verified interaction between AI agents.

In this agent-to-agent economy, a customer's personal AI might negotiate mortgage options or dynamically adjust investment allocations directly with a bank's AI system — reducing the need for direct human interaction at the transactional layer while elevating the human role to relationship and exception management. Gartner predicts that by 2030, agentic AI will make at least 15% of daily decisions autonomously, fundamentally flattening organizational structures and redefining the role of middle management in financial services.

Post-quantum cryptography (PQC): Banks must begin implementing quantum-resilient encryption now. The threat timeline from quantum decryption is measured in years, not decades, and migration of cryptographic standards at scale requires significant lead time.

Digital assets & CBDCs: Bitcoin, stablecoins, and Central Bank Digital Currencies are establishing themselves as permanent features of future balance sheets, requiring new valuation models and direct integration into core banking ledgers.

Banking as mentorship: Automation and real-time data analytics will enable banks to monitor customer financial health continuously, offering proactive advice and personalized interventions — evolving from transactional processors to valued financial partners.

By 2028, outdated banking systems are projected to cost global banks over $57 billion annually. The institutions that begin their core banking modernization journey now — systematically, with a clear data and architecture strategy — will define the competitive landscape of the next decade. Those that wait will be paying for the privilege of being disrupted.

Ready to launch your core banking modernization?

Gart Solutions is a technology-first engineering partner specializing in cloud infrastructure, data platform architecture, and DevSecOps for financial institutions navigating legacy modernization.

Cloud-native migration

Phased transition from mainframe and on-premise cores to AWS, GCP, or Azure — with zero-downtime architecture and full disaster recovery.

Data platform & AI readiness

Build the real-time data foundation that legacy systems can't provide — streaming pipelines, clean data lakes, and AI-ready feature stores.

API & composable banking

Design and implement BIAN-aligned, MACH-principle API layers that unlock open banking, BaaS, and embedded finance partnerships.

DevSecOps & compliance

Continuous compliance pipelines aligned to DORA, PSD3, and FiDA — shifting security and audit left into the development lifecycle.

Move faster — without the Big Bang risk.

Start the conversation

Key takeaways: core banking modernization

Avoid Big Bang: Adopt incremental, sidecar, or "hollow out the core" strategies. The risk profile of full cutover migrations is not acceptable for most institutions.

Data first: AI strategy is only as good as the data foundation. Prioritize clean, real-time, integrated data architecture before launching AI initiatives.

Embrace BIAN and MACH principles: Standardized, composable architecture avoids vendor lock-in and creates the integration surface for future capabilities.

Treat compliance as product: Particularly in BaaS and open finance contexts, regulatory readiness is a competitive differentiator, not just a cost center.

Start the COBOL clock: Knowledge-capture and codebase analysis should begin now, before the specialist workforce shrinks further and institutional knowledge is lost.

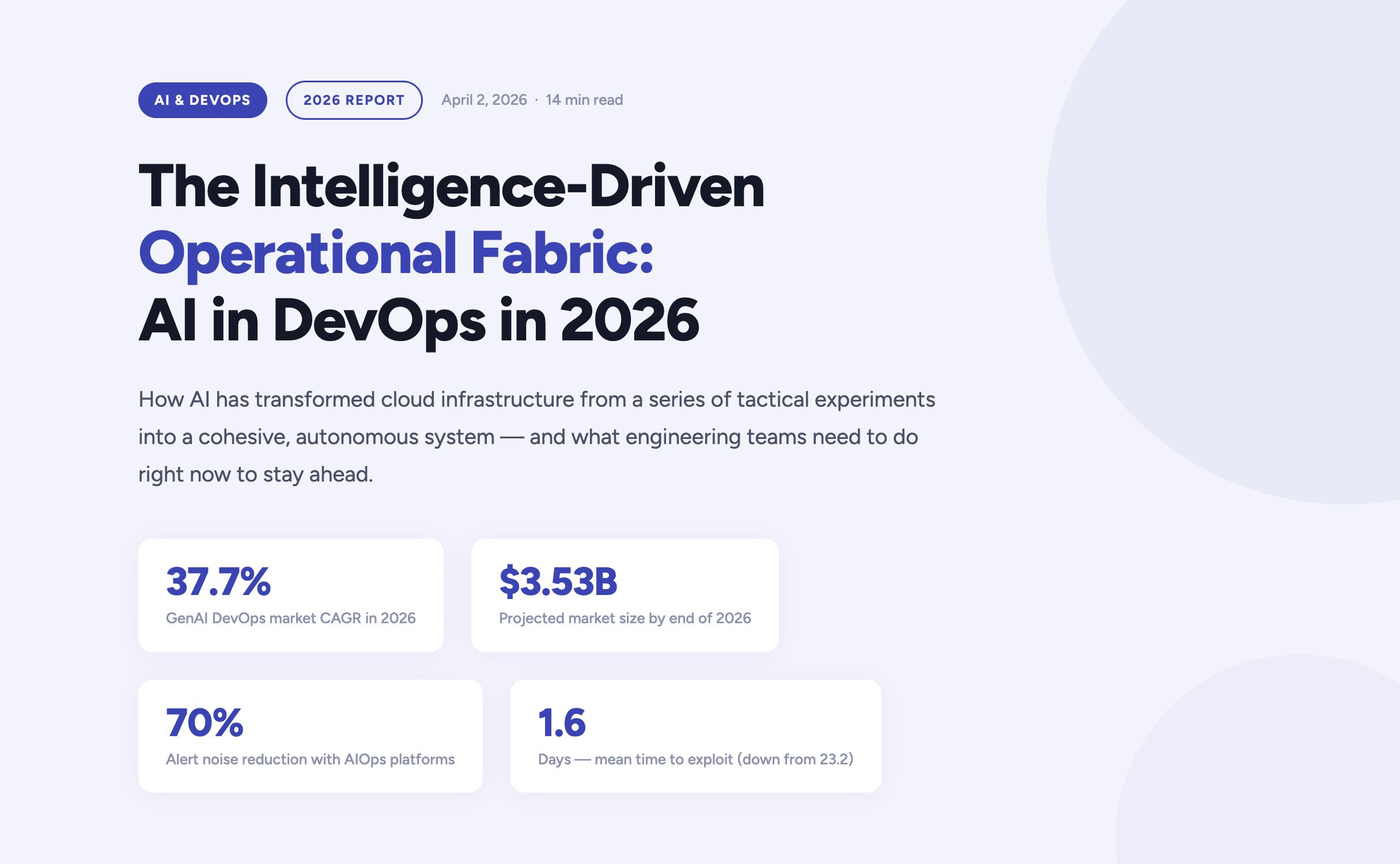

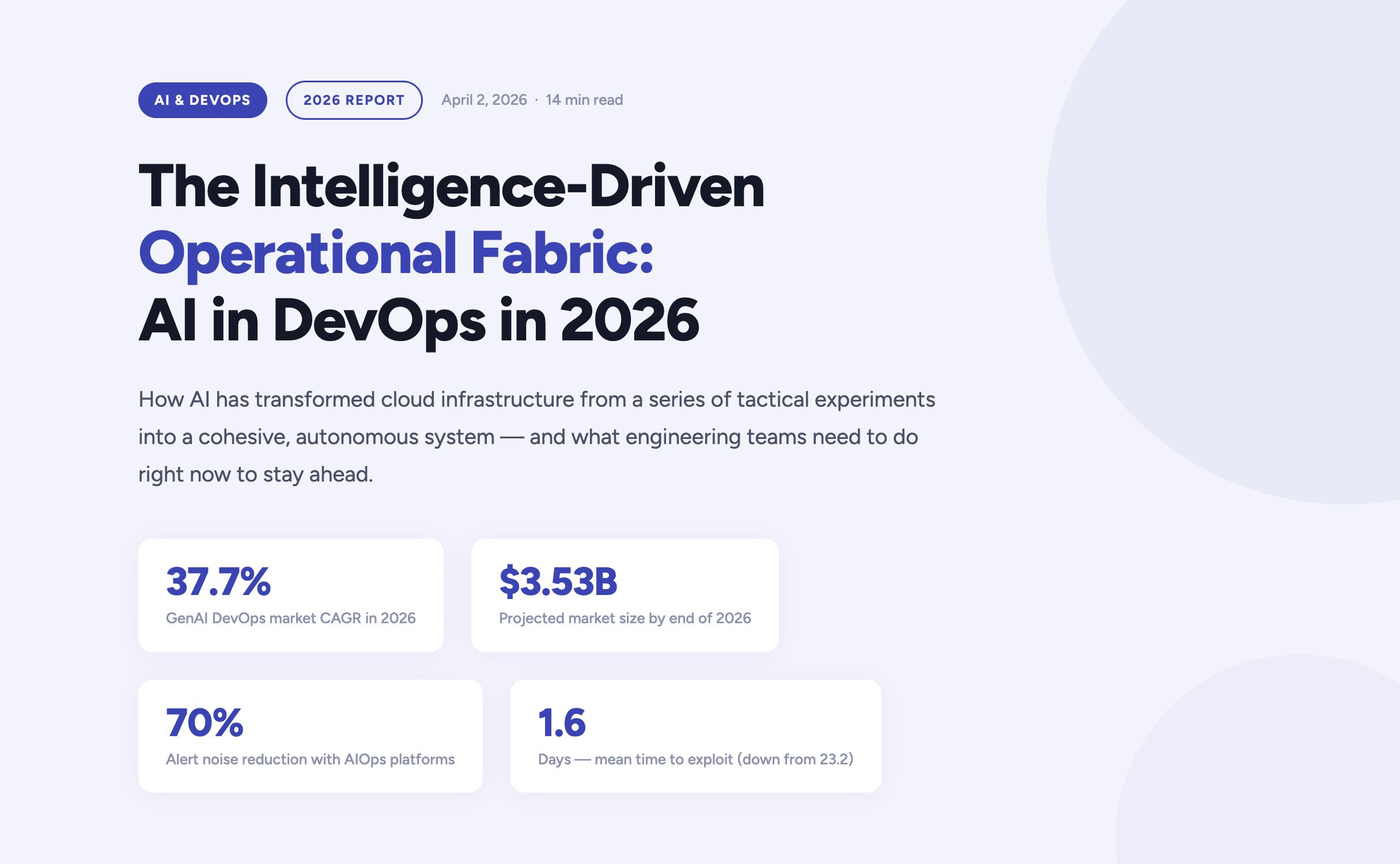

The year 2026 marks a definitive turning point in how enterprises build, deploy, and operate software. Artificial Intelligence has moved far beyond the experimental phase inside DevOps pipelines and now forms the connective tissue of the entire software delivery lifecycle. According to current market analysis, the generative AI segment of the DevOps market is growing at a compound annual rate of 37.7% and is expected to reach $3.53 billion by the end of this year.

For engineering teams, platform engineers, and CTOs navigating this shift, the questions are no longer "should we adopt AI?" but rather "how do we govern it?", "where does it amplify our strengths?", and critically — "where does it expose our weaknesses?". This article answers those questions, grounded in the realities of operating cloud infrastructure in 2026.

https://youtu.be/4FNyMRmHdTM?si=F2yOv89QU9gQ7Hif

The AI velocity paradox — why more code isn't always better

One of the most striking findings in the 2026 DevOps landscape is what researchers have begun calling the AI Velocity Paradox. AI-assisted coding tools have dramatically accelerated the code creation phase of the Software Development Life Cycle. However, the downstream delivery systems responsible for testing, securing, and deploying that code have often failed to keep pace — creating a structural mismatch between production and operations capacity.

The data tells a clear story. Teams that use AI coding tools daily are three times more likely to deploy frequently — but they also report significantly higher rates of quality failures, security incidents, and engineer burnout.

The AI DevOps maturity gap — occasional vs. daily AI tool users

The AI DevOps Maturity Gap — 2026 Analysis

| Performance Indicator | Occasional AI Usage | Daily AI Usage |
|---|---|---|
| Daily deployment frequency | 15% of teams | 45% of teams |
| Frequent deployment issues | Minimal | 69% of teams |
| Mean Time to Recovery (MTTR) | 6.3 hours | 7.6 hours |
| Quality / security problems | Baseline | 51% quality / 53% security |
| Engineers working overtime | 66% | 96% |

The root cause is structural: a "six-lane highway" of AI-accelerated code generation is funneling into a "two-lane bridge" of operational capacity. Engineers spend an average of 36% of their time on repetitive manual tasks — chasing tickets, rerunning failed jobs, manually validating AI-generated code — while developer burnout now affects 47% of the engineering workforce.

The implication is clear: AI does not automatically improve DevOps outcomes. Applied to brittle pipelines or fragmented telemetry, it accelerates instability. Applied to robust, standardized foundations, it becomes a force multiplier. The organizations that succeed in 2026 are those that modernize their entire delivery system — not just the IDE.

"Tech should do more than work — it should do good, and it should scale purposefully."

Fedir Kompaniiets, CEO, Gart Solutions

Intent-to-Infrastructure — the evolution of IaC

Infrastructure as Code has been a DevOps cornerstone for years, but the model is undergoing a fundamental transformation in 2026. The industry is moving away from hand-crafted Terraform scripts and declarative state management toward what practitioners call Intent-to-Infrastructure — AI-powered platforms that interpret high-level business requirements and autonomously provision compliant, cost-optimized environments.

The Evolution of Infrastructure as Code

| Generation | Primary Mechanism | Governance Model | Outcome Focus |
| --- | --- | --- | --- |
| IaC 1.0 — Legacy | Manual scripting (Terraform, Ansible) | Periodic manual audits | Resource provisioning |
| IaC 2.0 — Standard | Declarative state management | Automated policy checks | Environment consistency |
| Intent-Driven (2026) | AI translation of requirements | Continuous autonomous reconciliation | Business-aligned outcomes |

In the intent-driven model, a developer can express a requirement in plain language — for example, "provision a production-ready Kubernetes cluster with SOC 2-compliant networking for our EU-West workload" — and the platform autonomously generates, validates, and manages the resources. Compliance is no longer a retrospective audit exercise; it is embedded at the moment of generation.

This approach directly addresses one of the most persistent gaps in enterprise cloud governance: the Confidence Gap. While 77% of organizations report confidence in their AI-generated infrastructure, only 39% maintain the fully automated audit trails needed to actually verify those outputs. Intent-driven platforms close this gap by creating immutable, traceable records of every provisioning decision.

Key IaC Capabilities in 2026

Natural language provisioning — Describe infrastructure requirements in plain English, receiving validated, compliant Terraform or Pulumi code.

Golden path enforcement — Pre-approved patterns ensure every environment is secure by default, reducing misconfiguration risk.

Continuous autonomous reconciliation — AI continuously monitors for drift and self-corrects without human intervention.

Policy-as-code integration — OPA, Sentinel, and custom guardrails are embedded into generation pipelines, not added as an afterthought.

Cost-aware provisioning — FinOps constraints are applied at generation time, preventing over-provisioning before it happens.
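To make the golden-path, policy-as-code, and cost-aware ideas above concrete, here is a minimal sketch of a pre-provisioning check. It is plain Python rather than OPA or Sentinel, and everything in it (the `Resource` type, the allowed regions, the budget figure) is a hypothetical assumption chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Resource:
    name: str
    kind: str
    region: str
    encrypted: bool
    monthly_cost_usd: float


# Hypothetical guardrails; in practice these would live in a policy engine.
ALLOWED_REGIONS = {"eu-west-1", "eu-west-2"}
MONTHLY_BUDGET_USD = 5_000.0


def check_plan(resources: list[Resource]) -> list[str]:
    """Return human-readable violations; an empty list means the plan may proceed."""
    violations = []
    for r in resources:
        if r.region not in ALLOWED_REGIONS:
            violations.append(f"{r.name}: region {r.region} is outside the golden path")
        if not r.encrypted:
            violations.append(f"{r.name}: encryption at rest is required")
    total = sum(r.monthly_cost_usd for r in resources)
    if total > MONTHLY_BUDGET_USD:
        violations.append(
            f"plan: estimated ${total:,.2f}/month exceeds budget ${MONTHLY_BUDGET_USD:,.2f}"
        )
    return violations


plan = [
    Resource("k8s-cluster", "kubernetes", "eu-west-1", True, 3_200.0),
    Resource("audit-bucket", "object_storage", "us-east-1", False, 40.0),
]
for violation in check_plan(plan):
    print(violation)
```

The point of the design is ordering: the check runs on the generated plan before anything is created, so a non-compliant or over-budget environment is rejected rather than audited after the fact.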

AIOps and the new era of observability

As cloud-native architectures scale in complexity, the challenge facing modern platform engineers is no longer the collection of telemetry data — it is the meaningful interpretation of it. According to Gartner, over 60% of production incidents in 2026 are caused by poor interpretation of existing data, not a lack of visibility. Teams are drowning in signals while missing the meaning.

This has driven the rapid maturation of AIOps — Artificial Intelligence for IT Operations — which shifts the operational model from reactive incident firefighting to predictive, self-healing systems. Modern AIOps platforms in 2026 are built on three core capabilities:

Predictive incident management

AI models trained on historical delivery patterns, change velocity data, and error logs can now surface probabilistic risk assessments hours before a service outage occurs. Rather than reacting to pages at 3am, platform teams receive prioritized warnings during business hours with recommended remediation paths.

Autonomous remediation

For well-understood failure patterns — pod OOMKill events, connection pool exhaustion, SSL certificate expiry — AI agents can execute validated runbooks autonomously, patching or scaling systems within seconds of detection. Human intervention is reserved for novel or high-impact scenarios.
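One way to picture this split between autonomous and human-handled incidents is a simple dispatch table. The sketch below is an assumption-laden illustration: the pattern names, runbook functions, and the `high_impact` flag are all hypothetical, and the runbooks only return a description instead of touching real systems.

```python
from typing import Callable


def restart_with_higher_memory(target: str) -> str:
    return f"raised memory limit and restarted {target}"


def grow_connection_pool(target: str) -> str:
    return f"increased connection pool size for {target}"


def renew_certificate(target: str) -> str:
    return f"renewed certificate for {target}"


# Only well-understood failure patterns get an automated runbook.
RUNBOOKS: dict[str, Callable[[str], str]] = {
    "pod_oomkilled": restart_with_higher_memory,
    "connection_pool_exhausted": grow_connection_pool,
    "certificate_expiring": renew_certificate,
}


def handle_incident(pattern: str, target: str, high_impact: bool = False) -> str:
    """Run a validated runbook, or escalate novel and high-impact cases to a human."""
    runbook = RUNBOOKS.get(pattern)
    if runbook is None or high_impact:
        return f"escalated to on-call: {pattern} on {target}"
    return runbook(target)


print(handle_incident("pod_oomkilled", "checkout-api"))
print(handle_incident("unknown_latency_spike", "checkout-api"))
```

Keeping the set of automated patterns explicit means that anything the system has not seen before falls through to a person by default.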

Intelligent alert prioritization

By correlating weak signals across application, infrastructure, and network layers, modern AIOps platforms reduce alert noise by up to 70%. Engineers no longer triage a wall of Slack notifications — they engage with a curated, context-rich incident queue.
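The correlation step can be sketched very roughly as time-window grouping per service. Real AIOps platforms use far richer signals (topology, traces, learned models); the `Alert` fields, the five-minute window, and the ranking rule here are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    timestamp: float  # seconds since an arbitrary start
    service: str
    layer: str        # "application", "infrastructure", or "network"
    message: str


def correlate(alerts: list[Alert], window_seconds: float = 300.0) -> list[list[Alert]]:
    """Group alerts for the same service that arrive within the window of the previous one."""
    incidents: list[list[Alert]] = []
    open_incident: dict[str, list[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        group = open_incident.get(alert.service)
        if group is not None and alert.timestamp - group[-1].timestamp <= window_seconds:
            group.append(alert)
        else:
            group = [alert]
            open_incident[alert.service] = group
            incidents.append(group)
    return incidents


def prioritize(incidents: list[list[Alert]]) -> list[list[Alert]]:
    """Rank incidents that span more layers first, then by alert count."""
    return sorted(incidents, key=lambda g: (len({a.layer for a in g}), len(g)), reverse=True)


alerts = [
    Alert(0, "checkout", "application", "5xx spike"),
    Alert(60, "checkout", "infrastructure", "pod restarts"),
    Alert(90, "checkout", "network", "packet loss"),
    Alert(100, "search", "application", "slow queries"),
    Alert(1000, "checkout", "application", "5xx spike"),
]
incidents = prioritize(correlate(alerts))
print(f"{len(alerts)} alerts -> {len(incidents)} incidents")
```

In this toy input, five raw alerts collapse into three incidents, and the one touching all three layers is ranked first.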

60%+: Incidents from misinterpretation

70%: Less alert noise via AIOps

36%: Engineer time lost to manual tasks

eBPF: Deep visibility sans code changes

DevSecOps 2.0 — when autonomous security becomes non-negotiable

The security landscape of 2026 is unforgiving. The mean time to exploit a known vulnerability has collapsed from 23.2 days in 2025 to just 1.6 days — faster than any human-speed security process can respond. This has driven a fundamental rearchitecting of DevSecOps, from a set of "shift left" practices to a fully autonomous, self-healing security model.

Traditional vs. AI-Enhanced DevSecOps

| Security Metric | Traditional DevSecOps | AI-Enhanced DevSecOps (2026) |
| --- | --- | --- |
| Vulnerability identification | Periodic scanning of dependencies | Real-time scanning of code, containers, and runtimes |
| Threat response | Manual triage and incident response | Automated isolation of compromised resources |
| Compliance evidence | Manual spreadsheet collection | Automated, immutable audit trails |
| Risk assessment | Static CVSS vulnerability scoring | Contextual scoring based on reachability and blast radius |
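The difference between static and contextual scoring in the risk assessment row can be illustrated with a small sketch. The multipliers below are invented for the example and do not come from any scoring standard or vendor; the finding identifiers are placeholders, not real CVEs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    finding_id: str
    cvss: float              # static base score, 0.0 to 10.0
    reachable: bool          # is the vulnerable code path actually invoked?
    internet_exposed: bool
    dependent_services: int  # rough proxy for blast radius


def contextual_score(f: Finding) -> float:
    """Adjust a static CVSS score by reachability, exposure, and blast radius (assumed weights)."""
    score = f.cvss
    score *= 1.0 if f.reachable else 0.3
    score *= 1.2 if f.internet_exposed else 0.8
    score *= 1.0 + min(f.dependent_services, 10) * 0.05
    return round(min(score, 10.0), 2)


unreachable_critical = Finding("EXAMPLE-1", 9.8, False, False, 0)
reachable_medium = Finding("EXAMPLE-2", 6.5, True, True, 8)

for finding in (unreachable_critical, reachable_medium):
    print(finding.finding_id, finding.cvss, "->", contextual_score(finding))
```

Under these assumed weights, a reachable, internet-facing medium finding outranks an unreachable critical one, which is the reordering that static scoring cannot express.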

For regulated industries — healthcare, financial services, legal — compliance is no longer a quarterly exercise. In 2026, the most resilient organizations implement Compliance-by-Design infrastructure, where HIPAA, HITECH, SOC 2, and PCI-DSS controls are embedded directly into DevOps pipelines. Every commit, every deployment, every configuration change produces a verifiable, immutable compliance artifact — not as overhead, but as a natural byproduct of the engineering workflow.

The shift is cultural as well as technical: compliance is now understood as a growth enabler, not a hindrance. Organizations that can demonstrate real-time security posture attract enterprise customers, pass procurement audits, and move faster through regulated markets.

FinOps and the economics of intelligent infrastructure

Cloud spending has become a top-five P&L line item for most mid-to-large enterprises in 2026. Uncontrolled SaaS sprawl, over-provisioned Kubernetes clusters, and idle development environments have made AI-driven FinOps not just a cost-optimization strategy, but a boardroom-level priority.

The latest generation of FinOps tooling applies AI in two directions: reactive optimization (identifying and eliminating waste in existing infrastructure) and proactive cost governance (embedding unit cost constraints into provisioning workflows before resources are ever created). The results are significant — in some cases, organizations achieve savings of up to 80% on AWS compute budgets through spot instance migration, rightsizing, and automated idle resource termination.
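As a rough illustration of the reactive side, the sketch below flags idle instances for termination and interruption-tolerant ones for spot migration. The idle threshold, the spot discount, and the fleet itself are assumptions for the example; actual discounts vary by provider, region, and instance type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    name: str
    avg_cpu_pct: float
    monthly_cost_usd: float
    interruptible: bool  # can the workload tolerate spot interruptions?


IDLE_CPU_PCT = 5.0    # assumed idle threshold
SPOT_DISCOUNT = 0.60  # assumed discount, not a quoted provider price


def recommend(instances: list[Instance]) -> list[tuple[str, str, float]]:
    """Return (instance, action, estimated monthly saving in USD) tuples."""
    actions = []
    for i in instances:
        if i.avg_cpu_pct < IDLE_CPU_PCT:
            actions.append((i.name, "terminate", i.monthly_cost_usd))
        elif i.interruptible:
            actions.append((i.name, "move to spot", i.monthly_cost_usd * SPOT_DISCOUNT))
    return actions


fleet = [
    Instance("dev-sandbox", 1.2, 400.0, False),
    Instance("batch-worker", 55.0, 1_000.0, True),
    Instance("api", 40.0, 800.0, False),
]
actions = recommend(fleet)
saving = sum(amount for _, _, amount in actions)
total = sum(i.monthly_cost_usd for i in fleet)
print(f"estimated saving: ${saving:,.0f} of ${total:,.0f} per month")
```

The proactive direction described above would apply the same kind of rule at provisioning time, before the spend exists.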

Increasingly, FinOps and sustainability are being treated as two sides of the same coin. By eliminating idle compute and over-provisioned infrastructure, organizations simultaneously reduce cloud spend and digital carbon footprint — what practitioners are calling Green FinOps. At Gart Solutions, 70% of client workloads are optimized to run on green cloud platforms as part of a carbon-neutral-by-default infrastructure strategy.

"Applied to brittle pipelines or fragmented telemetry, AI accelerates instability. Applied to robust, standardized foundations, it becomes the force multiplier that allows organizations to scale resilience at the speed of code."

Roman Burdiuzha, CTO, Gart Solutions

Human-on-the-Loop governance — the new control model

As AI agents take over increasing portions of the operational layer, one of the defining debates of 2026 is where to draw the line on autonomy. The industry consensus has moved away from both extremes — fully manual "Human-in-the-Loop" (HITL) processes that create bottlenecks, and fully autonomous systems that introduce unacceptable risk — toward a middle path: Human-on-the-Loop (HOTL) governance.

In the HOTL model, AI agents operate autonomously within predefined guardrails. Humans shift from being operators to being overseers — setting policies, reviewing exceptions, and vetoing high-stakes decisions. The architecture is built on four pillars:

Step and cost thresholds — Hard limits on the number of actions an agent can execute per session, or the total tokens consumed, prevent infinite loops and runaway infrastructure costs.

The Veto Protocol — For high-risk decisions (budget reallocations, production changes above a defined blast radius), the agent surfaces a structured "Decision Summary" for asynchronous human review before proceeding.

Identity and access control — Agents are granted short-lived, task-scoped credentials. They never hold standing access to production environments; every session is authenticated, logged, and time-bounded.

Immutable audit trails — Every agent action generates a cryptographically signed record, ensuring full traceability for compliance and post-incident review.
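Three of the four pillars above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the `GuardedAgent` class and its limits are hypothetical, a SHA-256 hash chain stands in for real cryptographic signing, and short-lived credentials are out of scope here.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class GuardedAgent:
    max_steps: int = 20
    max_cost_usd: float = 50.0
    veto_blast_radius: int = 5  # affected services at which human review is required
    steps: int = 0
    cost_usd: float = 0.0
    audit_log: list = field(default_factory=list)

    def _record(self, entry: dict) -> None:
        # Chain each record to the previous hash so later tampering is detectable.
        prev = self.audit_log[-1]["hash"] if self.audit_log else ""
        payload = json.dumps(entry, sort_keys=True) + prev
        digest = hashlib.sha256(payload.encode()).hexdigest()
        self.audit_log.append({**entry, "hash": digest})

    def act(self, action: str, cost_usd: float, blast_radius: int) -> str:
        if self.steps + 1 > self.max_steps or self.cost_usd + cost_usd > self.max_cost_usd:
            outcome = "halted: threshold reached"
        elif blast_radius >= self.veto_blast_radius:
            outcome = "pending: decision summary sent for human review"
        else:
            self.steps += 1
            self.cost_usd += cost_usd
            outcome = "executed"
        self._record({"action": action, "blast_radius": blast_radius, "outcome": outcome})
        return outcome


agent = GuardedAgent(max_steps=2)
print(agent.act("scale web tier", 1.0, 1))
print(agent.act("migrate primary database", 1.0, 9))
```

Every call is logged whether or not it executes, so the audit trail also captures what the agent was prevented from doing.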

This governance model is not a limitation on AI capability — it is what makes AI capability trustworthy enough to deploy at scale in regulated, high-stakes environments.

Industry-specific transformations

Manufacturing — the intelligent shop floor

Manufacturing organizations face a persistent challenge: deeply siloed data environments where Manufacturing Execution Systems (MES), ERP platforms, IoT sensor networks, and POS systems rarely communicate in real time. In 2026, cloud-native, AI-powered integration layers are dissolving these silos — enabling predictive maintenance, real-time production analytics, and supply chain transparency from raw material to finished product.

For one manufacturing client, a custom Green FinOps strategy eliminated over-provisioned infrastructure while a blockchain-based supply chain integration created end-to-end product traceability. The combined impact: measurable cost savings, improved regulatory compliance, and a more resilient operational model.

Healthcare — securing the patient data journey

In healthcare, the stakes of a misconfigured infrastructure are clinical as well as financial. DevOps practices in this sector are purpose-built around securing electronic health records, ensuring FDA and HIPAA compliance, and protecting medical device software against zero-day vulnerabilities. AI-driven monitoring continuously scans for "blind spots" that could lead to clinical data loss — not just at deployment time, but across the full runtime lifecycle.

SaaS and fintech — scaling without headcount sprawl

SaaS companies and fintech startups are increasingly turning to DevOps-as-a-Service to manage global availability and rapid iteration cycles without proportional growth in engineering headcount. By embedding automated security tasks, infrastructure-as-code provisioning, and AI-driven observability into every deployment, these teams can scale their products while maintaining the operational quality standards that enterprise customers demand.

Your 2026 AI DevOps roadmap

Organizations that are successfully navigating the AI transition in 2026 share a common pattern. They did not bolt AI onto existing processes — they built the foundations first, then amplified them. The roadmap has four distinct stages:

Data readiness audit

Ensure that observability data — logs, metrics, traces, events — is clean, normalized, and accessible across organizational silos. AI models are only as good as the telemetry they consume. Fragmented, noisy data produces fragmented, unreliable AI recommendations.
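A first-pass readiness audit can be as simple as measuring how many telemetry records carry the fields downstream models rely on. The required-field schema and the sample records below are assumptions for illustration; a real audit would also look at timestamp formats, cardinality, and cross-silo access.

```python
# Assumed minimal schema for a normalized log record.
REQUIRED_FIELDS = {"timestamp", "service", "severity", "message"}


def readiness_report(records: list[dict]) -> dict:
    """Summarize how many records are complete against the assumed schema."""
    incomplete = [r for r in records if not REQUIRED_FIELDS.issubset(r)]
    total = len(records)
    complete_pct = 100.0 * (total - len(incomplete)) / total if total else 0.0
    missing = sorted({f for r in incomplete for f in REQUIRED_FIELDS - r.keys()})
    return {
        "total": total,
        "incomplete": len(incomplete),
        "complete_pct": complete_pct,
        "missing_fields": missing,
    }


records = [
    {"timestamp": "2026-01-10T09:00:00Z", "service": "checkout", "severity": "error", "message": "timeout"},
    {"timestamp": "2026-01-10T09:00:02Z", "service": "search", "severity": "info", "message": "ok"},
    {"timestamp": "2026-01-10T09:00:05Z", "message": "disk pressure"},
    {"timestamp": "2026-01-10T09:00:07Z", "service": "checkout", "severity": "warn", "message": "retry"},
]
print(readiness_report(records))
```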

High-ROI use case selection

Start with workflows where AI delivers measurable, auditable value — automated testing, incident triage, IaC generation, cost anomaly detection. Build confidence and governance muscle before expanding to higher-risk autonomous operations.

Governance architecture

Establish the guardrails — HOTL oversight protocols, agent identity controls, immutable audit trails, cost thresholds — before deploying autonomous agents into production environments. Governance is not friction; it is what makes speed sustainable.

AI fluency across the engineering organization

Develop the skills required to oversee, interact with, and continuously improve intelligent agents. The competitive advantage in 2027 will belong to teams that can govern AI effectively — not just deploy it.

The 2026 AI-native DevOps toolchain

The toolchain of 2026 is defined by intelligence at every stage of the delivery pipeline. Unlike earlier generations of tooling that added AI as an afterthought, these platforms are AI-native — built from the ground up to learn, adapt, and act autonomously.

The AI DevOps Tooling Landscape (2026)

| Tool | Domain | Key AI Capability |
| --- | --- | --- |
| Snyk | Security | Real-time AI scanning for dependencies, containers, and IaC |
| Spacelift | Infrastructure | Multi-tool IaC management with AI policy enforcement |
| Harness | CI/CD | Intelligent software delivery with autonomous deployment verification |
| Datadog | Monitoring | AI-augmented full-stack visibility, anomaly detection, log correlation |
| PagerDuty | Incident Management | ML-based event correlation and intelligent noise reduction |
| StackGen | Platform Eng. | AI-powered intent-to-infrastructure generation |
| K8sGPT | Kubernetes | Natural language explanation and diagnosis of cluster errors |
| Sysdig Sage | DevSecOps | AI analyst for runtime security threat detection and CNAPP |
| Cast AI | FinOps | Autonomous Kubernetes cost optimization and rightsizing |

Conclusion — from manual doers to intelligent orchestrators

The convergence of AI and DevOps in 2026 has redefined what is possible in software delivery. The organizations that thrive are not those that deploy the most AI tools — they are those that build the most resilient foundations and then amplify those foundations intelligently. Cloud infrastructure is no longer a hosting environment. It is an intelligent fabric that predicts, learns, and self-heals.

The transition is as cultural as it is technical. Engineering teams are moving from being manual operators to being intelligent orchestrators — governing not through a queue of tickets, but through the strategic definition of intent and the rigorous enforcement of outcomes. For those willing to make this shift, the competitive advantage is significant, durable, and compounding.

Gart Solutions has built its entire practice around one principle: tech should do more than work — it should do good, and it should scale purposefully.

Build your intelligent operational fabric with us

A boutique DevOps and cloud infrastructure partner for engineering teams that want to scale reliably, securely, and sustainably — without the overhead of a hyperscaler.

DevOps as a Service

Full-lifecycle CI/CD design, automation, and platform engineering for teams that need reliable, battle-tested delivery pipelines at startup speed.

Cloud migration & adoption

Strategic migration from on-premise or legacy cloud environments to modern, cost-optimized, and green cloud architectures on AWS, GCP, or Azure.

DevSecOps automation

Compliance-by-design infrastructure for regulated industries — embedding HIPAA, SOC 2, and PCI-DSS controls directly into your delivery pipeline.

AIOps & observability

End-to-end observability strategy — from eBPF telemetry and distributed tracing to AI-powered alerting, anomaly detection, and autonomous runbook execution.

FinOps & cloud cost optimization

Cloud cost audits, spot instance migration, idle resource termination, and Kubernetes rightsizing — achieving savings of up to 80% on cloud budgets.

Managed infrastructure

24/7 proactive management of your cloud infrastructure, with SLA-backed uptime guarantees, automated scaling, and continuous compliance monitoring.