The DevOps vs DevSecOps debate is no longer theoretical. In 2026, with AI-generated code shipping at machine speed and regulators tightening compliance obligations across every sector, the question for engineering leaders is not whether to add security to their delivery pipelines—it is how fast they can do it without breaking the velocity that makes modern software teams competitive.

This guide cuts through the noise. We explain the core differences betweenDevSecOps and DevOps, show you exactly what changes in practice, walk through a proven implementation roadmap, and help you decide which model fits your organisation today. Whether you run a five-person startup pipeline or a regulated enterprise environment, you will leave with a clear, actionable picture.

85%

of organisations run some form of DevOps in 2025 (DORA State of DevOps Report)

6×

faster breach detection in DevSecOps-mature teams vs traditional pipelines

70%

of cloud breaches stem from misconfiguration—a gap DevSecOps directly addresses

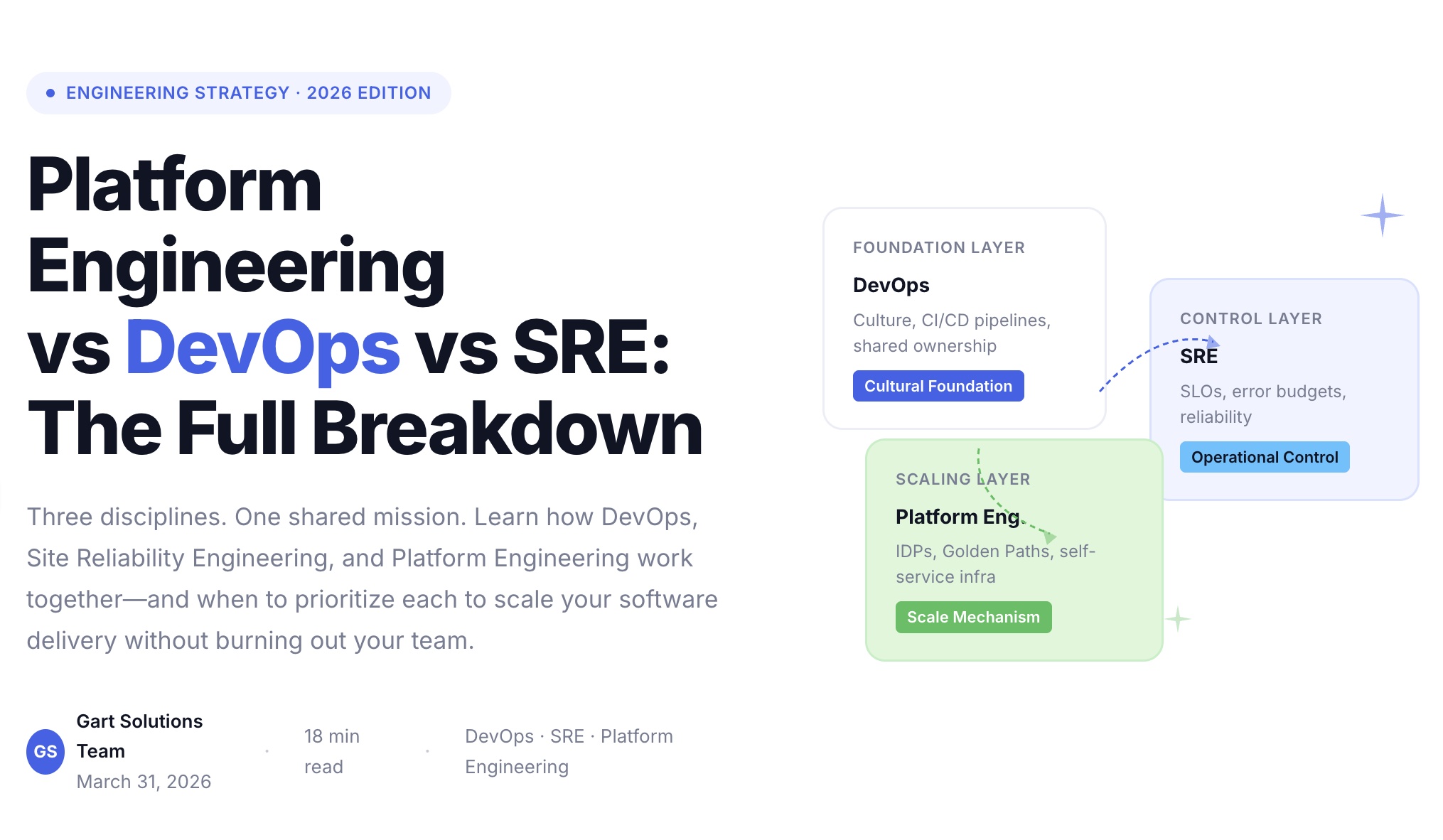

What Is DevOps? The Foundation You Need to Understand First

DevOps is a cultural and technical movement that broke down the wall between software development teams (who want to ship features fast) and operations teams (who want stable, reliable systems). Before DevOps, software was thrown "over the fence" from dev to ops, causing slow releases, finger-pointing, and fragile deployments.

The solution DevOps pioneered was continuous delivery: automated build, test, and deployment pipelines backed by a culture of shared ownership. By the mid-2010s, companies like Netflix, Amazon, and Google were deploying thousands of times per day. The four DORA metrics — deployment frequency, lead time, change failure rate, and mean time to restore — became the industry scorecard.

DevOps solved a genuine problem brilliantly. But it created a quieter problem: security was not in the room. Security teams remained external reviewers, approving or rejecting releases after developers had already spent weeks on a feature. The faster DevOps moved, the larger the gap grew.

SECTION TAKEAWAY

DevOps merges development and operations through culture + automation.

Goal: faster, more reliable software delivery.

Core mechanism: CI/CD pipelines, IaC, and shared DORA metrics.

Critical blind spot: security remains a late-stage gate, not a built-in property.

What Is DevSecOps? DevOps vs DevSecOps at the Core

DevSecOps does not replace DevOps. It extends and matures it by embedding security as a first-class responsibility at every stage of the software development lifecycle (SDLC) — from the moment a developer starts writing code to the moment an application runs in production.

The phrase "shift left" captures the mechanism: move security controls earlier (to the left on a timeline) so that vulnerabilities are caught when they cost almost nothing to fix, rather than in production where the cost is highest. According to NIST, the relative cost of fixing a defect in production is 30× higher than catching it in design.

In a DevSecOps pipeline, security tools are woven into the same CI/CD automation developers already use. A pull request automatically triggers a static analysis scan. Committing code with a hardcoded API key fails the build instantly. Infrastructure templates are validated against policy before a cloud resource is ever provisioned. Security does not slow delivery — it moves with delivery.

Real-world example — financial services: A European challenger bank migrated from a quarterly security review model to DevSecOps. Within six months, their average vulnerability-to-fix time dropped from 47 days to 4 days. Deployment frequency doubled because teams no longer feared late-stage security failures blocking releases.

SECTION TAKEAWAY

DevSecOps embeds security into every CI/CD stage — it does not add a new gate at the end.

"Shift Left" is the core mechanism: earlier = cheaper + faster.

Ownership changes: every engineer owns a piece of security, not just a dedicated team.

DevSecOps vs DevOps: Key Differences at a Glance

The table below captures the structural differences between DevOps and DevSecOps across the dimensions that matter most to engineering leaders making adoption decisions.

DimensionDevOpsDevSecOpsPrimary goalSpeed & reliability of deliverySpeed with verifiable security built inSecurity roleExternal reviewer, late-stage gateShared responsibility, automated at every stageWhen security runsAfter code is written (pre-release)At commit, build, test, deploy, and runtimeRisk focusDowntime, deployment failuresVulnerabilities, compliance violations, exposureAutomation scopeBuild, test, deployBuild, test, deploy + security scans, policy checks, compliance evidenceCultural modelDev + Ops collaborationDev + Sec + Ops collaboration ("golden triangle")Compliance approachPeriodic audits, manual evidenceContinuous compliance, automated evidence generationCost of vulnerabilitiesHigh (found late)Low (found early—at dev or build stage)Toolchain additionsCI/CD, IaC, monitoringAll DevOps tools + SAST, DAST, SCA, secrets mgmt, IaC scanning, RASPRegulatory fitAdequate for lower-risk environmentsRequired for finance, healthcare, government (PCI-DSS, HIPAA, SOC 2, GDPR)DevSecOps vs DevOps: Key Differences at a Glance

Infrastructure and Policy as Code: Governance Without Friction

As infrastructure moved to the cloud, manual configuration became a liability.

DevSecOps extends automation to governance itself:

Infrastructure as Code (IaC) ensures consistency and auditability

Policy as Code (PaC) enforces rules automatically using engines like Open Policy Agent (OPA)

Examples:

Preventing unencrypted storage before deployment

Blocking insecure Kubernetes manifests at admission time

Generating audit evidence automatically for SOC 2, HIPAA, or GDPR

This creates guardrails, not gates — allowing teams to move fast safely.

Culture: From Security Gatekeepers to Shared Ownership

Tools alone do not create DevSecOps. DevSecOps succeeds or fails less on tooling than on culture. In traditional organizations, security teams often operated as external reviewers, stepping in late to approve or reject releases. This positioning made security a perceived obstacle to delivery and reinforced adversarial dynamics between teams focused on speed and those focused on risk reduction.

DevSecOps replaces this model with shared ownership. Security is no longer something “handed off” to specialists but a responsibility distributed across development, operations, and security professionals. Developers are empowered to make secure decisions as they write code, operations teams enforce resilient environments, and security teams act as enablers who design guardrails rather than gates.

The cultural shift is from security as enforcement to security as collaboration:

Developers own security outcomes

Security teams enable, not block

Operations enforce reliability and containment

In practice, this shift requires meeting engineers where they work. Security feedback must appear in the same tools developers already use — IDEs, pull requests, and issue trackers — rather than in separate reports or audits. As trust grows, security specialists increasingly collaborate directly with product teams, helping shape design decisions early instead of policing them later.

Successful organizations scale this through:

Security champions inside engineering teams

Pairing and embedding security engineers

Threat modeling workshops and gamification

Integrating security into existing workflows

Maturity is measured not by zero vulnerabilities, but by how fast teams learn and respond.

Measuring DevSecOps: Speed and Risk Signals

Traditional DevOps metrics, like deployment frequency, lead time, and change failure rate, remain important indicators of agility. But they don’t capture the full picture in a security-first environment.

DevSecOps expands the lens to include risk signals that reflect how effectively teams prevent, detect, and remediate vulnerabilities. Key measures include how quickly newly discovered flaws are addressed, how long critical issues linger in the system, and how many high-severity vulnerabilities reach production. By combining velocity with these security indicators, organizations can evaluate whether their fast-moving pipelines also maintain a strong risk posture.

DevSecOps extends classic DORA metrics with security indicators:

Vulnerability discovery rate

Mean time to remediate (MTTR)

Mean vulnerability age

Critical issues reaching production

Data from 2025 shows that mature DevSecOps organizations resolve vulnerabilities over ten times faster than less mature peers, while simultaneously increasing deployment frequency by up to 150 percent. This demonstrates a crucial point: when automated correctly, speed and security reinforce each other rather than compete, turning DevSecOps into a true accelerator for both innovation and resilience.

DevSecOps Tools: The Layered Security Toolchain

A mature DevSecOps toolchain is not a single product — it is a system of complementary controls, each catching a different class of risk. The goal is defence in depth: no single missed scan exposes the entire system. Below are the key categories and leading tools teams use in 2026.

SAST

Static Analysis

Scans source code for insecure patterns and coding vulnerabilities before execution.

SonarQube, Semgrep, Checkmarx

DAST

Dynamic Testing

Tests running applications for real-world exploitability, including auth flaws and injection paths.

OWASP ZAP, Burp Suite

SCA

Dependency Scanning

Evaluates open-source libraries against CVE databases at build time to manage third-party risk.

Snyk, Dependabot, Black Duck

SECRETS

Secrets Management

Prevents credentials, tokens, and API keys from leaking into version control systems.

HashiCorp Vault, AWS Secrets Manager

IAC SCANNING

Infrastructure Policy

Catches cloud misconfigurations in Terraform or Helm templates before resources are provisioned.

Checkov, Terrascan, OPA/Rego

RASP

Runtime Protection

Actively monitors and blocks exploit attempts in production as a final line of defence.

Contrast Security, Sqreen

CONTAINER

Image & Registry Scanning

Validates container images for vulnerabilities before they are deployed to Kubernetes clusters.

Trivy, Anchore, Aqua Security

COMPLIANCE

Policy as Code

Enforces regulatory controls automatically and generates continuous audit evidence.

Open Policy Agent, AWS Config Rules

Gart perspective:

The teams that implement DevSecOps most successfully arediff-aware — they scan only what changed, not the entire codebase on every commit. This keeps feedback fast and prevents security tooling from becoming a bottleneck that developers learn to route around.

When to Choose DevOps vs DevSecOps: A Practical Decision Guide

Not every team needs to leap straight to a full DevSecOps implementation today. The right choice depends on your risk profile, regulatory environment, and current pipeline maturity. Use this decision framework to orient your roadmap.

Start with DevOps if…

You do not yet have a functioning CI/CD pipeline

Your team is fewer than 10 engineers and moving fast on non-regulated product

You handle no sensitive PII, financial data, or health records

Compliance obligations are minimal or absent

Your primary challenge is deployment reliability, not security posture

Prioritise DevSecOps if…

You operate in finance, healthcare, government, or any regulated sector

You must comply with PCI-DSS, HIPAA, SOC 2, GDPR, or ISO 27001

You handle customer data, credentials, or payment information

You have suffered a breach, security incident, or failed audit

Your CI/CD pipeline is mature and ready for the next layer

The honest answer: In 2026, any team shipping to production in a B2B or regulated context should treat DevSecOps as the standard, not an upgrade. The question is sequencing: achieve DevOps maturity first (stable pipelines, DORA metrics tracked), then layer in security controls systematically using the roadmap below.

How to Implement DevSecOps: A 6-Step Roadmap

Based on work with enterprise clients across financial services, healthcare IT, and SaaS, the Gart team has found that DevSecOps adoption succeeds when it follows a sequenced, iterative approach, rather than trying to bolt on every tool at once.

Assess your current security posture

Run a baseline security audit of your CI/CD pipeline, dependencies, IaC configurations, and access controls. Identify your top five highest-risk exposures. This creates a prioritised backlog, not a wish list.

Integrate SAST and secrets scanning into the IDE and PR workflow

Add static analysis plugins to developer IDEs and enforce pre-commit hooks that block secrets from entering version control. This is the highest-ROI first step - developers fix issues without ever leaving their workflow.

Add SCA to the build pipeline

Configure dependency scanning on every build. Set policies that fail the build on critical CVEs in direct dependencies and warn on transitive ones. Connect to your issue tracker so vulnerabilities become tickets, not reports no one reads.

Implement IaC scanning and Policy as Code

Before any cloud resource is provisioned, validate Terraform/CloudFormation/Helm templates against your security baseline using Checkov or OPA. Codify your hardening standards so they enforce themselves rather than relying on manual review.

Deploy DAST and container image scanning in staging

Run dynamic tests against the full application in a staging environment. Scan container images before they reach production registries. Establish a vulnerability SLA: critical = fix within 24h, high = 7 days, medium = next sprint.

Build the culture: security champions and shared metrics

Designate security champions in each engineering squad. Add security metrics— mean time to remediate, open critical CVEs, deployment gate pass rate—to your engineering dashboard alongside DORA. Culture change follows measurement.

5 DevSecOps Implementation Pitfalls We've Seen in Enterprise Teams

Most DevSecOps failures are predictable. After working with engineering organisations across multiple sectors, these are the five mistakes that consistently derail implementations.

⚠

Trying to add every tool at once

Teams that try to implement SAST + DAST + SCA + secrets management + IaC scanning simultaneously overwhelm developers with alerts and create alert fatigue. Start with one or two high-impact controls and mature from there.

⚠

Making security a separate team's problem

DevSecOps requires distributed ownership. If security engineers are the only ones who care about scan results, developers will find workarounds and suppressions proliferate. Accountability must sit with the squad that wrote the code.

⚠

Ignoring pipeline performance

Security scans that add 20+ minutes to a CI build will be disabled by engineers under deadline pressure. Use diff-aware scanning, parallel jobs, and caching to keep the full pipeline under 10 minutes even with security controls active.

⚠

Skipping threat modelling

Tools find known vulnerabilities. Threat modelling finds architectural risks tools cannot see. Even a lightweight STRIDE session at the start of a new feature or service prevents entire classes of security debt from accumulating.

⚠

Treating compliance as the destination

Passing a SOC 2 audit is not the same as being secure. Teams that optimise purely for compliance checkboxes often have significant unmitigated risk in areas the audit framework does not cover. Compliance is a floor, not a ceiling.

Ready to Move from DevOps to DevSecOps?

Gart helps engineering teams design, implement, and operate production-grade DevSecOps pipelines—from the first SAST integration to full Policy as Code and continuous compliance.

DevOps Services

CI/CD design, pipeline optimisation, AWS & Azure DevOps implementation for teams at any stage.

Security & IT Audit

Infrastructure, compliance, and security audits with actionable remediation roadmaps, not just findings.

Cloud & Infrastructure

IaC, Kubernetes hardening, and managed cloud environments designed for security from day one.

Fractional CTO

Strategic security and delivery leadership for scale-ups that need senior guidance without full-time cost.

Talk to a DevSecOps expert →

AI Change Everything — and Exposes Everything

By 2025, 90% of developers used AI daily.The DORA report confirms a hard truth:

AI does not fix broken systems — it amplifies them.

High-maturity teams get faster and safer.Low-maturity teams accumulate debt at machine speed.

The key lesson is clear: AI is a force multiplier. In capable environments, it drives innovation safely. In fragile environments, it magnifies vulnerabilities and exposes weaknesses faster than human teams can respond. The challenge for 2026 and beyond is not whether AI will be used—it’s whether organizations have the culture, tooling, and guardrails in place to ensure that speed doesn’t come at the cost of security. In other words, AI changes everything, but without DevSecOps, it also exposes everything.

Vibe Coding, Agentic AI, and the New Security Gap

As we move into 2026, a new paradigm is reshaping software development: vibe coding. Developers now act as “conductors,” giving natural language prompts to AI systems that generate entire modules or applications. This accelerates prototyping at unprecedented speeds but introduces a hidden cost: security debt baked into AI-generated code.

By 2026:

Up to 42% of code is AI-generated

Nearly 25% of that code contains security flaws

Developers increasingly do not fully trust what they ship

New risks emerge:

hallucinated authentication bypasses,

phantom dependencies,

silent removal of security controls,

AI-driven polymorphic attacks.

Compounding the challenge, adversaries are also leveraging agentic AI to launch adaptive attacks, creating a dynamic, real-time contest between offensive and defensive systems. In this environment, DevSecOps is no longer optional — it is the framework that allows organizations to integrate security into AI-assisted development, detect flawed code before it reaches production, and maintain trust even as machines take a more active role in creating software.

Security is no longer human-versus-human.It is machine-versus-machine.

DevSecOps in the Agentic Era

In the era of agentic AI, DevSecOps evolves from a pipeline strategy into a continuous, autonomous capability. Security can no longer be a manual checkpoint or a final review — AI-driven development moves too fast, and attackers are already leveraging machine intelligence to probe vulnerabilities in real time.

The future DevSecOps model includes:

autonomous vulnerability detection,

AI-generated remediation PRs,

automated validation pipelines,

strict human-in-the-loop controls for high-impact logic.

Frameworks like NIST SSDF, OWASP SAMM, SLSA provide structure, but success depends on platform engineering that embeds security invisibly into developer experience.

DevSecOps Is Not Optional Anymore

DevOps made software fast.DevSecOps makes it trustworthy at speed.

In an era of:

AI-generated code,

autonomous attackers,

continuous compliance,

and expanding attack surfaces,

security can no longer be a phase, a team, or a checklist.

DevSecOps is the operating system for modern software delivery.

Organizations that adopt it as a cultural, architectural, and automated system will not just ship faster -they will survive the next decade of software evolution.

The Bottom Line on DevSecOps vs DevOps

DevOps transformed software delivery by eliminating the friction between development and operations. DevSecOps extends that transformation by eliminating the friction between delivery speed and security. In 2026, the two are not competing philosophies — they are successive chapters of the same story.

The practical question for engineering leaders is not whether to adopt DevSecOps but how to sequence it given your current pipeline maturity, team culture, and risk profile. The organisations that move fastest are those that treat security as a design constraint from the start — not a compliance checkbox at the end.

If you are assessing where your team sits today or planning a transition, our IT Audit service gives you a clear, honest baseline. From there, our DevOps and DevSecOps team can help you build the pipeline your security posture requires.

Fedir Kompaniiets

Co-founder & CEO, Gart Solutions · Cloud Architect & DevOps Consultant

Fedir is a technology enthusiast with over a decade of diverse industry experience. He co-founded Gart Solutions to address complex tech challenges related to Digital Transformation, helping businesses focus on what matters most — scaling. Fedir is committed to driving sustainable IT transformation, helping SMBs innovate, plan future growth, and navigate the "tech madness" through expert DevOps and Cloud managed services. Connect on LinkedIn.

If you are scaling a startup beyond 30 engineers, you have already felt it: pipelines slow down, senior developers become de-facto infrastructure gatekeepers, and every deployment feels like a ceremony rather than a routine. Platform engineering is the systematic answer to this problem — and in 2026, it has become the defining capability that separates fast-moving product teams from organizations drowning in operational debt.

This guide is written for engineering leaders, CTOs, and founders who need a clear, actionable picture of the best platform engineering solutions for startups right now — covering tooling, architecture, service partners, and real-world ROI.

80%

of eng orgs have dedicated platform teams

40–50%

reduction in developer cognitive load

50×

more deployments per day vs. manual DevOps

<1 hr

to first commit for new engineers

Why platform engineering is now the default operating model

For most of the past decade, DevOps was the answer to slow delivery. "You build it, you run it" worked beautifully at 10–20 engineers. But cloud-native complexity — microservices, multi-cloud, Kubernetes, regulatory compliance — eventually exceeded what informal communication and tribal knowledge could sustain.

Platform engineering responds by treating infrastructure as a product, with developers as its customers. The goal is a "paved road": a set of standardized, pre-approved workflows where the right way to ship software is also the easiest way. The result is not just faster delivery — it is qualitatively different work. Engineers stop managing infrastructure and start building features again.

The Breaking Point

The breaking point typically arrives between 30 and 50 engineers. At that scale, informal handoffs collapse, manual deployments accumulate, and your best engineers spend half their time on tickets that a platform would eliminate entirely.

The cost of waiting is far higher than the cost of building.

The maturity gap in numbers

Metric

Low-Maturity (Manual DevOps)

High-Maturity (Platform Eng)

Deployment Frequency

1–5 per day

50+ per day

Lead Time for Changes

1–6 weeks

< 1 hour

Mean Time to Recovery

30+ minutes

< 10 minutes

Change Failure Rate

15–30%

< 5%

Engineer Onboarding

1–2 weeks

< 1 hour to commit

Developer eNPS

Below 20

Above 60

The three layers every startup IDP must have

A modern Internal Developer Platform (IDP) is not a single tool — it is a layered architecture that separates developer experience from infrastructure orchestration from governance. Understanding these layers is the prerequisite for choosing the right tooling stack.

Layer 1 — The developer-facing portal

The portal is the "front door" for all engineering activity: a centralized catalog of services, documentation, ownership metadata, and self-service actions. Open-source Backstage by Spotify remains influential, but commercial alternatives like Port, Cortex, and OpsLevel are frequently the better choice for startups that cannot staff a dedicated Backstage maintainer. These tools provide service scorecards, automated actions, and flexible data models with far less overhead.

Layer 2 — The orchestration backbone

Beneath the portal sits Kubernetes — the undisputed baseline for cluster orchestration in 2026. GitOps has matured into the standard for declarative infrastructure: Argo CD reconciles Git's "desired state" with what is actually running in production, enabling self-healing deployments without manual intervention. For Infrastructure as Code, OpenTofu (the community-driven Terraform fork) and Pulumi (which lets teams write IaC in TypeScript, Python, or Go) dominate the startup space due to their modularity and testability.

Layer 3 — Security and governance

Security in 2026 is an integrated feature, not a downstream audit. Infisical leads the secrets management category with automated secret lifecycle management across every environment. Policy engines like OPA Gatekeeper and Kyverno enforce security and cost rules at the Kubernetes API level — so the fastest path to production is always the compliant path.

Best platform engineering tools for startups in 2026

With the architectural layers clear, the question becomes which specific tools deliver the best value for resource-constrained startup teams. Below is a curated assessment of the most impactful options available this year.

Atmosly

All-in-One IDP

Ready-to-use Kubernetes automation, self-service workflows, and AI-based insights for Series A SaaS teams.

Humanitec

Platform Orchestrator

Sits at the core of the IDP to dynamically generate environment-specific configurations.

Qovery

Ephemeral Environments

Provides on-demand preview environments per pull request to improve PR review velocity.

Infisical

Secrets Management

Automated secret lifecycle management. Essential for Fintech and Healthtech compliance.

Argo CD

Continuous Delivery

GitOps-native, self-healing Kubernetes deployments for declarative infrastructure models.

Port

Developer Portal

Flexible data models and service scorecards. A customizable "front door" for engineering teams.

Pulumi

Infrastructure as Code

Multi-language IaC (TypeScript, Go, Python) for complex conditional logic.

OpenTelemetry

Observability

Vendor-neutral standard for traces, logs, and metrics to prevent vendor lock-in.

The real ROI: what platform engineering actually returns

Platform engineering is a capital investment, and every startup's leadership team needs to understand the financial case before approving the budget. The returns manifest across three dimensions.

90%

Fewer recall costs

(Tesla OTA model)

30%

Lower engineer turnover

(Atlassian, GitLab)

$18k

Monthly cloud savings

Typical post-FinOps

15 min

Env. provisioning

(Down from 3 days)

Velocity gains

Stripe's internal PaaS reduced environment provisioning from 3 days to 15 minutes by standardizing Kubernetes configurations and embedding security policies directly into the CI/CD pipeline. This is not an outlier — it reflects the structural impact of eliminating manual handoffs in the deployment cycle.

Reliability improvements

High-maturity platforms reduce Mean Time to Recovery to under 10 minutes, compared to 30+ minutes in manual DevOps environments. AI-powered observability tools now achieve 30–40% faster MTTR through automated diagnostics and incident correlation.

Cloud cost control (FinOps)

Unmanaged cloud sprawl is one of the most common financial surprises for scaling startups — AWS or Azure bills that are 3–5× higher than necessary are not unusual. A platform-driven FinOps strategy integrates cost visibility, automated right-sizing, and governance rules directly into the infrastructure lifecycle. Startups that modernize their platform with FinOps in scope consistently identify $15,000–$18,000 in monthly savings while simultaneously improving uptime to 99.99%.

When to build, when to buy, when to partner

One of the most consequential decisions a startup makes is choosing between building an IDP in-house, adopting a commercial solution, or engaging a specialist consulting partner. There is no universal answer — but there are clear heuristics.

Build in-house if you are post-Series B with 3+ dedicated platform engineers and highly specific compliance or architecture constraints that commercial products cannot meet.

Commercial IDP product (Atmosly, Qovery, Humanitec) if you are Series A–B, need rapid time-to-value, and cannot afford to dedicate senior engineers to internal tooling.

Partner with a specialist consultancy if you need architectural guidance, do not yet have internal platform expertise, or are migrating a complex legacy environment.

Hybrid approach — the most common pattern for startups: adopt a commercial IDP core, extend it with open-source components (Argo CD, OpenTofu, Infisical), and engage a partner for initial design and onboarding.

AI integration: where platform engineering is heading in 2026–2027

Seventy-six percent of DevOps teams have now integrated AI into their pipelines in some form. The impact is moving well beyond code generation into operational intelligence.

AI-powered observability surfaces anomalies before they become incidents, correlates logs and traces automatically, and suggests remediation steps — cutting MTTR by 30–40% in production environments. Compliance automation (HIPAA and GDPR scanning embedded in the pipeline) is eliminating manual audit cycles entirely for startups in regulated industries. Engineering analytics platforms like Milestone and LinearB are providing leadership with proof of whether AI coding tools are actually improving productivity — a critical accountability layer as AI tooling spend scales.

Looking ahead, the next frontier is agentic AI: autonomous agents that can navigate deployment pipelines, integrate with ERP systems, and maintain production reliability without human escalation. Startups building the infrastructure to host these workloads today are establishing a structural competitive advantage for 2027 and beyond.

🚀

Gart Solutions · Platform Services

Ready to build your internal developer platform?

Gart Solutions helps growth-stage startups design, build, and operate high-maturity IDPs. We help Series A and B teams scale engineering velocity without scaling headcount in lockstep.

IDP Design & Architecture

Kubernetes & GitOps

FinOps & Cloud Cost Control

Secrets & Security Layer

Observability & MTTR Reduction

Developer Portal Setup

Book a free platform review →

Conclusion: treat the platform as a product

The companies winning in 2026 are not the ones with the most engineers — they are the ones where each engineer operates at maximum leverage. A well-designed internal developer platform is the multiplier that makes this possible: it removes cognitive load, enforces security by default, controls cloud spend, and makes onboarding a matter of hours instead of weeks.

The best platform engineering solutions for startups are not defined by any single tool. They are defined by the intentional combination of the right portal, the right orchestration backbone, and the right governance layer — implemented in a way that the team actually adopts and trusts. Whether you build that platform in-house, adopt a commercial solution, or partner with a specialist team, the investment will consistently outperform the alternative of doing nothing.

The organizations that neglect this investment do not just ship slower — they accumulate the kind of organizational debt that becomes a strategic liability at the Series C table.

FinOps culture empowers engineers and architects to think as business owners about cost and value at all stages of the application life cycle.

In this article, as a co-founder and cloud architect of Gart, I want to share my hands-on experience with FinOps automation and its role in creating an outsized impact on business outcomes (here is my LinkedIn page)

This material will be useful for anyone who works in cloud engineering, finance, procurement, and is interested in product ownership and leadership of the company.

Why Does Cost Management Matter?

In practice, most organizations have an unbalanced cost/resource structure that was created during the planning, deployment, and subsequent launch stages of a project. An unbalanced structure leads to additional margin loss and, in some cases, quality loss.

But with FinOps practice, each operational group can access the data they need to influence their costs in near real-time and make decisions based on it that will lead to efficient cloud costs balanced with service speed or performance.

Thus, FinOps as a service has a direct impact on the margins of an organization or project, allowing cross-functional teams (project owners, engineers, and management) to maximize the use of resources based on a budget but in real-time.

Who Is Involved in FinOps?

From finance to operations, developers, architects, and executives, everyone in an organization has a role to play.

Whether it's a small business with a few employees deploying cloud resources or a large enterprise with thousands of employees, FinOps practices can be implemented throughout the organization at different levels.

The FinOps team generates recommendations, such as reconfiguring resources or committing to cloud service providers, that need to be considered by the organization.

Top FinOps Practices to Manage Cloud Costs

FinOps is an evolving practice that empowers organizations to manage their cloud expenses efficiently and fine-tune their financial operations. Below, we present some of the prime FinOps practices for proficiently controlling cloud costs:

1. Monitoring and Tracking Cloud Expenditure

The initial step in effectively overseeing cloud expenses is the vigilant monitoring and tracking of cloud spending. This entails gaining a deep understanding of the utilization patterns of various services, pinpointing the primary drivers of costs, and closely observing user trends. These actions are instrumental in uncovering areas ripe for cost optimization, identifying redundant resources, and recognizing underutilized services.

2. Implementing Cost Optimization Strategies

Once the key cost drivers have been pinpointed, the implementation of cost-efficiency strategies can commence. This involves harnessing discounts, making judicious use of spot instances, downsizing underused services, and eliminating superfluous resources. Here are some recommendations to initiate this process:

Scrutinize Your Company’s Expenditures

Identify Sources of Squander and Inefficiency

Rationalize Operational Procedures

3. Automating Management of Cloud Costs

Automation stands as the linchpin of cost control in the realm of cloud services. By automating key processes, organizations can expedite the discovery of cost-saving opportunities, automate the provisioning of resources, and streamline billing procedures. Automation plays a pivotal role in helping companies uncover and rectify inefficiencies in cloud cost management. For instance, it can facilitate real-time tracking of cloud resource utilization, enabling the identification and repurposing or termination of redundant or underutilized assets. Moreover, it can flag cost optimization prospects, such as discounts or incentives from cloud providers and potential strategies for economizing, such as resource scaling.

4. Leverage Tools for Cost Control

A multitude of cost control tools is at your disposal to facilitate efficient management of cloud costs. These optimization tools are adept at tracking usage patterns, establishing budgetary thresholds, and flagging opportunities for cost efficiency. Their design caters to empowering businesses with the capability to scrutinize and dissect their financial outlays. These tools enable meticulous expense tracking, identification of areas with potential for optimization, and the execution of cost-cutting measures.

5. Implementing Resource Allocation Strategies

Resource allocation proves pivotal in the effective management of cloud costs. The objective is to allocate resources in the most resourceful manner possible, taking into account usage trends and cost efficiency tactics.

6. Harnessing Cloud Cost Forecasting

The practice of cloud cost forecasting serves as a valuable resource for comprehending future cloud expenses and pinpointing areas ripe for cost reduction. This forward-looking approach aids in strategic planning and fosters more precise budgeting.

7. Investing in Cloud Governance

Establishing comprehensive cloud governance protocols is a foundational element in the realm of cloud cost management. This entails the formulation of rules and policies governing cloud utilization, the delineation of roles and responsibilities, and the diligent monitoring of compliance.

How to Set Up FinOps in Your Business?

Stage 1: Planning FinOps in the Organization 1. Gather Support: identify key stakeholders interested in increasing cloud margins. Familiarize yourself with the opportunities for your organization with better resource and expenditure analysis. 2. Determine the required time for monitoring and supporting FinOps in your organization based on time and data flow cycles. 3. Plan target actions and require a team with the relevant skills for FinOps. 4. Make decisions regarding the collection and storage of cloud consumption data. 5. Think about reporting tools and data transmission for FinOps stakeholders.

Stage 2: Adoption of FinOps FinOps is a cultural change that requires the involvement of various teams and individuals throughout the organization. Communication and feedback cycles aimed at encouraging the practice are crucial. The goal of this stage is to present the FinOps plan created in Stage 1 to stakeholders. The presentation below helps communicate this clearly, easily, and quickly:

Share a high-level activity roadmap of FinOps and the value it brings to different teams and projects.

Understand cross-team challenges and explain/teach how FinOps can help address them.

Establish a collaboration model between FinOps and key partners (IT domains, controllers, program teams).

Create and implement a FinOps dashboard for key stakeholders and cross-functional teams.

Stage 3: Operational Phase

The FinOps lifecycle is built around a 3-stage model and has the same principles in each of them.

Cross-functional teams must collaborate.

Decisions are made based on cloud value for the business.

Everyone takes responsibility for their cloud usage.

FinOps reports should be accessible and timely.

A centralized team manages FinOps.

Leverage the benefits of the cloud model with variable expenses.

To prepare for a successful FinOps practice, certain criteria need to be met:

Prepare a resource map or a list of resources in active projects, as specified in contracts and actively deployed environments.

Track complete and up-to-date consumption data from all cloud providers.

Enable cost analysis and expenditure forecasting for active projects.

Ability to assess discrepancies between contractual (budgeted) and actual consumption levels.

Reporting is the only way to provide information on cloud consumption discrepancies and offer recommendations for resource structuring or resizing. Data quality collected through APIs or proprietary cloud solutions, as mentioned earlier, is a critical prerequisite for the reporting process.

Top 3 FinOps Best Practices of Automation

1. Tag Management

After establishing a tagging standard for your organization, you can use automation to ensure compliance with this standard.

Start by identifying resources with missing or incorrectly applied tags, and then assign responsibility to rectify these tag violations. You can also proceed to stop or lock resources to compel owners to take action and potentially work on deletion or decommissioning policies for these resources.

However, resource deletion is a highly effective form of automation, so many companies may not reach this level of maturity immediately. It is advisable not to jump directly to resource deletion without addressing previous, less impactful levels of automation.

2. Scheduled Resource Start/Stop

Managing resources and automation allows you to schedule resource stoppages when they are not in use (e.g., outside of office hours) and then bring them back online when needed.

The goal of this automation is to minimize impact on teams while saving significant costs during hours when their resources are idle. This automation is often deployed in development and testing environments, where resource unavailability is not noticed outside of working hours.

You should ensure that the implementation allows team members to bypass scheduled actions in case they need to keep a server active during off-hours. Additionally, canceling a scheduled task should not completely remove the resource from automation but merely skip the current execution.

3. Usage Reduction

Automation for usage reduction eliminates waste of notifications to responsible team members for better cost optimization.

Automated resource data retrieval from services like Trusted Advisor (for AWS), third-party cost optimization platforms, or directly from resource metrics provides a straightforward way to send notifications to team members responsible for resources to investigate or, in some environments, allows for automatic resource termination or resizing.

Top Cloud FinOps KPIs

Answering the question of how to measure the success of FinOps program, from our experience, I can outline six main KPIs (but any KPI should be defined by your organization):

Cloud Spend

This metric provides visibility into how much money you spend on cloud services to get a clear picture of your cloud spending and identify areas where else to save money.

Cloud Utilization

This metric measures how efficiently you’re using your cloud resources.

Cloud Availability

The metric measures cloud environment’s reliability and meeting performance expectations. Poor availability can lead to downtime and lost productivity.

Cloud Security

Cloud Security measures the security of your cloud environment and helps you identify any potential threats.

Cloud Adoption

Cloud Adoption measures the rate at which your organization is adopting cloud technologies.

Businesses can optimize their cloud investments and resource utilization by monitoring these five Cloud FinOps Key Performance Indicators (KPIs).

Furthermore, keeping an eye on these KPIs enables businesses to pinpoint cost-saving opportunities and enhance their cloud infrastructure.

Conclusion

In this article, we've covered the fundamentals of FinOps as well as how to set up Cloud FinOps practices in your business. By leveraging these capabilities, organizations can achieve greater cost visibility, financial control, and overall operational efficiency in their cloud environments.

Start your cloud FinOps journey with Gart's FinOps Assessment. You will get a roadmap and a completely executable plan wherever you are on your cloud journey.

So, whether you're implementing a full cloud operating model, or just managing your cloud cost, a collaboration with Cloud FinOps partner like Gart, drives your organization. Schedule a free consultation.