Three disciplines. One shared mission. Learn how DevOps, Site Reliability Engineering, and Platform Engineering work together—and when to prioritize each to scale your software delivery without burning out your team.

The Great Infrastructure Complexity Crisis

Here's a paradox that every engineering leader in 2026 knows all too well: the tools available to developers have never been more powerful, yet the operational complexity required to manage them has never been more overwhelming. Kubernetes, Terraform, multi-cloud networking, service meshes, secrets management — the list of things a developer is expected to master keeps growing.

The result? A phenomenon practitioners now call DevOps fatigue. Engineers are spending more time navigating infrastructure than writing business logic. Context switching is destroying productivity. And the "you build it, you run it" philosophy — while well-intentioned — has created a crushing cognitive burden on development teams.

"When every squad builds its own path to production, the result is a fragmented landscape of incompatible toolchains, inconsistent security postures, and a significant drain on productivity."

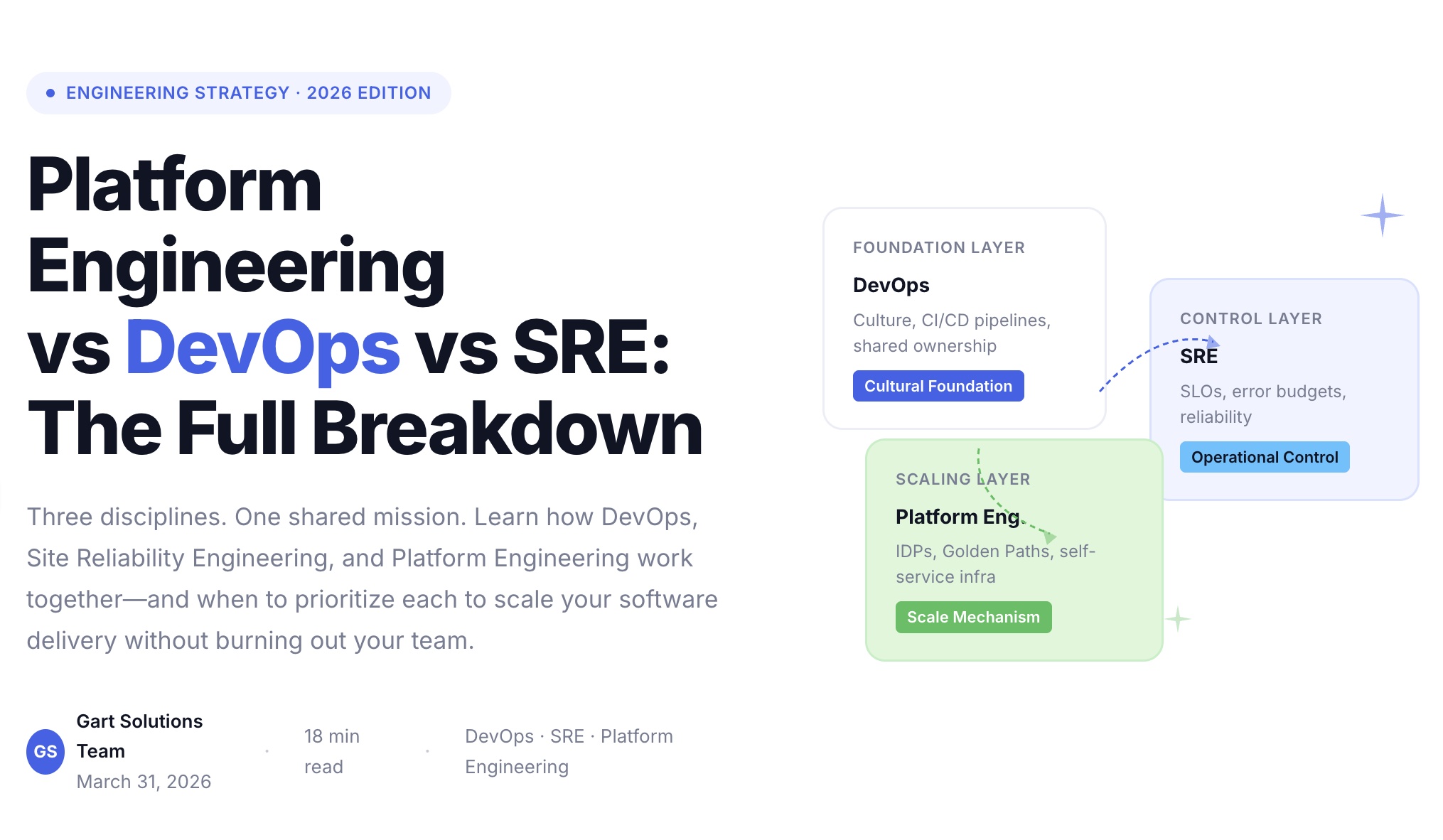

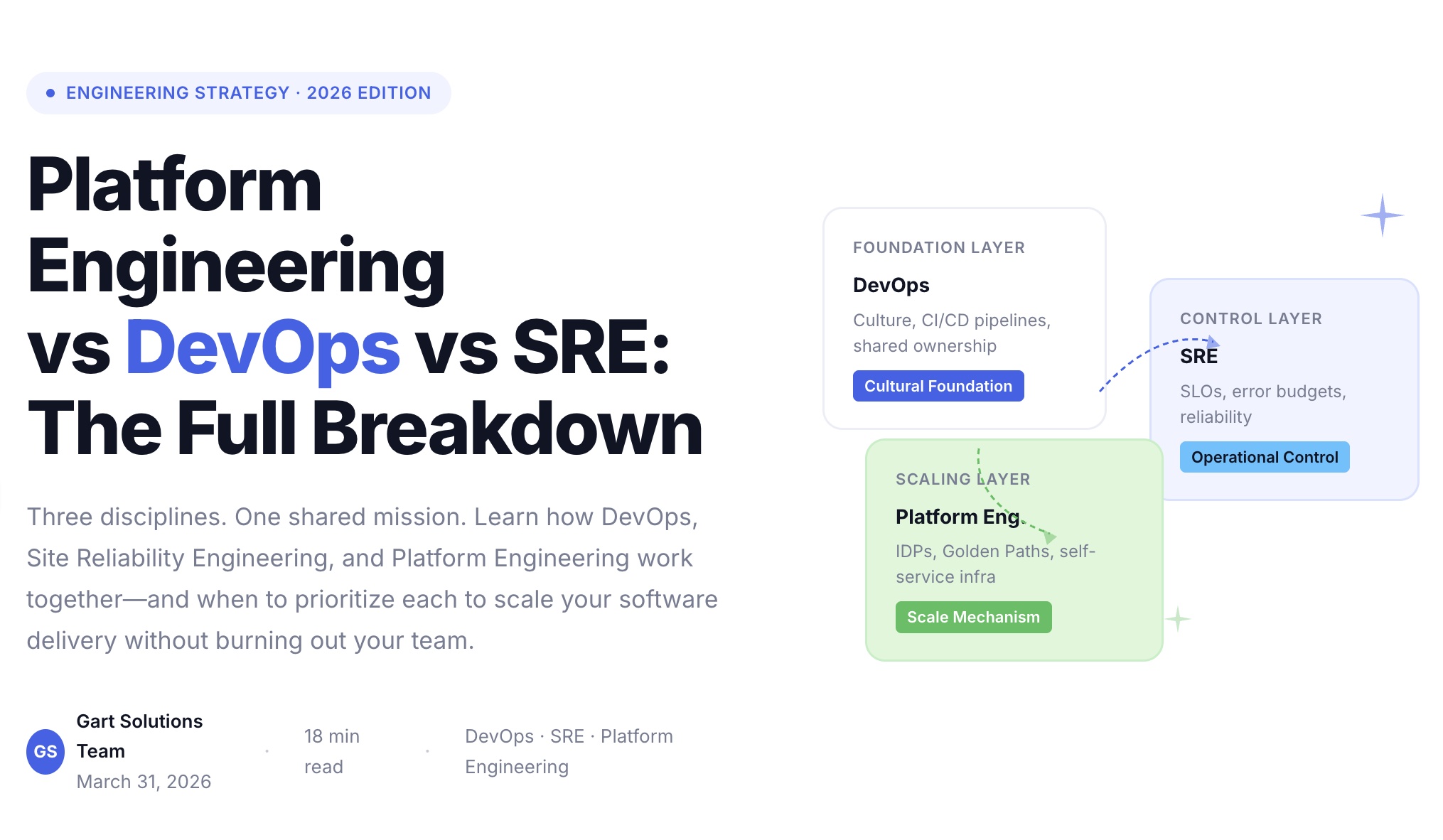

The answer isn't to abandon DevOps culture. It's to understand how three distinct but complementary disciplines — DevOps, Site Reliability Engineering (SRE), and Platform Engineering — layer on top of each other to solve different problems at different scales. And then to know which one your organization needs to prioritize right now.

DevOps

Cultural Foundation

The philosophical backbone. Shared responsibility, CI/CD automation, iterative delivery, and the breakdown of dev/ops silos.

SRE

Operational Control

Software engineering applied to operations. SLOs, error budgets, blameless culture, and proactive reliability at scale.

Platform Eng.

Scaling Mechanism

Infrastructure as a product. Internal Developer Platforms, Golden Paths, and self-service tools that let devs focus on code.

DevOps: The Cultural Foundation That Started It All

DevOps was never meant to be a job title — it was a philosophical shift born in the late 2000s to dissolve the wall between developers and operations teams. Its core promise: faster delivery, fewer handoffs, and shared accountability for what ships to production.

By the mid-2020s, mature DevOps practices are measured by the DORA (DevOps Research and Assessment) metrics — a four-dimensional framework that quantifies delivery performance with brutal clarity:

DORA Metric

What It Measures

Elite Benchmark

Deployment Frequency

How often the team successfully releases to production

Multiple times per day

Lead Time for Changes

Time from code commit to running in production

Less than 1 hour

Change Failure Rate

Percentage of deployments causing a production failure

0–5%

Time to Restore (MTTR)

Time to recover from a production incident

Less than 1 hour

Where DevOps Hits Its Limits

DevOps grants teams the cultural permission to move fast. But it doesn't guarantee they'll all move in the same direction. At scale — across hundreds of microservices and dozens of squads — the decentralized nature of DevOps creates a bottleneck of expertise.

Teams spend weeks building CI/CD pipelines from scratch, often producing nearly identical results with different tooling. Security configurations drift. Onboarding a new developer takes months, not days. This is the "shadow operations" problem: uncoordinated, manual infrastructure work that consumes engineering cycles without generating business value.

DevOps provides the cultural permission to automate. It doesn't inherently provide the standardized systems necessary to scale that automation across hundreds of teams. That's where the next two disciplines come in.

Site Reliability Engineering: The Engineering Approach to Resilience

Popularized by Google, SRE fills the operational gap by applying software engineering discipline to the challenge of keeping systems running reliably at scale. The canonical description: "what happens when you ask a software engineer to design an operations function."

Unlike traditional IT ops — which reacts to fires — SRE is proactive, metrics-driven, and automation-first. Its fundamental mechanism is the Service Level Objective (SLO): a precise, business-aligned target for how reliable a system needs to be.

The Math Behind Reliability

SRE rejects the myth that 100% uptime is the right goal. Instead, it introduces the concept of an error budget — the amount of downtime or errors a service can tolerate before reliability work takes precedence over feature development.

Reliability Math

Service Level Indicator (SLI)

(Good Events / Total Events) × 100%

Error Budget

100% − SLO Target

For a 99.9% SLO

Acceptable Downtime

Error Budget: 0.1% (~8.7 hrs/year)

This math transforms reliability from an abstract aspiration into a resource — one that can be spent on feature velocity or invested in system stability, depending on what the business needs right now.

Core SRE Practices

SRE Practice

Core Activity

Business Impact

Monitoring & Alerting

Track Golden Signals: Latency, Traffic, Errors, Saturation

Early detection before users notice

Incident Response

Blameless postmortems, on-call rotation management

Minimized MTTR, prevented recurrence

Toil Reduction

Automating repetitive manual operational tasks

Engineer time shifted to value creation

Capacity Planning

Forecasting resource needs from traffic trends

Cost-efficient, surprise-free scaling

SREs act as the control layer of the engineering organization — ensuring that the speed of delivery enabled by DevOps doesn't compromise production integrity. By institutionalizing blameless postmortems, they transform failures from shameful incidents into learning opportunities that make the whole system stronger.

Enterprise Reliability

Need a structured SRE foundation?

Gart builds production-ready SRE practices — from SLO definition and error budget management to 24/7 proactive monitoring with AWS CloudWatch and Grafana.

On-call Design

Blameless Postmortems

Cloud Native

Explore SRE Services →

Platform Engineering: Productizing the Developer Journey

Platform Engineering is the discipline that addresses the scaling limits of DevOps. Its mission: build and maintain an Internal Developer Platform (IDP) — a self-service product that abstracts cloud-native complexity behind a clean, opinionated interface.

The paradigm shift is significant. In traditional DevOps, a developer is handed building blocks — a Kubernetes cluster, a CI/CD tool, a cloud account — and told to wire it together. In Platform Engineering, developers follow a Golden Path: a pre-configured, secure, production-ready workflow that handles the plumbing automatically.

A Golden Path is not a restrictive cage — it's a paved road. The easiest route through the platform happens to also be the most secure, most compliant, and most reliable one. Guardrails become the default, not the exception.

Platform Engineering Philosophy

The Architecture of a Mature IDP

A production-grade Internal Developer Platform is organized across five logical planes. This separation allows the platform team to swap underlying technologies — cloud providers, orchestration tools, monitoring stacks — without disrupting the developer experience:

1

Developer Control Plane

Interface Layer

The graphical portal and documentation hub. Provides a single pane of glass into service ownership, deployment status, and API contracts.

Backstage

Humanitec

CLI

2

Integration & Delivery Plane

CI/CD Engine

"Pipeline-as-a-service" allowing teams to activate standardized build and deploy workflows via simple config files — no pipeline authoring required.

GitHub Actions

GitLab CI

ArgoCD

3

Resource & Infra Plane

IaC Management

Manages compute, storage, and networking. Developers request resources through the portal; the platform provisions them automatically across any cloud.

Terraform

Crossplane

Pulumi

4

Monitoring & Logging Plane

Observability

Standardized stacks that work out of the box. Monitoring is embedded into the Golden Path — every service is observable from deploy day one.

Prometheus

Grafana

ELK Stack

5

Security & Compliance Plane

Shift-Left

Integrated security workflows: secret management, IAM policies, and automated scanning for HIPAA, GDPR, and ISO 27001 compliance by design.

HashiCorp Vault

IAM

Compliance Scanning

What Platform Engineering Does to Developer Productivity

Factor

Traditional DevOps

Platform Engineering

Cognitive Load

High — developers must master 10+ infra tools

Low — complexity abstracted behind a single portal

Context Switching

Constant alerts, pipeline failures, infra debugging

Minimal — standardized paths reduce toil dramatically

Onboarding Time

Weeks or months per new developer

Days — templates and documentation do the heavy lifting

Time on Business Logic

~40–50%

High Infra Overhead

~85–90%

Focus on Product Value

Strategic Blueprint

Ready to build your Internal Developer Platform?

Gart's Reliable Management Framework (RMF) is a proven blueprint for building scalable IDPs — with self-service provisioning, embedded observability, and compliance baked in from day one.

Explore Platform Engineering →

Which Discipline Does Your Organization Need Right Now?

In the standard 2026 operating model, these disciplines are layered — DevOps is the foundation, SRE is the control layer, Platform Engineering is the scaling layer. But sequencing matters. Here's the decision framework:

If your bottleneck is...

Slow releases & deployment friction

↓

Prioritize

DevOps Practices

Track Metrics:

Lead Time for Changes, Deployment Frequency

If your bottleneck is...

Outages, poor reliability, alert noise

↓

Prioritize

Site Reliability Engineering

Track Metrics:

SLO adherence, MTTR, Error Rate

If your bottleneck is...

Developer cognitive load & team scale

↓

Prioritize

Platform Engineering

Track Metrics:

Dev satisfaction, Time-to-onboard, IDP adoption

The important nuance: these aren't mutually exclusive. Organizations rarely suffer from just one bottleneck. The strategic question is about sequencing — where does the highest-leverage investment happen first? A team of 20 engineers needs different medicine than an organization of 2,000.

Operational Era

Primary Objective

Key Implementation

Scaling Constraint

Waterfall (Legacy)

Predictability & Documentation

Siloed departments, manual handoffs

Slow time-to-market; high failure rates

Early DevOps (2010s)

Speed & Collaboration

CI/CD pipelines, "you build it, you run it"

High cognitive load on developers

Platform Era (2025+)

Developer Experience & Scale

Internal Developer Platforms, Golden Paths

Requires specialized platform product teams

What These Disciplines Actually Deliver: Real Numbers

Investing in DevOps, SRE, and Platform Engineering isn't an engineering luxury — it's a business imperative with measurable returns. Here are the outcomes Gart has delivered for clients across industries:

81%

Reduction in Azure cloud spend via SRE & DevOps optimization

99.99%

Uptime achieved for ESG AI platform with DR architecture

25%

EC2 + RDS cost reduction while maintaining full uptime

8+ hrs

Developer hours saved per week per engineer via IDPs

✦ Gart Case Study

GreenTech: From Local Solution to Global Platform

A GreenTech leader needed to rapidly onboard clients globally, but traditional DevOps required weeks of manual reconfiguration for every deployment. By implementing a Platform Engineering model via our Reliable Management Framework (RMF), we created a self-service IDP that abstracted regional complexity.

Weeks → Minutes

New client onboarding time

Zero

Manual infra reconfigurations

Read the full Case Study →

✦ Gart Case Study

BrainKey.ai: Healthcare Platform Security at Scale

BrainKey.ai processes sensitive MRI and genetic data, requiring infrastructure that is both highly secure and elastic. We designed a Kubernetes-based architecture with HashiCorp Vault, ensuring HIPAA compliance while maintaining the ability to scale dynamically during peak processing loads.

HIPAA

Compliance achieved by design

Dynamic

Auto-scaling during peak loads

Read the full Case Study →

What Comes Next: AIOps, GreenOps & Cognitive Engineering

The three disciplines described above are already evolving. By 2027, the lines between them will blur further as artificial intelligence, sustainability requirements, and adaptive automation reshape what it means to run reliable software at scale.

AI-Driven Observability

AI adoption in engineering teams has reached nearly 90%, but its impact is only as good as the underlying platform. The next generation of observability is predictive — machine learning algorithms that identify anomaly patterns before they manifest as user-facing failures. NLP-based incident summaries and predictive root cause analysis are already compressing incident resolution from hours to minutes.

GreenOps: Sustainability as a Platform Feature

The green cloud model is no longer optional. Engineering teams are now responsible for the carbon footprint of their infrastructure decisions — from cloud provider selection based on Power Usage Effectiveness (PUE) ratings to application architecture choices that reduce unnecessary compute cycles. GreenOps is emerging as both a moral imperative and a measurable business outcome.

Cognitive Platform Engineering (CPE)

The frontier of the discipline — where static Golden Paths evolve into adaptive, intelligence-driven control systems. Unlike procedural pipelines, CPE platforms continuously learn from their environment, adjusting behaviors and enforcing policies based on operational intent and business impact in real time. The platform doesn't just provide the paved road; it dynamically optimizes the route for each driver.

Four Pillars for Engineering Leaders in 2026

Organizations that successfully integrate all three disciplines create a virtuous cycle: DevOps drives the cultural foundation; SRE enforces rigorous reliability standards; Platform Engineering provides the scalable systems that let developers innovate without operational toil.

01

Platform as Product

Treat your IDP as a core business capability, not an IT afterthought. Focus on a product mindset and measurable Developer Experience (DevEx).

02

Institutionalize SLOs

Reliability is a feature with its own budget. SLOs aligned to customer satisfaction drive rational engineering tradeoffs.

03

Invest in Culture

Psychological safety, blameless postmortems, and continuous upskilling. Tools don't transform organizations — people do.

04

Bridge the Gap

Partner with specialists who have navigated these transitions before. Avoid rebuilding the wheel from scratch to accelerate time-to-value.

Gart Solutions: Your Engineering Transformation Partner

We don't just consult — we embed. Our engineers work alongside your team to build the internal capabilities that sustain high performance.

⚙️

DevOps Engineering

Optimizing your entire CI/CD pipeline. DORA metric baselines, automation frameworks, and the cultural playbook to make it all stick.

GitHub Actions

ArgoCD

Terraform

Learn more →

🛡️

Site Reliability Engineering

Defining SLOs, error budgets, and 24/7 monitoring with real signal-to-noise discipline. Postmortem frameworks included.

AWS CloudWatch

Grafana

SLO/SLI Design

Learn more →

🏗️

Platform Engineering

Building Internal Developer Platforms using our RMF — from self-service portals to multi-cloud orchestration and security.

Kubernetes

Backstage

HashiCorp Vault

Learn more →

Engineering Excellence

Stop firefighting.Start engineering.

Whether you need to accelerate delivery, harden reliability, or scale developer productivity — Gart has the frameworks and the people to get you there faster.

Get a DevOps Audit →

View Case Studies

Let’s be honest: the term “AI infrastructure” gets thrown around way too loosely. Every company claims to offer it, every platform says they do it, and every startup feels they need it. But the truth? Most businesses don’t fully understand what AI infrastructure really involves — let alone who to trust to build it.

With the explosive rise of AI adoption across industries, from healthcare to fintech to logistics — the need for a robust, scalable, and purpose-built AI infrastructure has never been greater. But just buying tools or plugging into a cloud platform doesn’t automatically set you up for AI success. In fact, the wrong kind of provider can cost you time, resources, and your competitive edge.

So, how do you figure out who you actually need? Should you go with a big-name hyperscaler like AWS or Azure? Rely on AI tooling vendors? Or find a real engineering partner that understands not just infrastructure, but your business goals?

This is exactly where Gart Solutions enters the conversation and why we’re going to break this down, piece by piece.

What “AI Infrastructure” Really Means (And Why It’s Misused)

Let’s clear the air: AI infrastructure is not just cloud compute. It’s not just spinning up GPUs or having a Kubernetes cluster. True AI infrastructure is an ecosystem — spanning hardware, software, networking, orchestration, data pipelines, security, and deployment strategies, that enables your models to be trained, tested, and deployed at scale reliably and efficiently.

Many vendors blur this definition. Some refer to AI infrastructure as access to compute resources. Others pitch it as MLOps tooling. But these are fragments, not the full picture. Without the glue —infrastructure engineering — you’re essentially building AI on shaky ground.

Here’s what real AI infrastructure includes:

Provisioning scalable compute environments (on-prem, cloud, hybrid)

CI/CD for AI (from data to model to inference)

Networking and security specific to AI workloads

Automated infrastructure management and monitoring

Model versioning, rollback, and lifecycle support

Regulatory compliance & data governance

As Fedir Kompaniiets, CEO of Gart Solutions, often puts it:

“You can’t build intelligent systems on unintelligent foundations. AI needs an engineered runway to take off.”

That “engineered runway” is where too many projects cut corners. And why most AI deployments fail after the proof-of-concept phase.

The Three Major Categories of AI Infrastructure Providers

Let’s break down the landscape. All AI infrastructure vendors fall into one of these three buckets:

Hyperscalers & Platforms

These are your big cloud providers — AWS, Microsoft Azure, Google Cloud, offering on-demand compute, storage, and managed AI services.

Strengths:

Global scale and availability

Massive catalog of AI/ML services

Flexibility to scale compute up/down

Pay-as-you-go pricing

Limitations:

One-size-fits-all approach

High complexity; steep learning curve

Hidden costs and potential vendor lock-in

No engineering support for tailoring environments

Hyperscalers are powerful, no doubt. But they require skilled teams to design and manage AI-ready infrastructure. The tools are there, but you have to know how to wire them correctly.

AI Tooling Vendors

These vendors — like Hugging Face, DataRobot, Weights & Biases, and Neptune.ai — offer platforms for training, experiment tracking, model deployment, and observability.

Strengths:

Simplified interfaces for ML workflows

Version control, reproducibility, and collaboration

Accelerated model development

Limitations:

Assume infrastructure is already in place

Don’t handle compute provisioning, security, or networking

Tooling doesn’t solve operational or scaling issues

Can add toolchain bloat

AI tooling vendors are great after you’ve built the core infrastructure. But they don’t replace the need for infrastructure automation, engineering, or DevOps support.

AI Infrastructure Engineering & Delivery Partners

This is where real transformation happens. Engineering-led partners design, build, and operate AI infrastructure customized for your business and goals.

Strengths:

Vendor-agnostic and tailored to your environment

Combines DevOps, MLOps, automation, and security

Offers long-term support and scale planning

Aligns with compliance, governance, and data strategies

Gart Solutions is a leader in this category. With proven delivery across healthcare, fintech, and product companies, they offer end-to-end AI infrastructure services — not just tools or compute, but custom-engineered solutions.

When Companies Need Each Category

Here’s a breakdown of when each provider type is right, depending on your business maturity and goals:

Company StageHyperscalerTooling VendorEngineering PartnerStartup✅ For initial experiments✅ If team is skilled❌ Usually overkillScale-up✅ For scalability✅ Adds efficiency✅ To avoid technical debtEnterprise✅ Core platform✅ For governance✅ Crucial for transformationRegulated Industry⚠️ Need strong compliance overlays✅ Helpful for tracking✅ Required for auditability

If you’re running mission-critical AI workloads, handling sensitive data, or deploying in production at scale — you need an engineering-led partner.

Where AI Projects Fail Without Infrastructure Engineering

The AI landscape is full of failed pilots and expensive detours. Why?

Models work in dev, but can’t scale in prod

Data bottlenecks and broken pipelines

Lack of observability and rollback mechanisms

Downtime, security risks, and compliance gaps

Take MedWrite AI, a healthcare NLP platform. They had models ready, but infrastructure issues blocked production launch. Gart Solutions stepped in, designed AI-ready infrastructure with automated scaling and monitoring — and cut time-to-market by over 60%.👉 Read the full case study

Fedir Kompaniiets explains:

“AI tooling gives you a car. Infrastructure engineering builds the road — and the traffic system to keep it running.”

Why Engineering-Led Partners Outperform Tools Alone

The key reason tools fail is that they assume the groundwork has been done. But most companies haven’t:

Set up secure, compliant data flows

Automated their infrastructure

Integrated CI/CD for AI

Designed scalable model-serving environments

Gart Solutions combines IT infrastructure consulting, automation, and DevOps best practices to create a future-proof foundation for AI.

They don’t just deliver a stack — they build a customizable, self-healing, and compliant AI delivery system.

Market Overview: AI Infrastructure Spending and Trends

According to Gartner, global AI infrastructure spending is expected to surpass $422 billion by 2028, growing at a CAGR of 26%. The key investment areas include:

Cloud infrastructure and hybrid deployments

Hardware accelerators (GPUs, TPUs)

MLOps tooling and automation

Engineering services for delivery and monitoring

The big shift? From platform dependence to engineering autonomy.

Companies are realizing that AI platforms are only part of the puzzle — infrastructure strategy is becoming the new battleground.

Deep Dive: Gart Solutions’ Approach to AI Infrastructure Delivery

Gart doesn’t sell tools — they deliver outcomes.

By combining consulting, automation, and AI-ready architectures, they support every stage of the AI lifecycle. Their services include:

IT Infrastructure Consulting

Infrastructure Automation

General IT Infrastructure Services

In their HealthTech AI case study, they delivered HIPAA-compliant, cloud-native AI infrastructure capable of zero-downtime deployments and real-time model performance monitoring.

That’s not just delivery. That’s engineering-led transformation.

Case Studies That Prove the Point

Let’s move beyond theory and look at how this plays out in real businesses.

Take MedWrite AI, a HealthTech platform transforming how clinical notes are analyzed using NLP. When they approached Gart Solutions, their infrastructure was:

Underperforming under load

Hard to manage and monitor

Non-compliant with healthcare standards

Gart stepped in and:

Re-architected their cloud infrastructure

Implemented robust MLOps pipelines

Added auto-scaling and fault tolerance

Ensured HIPAA compliance through secure networking and audit logging

👉 See the full MedWrite AI Case Study

Results:

Time-to-market reduced by 60%

Model performance boosted by 3x

Uptime near 100% during critical deployments

In another case, a fintech company needed to deploy an AI fraud detection engine. The issue? Their tools worked in test but crashed under real-world scale. With Gart Solutions’ infrastructure automation services, they achieved:

Full CI/CD for model updates

Cost-optimized infrastructure scaling

Secure multi-region deployments

The takeaway? Tools are great, but without engineering, they collapse under pressure.

How to Choose the Right AI Infrastructure Partner

Before you sign up with a vendor promising "AI infrastructure," ask yourself:

Do they understand your industry’s compliance needs?

Сan they automate deployments and rollback pipelines?

Will they stay involved beyond the initial setup?

Do they offer custom engineering vs. out-of-the-box tools?

And perhaps most importantly:

❌ Are they trying to sell you tools instead of solving your problems?

With Gart Solutions, you’re getting a team that thinks beyond platforms. They build scalable, secure, and future-proof environments that grow with you.

Why Gart Solutions Stands Out

There’s no shortage of vendors claiming to support AI. But few can deliver custom, scalable, and production-grade infrastructure the way Gart Solutions does.

Here’s why:

Engineering-first approach: Every project starts with strategy, not software

Vendor-neutral: They use what works best for you, not what pays them commissions

Business-oriented outcomes: They align infrastructure with your goals — not just technical specs

Ongoing support: Monitoring, updating, and evolving your infrastructure over time

Proven track record: Across industries like HealthTech, FinTech, and SaaS

Conclusion

AI infrastructure isn’t one-size-fits-all. Whether you're experimenting with models or deploying them into production, you need the right kind of partner to avoid common traps like tool sprawl, vendor lock-in, and under-engineered environments.

To recap:

Hyperscalers give you the raw power, but no guidance

Tooling vendors offer control — but no infrastructure

Engineering-led partners, like Gart Solutions, deliver tailored, future-ready solutions

If your AI initiative is serious, the choice is clear: invest in infrastructure engineering from the start.

And if you're looking for a trusted partner, Gart Solutions is ready to help. Contact Us and explain the challenges of your project.

Platform Engineering is one of the top technology trends of 2026. Gartner estimates that by 2026, 80% of development companies will have internal platform services and teams to improve development efficiency.

What is Platform Engineering?

It is the process of designing and building platforms that provide infrastructure, tools, and services to support various applications and services. The main goal of platform engineering is to create a powerful and versatile platform that can support various application development and operation processes.

The platform provides developers, operators, and other stakeholders with a user-friendly interface and set of tools to simplify and accelerate the process of application development, deployment, and maintenance.

Key Components of Platform Engineering

Successful platform engineering combines technical infrastructure, automation, and developer experience improvements. Common components include:

CI/CD Pipelines – Automated build, test, and deployment processes for faster delivery.

Developer Portals & Service Catalogs – Centralized access to APIs, documentation, and reusable components.

Observability & Monitoring – Logging, metrics, and tracing integrated into the platform.

Security & Compliance Automation – Built-in vulnerability scanning and policy enforcement.

Infrastructure as Code (IaC) – Declarative configuration using tools like Terraform or Pulumi.

Development Efficiency

The adoption of Agile, DevOps, and TeamFirst approaches, along with the rapid development of cloud and deployment tools, has led to explosive growth in software development across all segments of the economy. Businesses began to actively increase in-house development in an attempt to improve their own efficiency and occupy new market niches.

A typical development team is cross-functional and includes up to 15 people of different roles - from product manager and developers to QA and DevOps. This composition of specialists allows the team to achieve high autonomy and a high degree of responsibility for the product, which significantly reduces time-to-market.

It is noteworthy that it is undesirable to involve more than 15 people in teams: research results show that in this case, it becomes more difficult to build communications within the team, participants start to "cluster" by communication nodes, and trust between them decreases. This inevitably affects the performance of the entire team.

Technical specialists and businesses, as a rule, face typical problems inherent in teams regardless of their tasks and sphere of activity:

Development efficiency directly depends on how much time a team devotes to developing software functionality that is valuable to the business. At the same time, teams have mandatory activities that have nothing to do with creating business value: onboarding new employees, setting up monitoring services for the product, building CI/CD pipelines. The team usually spends a lot of time and resources on all these activities, distracting them from development. At the same time, the allowable amount of cognitive load teams have is limited (primarily due to the limit of team members).

It is important for business that time-to-market of developed solutions and functions be minimal. But in practice, the development team is dependent on specialists from other departments: it is difficult to achieve full autonomy in delivery and maintain high efficiency, as the complexity of software and the conditions of its production and operation are constantly growing.

Development teams have a lot of routine by default, which often not only affects the team's productivity in terms of product delivery (resulting in delays in releases), but also reduces the quality of delivered solutions. If the problem of routine is unresolved, you can get a situation with constant overtime and consequent drop in morale, burnout, and apathy. All of these have significant negative consequences for the product and the business.

The key task is to rid technicians of routine, artificial constraints and dependencies.

Streamline your DevOps with Gart Solutions – Let’s build a scalable platform together. Get in touch today!

Platform Engineering in the Cloud-Native Era

Cloud-native development has made platform engineering essential for modern software delivery. Key considerations include:

Kubernetes Orchestration – Managing scalable workloads and microservices.

GitOps Workflows – Declarative deployments with automated rollbacks.

Multi-Cloud Interoperability – Avoiding vendor lock-in through portable infrastructure design.

Serverless Integration – Supporting event-driven architectures for rapid feature deployment.

Platform Engineering and Internal Development Platform (IDP)

Platform Engineering is a methodology for organizing development teams and the tools around them, which allows removing unnecessary non-core workload from development teams, thus increasing their productivity in delivering business value.

One example of the platform approach is the creation of an Internal Development Platform (IDP), through which the development team can solve all non-core issues in a self-service mode - from requesting infrastructure and environments to accessing Observability services, necessary development tools, and utilizing typical build and deployment pipelines.

Internal Development Platform (IDP) serves as a one-stop-shop platform for developers:

Maximizes Developer Experience;

Provides a single point of entry, simplifying onboarding;

Reduces the cognitive load for development teams, thereby increasing their productivity.

Thus, the better and more fully implemented the back-end platform is, the higher the efficiency of development teams.

Let's take a closer look at the implementation of the platform approach using IDP as an example.

The Genesis of Internal Development Platforms

The trend of creating Internal Development Platforms (IDPs) is relatively new, but the industry has been moving towards them for quite some time.

Early Signs of the Need for IDPs

Even during the early stages of the digital transformation trend (2012-2015), three key points became apparent:

Teams within a company go through the same onboarding process: This includes gaining access to tools and resources for building and deploying code, and so on.

Teams use similar CI/CD processes for building and deploying products, as well as identical Observability techniques. Moreover, many teams solve the same architectural problems, such as creating a fault-tolerant PostgreSQL or developing Stateless microservices.

Development speed is often slowed down by IT and Information Security (IS) departments. These departments became bottlenecks on the path to product delivery. While development teams were able to adapt to rapid changes and deployments, IT and IS departments often lagged behind. Many operations in these departments were manual, and processes were slow and not scalable.

The Need for a Solution

Companies needed to minimize this duplication of work and bypass these limitations without compromising security. The solution was the idea of consolidating all best architectural practices, configuration templates, embedding IS requirements into the infrastructure deployment workflow, and automating the allocation of development tools, with subsequent provision of all of the above using the "as a service" (aaS) model.

The Birth of IDPs

Thus, internal platforms began to emerge, and development teams gained a single portal through which they could request everything they needed to work without months of configuration and approvals. This could include virtual machines, Kubernetes clusters, databases, creating repositories in GitLab, deploying repositories, and so on.

Simplify your DevOps – Explore our platform engineering solutions. Talk to our team today!

Implementation Features of Internal Development Platforms

Today, almost all large companies are either considering or already using IDPs.

In the enterprise segment, IDP platforms are often built on the basis of cloud orchestrators (HP CSA, VMware vRA, OpenStack, etc.), which provide an IT service "constructor" and a wide stack of plugins for quick creation of self-service portals with a service catalog. As a rule, companies in the enterprise segment are assisted in this by integrators who have the necessary competencies.

At the same time, many companies create development platforms from scratch, without using ready-made solutions. This is usually done by companies with a high level of development culture who have a clear idea of what they want to get from the platform and are willing to invest significant resources in its creation and support. This allows them to integrate their own custom-built infrastructure services (Observability, IaM, etc.), CI/CD tools, and other solutions into the IDP.

In other words, IDPs in such companies are specifically tailored to the internal development business processes.

Get a sample of IT Audit

Sign up now

Get on email

Loading...

Thank you!

You have successfully joined our subscriber list.

Why Platform Engineering Is Trending

Platform Engineering has become a trend because the challenges it addresses have become widespread and the benefits of its implementation are clear.

The positive outcomes of using Platform Engineering include:

Alignment of the technology stack across teams: The use of the same services and tools by different teams, available in the IDP, allows for increased efficiency of cross-team work, minimizes "shadow IT", and reduces "competency silos" in which individual specialists and even teams operate.

Reduction of technology sprawl: Building an IDP allows for the development of a unified set of tools used in the company. This makes it possible to reduce the stack by eliminating unnecessary and duplicate solutions (for example, when different departments of the company use different tools with identical functionality). Moving away from technology sprawl simplifies stack support, its administration, and reduces licensing costs. At the same time, the expertise of the IDP support team for this toolkit grows, as there is no need to scatter resources on learning different products and solutions.

Knowledge Sharing: Creating an IDP platform implies developing detailed documentation that guides users through typical cases - from building a CI/CD pipeline to deployment in production. This ensures the continuity of expertise and guarantees that the team will be able to continue working with the tools even if some of the expert employees leave. The bus factor effect is minimized.

Security Enhancement: The IDP platform is essentially a single point of access to tools. This makes it possible to integrate security solutions into the "user-tool" chain - for example, to check access rights and perform approval procedures. IDP users can also use IT resources and services that have already been approved by security personnel.

Standardization and Improved Collaboration: Platform Engineering involves using a specific set of tools and frameworks. This simplifies application development and allows for the development of a clear standard for components, such as monitoring, logging, and tracing. Development teams can use ready-made components and functionalities to quickly create applications and services, which also simplifies the integration of the created solutions with each other. This relieves some of the cognitive load on development teams and increases their efficiency.

Onboarding Acceleration: The implementation of an IDP platform with a single stack and common rules for working with tools reduces the time it takes to connect new users or entire teams to the project. This is achieved through SSO mechanisms and tight integration with development tools, as well as pre-agreed rights and access from the security department.

Fast and Non-bureaucratic Resource Acquisition: Platform Engineering allows easy access to computing resources through a pre-agreed catalog of IT services (from the security and IT departments), which publishes IaaS and PaaS services adapted for the company. In this case, it takes a few minutes to get the necessary resources, not weeks.

All this allows development teams to be relieved from solving typical tasks and helps them focus on delivering value to the business.

Revolutionize your infrastructure – Discover our platform engineering services. Connect with us!

Real-World Examples of Platform Engineering in Action

Industry leaders demonstrate how platform engineering transforms development:

Netflix – Custom internal platforms enabling self-service, monitoring, and automation for thousands of services.

Spotify – Developed Backstage, an open-source developer portal for unified service management.

Case Study – Gart Solutions helped a SaaS client cut infrastructure management time by 40% through a Kubernetes-based IDP with Terraform automation.

Platform Engineering Tools & Frameworks

A robust IDP relies on a well-chosen stack of tools and frameworks:

Backstage – Developer portal framework by Spotify.

Crossplane – Kubernetes-native infrastructure orchestration.

ArgoCD / FluxCD – GitOps deployment automation.

Terraform / Pulumi – Infrastructure as Code tools for repeatable environments.

Prometheus / Grafana – Monitoring, alerting, and visualization solutions.

Barriers to Adoption and Development of Internal Development Platform (IDP)

The adoption of Platform Engineering as a core company concept can be hindered by various factors, both financial and organizational. However, the blockers are usually typical.

Lack of a clear understanding of what IDP is and how the platform should work. There is no strict definition of what an IDP platform is and how it should look like. Therefore, managers are afraid of "creating something wrong." But in reality, there can be no standard: the IDP platform is created taking into account the company's stack and work patterns, so projects of different companies differ. It is impossible to "create something wrong" if you do it according to the needs of your team.

Fear of having to cut staff. Often, the development of the Internal Development Platform is slowed down at the level of employees, including DevOps, who are worried that they will be left without work. But this is a misconception. The Platform Engineering concept does not imply a reduction in staff, but simply a shift in their focus to solving other tasks.

The need to restructure processes. After the implementation of the IDP platform, the teams responsible for IT infrastructure and information security may have to change their usual working procedures, which means that they will have to restructure their processes. This can be a challenge for both the IT team and information security, as well as for the management, which is afraid of potential risks. But in practice, with proper preparation and desire, the transition to a new methodology of work is smooth and seamless.

The need for investment and the length of the journey. Implementing Platform Engineering and developing an Internal Development Platform is not a one-day task. Such innovations require regular investments and allocation of resources from the business. However, the increase in the frequency of releases, the reduction of errors and the increase in development productivity justify any costs.

By outsourcing the development of the IDP platform, the company can mitigate some of the shortcomings and accelerate the adoption of the Platform Engineering concept.

Implementation Timeline and Effectiveness Evaluation

The implementation of an IDP platform is a continuous journey. Even after restructuring internal processes, building a self-service portal, and moving away from a "technology zoo," it is important to continue developing the platform, updating the available stack, improving the user experience, and more. Tools, market needs, and business processes change, so IDP as a product also requires regular changes.

Typically, it takes companies several years to adapt their business processes to use an IDP platform. The timeframe depends largely on the size and expertise of the team involved in building the IDP platform, as well as the chosen implementation approach - building on a ready-made solution or developing from scratch.

It is difficult to directly assess the cost-effectiveness of implementing such projects. Therefore, conceptually, when determining the rationality of investments, several criteria are taken into account.

Ratio of developers to DevOps engineers. For example, if before the implementation of the IDP platform, 10 DevOps specialists were required for 10 development teams, then after the implementation of the solution, with a three-fold increase in development scale, the number of DevOps engineers will grow insignificantly - for example, to 15 people (without the platform, there would be almost a proportional growth).

Release frequency. Due to the reduction of approvals, checks, and secondary tasks, as well as the improvement of interaction between development teams, Time-to-market is also reduced. This allows increasing the number of releases without sacrificing quality and without a significant increase in the workload on developers.

Number of errors allowed. The unification of tools and technologies, as well as the ability to obtain the necessary resources without complex manipulations, allows for a significant reduction in the number of errors made during deployment.

Performance evaluation. The formalization of approaches and tools allows you to set and track metrics (both technical and business), which, in turn, will help you assess the effectiveness of changes or quickly identify bottlenecks.

Here are some additional points to consider:

The maturity of the organization's DevOps practices. Organizations with a more mature DevOps culture will be better equipped to adopt and use an IDP platform effectively.

The level of support from senior management. The success of an IDP initiative depends on the level of support from senior management.

The availability of resources. Implementing and using an IDP platform requires resources such as time, money, and people.

Overall, the implementation of an IDP platform can be a complex and challenging undertaking. However, the potential benefits can be significant, including improved developer productivity, reduced time to market, and increased software quality.

Platform Engineering vs. DevOps and SRE: Differences in Scope, Focus, and Goals

Platform engineering, DevOps, and SRE (Site Reliability Engineering) are three concepts and methodologies used in information technology to optimize software development and support processes. While they share common principles and goals, they differ in scope, scale, and primary tasks. Depending on the work context and their needs, modern companies may rely on platform engineers, DevOps engineers, and SREs to ensure the excellence of their products and services.

Scope of Responsibility:

Platform engineering focuses on developing and building platforms to support applications.

DevOps and SRE focus on the processes and methodologies for software development and operations.

Scale:

Platform engineering is often geared towards creating highly scalable and flexible platforms.

DevOps and SRE work at the level of processes and operations within a specific system scale.

Goals and Objectives:

DevOps aims to reduce the time between software development and deployment, ensuring continuous delivery and automation of processes.

SRE focuses on creating highly reliable systems and supporting them.

Platform engineering aims to provide infrastructure and services as a platform to support various applications and services.

Focus of Work:

Platform engineers concentrate on creating and maintaining an internal platform that facilitates application development and operations. They provide other developers with tools and services to accelerate the development process.

DevOps encompasses a broader range of areas, combining development, operations, and ensuring continuous application development and improvement.

Processes and Methodologies:

Platform engineers typically follow Agile methodologies with an emphasis on delivering results and continuous improvement. They strive to create flexible and efficient infrastructure for developers.

DevOps covers a wider range of methodologies and processes, such as CI/CD (continuous integration and delivery), test automation, and containerization.

Area of Responsibility:

Platform engineering focuses on the architecture and infrastructure of the internal platform.

DevOps is responsible for integrating application development and operations.

Platform Engineering vs. SRE:

Focus of Work:

Platform engineers focus on developing and supporting an internal platform for other developers, providing tools, services, and abstractions that facilitate application development.

SRE is primarily responsible for ensuring the stable and reliable operation of a product or service. Their main task is to maintain high availability and quickly respond to problems in the production environment.

Culture and Methodology:

SRE emphasizes a culture of reliability, where everyone in the team becomes responsible for the reliable operation of the system. They are often guided by the principles of "build, measure, tune" and "serve the customer."

Platform engineers typically follow Agile principles with an emphasis on continuous delivery and iteration.

Area of Responsibility:

Platform engineering focuses on the architecture and infrastructure of the internal platform.

SRE is responsible for the operational side of the product or service.

How Platform Engineering Compares to DevOps and Cloud Engineering

DevOps focuses on unifying development and operations by giving teams ownership of both application and infrastructure. However, as the complexity of applications and infrastructure grew, the cognitive load on DevOps teams became too high. Platform engineering builds on DevOps by creating a shared platform that reduces this cognitive load and standardizes processes across teams.

Cloud engineers specialize in managing cloud infrastructure, such as AWS or Azure, by setting up services and managing costs. Platform engineers, on the other hand, take these cloud services and integrate them into a broader platform that developers can use. Essentially, platform engineering adds a layer on top of cloud services, making them easier to use and more accessible to internal teams.

Key Differences: Platform Engineering vs DevOps vs SRE

AspectPlatform EngineeringDevOpsSREFocusInternal platform developmentDevelopment and operations integrationSystem reliability and operationsScaleLarge-scale platformsSpecific system scaleProduction environmentGoalsPlatform for applications and servicesContinuous delivery and automationHigh availability and reliabilityProcessesAgile methodologiesCI/CD, test automation, containerizationCulture of reliability, "build, measure, tune"ResponsibilityPlatform architecture and infrastructureIntegration of development and operationsOperational side of product or service

Responsibilities of Platform Engineering

Standardization of Tools and Practices

Platform engineers select the tools needed to deploy and run applications and ensure that they are used consistently across all teams. This includes CI/CD pipelines, cloud platforms, Kubernetes clusters, and security protocols.

Creation of an Internal Developer Platform (IDP)

This platform provides developers with a self-service portal where they can access pre-configured infrastructure and services, such as databases, Kubernetes clusters, and CI/CD pipelines. The IDP abstracts away the complexity of managing infrastructure, allowing developers to focus on coding and business logic.

Collaboration with Development Teams

Platform engineers work closely with development teams to ensure the platform meets their needs while maintaining best practices for security, compliance, and efficiency.

Continuous Development and Improvement

Like any product, the internal developer platform needs continuous updates and improvements. Platform engineers are responsible for iterating on the platform, adding new features, and ensuring it meets the evolving needs of the organization.

Key Problems Solved by Platform Engineering

Cognitive Load on DevOps Teams: Traditional DevOps practices require teams to manage both application development and infrastructure. This increases the complexity of tasks for individual engineers, such as maintaining Kubernetes clusters, managing CI/CD pipelines, and ensuring security compliance. Platform engineering centralizes these responsibilities, reducing the mental load on DevOps teams.

Inconsistency and Duplication: When multiple teams use different tools and configurations, it creates inconsistencies across the organization. Platform engineering solves this by standardizing the tools and environments, reducing duplicated efforts across teams.

Scalability and Efficiency: With traditional DevOps, each team might configure and manage its infrastructure separately, leading to inefficient resource use. Platform engineering provides a shared platform that is scalable, consistent, and optimized, allowing for easier management of resources and infrastructure.

Future of Platform Engineering: 2025 and Beyond

The discipline will continue to evolve with:

AI-Driven Platforms – Predictive scaling, automated optimizations, and intelligent troubleshooting.

Greater Abstraction – Developers interact with higher-level services instead of raw infrastructure.

Internal Platforms as Products – Treating the platform as a maintained and evolving product.

Security-First Design – Automated detection, remediation, and compliance verification.

Key Takeaways from the Article:

Platform Engineering is a major technological trend that companies worldwide are actively following by creating their own IDP platforms. The methodology has become particularly popular due to the active digital transformation of businesses.

Building IDP platforms gives businesses competitive advantages in the era of digital transformation and helps them overtake competitors on the go by maximizing efficiency.

Today, companies do not need to dive into IDP platform development from scratch: they can choose ready-made solutions and partners with experience and expertise in IDP construction.